
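Install the OpenVINO™ Toolkit on an Intel® NUC to put "AI edge computing" into practice, and test the speed of a 10th-generation CPU.

Install the latest version, "2021.3".

Note 1: These steps work on any hardware on which Linux can be installed (an Intel CPU that supports VT-x is required).

Note 2: On some CPUs (Celeron® and others), model conversion and the sample software fail with errors.

In that case, prepare already-converted trained models and prebuilt sample demo files separately and copy them over.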
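Before installing, it may help to confirm the VT-x requirement from Note 1. On Linux, Intel VT-x is reported as the `vmx` flag in `/proc/cpuinfo`. The following is a minimal sketch; the function name `has_cpu_flag` is made up for this example.

```shell
# Return success if the given CPU flag appears in a cpuinfo-style file.
# The second argument defaults to /proc/cpuinfo; it is a parameter only so
# the function can also be tried against a saved copy from another machine.
has_cpu_flag() {
    local flag="$1" cpuinfo="${2:-/proc/cpuinfo}"
    # Take the first "flags" line, put one word per line, match the flag exactly.
    grep -m1 '^flags' "$cpuinfo" | tr ' \t' '\n\n' | grep -qx -- "$flag"
}
```

Usage: `has_cpu_flag vmx && echo "VT-x flag present"`. The same function can check other flags, but which flags OpenVINO itself needs is not stated in these notes.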

$ sudo apt update

...

$ sudo apt install -y fonts-noto

$ sudo apt install gnome-tweaks

$ sudo apt install openssh-server

$ sudo apt install net-tools

$ vi ~/.vimrc

set nocompatible

set backspace=indent,eol,start

set expandtab

set tabstop=4

set shiftwidth=4

set autoindent

When vi is run with sudo, root's settings are used, so make root's .vimrc a symbolic link to ~/.vimrc.

$ sudo ln -s ~/.vimrc /root/.vimrc

$ sudo ls -la /root

total 24

drwx------ 4 root root 4096 Mar 31 19:07 .

drwxr-xr-x 20 root root 4096 Mar 31 17:29 ..

-rw-r--r-- 1 root root 3106 Dec 5 2019 .bashrc

drwx------ 2 root root 4096 Feb 10 03:51 .cache

-rw-r--r-- 1 root root 161 Dec 5 2019 .profile

lrwxrwxrwx 1 root root 19 Mar 31 19:07 .vimrc -> /home/mizutu/.vimrc

drwxr-xr-x 3 root root 4096 Mar 31 17:33 snap
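The same vi settings can also be written without opening an editor. This is only a sketch: the function name `write_vimrc` is hypothetical, and it overwrites the target file, so it is not suitable if a .vimrc with other settings already exists.

```shell
# Write the vi settings used in these notes to the given file
# (default: ~/.vimrc). Any existing content of the file is replaced.
write_vimrc() {
    local target="${1:-$HOME/.vimrc}"
    cat > "$target" <<'EOF'
set nocompatible
set backspace=indent,eol,start
set expandtab
set tabstop=4
set shiftwidth=4
set autoindent
EOF
}
```

Calling `write_vimrc` with no argument writes to `~/.vimrc`; the symbolic link for root is then created as shown above.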

$ sudo gsettings set org.gnome.Vino require-encryption false

Check the result:

$ gsettings list-recursively org.gnome.Vino

org.gnome.Vino prompt-enabled false

org.gnome.Vino require-encryption true <== this should have become false!

org.gnome.Vino use-alternative-port false

:

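The setting was not applied, so set it again without sudo, pointing at the desktop session's display:

$ DISPLAY=:0 gsettings set org.gnome.Vino require-encryption false

Connect from a VNC viewer:

<IP address>:5900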
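To confirm that the change took effect, the value can be read back from the `gsettings list-recursively` output instead of scanning it by eye. A minimal sketch, assuming each output line has the form `<schema> <key> <value>` as in the listing above; the helper name `vino_setting` is made up here.

```shell
# Print the value of one key from `gsettings list-recursively <schema>`
# output read on standard input. Each line is: <schema> <key> <value>.
vino_setting() {
    awk -v key="$1" '$2 == key { $1 = ""; $2 = ""; sub(/^ +/, ""); print; exit }'
}
```

Usage: `DISPLAY=:0 gsettings list-recursively org.gnome.Vino | vino_setting require-encryption`. For a single key, `gsettings get org.gnome.Vino require-encryption` gives the same information directly.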

$ cd ダウンロード

$ ls

l_openvino_toolkit_p_2021.3.394.tgz

$ tar -xvzf l_openvino_toolkit_p_2021.3.394.tgz

$ cd l_openvino_toolkit_p_2021.3.394

$ sudo ./install_GUI.sh

Follow the GUI installer's steps to complete the installation.
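Because the archive name and the directory name must match exactly, the extraction step can be wrapped so that a mistyped file name fails early. This is a sketch under one assumption: the archive unpacks to a directory named after the archive without its `.tgz` suffix, as it does in the commands above. The function name `unpack_installer` is hypothetical.

```shell
# Extract an installer archive and print the directory it unpacks to.
# Assumes the top-level directory is the archive name minus ".tgz".
unpack_installer() {
    local archive="$1" dest="${2:-.}"
    # Fail early with a clear message if the file name is wrong.
    [ -f "$archive" ] || { echo "not found: $archive" >&2; return 1; }
    tar -xzf "$archive" -C "$dest" || return 1
    echo "$dest/$(basename "$archive" .tgz)"
}
```

Usage: `cd "$(unpack_installer l_openvino_toolkit_p_2021.3.394.tgz)"`, followed by `sudo ./install_GUI.sh` as above.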

$ cd /opt/intel/openvino_2021/install_dependencies

$ sudo -E ./install_openvino_dependencies.sh

$ source /opt/intel/openvino_2021/bin/setupvars.sh

[setupvars.sh] OpenVINO environment initialized

To set the environment variables automatically whenever a shell starts, append the single line "source /opt/intel/openvino_2021/bin/setupvars.sh" to the end of ~/.bashrc.

[setupvars.sh] OpenVINO environment initialized
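If the append to ~/.bashrc is scripted, it is worth making it idempotent so that repeating the setup does not add the line twice. A minimal sketch; `append_once` is a name invented for this example.

```shell
# Append a line to a file only if an identical line is not already present.
append_once() {
    local line="$1" file="$2"
    touch "$file"   # make sure the file exists so grep does not fail
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}
```

Usage: `append_once 'source /opt/intel/openvino_2021/bin/setupvars.sh' ~/.bashrc`. Running it a second time leaves the file unchanged.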

$ cd /opt/intel/openvino_2021/deployment_tools/model_optimizer/install_prerequisites

$ sudo ./install_prerequisites.sh

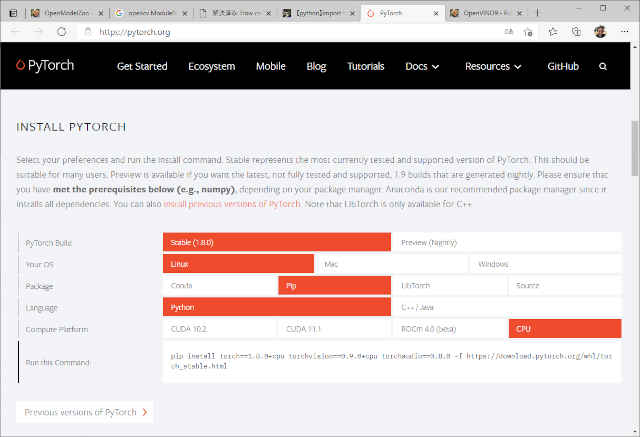

$ pip3 install torch==1.8.1+cpu torchvision==0.9.1+cpu torchaudio==0.8.1 -f https://download.pytorch.org/whl/torch_stable.html

$ pip3 install scipy

Defaulting to user installation because normal site-packages is not writeable

Collecting scipy

Downloading scipy-1.6.3-cp38-cp38-manylinux1_x86_64.whl (27.2 MB)

|████████████████████████████████| 27.2 MB 38 kB/s

Requirement already satisfied: numpy<1.23.0,>=1.16.5 in /usr/local/lib/python3.8/dist-packages (from scipy) (1.18.5)

Installing collected packages: scipy

Successfully installed scipy-1.6.3

$ cd /opt/intel/openvino_2021/deployment_tools/demo

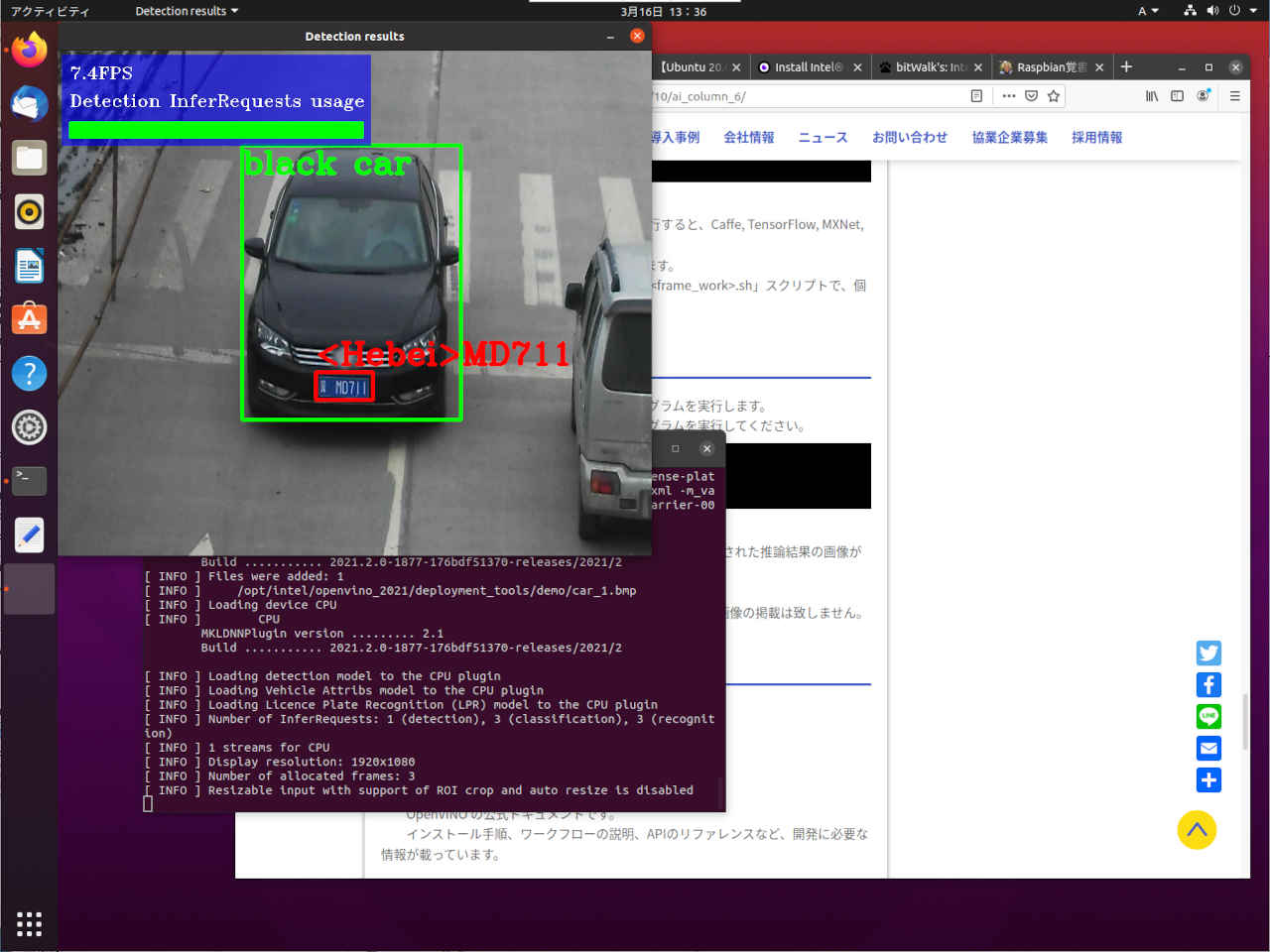

$ ./demo_security_barrier_camera.sh

:

:

Build Inference Engine demos

-- The C compiler identification is GNU 9.3.0

-- The CXX compiler identification is GNU 9.3.0

-- Check for working C compiler: /usr/bin/cc

-- Check for working C compiler: /usr/bin/cc -- works

-- Detecting C compiler ABI info

:

[ 92%] Building CXX object security_barrier_camera_demo/CMakeFiles/security_barrier_camera_demo.dir/main.cpp.o

[100%] Linking CXX executable ../intel64/Release/security_barrier_camera_demo

[100%] Built target security_barrier_camera_demo

###################################################

:

Run Inference Engine security_barrier_camera demo

Run ./security_barrier_camera_demo -d CPU -d_va CPU -d_lpr CPU -i /opt/intel/openvino_2021/deployment_tools/demo/car_1.bmp -m /home/mizutu/openvino_models/ir/intel/vehicle-license-plate-detection-barrier-0106/FP16/vehicle-license-plate-detection-barrier-0106.xml -m_lpr /home/mizutu/openvino_models/ir/intel/license-plate-recognition-barrier-0001/FP16/license-plate-recognition-barrier-0001.xml -m_va /home/mizutu/openvino_models/ir/intel/vehicle-attributes-recognition-barrier-0039/FP16/vehicle-attributes-recognition-barrier-0039.xml

[ INFO ] InferenceEngine:

API version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Files were added: 1

[ INFO ] /opt/intel/openvino_2021/deployment_tools/demo/car_1.bmp

[ INFO ] Loading device CPU

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Loading detection model to the CPU plugin

[ INFO ] Loading Vehicle Attribs model to the CPU plugin

[ INFO ] Loading Licence Plate Recognition (LPR) model to the CPU plugin

[ INFO ] Number of InferRequests: 1 (detection), 3 (classification), 3 (recognition)

[ INFO ] 4 streams for CPU

[ INFO ] Display resolution: 1920x1080

[ INFO ] Number of allocated frames: 3

[ INFO ] Resizable input with support of ROI crop and auto resize is disabled

0.7FPS for (3 / 1) frames

Detection InferRequests usage: 0.0%

[ INFO ] Execution successful

###################################################

Demo completed successfully.

$ cd /opt/intel/openvino_2021/deployment_tools/demo

$ ./demo_squeezenet_download_convert_run.sh

Run Inference Engine classification sample

Run ./classification_sample_async -d CPU -i /opt/intel/openvino_2021/deployment_tools/demo/car.png -m /home/mizutu/openvino_models/ir/public/squeezenet1.1/FP16/squeezenet1.1.xml

[ INFO ] InferenceEngine:

API version ............ 2.1

Build .................. 2021.3.0-2787-60059f2c755-releases/2021/3

Description ....... API

[ INFO ] Parsing input parameters

[ INFO ] Parsing input parameters

[ INFO ] Files were added: 1

[ INFO ] /opt/intel/openvino_2021/deployment_tools/demo/car.png

[ INFO ] Creating Inference Engine

CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Loading network files

[ INFO ] Preparing input blobs

[ WARNING ] Image is resized from (787, 259) to (227, 227)

[ INFO ] Batch size is 1

[ INFO ] Loading model to the device

[ INFO ] Create infer request

[ INFO ] Start inference (10 asynchronous executions)

[ INFO ] Completed 1 async request execution

[ INFO ] Completed 2 async request execution

[ INFO ] Completed 3 async request execution

[ INFO ] Completed 4 async request execution

[ INFO ] Completed 5 async request execution

[ INFO ] Completed 6 async request execution

[ INFO ] Completed 7 async request execution

[ INFO ] Completed 8 async request execution

[ INFO ] Completed 9 async request execution

[ INFO ] Completed 10 async request execution

[ INFO ] Processing output blobs

Top 10 results:

Image /opt/intel/openvino_2021/deployment_tools/demo/car.png

classid probability label

------- ----------- -----

817 0.6853030 sports car, sport car

479 0.1835197 car wheel

511 0.0917197 convertible

436 0.0200694 beach wagon, station wagon, wagon, estate car, beach waggon, station waggon, waggon

751 0.0069604 racer, race car, racing car

656 0.0044177 minivan

717 0.0024739 pickup, pickup truck

581 0.0017788 grille, radiator grille

468 0.0013083 cab, hack, taxi, taxicab

661 0.0007443 Model T

[ INFO ] Execution successful

[ INFO ] This sample is an API example, for any performance measurements please use the dedicated benchmark_app tool

###################################################

Demo completed successfully.

$ cd /opt/intel/openvino_2021/deployment_tools/demo

$ ./demo_benchmark_app.sh

Run Inference Engine benchmark app Run ./benchmark_app -d CPU -i /opt/intel/openvino_2021/deployment_tools/demo/car.png -m /home/mizutu/openvino_models/ir/public/squeezenet1.1/FP16/squeezenet1.1.xml -pc -niter 1000 [Step 1/11] Parsing and validating input arguments [ INFO ] Parsing input parameters [ INFO ] Files were added: 1 [ INFO ] /opt/intel/openvino_2021/deployment_tools/demo/car.png [Step 2/11] Loading Inference Engine [ INFO ] InferenceEngine: API version ............ 2.1 Build .................. 2021.3.0-2787-60059f2c755-releases/2021/3 Description ....... API [ INFO ] Device info: CPU MKLDNNPlugin version ......... 2.1 Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3 [Step 3/11] Setting device configuration [ WARNING ] -nstreams default value is determined automatically for CPU device. Although the automatic selection usually provides a reasonable performance, but it still may be non-optimal for some cases, for more information look at README. [Step 4/11] Reading network files [ INFO ] Loading network files [ INFO ] Read network took 6.63 ms [Step 5/11] Resizing network to match image sizes and given batch [ INFO ] Network batch size: 1 [Step 6/11] Configuring input of the model [Step 7/11] Loading the model to the device [ INFO ] Load network took 81.37 ms [Step 8/11] Setting optimal runtime parameters [Step 9/11] Creating infer requests and filling input blobs with images [ INFO ] Network input 'data' precision U8, dimensions (NCHW): 1 3 227 227 [ WARNING ] Some image input files will be duplicated: 4 files are required but only 1 are provided [ INFO ] Infer Request 0 filling [ INFO ] Prepare image /opt/intel/openvino_2021/deployment_tools/demo/car.png [ WARNING ] Image is resized from (787, 259) to (227, 227) [ INFO ] Infer Request 1 filling [ INFO ] Prepare image /opt/intel/openvino_2021/deployment_tools/demo/car.png [ WARNING ] Image is resized from (787, 259) to (227, 227) [ INFO ] Infer Request 2 filling [ INFO ] Prepare image 
/opt/intel/openvino_2021/deployment_tools/demo/car.png [ WARNING ] Image is resized from (787, 259) to (227, 227) [ INFO ] Infer Request 3 filling [ INFO ] Prepare image /opt/intel/openvino_2021/deployment_tools/demo/car.png [ WARNING ] Image is resized from (787, 259) to (227, 227) [Step 10/11] Measuring performance (Start inference asynchronously, 4 inference requests using 4 streams for CPU, limits: 1000 iterations) [ INFO ] First inference took 4.67 ms [Step 11/11] Dumping statistics report [ INFO ] Performance counts for 0-th infer request: data/mean_const_biases NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean_const_weights NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean EXECUTED layerType: ScaleShift realTime: 70 cpu: 70 execType: jit_avx2_I8 conv1 EXECUTED layerType: Convolution realTime: 567 cpu: 567 execType: jit_avx2_FP32 relu_conv1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool1 EXECUTED layerType: Pooling realTime: 366 cpu: 366 execType: jit_avx_FP32 fire2/squeeze1x1 EXECUTED layerType: Convolution realTime: 80 cpu: 80 execType: jit_avx2_1x1_FP32 fire2/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand1x1 EXECUTED layerType: Convolution realTime: 81 cpu: 81 execType: jit_avx2_1x1_FP32 fire2/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand3x3 EXECUTED layerType: Convolution realTime: 631 cpu: 631 execType: jit_avx2_FP32 fire2/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/concat EXECUTED layerType: Concat realTime: 3 cpu: 3 execType: unknown_FP32 fire3/squeeze1x1 EXECUTED layerType: Convolution realTime: 176 cpu: 176 execType: jit_avx2_1x1_FP32 fire3/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/expand1x1 EXECUTED layerType: Convolution realTime: 78 cpu: 78 execType: jit_avx2_1x1_FP32 fire3/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 
cpu: 0 execType: undef fire3/expand3x3 EXECUTED layerType: Convolution realTime: 545 cpu: 545 execType: jit_avx2_FP32 fire3/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 pool3 EXECUTED layerType: Pooling realTime: 192 cpu: 192 execType: jit_avx_FP32 fire4/squeeze1x1 EXECUTED layerType: Convolution realTime: 78 cpu: 78 execType: jit_avx2_1x1_FP32 fire4/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand1x1 EXECUTED layerType: Convolution realTime: 69 cpu: 69 execType: jit_avx2_1x1_FP32 fire4/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand3x3 EXECUTED layerType: Convolution realTime: 576 cpu: 576 execType: jit_avx2_FP32 fire4/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire5/squeeze1x1 EXECUTED layerType: Convolution realTime: 153 cpu: 153 execType: jit_avx2_1x1_FP32 fire5/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32 fire5/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand3x3 EXECUTED layerType: Convolution realTime: 553 cpu: 553 execType: jit_avx2_FP32 fire5/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 pool5 EXECUTED layerType: Pooling realTime: 75 cpu: 75 execType: jit_avx_FP32 fire6/squeeze1x1 EXECUTED layerType: Convolution realTime: 52 cpu: 52 execType: jit_avx2_1x1_FP32 fire6/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/expand1x1 EXECUTED layerType: Convolution realTime: 38 cpu: 38 execType: jit_avx2_1x1_FP32 fire6/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 
cpu: 0 execType: undef fire6/expand3x3 EXECUTED layerType: Convolution realTime: 322 cpu: 322 execType: jit_avx2_FP32 fire6/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire7/squeeze1x1 EXECUTED layerType: Convolution realTime: 76 cpu: 76 execType: jit_avx2_1x1_FP32 fire7/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand1x1 EXECUTED layerType: Convolution realTime: 38 cpu: 38 execType: jit_avx2_1x1_FP32 fire7/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand3x3 EXECUTED layerType: Convolution realTime: 326 cpu: 326 execType: jit_avx2_FP32 fire7/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/concat EXECUTED layerType: Concat realTime: 1 cpu: 1 execType: unknown_FP32 fire8/squeeze1x1 EXECUTED layerType: Convolution realTime: 99 cpu: 99 execType: jit_avx2_1x1_FP32 fire8/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand1x1 EXECUTED layerType: Convolution realTime: 65 cpu: 65 execType: jit_avx2_1x1_FP32 fire8/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand3x3 EXECUTED layerType: Convolution realTime: 576 cpu: 576 execType: jit_avx2_FP32 fire8/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire9/squeeze1x1 EXECUTED layerType: Convolution realTime: 138 cpu: 138 execType: jit_avx2_1x1_FP32 fire9/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand1x1 EXECUTED layerType: Convolution realTime: 66 cpu: 66 execType: jit_avx2_1x1_FP32 fire9/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand3x3 EXECUTED layerType: Convolution realTime: 588 cpu: 588 execType: jit_avx2_FP32 fire9/relu_expand3x3 NOT_RUN layerType: 
ReLU realTime: 0 cpu: 0 execType: undef fire9/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 conv10 EXECUTED layerType: Convolution realTime: 1973 cpu: 1973 execType: jit_avx2_1x1_FP32 relu_conv10 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool10/reduce EXECUTED layerType: Pooling realTime: 51 cpu: 51 execType: jit_avx_FP32 prob EXECUTED layerType: SoftMax realTime: 4 cpu: 4 execType: jit_avx2_FP32 prob_aBcd8b_abcd_out_prob EXECUTED layerType: Reorder realTime: 5 cpu: 5 execType: jit_uni_FP32 out_prob NOT_RUN layerType: Output realTime: 0 cpu: 0 execType: unknown_FP32 Total time: 8790 microseconds Full device name: Intel(R) Core(TM) i5-10210U CPU @ 1.60GHz [ INFO ] Performance counts for 1-th infer request: data/mean_const_biases NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean_const_weights NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean EXECUTED layerType: ScaleShift realTime: 71 cpu: 71 execType: jit_avx2_I8 conv1 EXECUTED layerType: Convolution realTime: 562 cpu: 562 execType: jit_avx2_FP32 relu_conv1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool1 EXECUTED layerType: Pooling realTime: 383 cpu: 383 execType: jit_avx_FP32 fire2/squeeze1x1 EXECUTED layerType: Convolution realTime: 82 cpu: 82 execType: jit_avx2_1x1_FP32 fire2/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand1x1 EXECUTED layerType: Convolution realTime: 144 cpu: 144 execType: jit_avx2_1x1_FP32 fire2/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand3x3 EXECUTED layerType: Convolution realTime: 545 cpu: 545 execType: jit_avx2_FP32 fire2/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/concat EXECUTED layerType: Concat realTime: 4 cpu: 4 execType: unknown_FP32 fire3/squeeze1x1 EXECUTED layerType: Convolution realTime: 178 cpu: 178 execType: jit_avx2_1x1_FP32 fire3/relu_squeeze1x1 
NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/expand1x1 EXECUTED layerType: Convolution realTime: 78 cpu: 78 execType: jit_avx2_1x1_FP32 fire3/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/expand3x3 EXECUTED layerType: Convolution realTime: 553 cpu: 553 execType: jit_avx2_FP32 fire3/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 pool3 EXECUTED layerType: Pooling realTime: 161 cpu: 161 execType: jit_avx_FP32 fire4/squeeze1x1 EXECUTED layerType: Convolution realTime: 82 cpu: 82 execType: jit_avx2_1x1_FP32 fire4/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand1x1 EXECUTED layerType: Convolution realTime: 75 cpu: 75 execType: jit_avx2_1x1_FP32 fire4/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand3x3 EXECUTED layerType: Convolution realTime: 555 cpu: 555 execType: jit_avx2_FP32 fire4/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire5/squeeze1x1 EXECUTED layerType: Convolution realTime: 154 cpu: 154 execType: jit_avx2_1x1_FP32 fire5/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand1x1 EXECUTED layerType: Convolution realTime: 69 cpu: 69 execType: jit_avx2_1x1_FP32 fire5/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand3x3 EXECUTED layerType: Convolution realTime: 559 cpu: 559 execType: jit_avx2_FP32 fire5/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 pool5 EXECUTED layerType: Pooling realTime: 66 cpu: 66 execType: jit_avx_FP32 fire6/squeeze1x1 EXECUTED layerType: Convolution realTime: 53 cpu: 53 execType: jit_avx2_1x1_FP32 fire6/relu_squeeze1x1 
NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/expand1x1 EXECUTED layerType: Convolution realTime: 39 cpu: 39 execType: jit_avx2_1x1_FP32 fire6/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/expand3x3 EXECUTED layerType: Convolution realTime: 335 cpu: 335 execType: jit_avx2_FP32 fire6/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire7/squeeze1x1 EXECUTED layerType: Convolution realTime: 79 cpu: 79 execType: jit_avx2_1x1_FP32 fire7/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand1x1 EXECUTED layerType: Convolution realTime: 39 cpu: 39 execType: jit_avx2_1x1_FP32 fire7/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand3x3 EXECUTED layerType: Convolution realTime: 331 cpu: 331 execType: jit_avx2_FP32 fire7/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/concat EXECUTED layerType: Concat realTime: 1 cpu: 1 execType: unknown_FP32 fire8/squeeze1x1 EXECUTED layerType: Convolution realTime: 102 cpu: 102 execType: jit_avx2_1x1_FP32 fire8/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32 fire8/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand3x3 EXECUTED layerType: Convolution realTime: 571 cpu: 571 execType: jit_avx2_FP32 fire8/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire9/squeeze1x1 EXECUTED layerType: Convolution realTime: 140 cpu: 140 execType: jit_avx2_1x1_FP32 fire9/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32 
fire9/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand3x3 EXECUTED layerType: Convolution realTime: 574 cpu: 574 execType: jit_avx2_FP32 fire9/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 conv10 EXECUTED layerType: Convolution realTime: 1948 cpu: 1948 execType: jit_avx2_1x1_FP32 relu_conv10 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool10/reduce EXECUTED layerType: Pooling realTime: 53 cpu: 53 execType: jit_avx_FP32 prob EXECUTED layerType: SoftMax realTime: 4 cpu: 4 execType: jit_avx2_FP32 prob_aBcd8b_abcd_out_prob EXECUTED layerType: Reorder realTime: 5 cpu: 5 execType: jit_uni_FP32 out_prob NOT_RUN layerType: Output realTime: 0 cpu: 0 execType: unknown_FP32 Total time: 8741 microseconds Full device name: Intel(R) Core(TM) i5-10210U CPU @ 1.60GHz [ INFO ] Performance counts for 2-th infer request: data/mean_const_biases NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean_const_weights NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean EXECUTED layerType: ScaleShift realTime: 71 cpu: 71 execType: jit_avx2_I8 conv1 EXECUTED layerType: Convolution realTime: 562 cpu: 562 execType: jit_avx2_FP32 relu_conv1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool1 EXECUTED layerType: Pooling realTime: 383 cpu: 383 execType: jit_avx_FP32 fire2/squeeze1x1 EXECUTED layerType: Convolution realTime: 82 cpu: 82 execType: jit_avx2_1x1_FP32 fire2/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand1x1 EXECUTED layerType: Convolution realTime: 144 cpu: 144 execType: jit_avx2_1x1_FP32 fire2/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand3x3 EXECUTED layerType: Convolution realTime: 545 cpu: 545 execType: jit_avx2_FP32 fire2/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 
execType: undef fire2/concat EXECUTED layerType: Concat realTime: 4 cpu: 4 execType: unknown_FP32 fire3/squeeze1x1 EXECUTED layerType: Convolution realTime: 178 cpu: 178 execType: jit_avx2_1x1_FP32 fire3/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/expand1x1 EXECUTED layerType: Convolution realTime: 78 cpu: 78 execType: jit_avx2_1x1_FP32 fire3/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/expand3x3 EXECUTED layerType: Convolution realTime: 553 cpu: 553 execType: jit_avx2_FP32 fire3/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire3/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 pool3 EXECUTED layerType: Pooling realTime: 161 cpu: 161 execType: jit_avx_FP32 fire4/squeeze1x1 EXECUTED layerType: Convolution realTime: 82 cpu: 82 execType: jit_avx2_1x1_FP32 fire4/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand1x1 EXECUTED layerType: Convolution realTime: 75 cpu: 75 execType: jit_avx2_1x1_FP32 fire4/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/expand3x3 EXECUTED layerType: Convolution realTime: 555 cpu: 555 execType: jit_avx2_FP32 fire4/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire4/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire5/squeeze1x1 EXECUTED layerType: Convolution realTime: 154 cpu: 154 execType: jit_avx2_1x1_FP32 fire5/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand1x1 EXECUTED layerType: Convolution realTime: 69 cpu: 69 execType: jit_avx2_1x1_FP32 fire5/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/expand3x3 EXECUTED layerType: Convolution realTime: 559 cpu: 559 execType: jit_avx2_FP32 fire5/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire5/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 
execType: unknown_FP32 pool5 EXECUTED layerType: Pooling realTime: 66 cpu: 66 execType: jit_avx_FP32 fire6/squeeze1x1 EXECUTED layerType: Convolution realTime: 53 cpu: 53 execType: jit_avx2_1x1_FP32 fire6/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/expand1x1 EXECUTED layerType: Convolution realTime: 39 cpu: 39 execType: jit_avx2_1x1_FP32 fire6/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/expand3x3 EXECUTED layerType: Convolution realTime: 335 cpu: 335 execType: jit_avx2_FP32 fire6/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire6/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire7/squeeze1x1 EXECUTED layerType: Convolution realTime: 79 cpu: 79 execType: jit_avx2_1x1_FP32 fire7/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand1x1 EXECUTED layerType: Convolution realTime: 39 cpu: 39 execType: jit_avx2_1x1_FP32 fire7/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/expand3x3 EXECUTED layerType: Convolution realTime: 331 cpu: 331 execType: jit_avx2_FP32 fire7/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire7/concat EXECUTED layerType: Concat realTime: 1 cpu: 1 execType: unknown_FP32 fire8/squeeze1x1 EXECUTED layerType: Convolution realTime: 102 cpu: 102 execType: jit_avx2_1x1_FP32 fire8/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32 fire8/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/expand3x3 EXECUTED layerType: Convolution realTime: 571 cpu: 571 execType: jit_avx2_FP32 fire8/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire8/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 fire9/squeeze1x1 EXECUTED layerType: Convolution 
realTime: 140 cpu: 140 execType: jit_avx2_1x1_FP32 fire9/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32 fire9/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/expand3x3 EXECUTED layerType: Convolution realTime: 574 cpu: 574 execType: jit_avx2_FP32 fire9/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire9/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32 conv10 EXECUTED layerType: Convolution realTime: 1948 cpu: 1948 execType: jit_avx2_1x1_FP32 relu_conv10 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool10/reduce EXECUTED layerType: Pooling realTime: 53 cpu: 53 execType: jit_avx_FP32 prob EXECUTED layerType: SoftMax realTime: 4 cpu: 4 execType: jit_avx2_FP32 prob_aBcd8b_abcd_out_prob EXECUTED layerType: Reorder realTime: 5 cpu: 5 execType: jit_uni_FP32 out_prob NOT_RUN layerType: Output realTime: 0 cpu: 0 execType: unknown_FP32 Total time: 8741 microseconds Full device name: Intel(R) Core(TM) i5-10210U CPU @ 1.60GHz [ INFO ] Performance counts for 3-th infer request: data/mean_const_biases NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean_const_weights NOT_RUN layerType: Const realTime: 0 cpu: 0 execType: unknown_FP32 data/mean EXECUTED layerType: ScaleShift realTime: 68 cpu: 68 execType: jit_avx2_I8 conv1 EXECUTED layerType: Convolution realTime: 554 cpu: 554 execType: jit_avx2_FP32 relu_conv1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef pool1 EXECUTED layerType: Pooling realTime: 362 cpu: 362 execType: jit_avx_FP32 fire2/squeeze1x1 EXECUTED layerType: Convolution realTime: 79 cpu: 79 execType: jit_avx2_1x1_FP32 fire2/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef fire2/expand1x1 EXECUTED layerType: Convolution realTime: 83 cpu: 83 execType: jit_avx2_1x1_FP32 
fire2/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire2/expand3x3 EXECUTED layerType: Convolution realTime: 523 cpu: 523 execType: jit_avx2_FP32
fire2/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire2/concat EXECUTED layerType: Concat realTime: 4 cpu: 4 execType: unknown_FP32
fire3/squeeze1x1 EXECUTED layerType: Convolution realTime: 177 cpu: 177 execType: jit_avx2_1x1_FP32
fire3/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire3/expand1x1 EXECUTED layerType: Convolution realTime: 75 cpu: 75 execType: jit_avx2_1x1_FP32
fire3/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire3/expand3x3 EXECUTED layerType: Convolution realTime: 527 cpu: 527 execType: jit_avx2_FP32
fire3/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire3/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
pool3 EXECUTED layerType: Pooling realTime: 176 cpu: 176 execType: jit_avx_FP32
fire4/squeeze1x1 EXECUTED layerType: Convolution realTime: 78 cpu: 78 execType: jit_avx2_1x1_FP32
fire4/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire4/expand1x1 EXECUTED layerType: Convolution realTime: 68 cpu: 68 execType: jit_avx2_1x1_FP32
fire4/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire4/expand3x3 EXECUTED layerType: Convolution realTime: 549 cpu: 549 execType: jit_avx2_FP32
fire4/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire4/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
fire5/squeeze1x1 EXECUTED layerType: Convolution realTime: 170 cpu: 170 execType: jit_avx2_1x1_FP32
fire5/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire5/expand1x1 EXECUTED layerType: Convolution realTime: 66 cpu: 66 execType: jit_avx2_1x1_FP32
fire5/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire5/expand3x3 EXECUTED layerType: Convolution realTime: 547 cpu: 547 execType: jit_avx2_FP32
fire5/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire5/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
pool5 EXECUTED layerType: Pooling realTime: 68 cpu: 68 execType: jit_avx_FP32
fire6/squeeze1x1 EXECUTED layerType: Convolution realTime: 53 cpu: 53 execType: jit_avx2_1x1_FP32
fire6/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire6/expand1x1 EXECUTED layerType: Convolution realTime: 39 cpu: 39 execType: jit_avx2_1x1_FP32
fire6/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire6/expand3x3 EXECUTED layerType: Convolution realTime: 330 cpu: 330 execType: jit_avx2_FP32
fire6/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire6/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
fire7/squeeze1x1 EXECUTED layerType: Convolution realTime: 75 cpu: 75 execType: jit_avx2_1x1_FP32
fire7/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire7/expand1x1 EXECUTED layerType: Convolution realTime: 38 cpu: 38 execType: jit_avx2_1x1_FP32
fire7/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire7/expand3x3 EXECUTED layerType: Convolution realTime: 333 cpu: 333 execType: jit_avx2_FP32
fire7/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire7/concat EXECUTED layerType: Concat realTime: 1 cpu: 1 execType: unknown_FP32
fire8/squeeze1x1 EXECUTED layerType: Convolution realTime: 105 cpu: 105 execType: jit_avx2_1x1_FP32
fire8/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire8/expand1x1 EXECUTED layerType: Convolution realTime: 65 cpu: 65 execType: jit_avx2_1x1_FP32
fire8/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire8/expand3x3 EXECUTED layerType: Convolution realTime: 594 cpu: 594 execType: jit_avx2_FP32
fire8/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire8/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
fire9/squeeze1x1 EXECUTED layerType: Convolution realTime: 133 cpu: 133 execType: jit_avx2_1x1_FP32
fire9/relu_squeeze1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire9/expand1x1 EXECUTED layerType: Convolution realTime: 67 cpu: 67 execType: jit_avx2_1x1_FP32
fire9/relu_expand1x1 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire9/expand3x3 EXECUTED layerType: Convolution realTime: 601 cpu: 601 execType: jit_avx2_FP32
fire9/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire9/concat EXECUTED layerType: Concat realTime: 2 cpu: 2 execType: unknown_FP32
conv10 EXECUTED layerType: Convolution realTime: 1931 cpu: 1931 execType: jit_avx2_1x1_FP32
relu_conv10 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
pool10/reduce EXECUTED layerType: Pooling realTime: 54 cpu: 54 execType: jit_avx_FP32
prob EXECUTED layerType: SoftMax realTime: 4 cpu: 4 execType: jit_avx2_FP32
prob_aBcd8b_abcd_out_prob EXECUTED layerType: Reorder realTime: 4 cpu: 4 execType: jit_uni_FP32
out_prob NOT_RUN layerType: Output realTime: 0 cpu: 0 execType: unknown_FP32
Total time: 8613 microseconds
Full device name: Intel(R) Core(TM) i5-10210U CPU @ 1.60GHz
Count: 1000 iterations
Duration: 2212.07 ms
Latency: 8.51 ms
Throughput: 452.07 FPS
###################################################
Inference Engine benchmark app completed successfully.
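The per-layer counters in the report above can be summarized by layer type to see where the inference time goes. The following is only a minimal sketch: the regular expression assumes the line layout shown in this log, the helper names `time_by_layer_type` and `throughput_fps` are made up for illustration, and `SAMPLE` holds just a few lines copied from the report. The reported throughput is consistent with the iteration count divided by the duration, which the second helper reproduces.

```python
import re
from collections import defaultdict

# A few counter lines copied from the benchmark report above (not the full log).
SAMPLE = """\
fire2/expand3x3 EXECUTED layerType: Convolution realTime: 523 cpu: 523 execType: jit_avx2_FP32
fire2/relu_expand3x3 NOT_RUN layerType: ReLU realTime: 0 cpu: 0 execType: undef
fire2/concat EXECUTED layerType: Concat realTime: 4 cpu: 4 execType: unknown_FP32
conv10 EXECUTED layerType: Convolution realTime: 1931 cpu: 1931 execType: jit_avx2_1x1_FP32
pool10/reduce EXECUTED layerType: Pooling realTime: 54 cpu: 54 execType: jit_avx_FP32
"""

# Assumed line layout: <name> <status> layerType: <type> realTime: <us> cpu: <us> execType: <kernel>
COUNTER = re.compile(
    r"^(?P<name>\S+)\s+(?P<status>EXECUTED|NOT_RUN)\s+"
    r"layerType:\s+(?P<type>\S+)\s+realTime:\s+(?P<real>\d+)\s+"
    r"cpu:\s+(?P<cpu>\d+)\s+execType:\s+(?P<exec>\S+)$"
)


def time_by_layer_type(report):
    """Sum realTime (microseconds) of EXECUTED layers, grouped by layerType."""
    totals = defaultdict(int)
    for line in report.splitlines():
        match = COUNTER.match(line.strip())
        if match and match.group("status") == "EXECUTED":
            totals[match.group("type")] += int(match.group("real"))
    return dict(totals)


def throughput_fps(iterations, duration_ms):
    """Frames per second for batch size 1: iterations divided by elapsed seconds."""
    return iterations / (duration_ms / 1000.0)


totals = time_by_layer_type(SAMPLE)
for layer_type, usec in sorted(totals.items(), key=lambda kv: -kv[1]):
    print(f"{layer_type:12s} {usec:6d} us")

# Count and Duration taken from the summary lines of the report.
print(f"{throughput_fps(1000, 2212.07):.2f} FPS")
```

Feeding the complete report into `time_by_layer_type` instead of `SAMPLE` gives the totals for the whole network.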

$ cd ~/openvino_models
$ ls
cache ir models
$ python3 /opt/intel/openvino_2021/deployment_tools/tools/model_downloader/downloader.py --all

The download takes several hours, so be patient.
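When only a few models are needed, the Model Downloader can be restricted with its `--name` option instead of `--all`. The sketch below only builds and prints such a command line. The helper `downloader_command` is a hypothetical name, and the two model names are examples chosen here (not a selection made in this article), so adjust them as needed.

```python
import os
import sys

# Script path as used in the command above.
DOWNLOADER = (
    "/opt/intel/openvino_2021/deployment_tools/tools/"
    "model_downloader/downloader.py"
)


def downloader_command(names, output_dir):
    """Build the argument list for downloading only the named models.

    --name takes a comma-separated list; --output_dir selects the target folder.
    """
    return [
        sys.executable,
        DOWNLOADER,
        "--name",
        ",".join(names),
        "--output_dir",
        output_dir,
    ]


# Example model names (an assumption for illustration only).
cmd = downloader_command(
    ["squeezenet1.1", "yolo-v4-tf"],
    os.path.expanduser("~/openvino_models"),
)
print(" ".join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` on a machine where OpenVINO is installed.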

$ python3 /opt/intel/openvino_2021/deployment_tools/tools/model_downloader/converter.py --all

:

[ SUCCESS ] Generated IR version 10 model.

[ SUCCESS ] XML file: /home/mizutu/openvino_models/public/yolo-v4-tf/FP32/yolo-v4-tf.xml

[ SUCCESS ] BIN file: /home/mizutu/openvino_models/public/yolo-v4-tf/FP32/yolo-v4-tf.bin

[ SUCCESS ] Total execution time: 27.98 seconds.

[ SUCCESS ] Memory consumed: 1801 MB.

FAILED:

efficientdet-d0-tf

efficientdet-d1-tf

regnetx-3.2gf

rexnet-v1-x1.0
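If the converter output is saved to a file, the names listed under `FAILED:` can be collected automatically, for example to retry them one by one later. This is a rough sketch that assumes the log ends with the `FAILED:` section exactly as shown above; `failed_models` is a hypothetical helper name and `LOG_TAIL` reproduces only the end of the log.

```python
def failed_models(log_text):
    """Return the model names listed after the 'FAILED:' marker."""
    failed = []
    in_failed_section = False
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue  # the log contains blank lines between entries
        if line == "FAILED:":
            in_failed_section = True
            continue
        if in_failed_section:
            failed.append(line)
    return failed


# Tail of the converter output shown above.
LOG_TAIL = """\
[ SUCCESS ] Total execution time: 27.98 seconds.
[ SUCCESS ] Memory consumed: 1801 MB.

FAILED:
efficientdet-d0-tf
efficientdet-d1-tf
regnetx-3.2gf
rexnet-v1-x1.0
"""

names = failed_models(LOG_TAIL)
print(len(names), names)
```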

Only four models could not be converted.

$ sudo usermod -a -G users "$(whoami)"

$ sudo cp /opt/intel/openvino_2021/inference_engine/external/97-myriad-usbboot.rules /etc/udev/rules.d/
$ sudo udevadm control --reload-rules
$ sudo udevadm trigger
$ sudo ldconfig

$ lsusb
Bus 004 Device 001: ID 1d6b:0003 Linux Foundation 3.0 root hub
Bus 003 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub
Bus 002 Device 001: ID 1d6b:0003 Linux Foundation 3.0 root hub
Bus 001 Device 004: ID 03e7:2485 Intel Movidius MyriadX
Bus 001 Device 003: ID 8087:0026 Intel Corp.
Bus 001 Device 002: ID 046d:c534 Logitech, Inc. Unifying Receiver
Bus 001 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub
$ id mizutu
uid=1000(mizutu) gid=1000(mizutu) groups=1000(mizutu),4(adm),24(cdrom),27(sudo),30(dip),46(plugdev),100(users),120(lpadmin),131(lxd),132(sambashare)
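The `lsusb` check above can also be scripted. The sketch below looks for the Movidius vendor ID `03e7` seen in the listing. The function name `find_devices` is hypothetical, and `LSUSB` contains a few lines copied from the output above rather than a live query.

```python
import re

# Lines copied from the lsusb output above.
LSUSB = """\
Bus 001 Device 004: ID 03e7:2485 Intel Movidius MyriadX
Bus 001 Device 003: ID 8087:0026 Intel Corp.
Bus 001 Device 002: ID 046d:c534 Logitech, Inc. Unifying Receiver
"""

MYRIAD_VENDOR = "03e7"  # vendor ID shown for the MyriadX device in the listing

LINE = re.compile(
    r"^Bus (\d+) Device (\d+): ID ([0-9a-f]{4}):([0-9a-f]{4})\s*(.*)$"
)


def find_devices(lsusb_text, vendor_id):
    """Return (bus, device, product_id, description) for a given vendor ID."""
    found = []
    for line in lsusb_text.splitlines():
        match = LINE.match(line.strip())
        if match and match.group(3) == vendor_id:
            found.append(
                (match.group(1), match.group(2), match.group(4), match.group(5))
            )
    return found


devices = find_devices(LSUSB, MYRIAD_VENDOR)
print(devices if devices else "no Myriad device found")
```

On the target machine, the text could instead come from `subprocess.run(["lsusb"], capture_output=True, text=True).stdout`.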

$ python3

Python 3.8.5 (default, Jan 27 2021, 15:41:15)

[GCC 9.3.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import cv2

>>> print(cv2.getBuildInformation())

General configuration for OpenCV 4.5.2-openvino =====================================

Version control: 898c639f6ab195b81a1d3e9b2470a3f03123dd03

Platform:

Timestamp: 2021-03-19T02:52:41Z

Host: Linux 5.3.0-46-generic x86_64

CMake: 3.14.5

CMake generator: Ninja

CMake build tool: /opt/miniconda/envs/build/bin/ninja

Configuration: Release

CPU/HW features:

Baseline: SSE SSE2 SSE3 SSSE3 SSE4_1 POPCNT SSE4_2

requested: SSE4_2

Dispatched code generation: FP16 AVX AVX2 AVX512_SKX

requested: SSE4_1 SSE4_2 AVX FP16 AVX2 AVX512_SKX

FP16 (1 files): + FP16 AVX

AVX (5 files): + AVX

AVX2 (31 files): + FP16 FMA3 AVX AVX2

AVX512_SKX (7 files): + FP16 FMA3 AVX AVX2 AVX_512F AVX512_COMMON AVX512_SKX

C/C++:

Built as dynamic libs?: YES

C++ standard: 11

C++ Compiler: /usr/bin/c++ (ver 9.3.0)

C++ flags (Release): -fsigned-char -W -Wall -Werror=return-type -Werror=non-virtual-dtor -Werror=address -Werror=sequence-point -Wformat -Werror=format-security -Wmissing-declarations -Wundef -Winit-self -Wpointer-arith -Wshadow -Wsign-promo -Wuninitialized -Wsuggest-override -Wno-delete-non-virtual-dtor -Wno-comment -Wimplicit-fallthrough=3 -Wno-strict-overflow -fdiagnostics-show-option -Wno-long-long -pthread -fomit-frame-pointer -ffunction-sections -fdata-sections -msse -msse2 -msse3 -mssse3 -msse4.1 -mpopcnt -msse4.2 -fvisibility=hidden -fvisibility-inlines-hidden -fstack-protector-strong -fPIC -O2 -DNDEBUG -DNDEBUG -D_FORTIFY_SOURCE=2

C++ flags (Debug): -fsigned-char -W -Wall -Werror=return-type -Werror=non-virtual-dtor -Werror=address -Werror=sequence-point -Wformat -Werror=format-security -Wmissing-declarations -Wundef -Winit-self -Wpointer-arith -Wshadow -Wsign-promo -Wuninitialized -Wsuggest-override -Wno-delete-non-virtual-dtor -Wno-comment -Wimplicit-fallthrough=3 -Wno-strict-overflow -fdiagnostics-show-option -Wno-long-long -pthread -fomit-frame-pointer -ffunction-sections -fdata-sections -msse -msse2 -msse3 -mssse3 -msse4.1 -mpopcnt -msse4.2 -fvisibility=hidden -fvisibility-inlines-hidden -fstack-protector-strong -fPIC -g -O0 -DDEBUG -D_DEBUG

C Compiler: /usr/bin/cc

C flags (Release): -fsigned-char -W -Wall -Werror=return-type -Werror=address -Werror=sequence-point -Wformat -Werror=format-security -Wmissing-declarations -Wmissing-prototypes -Wstrict-prototypes -Wundef -Winit-self -Wpointer-arith -Wshadow -Wuninitialized -Wno-comment -Wimplicit-fallthrough=3 -Wno-strict-overflow -fdiagnostics-show-option -Wno-long-long -pthread -fomit-frame-pointer -ffunction-sections -fdata-sections -msse -msse2 -msse3 -mssse3 -msse4.1 -mpopcnt -msse4.2 -fvisibility=hidden -fstack-protector-strong -fPIC -O2 -DNDEBUG -DNDEBUG -D_FORTIFY_SOURCE=2

C flags (Debug): -fsigned-char -W -Wall -Werror=return-type -Werror=address -Werror=sequence-point -Wformat -Werror=format-security -Wmissing-declarations -Wmissing-prototypes -Wstrict-prototypes -Wundef -Winit-self -Wpointer-arith -Wshadow -Wuninitialized -Wno-comment -Wimplicit-fallthrough=3 -Wno-strict-overflow -fdiagnostics-show-option -Wno-long-long -pthread -fomit-frame-pointer -ffunction-sections -fdata-sections -msse -msse2 -msse3 -mssse3 -msse4.1 -mpopcnt -msse4.2 -fvisibility=hidden -fstack-protector-strong -fPIC -g -O0 -DDEBUG -D_DEBUG

Linker flags (Release): -Wl,--exclude-libs,libippicv.a -Wl,--exclude-libs,libippiw.a -Wl,--gc-sections -Wl,--as-needed -z noexecstack -z relro -z now

Linker flags (Debug): -Wl,--exclude-libs,libippicv.a -Wl,--exclude-libs,libippiw.a -Wl,--gc-sections -Wl,--as-needed -z noexecstack -z relro -z now

ccache: YES

Precompiled headers: NO

Extra dependencies: dl m pthread rt

3rdparty dependencies:

OpenCV modules:

To be built: calib3d core dnn features2d flann gapi highgui imgcodecs imgproc ml objdetect photo python3 stitching ts video videoio

Disabled: world

Disabled by dependency: -

Unavailable: java python2

Applications: tests perf_tests apps

Documentation: NO

Non-free algorithms: NO

GUI:

GTK+: YES (ver 3.24.20)

GThread : YES (ver 2.64.6)

GtkGlExt: NO

Media I/O:

ZLib: build (ver 1.2.11)

JPEG: build-libjpeg-turbo (ver 2.0.6-62)

PNG: build (ver 1.6.37)

HDR: YES

SUNRASTER: YES

PXM: YES

PFM: YES

Video I/O:

FFMPEG: YES

avcodec: YES (58.54.100)

avformat: YES (58.29.100)

avutil: YES (56.31.100)

swscale: YES (5.5.100)

avresample: YES (4.0.0)

GStreamer: YES (1.16.2)

v4l/v4l2: YES (linux/videodev2.h)

Intel Media SDK: YES (/mnt/nfs/msdk/lin-18.4.1/lib64/libmfx.so)

Parallel framework: pthreads

Trace: YES (with Intel ITT)

Other third-party libraries:

Intel IPP: 2020.0.0 Gold [2020.0.0]

at: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/build_release/3rdparty/ippicv/ippicv_lnx/icv

Intel IPP IW: sources (2020.0.0)

at: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/build_release/3rdparty/ippicv/ippicv_lnx/iw

VA: YES

Inference Engine: YES (2021030000 / 2.1.0)

* libs: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/deployment_tools/inference_engine/lib/intel64/libinference_engine.so

* includes: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/deployment_tools/inference_engine/include

nGraph: YES (0.0.0+a5d9f96)

* libs: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/deployment_tools/ngraph/lib/libngraph.so

* includes: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/deployment_tools/ngraph/include

Custom HAL: NO

Protobuf: build (3.5.1)

OpenCL: YES (INTELVA)

Include path: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/opencv/3rdparty/include/opencl/1.2

Link libraries: Dynamic load

Python 3:

Interpreter: /opt/miniconda/envs/py3_env/bin/python (ver 3.4.5)

Libraries: /opt/miniconda/envs/py3_env/lib/libpython3.4m.so (ver 3.4.5)

numpy: /opt/miniconda/envs/py3_env/lib/python3.4/site-packages/numpy/core/include (ver 1.11.3)

install path: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/build_release/install/python/python3

Python (for build): /usr/bin/python2.7

Install to: /home/jenkins/workspace/OpenCV/OpenVINO/2021.3/build/ubuntu20/build_release/install
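The build information is long, so it can help to pull out only the entries of interest, such as whether the Inference Engine backend is enabled. A minimal sketch follows, assuming the `key: value` layout shown above. `build_info_value` is a hypothetical helper, and `SAMPLE_INFO` is a short excerpt rather than the full text.

```python
def build_info_value(info, key):
    """Return the text after 'key:' on the first matching line, or None."""
    prefix = key + ":"
    for line in info.splitlines():
        stripped = line.strip()
        if stripped.startswith(prefix):
            return stripped[len(prefix):].strip()
    return None


# Excerpt of the build information printed above.
SAMPLE_INFO = """\
  Parallel framework:            pthreads
  Other third-party libraries:
    Inference Engine:            YES (2021030000 / 2.1.0)
    nGraph:                      YES (0.0.0+a5d9f96)
"""

for key in ("Inference Engine", "nGraph", "Parallel framework"):
    print(f"{key}: {build_info_value(SAMPLE_INFO, key)}")
```

With OpenCV available, the same function can be applied to the live text via `build_info_value(cv2.getBuildInformation(), "Inference Engine")`.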

$ gcc --version
gcc (Ubuntu 9.3.0-17ubuntu1~20.04) 9.3.0
Copyright (C) 2019 Free Software Foundation, Inc.
This is free software; see the source for copying conditions. There is NO
warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.
$ cmake --version
cmake version 3.16.3
CMake suite maintained and supported by Kitware (kitware.com/cmake).

Build the Demo Applications on Linux*
The officially supported Linux* build environment is the following:
・Ubuntu* 16.04 LTS 64-bit or CentOS* 7.4 64-bit
・GCC* 5.4.0 (for Ubuntu* 16.04) or GCC* 4.8.5 (for CentOS* 7.4)
・CMake* version 2.8 or higher.

INTEL_OPENVINO_DIR=/opt/intel/openvino_2021

Build the bundled demos by following the procedure on the official Open Model Zoo Demos page.

$ cd /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos
$ ./build_demos.sh -DENABLE_PYTHON=ON

Setting environment variables for building demos...

[setupvars.sh] OpenVINO environment initialized

-- The C compiler identification is GNU 9.3.0

-- The CXX compiler identification is GNU 9.3.0

-- Check for working C compiler: /usr/bin/cc

-- Check for working C compiler: /usr/bin/cc -- works

-- Detecting C compiler ABI info

-- Detecting C compiler ABI info - done

-- Detecting C compile features

-- Detecting C compile features - done

-- Check for working CXX compiler: /usr/bin/c++

-- Check for working CXX compiler: /usr/bin/c++ -- works

-- Detecting CXX compiler ABI info

-- Detecting CXX compiler ABI info - done

-- Detecting CXX compile features

-- Detecting CXX compile features - done

-- Looking for C++ include unistd.h

-- Looking for C++ include unistd.h - found

-- Looking for C++ include stdint.h

-- Looking for C++ include stdint.h - found

-- Looking for C++ include sys/types.h

-- Looking for C++ include sys/types.h - found

-- Looking for C++ include fnmatch.h

-- Looking for C++ include fnmatch.h - found

-- Looking for C++ include stddef.h

-- Looking for C++ include stddef.h - found

-- Check size of uint32_t

-- Check size of uint32_t - done

-- Looking for strtoll

-- Looking for strtoll - found

-- Found InferenceEngine: /opt/intel/openvino_2021/deployment_tools/inference_engine/lib/intel64/libinference_engine.so (Required is at least version "2.0")

-- Found OpenCV: /opt/intel/openvino_2021.2.185/opencv (found version "4.5.1") found components: core imgcodecs videoio

-- Found OpenCV: /opt/intel/openvino_2021.2.185/opencv (found version "4.5.1") found components: core imgproc

-- Looking for pthread.h

-- Looking for pthread.h - found

-- Performing Test CMAKE_HAVE_LIBC_PTHREAD

-- Performing Test CMAKE_HAVE_LIBC_PTHREAD - Failed

-- Looking for pthread_create in pthreads

-- Looking for pthread_create in pthreads - not found

-- Looking for pthread_create in pthread

-- Looking for pthread_create in pthread - found

-- Found Threads: TRUE

-- Found PythonInterp: /usr/bin/python3 (found suitable version "3.8.5", minimum required is "3.6")

-- Found PythonLibs: /usr/lib/x86_64-linux-gnu/libpython3.8.so (found suitable exact version "3.8.5")

-- Found OpenCV: /opt/intel/openvino_2021.2.185/opencv (found suitable version "4.5.1", minimum required is "4") found components: core imgproc

-- Found OpenCV: /opt/intel/openvino_2021.2.185/opencv (found suitable version "4.5.1", minimum required is "4") found components: core

-- Configuring done

-- Generating done

-- Build files have been written to: /home/mizutu/omz_demos_build

Scanning dependencies of target gflags_nothreads_static

Scanning dependencies of target monitors

Scanning dependencies of target pose_extractor

Scanning dependencies of target ctcdecode_numpy_impl

[ 1%] Building CXX object thirdparty/gflags/CMakeFiles/gflags_nothreads_static.dir/src/gflags.cc.o

[ 3%] Building CXX object thirdparty/gflags/CMakeFiles/gflags_nothreads_static.dir/src/gflags_reporting.cc.o

[ 3%] Building CXX object thirdparty/gflags/CMakeFiles/gflags_nothreads_static.dir/src/gflags_completions.cc.o

[ 4%] Building CXX object common/monitors/CMakeFiles/monitors.dir/src/cpu_monitor.cpp.o

[ 5%] Building CXX object common/monitors/CMakeFiles/monitors.dir/src/presenter.cpp.o

[ 6%] Building CXX object common/monitors/CMakeFiles/monitors.dir/src/memory_monitor.cpp.o

[ 7%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/binding.cpp.o

[ 8%] Building CXX object python_demos/human_pose_estimation_3d_demo/pose_extractor/CMakeFiles/pose_extractor.dir/wrapper.cpp.o

[ 9%] Building CXX object python_demos/human_pose_estimation_3d_demo/pose_extractor/CMakeFiles/pose_extractor.dir/src/extract_poses.cpp.o

[ 10%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/ctc_beam_search_decoder.cpp.o

[ 11%] Building CXX object python_demos/human_pose_estimation_3d_demo/pose_extractor/CMakeFiles/pose_extractor.dir/src/human_pose.cpp.o

[ 11%] Building CXX object python_demos/human_pose_estimation_3d_demo/pose_extractor/CMakeFiles/pose_extractor.dir/src/peak.cpp.o

[ 12%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/decoder_utils.cpp.o

[ 13%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/decoders_wrap.cpp.o

[ 13%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/path_trie.cpp.o

[ 14%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/scorer_base.cpp.o

[ 15%] Linking CXX shared module ../../../intel64/Release/lib/pose_extractor.so

[ 16%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/scorer_yoklm.cpp.o

[ 17%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/word_prefix_set.cpp.o

[ 17%] Built target pose_extractor

[ 18%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/yoklm/kenlm_v5_loader.cpp.o

[ 18%] Linking CXX static library ../../intel64/Release/lib/libgflags_nothreads.a

[ 18%] Built target gflags_nothreads_static

[ 19%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/yoklm/language_model.cpp.o

[ 19%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/yoklm/memory_section.cpp.o

[ 20%] Building CXX object python_demos/speech_recognition_demo/ctcdecode-numpy/CMakeFiles/ctcdecode_numpy_impl.dir/ctcdecode_numpy/yoklm/vocabulary.cpp.o

Scanning dependencies of target common

[ 21%] Building CXX object common/CMakeFiles/common.dir/src/args_helper.cpp.o

[ 22%] Building CXX object common/CMakeFiles/common.dir/src/images_capture.cpp.o

[ 23%] Building CXX object common/CMakeFiles/common.dir/src/performance_metrics.cpp.o

In file included from /usr/include/string.h:495,

from /usr/include/python3.8/Python.h:30,

from /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/python_demos/speech_recognition_demo/ctcdecode-numpy/ctcdecode_numpy/decoders_wrap.cpp:173:

In function ‘char* strncpy(char*, const char*, size_t)’,

inlined from ‘void SWIG_Python_FixMethods(PyMethodDef*, swig_const_info*, swig_type_info**, swig_type_info**)’ at /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/python_demos/speech_recognition_demo/ctcdecode-numpy/ctcdecode_numpy/decoders_wrap.cpp:12616:22,

inlined from ‘PyObject* PyInit__impl()’ at /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/python_demos/speech_recognition_demo/ctcdecode-numpy/ctcdecode_numpy/decoders_wrap.cpp:12711:25:

/usr/include/x86_64-linux-gnu/bits/string_fortified.h:106:34: warning: ‘char* __builtin_strncpy(char*, const char*, long unsigned int)’ output truncated before terminating nul copying 10 bytes from a string of the same length [-Wstringop-truncation]

106 | return __builtin___strncpy_chk (__dest, __src, __len, __bos (__dest));

| ~~~~~~~~~~~~~~~~~~~~~~~~^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

[ 24%] Linking CXX static library ../../intel64/Release/lib/libmonitors.a

[ 24%] Built target monitors

[ 25%] Linking CXX shared module ../../../intel64/Release/lib/ctcdecode_numpy/_impl.so

[ 25%] Built target ctcdecode_numpy_impl

Scanning dependencies of target ctcdecode_numpy

[ 25%] Built target ctcdecode_numpy

[ 26%] Linking CXX static library ../intel64/Release/lib/libcommon.a

[ 26%] Built target common

Scanning dependencies of target crossroad_camera_demo

Scanning dependencies of target mask_rcnn_demo

Scanning dependencies of target classification_demo

Scanning dependencies of target gaze_estimation_demo

Scanning dependencies of target text_detection_demo

Scanning dependencies of target models

Scanning dependencies of target interactive_face_detection_demo

Scanning dependencies of target human_pose_estimation_demo

[ 26%] Building CXX object classification_demo/CMakeFiles/classification_demo.dir/main.cpp.o

[ 26%] Building CXX object mask_rcnn_demo/CMakeFiles/mask_rcnn_demo.dir/main.cpp.o

[ 27%] Building CXX object crossroad_camera_demo/CMakeFiles/crossroad_camera_demo.dir/main.cpp.o

[ 27%] Building CXX object text_detection_demo/CMakeFiles/text_detection_demo.dir/main.cpp.o

[ 28%] Building CXX object human_pose_estimation_demo/CMakeFiles/human_pose_estimation_demo.dir/main.cpp.o

[ 29%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/main.cpp.o

[ 30%] Building CXX object common/models/CMakeFiles/models.dir/src/detection_model.cpp.o

[ 31%] Building CXX object interactive_face_detection_demo/CMakeFiles/interactive_face_detection_demo.dir/detectors.cpp.o

[ 32%] Building CXX object human_pose_estimation_demo/CMakeFiles/human_pose_estimation_demo.dir/src/human_pose.cpp.o

[ 33%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/exponential_averager.cpp.o

[ 34%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/eye_state_estimator.cpp.o

[ 35%] Building CXX object common/models/CMakeFiles/models.dir/src/detection_model_ssd.cpp.o

[ 36%] Building CXX object human_pose_estimation_demo/CMakeFiles/human_pose_estimation_demo.dir/src/human_pose_estimator.cpp.o

[ 37%] Building CXX object text_detection_demo/CMakeFiles/text_detection_demo.dir/src/cnn.cpp.o

[ 38%] Linking CXX executable ../intel64/Release/mask_rcnn_demo

[ 38%] Built target mask_rcnn_demo

[ 38%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/face_detector.cpp.o

[ 39%] Linking CXX executable ../intel64/Release/classification_demo

[ 40%] Linking CXX executable ../intel64/Release/crossroad_camera_demo

[ 40%] Built target classification_demo

Scanning dependencies of target multi_channel_common

[ 41%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/decoder.cpp.o

[ 41%] Built target crossroad_camera_demo

Scanning dependencies of target pedestrian_tracker_demo

[ 42%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/main.cpp.o

[ 43%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/face_inference_results.cpp.o

[ 44%] Building CXX object human_pose_estimation_demo/CMakeFiles/human_pose_estimation_demo.dir/src/peak.cpp.o

[ 45%] Building CXX object interactive_face_detection_demo/CMakeFiles/interactive_face_detection_demo.dir/face.cpp.o

[ 46%] Building CXX object common/models/CMakeFiles/models.dir/src/detection_model_yolo.cpp.o

[ 47%] Building CXX object text_detection_demo/CMakeFiles/text_detection_demo.dir/src/text_detection.cpp.o

[ 48%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/gaze_estimator.cpp.o

[ 49%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/graph.cpp.o

[ 50%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/head_pose_estimator.cpp.o

[ 51%] Building CXX object human_pose_estimation_demo/CMakeFiles/human_pose_estimation_demo.dir/src/render_human_pose.cpp.o

[ 52%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/cnn.cpp.o

[ 53%] Building CXX object interactive_face_detection_demo/CMakeFiles/interactive_face_detection_demo.dir/main.cpp.o

[ 53%] Linking CXX executable ../intel64/Release/human_pose_estimation_demo

[ 53%] Built target human_pose_estimation_demo

Scanning dependencies of target security_barrier_camera_demo

[ 54%] Building CXX object security_barrier_camera_demo/CMakeFiles/security_barrier_camera_demo.dir/main.cpp.o

[ 55%] Building CXX object text_detection_demo/CMakeFiles/text_detection_demo.dir/src/text_recognition.cpp.o

[ 56%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/ie_wrapper.cpp.o

[ 57%] Building CXX object common/models/CMakeFiles/models.dir/src/segmentation_model.cpp.o

[ 58%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/landmarks_estimator.cpp.o

[ 59%] Linking CXX executable ../intel64/Release/text_detection_demo

[ 59%] Built target text_detection_demo

Scanning dependencies of target segmentation_demo

[ 60%] Building CXX object segmentation_demo/CMakeFiles/segmentation_demo.dir/main.cpp.o

[ 61%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/input.cpp.o

[ 62%] Building CXX object interactive_face_detection_demo/CMakeFiles/interactive_face_detection_demo.dir/visualizer.cpp.o

[ 63%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/output.cpp.o

[ 64%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/detector.cpp.o

[ 64%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/results_marker.cpp.o

[ 64%] Linking CXX static library ../../intel64/Release/lib/libmodels.a

[ 64%] Built target models

Scanning dependencies of target smart_classroom_demo

[ 65%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/main.cpp.o

[ 66%] Building CXX object gaze_estimation_demo/CMakeFiles/gaze_estimation_demo.dir/src/utils.cpp.o

[ 67%] Linking CXX executable ../intel64/Release/segmentation_demo

[ 67%] Built target segmentation_demo

Scanning dependencies of target super_resolution_demo

[ 68%] Building CXX object super_resolution_demo/CMakeFiles/super_resolution_demo.dir/main.cpp.o

[ 69%] Linking CXX executable ../intel64/Release/interactive_face_detection_demo

Scanning dependencies of target monitors_extension

[ 70%] Linking CXX executable ../intel64/Release/gaze_estimation_demo

[ 71%] Building CXX object python_demos/common/monitors_extension/CMakeFiles/monitors_extension.dir/monitors_extension.cpp.o

[ 71%] Built target interactive_face_detection_demo

Scanning dependencies of target pipelines

[ 72%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/perf_timer.cpp.o

[ 73%] Building CXX object common/pipelines/CMakeFiles/pipelines.dir/src/async_pipeline.cpp.o

[ 73%] Built target gaze_estimation_demo

[ 73%] Building CXX object common/pipelines/CMakeFiles/pipelines.dir/src/config_factory.cpp.o

[ 73%] Building CXX object multi_channel/common/CMakeFiles/multi_channel_common.dir/threading.cpp.o

[ 74%] Linking CXX static library ../../intel64/Release/lib/libmulti_channel_common.a

[ 74%] Built target multi_channel_common

Scanning dependencies of target multi_channel_face_detection_demo

[ 75%] Building CXX object multi_channel/face_detection_demo/CMakeFiles/multi_channel_face_detection_demo.dir/main.cpp.o

[ 75%] Linking CXX shared module ../../../intel64/Release/lib/monitors_extension.so

[ 75%] Built target monitors_extension

Scanning dependencies of target multi_channel_human_pose_estimation_demo

[ 76%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/human_pose.cpp.o

[ 77%] Building CXX object common/pipelines/CMakeFiles/pipelines.dir/src/requests_pool.cpp.o

[ 78%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/main.cpp.o

[ 79%] Linking CXX executable ../intel64/Release/security_barrier_camera_demo

[ 79%] Linking CXX executable ../intel64/Release/super_resolution_demo

[ 79%] Built target security_barrier_camera_demo

Scanning dependencies of target multi_channel_object_detection_demo_yolov3

[ 80%] Building CXX object multi_channel/object_detection_demo_yolov3/CMakeFiles/multi_channel_object_detection_demo_yolov3.dir/main.cpp.o

[ 80%] Built target super_resolution_demo

[ 80%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/peak.cpp.o

[ 80%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/distance.cpp.o

[ 81%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/kuhn_munkres.cpp.o

[ 82%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/action_detector.cpp.o

[ 83%] Linking CXX static library ../../intel64/Release/lib/libpipelines.a

[ 83%] Built target pipelines

Scanning dependencies of target object_detection_demo

[ 84%] Building CXX object object_detection_demo/CMakeFiles/object_detection_demo.dir/main.cpp.o

[ 85%] Linking CXX executable ../../intel64/Release/multi_channel_face_detection_demo

[ 86%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/tracker.cpp.o

[ 86%] Built target multi_channel_face_detection_demo

Scanning dependencies of target segmentation_demo_async

[ 86%] Building CXX object segmentation_demo_async/CMakeFiles/segmentation_demo_async.dir/main.cpp.o

[ 87%] Building CXX object pedestrian_tracker_demo/CMakeFiles/pedestrian_tracker_demo.dir/src/utils.cpp.o

[ 88%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/postprocess.cpp.o

[ 89%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/postprocessor.cpp.o

[ 90%] Building CXX object multi_channel/human_pose_estimation_demo/CMakeFiles/multi_channel_human_pose_estimation_demo.dir/render_human_pose.cpp.o

[ 91%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/align_transform.cpp.o

[ 92%] Linking CXX executable ../../intel64/Release/multi_channel_human_pose_estimation_demo

[ 92%] Built target multi_channel_human_pose_estimation_demo

[ 93%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/cnn.cpp.o

[ 94%] Linking CXX executable ../intel64/Release/object_detection_demo

[ 94%] Built target object_detection_demo

[ 94%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/detector.cpp.o

[ 95%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/logger.cpp.o

[ 96%] Linking CXX executable ../intel64/Release/segmentation_demo_async

[ 96%] Built target segmentation_demo_async

[ 97%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/reid_gallery.cpp.o

[ 97%] Linking CXX executable ../../intel64/Release/multi_channel_object_detection_demo_yolov3

[ 98%] Building CXX object smart_classroom_demo/CMakeFiles/smart_classroom_demo.dir/src/tracker.cpp.o

[ 98%] Built target multi_channel_object_detection_demo_yolov3

[ 99%] Linking CXX executable ../intel64/Release/pedestrian_tracker_demo

[ 99%] Built target pedestrian_tracker_demo

[100%] Linking CXX executable ../intel64/Release/smart_classroom_demo

[100%] Built target smart_classroom_demo

Scanning dependencies of target ie_samples

[100%] Built target ie_samples

Build completed, you can find binaries for all demos in the /home/mizutu/omz_demos_build/intel64/Release subfolder.

omz_demos.sh
#!/bin/sh
echo [OMZ_demos.sh] Open Model Zoo Demos environment initialized
export PYTHONPATH=$PYTHONPATH:$HOME/omz_demos_build/intel64/Release/lib
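After sourcing a script like the one above, it may be worth confirming that the demo library folder really ended up on `PYTHONPATH`. The following sketch performs that check on a given string. `on_pythonpath` is a hypothetical helper, and the sample value is constructed here for illustration, with `/some/other/path` standing in for whatever was already set.

```python
import os


def on_pythonpath(directory, pythonpath):
    """Return True if directory is one of the colon-separated PYTHONPATH entries."""
    target = os.path.normpath(directory)
    entries = [os.path.normpath(p) for p in pythonpath.split(":") if p]
    return target in entries


# Folder exported by the script, using the home directory seen in the build log.
lib_dir = "/home/mizutu/omz_demos_build/intel64/Release/lib"

# Illustrative value; the first entry is a placeholder for existing content.
value = "/some/other/path:" + lib_dir

print(on_pythonpath(lib_dir, value))
```

In a real session, the second argument would be `os.environ.get("PYTHONPATH", "")`.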

~/model/intel

├── FP16

│ ├── action-recognition-0001-decoder.bin

│ ├── action-recognition-0001-decoder.xml

│ ├── action-recognition-0001-encoder.bin

│ ├── action-recognition-0001-encoder.xml

│ ├── age-gender-recognition-retail-0013.bin

│ ├── age-gender-recognition-retail-0013.xml

│ ├── asl-recognition-0004.bin

│ ├── asl-recognition-0004.xml

│ ├── bert-large-uncased-whole-word-masking-squad-0001.bin

│ ├── bert-large-uncased-whole-word-masking-squad-0001.xml

│ ├── bert-large-uncased-whole-word-masking-squad-emb-0001.bin

│ ├── bert-large-uncased-whole-word-masking-squad-emb-0001.xml

│ ├── bert-small-uncased-whole-word-masking-squad-0001.bin

│ ├── bert-small-uncased-whole-word-masking-squad-0001.xml

│ ├── bert-small-uncased-whole-word-masking-squad-0002.bin

│ ├── bert-small-uncased-whole-word-masking-squad-0002.xml

│ ├── driver-action-recognition-adas-0002-decoder.bin

│ ├── driver-action-recognition-adas-0002-decoder.xml

│ ├── driver-action-recognition-adas-0002-encoder.bin

│ ├── driver-action-recognition-adas-0002-encoder.xml

│ ├── emotions-recognition-retail-0003.bin

│ ├── emotions-recognition-retail-0003.xml

│ ├── face-detection-0200.bin

│ ├── face-detection-0200.xml

│ ├── face-detection-0202.bin

│ ├── face-detection-0202.xml

│ ├── face-detection-0204.bin

│ ├── face-detection-0204.xml

│ ├── face-detection-0205.bin

│ ├── face-detection-0205.xml

│ ├── face-detection-0206.bin

│ ├── face-detection-0206.xml

│ ├── face-detection-adas-0001.bin

│ ├── face-detection-adas-0001.xml

│ ├── face-detection-retail-0004.bin

│ ├── face-detection-retail-0004.xml

│ ├── face-detection-retail-0005.bin

│ ├── face-detection-retail-0005.xml

│ ├── face-reidentification-retail-0095.bin

│ ├── face-reidentification-retail-0095.xml

│ ├── facial-landmarks-35-adas-0002.bin

│ ├── facial-landmarks-35-adas-0002.xml

│ ├── faster-rcnn-resnet101-coco-sparse-60-0001.bin

│ ├── faster-rcnn-resnet101-coco-sparse-60-0001.xml

│ ├── formula-recognition-medium-scan-0001-im2latex-decoder.bin

│ ├── formula-recognition-medium-scan-0001-im2latex-decoder.xml

│ ├── formula-recognition-medium-scan-0001-im2latex-encoder.bin

│ ├── formula-recognition-medium-scan-0001-im2latex-encoder.xml

│ ├── formula-recognition-polynomials-handwritten-0001-decoder.bin

│ ├── formula-recognition-polynomials-handwritten-0001-decoder.xml

│ ├── formula-recognition-polynomials-handwritten-0001-encoder.bin

│ ├── formula-recognition-polynomials-handwritten-0001-encoder.xml

│ ├── gaze-estimation-adas-0002.bin

│ ├── gaze-estimation-adas-0002.xml

│ ├── handwritten-japanese-recognition-0001.bin

│ ├── handwritten-japanese-recognition-0001.xml

│ ├── handwritten-score-recognition-0003.bin

│ ├── handwritten-score-recognition-0003.xml

│ ├── handwritten-simplified-chinese-recognition-0001.bin

│ ├── handwritten-simplified-chinese-recognition-0001.xml

│ ├── head-pose-estimation-adas-0001.bin

│ ├── head-pose-estimation-adas-0001.xml

│ ├── horizontal-text-detection-0001.bin

│ ├── horizontal-text-detection-0001.xml

│ ├── human-pose-estimation-0001.bin

│ ├── human-pose-estimation-0001.xml

│ ├── human-pose-estimation-0005.bin

│ ├── human-pose-estimation-0005.xml

│ ├── human-pose-estimation-0006.bin

│ ├── human-pose-estimation-0006.xml

│ ├── human-pose-estimation-0007.bin

│ ├── human-pose-estimation-0007.xml

│ ├── icnet-camvid-ava-0001.bin

│ ├── icnet-camvid-ava-0001.xml

│ ├── icnet-camvid-ava-sparse-30-0001.bin

│ ├── icnet-camvid-ava-sparse-30-0001.xml

│ ├── icnet-camvid-ava-sparse-60-0001.bin

│ ├── icnet-camvid-ava-sparse-60-0001.xml

│ ├── image-retrieval-0001.bin

│ ├── image-retrieval-0001.xml

│ ├── instance-segmentation-security-0002.bin

│ ├── instance-segmentation-security-0002.xml

│ ├── instance-segmentation-security-0091.bin

│ ├── instance-segmentation-security-0091.xml

│ ├── instance-segmentation-security-0228.bin

│ ├── instance-segmentation-security-0228.xml

│ ├── instance-segmentation-security-1039.bin

│ ├── instance-segmentation-security-1039.xml

│ ├── instance-segmentation-security-1040.bin

│ ├── instance-segmentation-security-1040.xml

│ ├── landmarks-regression-retail-0009.bin

│ ├── landmarks-regression-retail-0009.xml

│ ├── license-plate-recognition-barrier-0001.bin

│ ├── license-plate-recognition-barrier-0001.xml

│ ├── machine-translation-nar-de-en-0001.bin

│ ├── machine-translation-nar-de-en-0001.xml

│ ├── machine-translation-nar-en-de-0001.bin

│ ├── machine-translation-nar-en-de-0001.xml

│ ├── machine-translation-nar-en-ru-0001.bin

│ ├── machine-translation-nar-en-ru-0001.xml

│ ├── machine-translation-nar-ru-en-0001.bin

│ ├── machine-translation-nar-ru-en-0001.xml

│ ├── pedestrian-and-vehicle-detector-adas-0001.bin

│ ├── pedestrian-and-vehicle-detector-adas-0001.xml

│ ├── pedestrian-detection-adas-0002.bin

│ ├── pedestrian-detection-adas-0002.xml

│ ├── person-attributes-recognition-crossroad-0230.bin

│ ├── person-attributes-recognition-crossroad-0230.xml

│ ├── person-attributes-recognition-crossroad-0234.bin

│ ├── person-attributes-recognition-crossroad-0234.xml

│ ├── person-attributes-recognition-crossroad-0238.bin

│ ├── person-attributes-recognition-crossroad-0238.xml

│ ├── person-detection-0106.bin

│ ├── person-detection-0106.xml

│ ├── person-detection-0200.bin

│ ├── person-detection-0200.xml

│ ├── person-detection-0201.bin

│ ├── person-detection-0201.xml

│ ├── person-detection-0202.bin

│ ├── person-detection-0202.xml

│ ├── person-detection-0203.bin

│ ├── person-detection-0203.xml

│ ├── person-detection-action-recognition-0005.bin

│ ├── person-detection-action-recognition-0005.xml

│ ├── person-detection-action-recognition-0006.bin

│ ├── person-detection-action-recognition-0006.xml

│ ├── person-detection-action-recognition-teacher-0002.bin

│ ├── person-detection-action-recognition-teacher-0002.xml

│ ├── person-detection-asl-0001.bin

│ ├── person-detection-asl-0001.xml

│ ├── person-detection-raisinghand-recognition-0001.bin

│ ├── person-detection-raisinghand-recognition-0001.xml

│ ├── person-detection-retail-0002.bin

│ ├── person-detection-retail-0002.xml

│ ├── person-detection-retail-0013.bin

│ ├── person-detection-retail-0013.xml

│ ├── person-reidentification-retail-0277.bin

│ ├── person-reidentification-retail-0277.xml

│ ├── person-reidentification-retail-0286.bin

│ ├── person-reidentification-retail-0286.xml

│ ├── person-reidentification-retail-0287.bin

│ ├── person-reidentification-retail-0287.xml

│ ├── person-reidentification-retail-0288.bin

│ ├── person-reidentification-retail-0288.xml

│ ├── person-vehicle-bike-detection-2000.bin

│ ├── person-vehicle-bike-detection-2000.xml

│ ├── person-vehicle-bike-detection-2001.bin

│ ├── person-vehicle-bike-detection-2001.xml

│ ├── person-vehicle-bike-detection-2002.bin

│ ├── person-vehicle-bike-detection-2002.xml

│ ├── person-vehicle-bike-detection-2003.bin

│ ├── person-vehicle-bike-detection-2003.xml

│ ├── person-vehicle-bike-detection-2004.bin

│ ├── person-vehicle-bike-detection-2004.xml

│ ├── person-vehicle-bike-detection-crossroad-0078.bin

│ ├── person-vehicle-bike-detection-crossroad-0078.xml

│ ├── person-vehicle-bike-detection-crossroad-1016.bin

│ ├── person-vehicle-bike-detection-crossroad-1016.xml

│ ├── person-vehicle-bike-detection-crossroad-yolov3-1020.bin

│ ├── person-vehicle-bike-detection-crossroad-yolov3-1020.xml

│ ├── product-detection-0001.bin

│ ├── product-detection-0001.xml

│ ├── road-segmentation-adas-0001.bin

│ ├── road-segmentation-adas-0001.xml

│ ├── semantic-segmentation-adas-0001.bin

│ ├── semantic-segmentation-adas-0001.xml

│ ├── single-image-super-resolution-1032.bin

│ ├── single-image-super-resolution-1032.xml

│ ├── single-image-super-resolution-1033.bin

│ ├── single-image-super-resolution-1033.xml

│ ├── text-detection-0003.bin

│ ├── text-detection-0003.xml

│ ├── text-detection-0004.bin

│ ├── text-detection-0004.xml

│ ├── text-image-super-resolution-0001.bin

│ ├── text-image-super-resolution-0001.xml

│ ├── text-recognition-0012.bin

│ ├── text-recognition-0012.xml

│ ├── text-recognition-0013.bin

│ ├── text-recognition-0013.xml

│ ├── text-spotting-0004-detector.bin

│ ├── text-spotting-0004-detector.xml

│ ├── text-spotting-0004-recognizer-decoder.bin

│ ├── text-spotting-0004-recognizer-decoder.xml

│ ├── text-spotting-0004-recognizer-encoder.bin

│ ├── text-spotting-0004-recognizer-encoder.xml

│ ├── text-to-speech-en-0001-duration-prediction.bin

│ ├── text-to-speech-en-0001-duration-prediction.xml

│ ├── text-to-speech-en-0001-generation.bin

│ ├── text-to-speech-en-0001-generation.xml

│ ├── text-to-speech-en-0001-regression.bin

│ ├── text-to-speech-en-0001-regression.xml

│ ├── unet-camvid-onnx-0001.bin

│ ├── unet-camvid-onnx-0001.xml

│ ├── vehicle-attributes-recognition-barrier-0039.bin

│ ├── vehicle-attributes-recognition-barrier-0039.xml

│ ├── vehicle-attributes-recognition-barrier-0042.bin

│ ├── vehicle-attributes-recognition-barrier-0042.xml

│ ├── vehicle-detection-0200.bin

│ ├── vehicle-detection-0200.xml

│ ├── vehicle-detection-0201.bin

│ ├── vehicle-detection-0201.xml

│ ├── vehicle-detection-0202.bin

│ ├── vehicle-detection-0202.xml

│ ├── vehicle-detection-adas-0002.bin

│ ├── vehicle-detection-adas-0002.xml

│ ├── vehicle-license-plate-detection-barrier-0106.bin

│ ├── vehicle-license-plate-detection-barrier-0106.xml

│ ├── weld-porosity-detection-0001.bin

│ ├── weld-porosity-detection-0001.xml

│ ├── yolo-v2-ava-0001.bin

│ ├── yolo-v2-ava-0001.xml

│ ├── yolo-v2-ava-sparse-35-0001.bin

│ ├── yolo-v2-ava-sparse-35-0001.xml

│ ├── yolo-v2-ava-sparse-70-0001.bin

│ ├── yolo-v2-ava-sparse-70-0001.xml

│ ├── yolo-v2-tiny-ava-0001.bin

│ ├── yolo-v2-tiny-ava-0001.xml

│ ├── yolo-v2-tiny-ava-sparse-30-0001.bin

│ ├── yolo-v2-tiny-ava-sparse-30-0001.xml

│ ├── yolo-v2-tiny-ava-sparse-60-0001.bin

│ ├── yolo-v2-tiny-ava-sparse-60-0001.xml

│ ├── yolo-v2-tiny-vehicle-detection-0001.bin

│ └── yolo-v2-tiny-vehicle-detection-0001.xml

└── FP32

  ├── action-recognition-0001-decoder.bin

  ├── action-recognition-0001-decoder.xml

  ├── action-recognition-0001-encoder.bin

  ├── action-recognition-0001-encoder.xml

  ├── age-gender-recognition-retail-0013.bin

  ├── age-gender-recognition-retail-0013.xml

  ├── asl-recognition-0004.bin

  ├── asl-recognition-0004.xml

  ├── bert-large-uncased-whole-word-masking-squad-0001.bin

  ├── bert-large-uncased-whole-word-masking-squad-0001.xml

  ├── bert-large-uncased-whole-word-masking-squad-emb-0001.bin

  ├── bert-large-uncased-whole-word-masking-squad-emb-0001.xml

  ├── bert-small-uncased-whole-word-masking-squad-0001.bin

  ├── bert-small-uncased-whole-word-masking-squad-0001.xml

  ├── bert-small-uncased-whole-word-masking-squad-0002.bin

  ├── bert-small-uncased-whole-word-masking-squad-0002.xml

  ├── driver-action-recognition-adas-0002-decoder.bin

  ├── driver-action-recognition-adas-0002-decoder.xml

  ├── driver-action-recognition-adas-0002-encoder.bin

  ├── driver-action-recognition-adas-0002-encoder.xml

  ├── emotions-recognition-retail-0003.bin

  ├── emotions-recognition-retail-0003.xml

  ├── face-detection-0200.bin

  ├── face-detection-0200.xml

  ├── face-detection-0202.bin

  ├── face-detection-0202.xml

  ├── face-detection-0204.bin

  ├── face-detection-0204.xml

  ├── face-detection-0205.bin

  ├── face-detection-0205.xml

  ├── face-detection-0206.bin

  ├── face-detection-0206.xml

  ├── face-detection-adas-0001.bin

  ├── face-detection-adas-0001.xml

  ├── face-detection-retail-0004.bin

  ├── face-detection-retail-0004.xml

  ├── face-detection-retail-0005.bin

  ├── face-detection-retail-0005.xml

  ├── face-reidentification-retail-0095.bin

  ├── face-reidentification-retail-0095.xml

  ├── facial-landmarks-35-adas-0002.bin

  ├── facial-landmarks-35-adas-0002.xml

  ├── faster-rcnn-resnet101-coco-sparse-60-0001.bin

  ├── faster-rcnn-resnet101-coco-sparse-60-0001.xml

  ├── formula-recognition-medium-scan-0001-im2latex-decoder.bin

  ├── formula-recognition-medium-scan-0001-im2latex-decoder.xml

  ├── formula-recognition-medium-scan-0001-im2latex-encoder.bin

  ├── formula-recognition-medium-scan-0001-im2latex-encoder.xml

  ├── formula-recognition-polynomials-handwritten-0001-decoder.bin

  ├── formula-recognition-polynomials-handwritten-0001-decoder.xml

  ├── formula-recognition-polynomials-handwritten-0001-encoder.bin

  ├── formula-recognition-polynomials-handwritten-0001-encoder.xml

  ├── gaze-estimation-adas-0002.bin

  ├── gaze-estimation-adas-0002.xml

  ├── handwritten-japanese-recognition-0001.bin

  ├── handwritten-japanese-recognition-0001.xml

  ├── handwritten-score-recognition-0003.bin

  ├── handwritten-score-recognition-0003.xml

  ├── handwritten-simplified-chinese-recognition-0001.bin

  ├── handwritten-simplified-chinese-recognition-0001.xml

  ├── head-pose-estimation-adas-0001.bin

  ├── head-pose-estimation-adas-0001.xml

  ├── horizontal-text-detection-0001.bin

  ├── horizontal-text-detection-0001.xml

  ├── human-pose-estimation-0001.bin

  ├── human-pose-estimation-0001.xml

  ├── human-pose-estimation-0005.bin

  ├── human-pose-estimation-0005.xml

  ├── human-pose-estimation-0006.bin

  ├── human-pose-estimation-0006.xml

  ├── human-pose-estimation-0007.bin

  ├── human-pose-estimation-0007.xml

  ├── icnet-camvid-ava-0001.bin

  ├── icnet-camvid-ava-0001.xml

  ├── icnet-camvid-ava-sparse-30-0001.bin

  ├── icnet-camvid-ava-sparse-30-0001.xml

  ├── icnet-camvid-ava-sparse-60-0001.bin

  ├── icnet-camvid-ava-sparse-60-0001.xml

  ├── image-retrieval-0001.bin

  ├── image-retrieval-0001.xml

  ├── instance-segmentation-security-0002.bin

  ├── instance-segmentation-security-0002.xml

  ├── instance-segmentation-security-0091.bin

  ├── instance-segmentation-security-0091.xml