
Running the Open Model Zoo Demos

Run the demo software bundled with the OpenVINO™ Toolkit to learn how an application uses the Inference Engine.

※ Last updated: 2021/04/15

This demo runs a 3D human pose estimation model with OpenVINO™. (machine translation)

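Pre-trained models used

How the demo works

- The demo application expects a 3D human pose estimation model in the Intermediate Representation (IR) format.

- It reads video frames one by one and estimates the 3D poses of the people in each frame.

- It visualizes the results in a graphical window that shows the 2D poses overlaid on the input image, together with a canvas showing the corresponding 3D poses.

Running the demo

(Prerequisite) To run this demo application, you must first build its native Python extension module.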

OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/human_pose_estimation_3d_demo/python/

- Executable: python3 human_pose_estimation_3d_demo.py

- Running the application with the -h option displays its usage message.

- Example run (Ubuntu, Core™ i5-10210U)

$ python3 human_pose_estimation_3d_demo.py -m ~/model/public/FP32/human-pose-estimation-3d-0001.xml -i ~/Videos/driver.mp4

OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/human_pose_estimation_3d_demo/

- Executable: python3 human_pose_estimation_3d_demo.py

- Running the application with the -h option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/human_pose_estimation_3d_demo$ python3 human_pose_estimation_3d_demo.py -h

usage: human_pose_estimation_3d_demo.py [-h] -m MODEL [-i INPUT [INPUT ...]]

[-d DEVICE]

[--height_size HEIGHT_SIZE]

[--extrinsics_path EXTRINSICS_PATH]

[--fx FX] [--no_show]

[-u UTILIZATION_MONITORS]

Lightweight 3D human pose estimation demo. Press esc to exit, "p" to (un)pause

video or process next image.

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Required. Path to an .xml file with a trained model.

-i INPUT [INPUT ...], --input INPUT [INPUT ...]

Required. Path to input image, images, video file or

camera id.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on: CPU,

GPU, FPGA, HDDL or MYRIAD. The demo will look for a

suitable plugin for device specified (by default, it

is CPU).

--height_size HEIGHT_SIZE

Optional. Network input layer height size.

--extrinsics_path EXTRINSICS_PATH

Optional. Path to file with camera extrinsics.

--fx FX Optional. Camera focal length.

--no_show Optional. Do not display output.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.
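The --fx option sets the camera focal length. As a rough illustration of what that value means, the following minimal sketch projects a 3D point in camera coordinates onto the image using a standard pinhole camera model. It is not the demo's own code. It assumes square pixels (fy equal to fx) and a principal point at the image center. The function name project_point is hypothetical.

```python
from typing import Tuple


def project_point(point: Tuple[float, float, float], fx: float,
                  width: int, height: int) -> Tuple[float, float]:
    """Project a camera-space 3D point (X, Y, Z) to pixel coordinates (u, v).

    Hypothetical helper. Assumes a pinhole camera with square pixels
    (fy == fx) and the principal point at the image center.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (Z > 0)")
    cx, cy = width / 2.0, height / 2.0  # assumed principal point
    u = fx * x / z + cx
    v = fx * y / z + cy
    return u, v


if __name__ == "__main__":
    # A point 2 units in front of the camera and 0.5 units to the right
    print(project_point((0.5, 0.0, 2.0), fx=1000.0, width=1280, height=720))
```

In this model, doubling fx doubles each point's pixel offset from the image center. That is why the focal length matters when 2D and 3D poses are related to each other.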

To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- Demo output

The demo uses OpenCV to display the detected poses and the current inference performance.

- Example run (Ubuntu, Core™ i5-10210U)

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/human_pose_estimation_3d_demo$ python3 human_pose_estimation_3d_demo.py -m ~/model/public/FP32/human-pose-estimation-3d-0001.xml -i ~/Videos/driver.mp4

- Example run (Ubuntu, Core™ i7-6700)

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/human_pose_estimation_3d_demo$ python3 human_pose_estimation_3d_demo.py -m ~/model/public/FP32/human-pose-estimation-3d-0001.xml -i ~/Videos/driver.mp4

- Example run (Ubuntu, Celeron® J4005)

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/human_pose_estimation_3d_demo$ python3 human_pose_estimation_3d_demo.py -m ~/model/public/FP32/human-pose-estimation-3d-0001.xml -i ~/Videos/driver.mp4

- Example run (Ubuntu, Celeron® J4005 + NCS2)

Command changes: /FP32 → /FP16, add -d MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/human_pose_estimation_3d_demo$ python3 human_pose_estimation_3d_demo.py -m ~/model/public/FP16/human-pose-estimation-3d-0001.xml -i ~/Videos/driver.mp4 -d MYRIAD

Speed comparison

| Item | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
| fps | 13.5 | 6.6 | 1.1 | 3.9 | 1.5 |

A demo application of an action recognition algorithm that classifies the actions performed in an input video.

Pre-trained models used

Running the demo

OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/action_recognition_demo/python/

- Executable: python3 action_recognition_demo.py

- Running the application with the -h option displays the following usage message.

$ python3 action_recognition_demo.py -h

usage: action_recognition_demo.py [-h] -i INPUT [--loop] [-o OUTPUT]

[-limit OUTPUT_LIMIT] -at

{en-de,en-mean,i3d-rgb} -m_en M_ENCODER

[-m_de M_DECODER | --seq DECODER_SEQ_SIZE]

[-l CPU_EXTENSION] [-d DEVICE] [-lb LABELS]

[--no_show] [-s LABEL_SMOOTHING]

[-u UTILIZATION_MONITORS]

Options:

-h, --help Show this help message and exit.

-i INPUT, --input INPUT

Required. An input to process. The input must be a

single image, a folder of images, video file or camera

id.

--loop Optional. Enable reading the input in a loop.

-o OUTPUT, --output OUTPUT

Optional. Name of output to save.

-limit OUTPUT_LIMIT, --output_limit OUTPUT_LIMIT

Optional. Number of frames to store in output. If 0 is

set, all frames are stored.

-at {en-de,en-mean,i3d-rgb}, --architecture_type {en-de,en-mean,i3d-rgb}

Required. Specify architecture type.

-m_en M_ENCODER, --m_encoder M_ENCODER

Required. Path to encoder model.

-m_de M_DECODER, --m_decoder M_DECODER

Optional. Path to decoder model. Only for -at en-de.

--seq DECODER_SEQ_SIZE

Optional. Length of sequence that decoder takes as

input.

-l CPU_EXTENSION, --cpu_extension CPU_EXTENSION

Optional. For CPU custom layers, if any. Absolute path

to a shared library with the kernels implementation.

-d DEVICE, --device DEVICE

Optional. Specify a target device to infer on. CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will

look for a suitable plugin for the device specified.

Default value is CPU.

-lb LABELS, --labels LABELS

Optional. Path to file with label names.

--no_show Optional. Don't show output.

-s LABEL_SMOOTHING, --smooth LABEL_SMOOTHING

Optional. Number of frames used for output label

smoothing.

-u UTILIZATION_MONITORS, --utilization-monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.
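With -at en-mean, the encoder embeddings are averaged over a time window instead of being passed to a decoder. The 2021.2 help for this demo describes it as "simple averaging of encoder's outputs over a time window". The following minimal sketch shows such a sliding window. It is not the demo's implementation. The class name SlidingMean and the window length of 16 are hypothetical choices. In the example runs, the first prediction is printed at Frame 15, which is what a 16-frame window counted from frame 0 would produce. That match is suggestive only.

```python
from collections import deque
from typing import Deque, List, Optional


class SlidingMean:
    """Average the last `size` encoder embeddings (hypothetical helper)."""

    def __init__(self, size: int = 16) -> None:
        self.size = size
        # deque(maxlen=...) drops the oldest embedding automatically
        self.window: Deque[List[float]] = deque(maxlen=size)

    def push(self, embedding: List[float]) -> Optional[List[float]]:
        """Add one frame's embedding; return the mean once the window is full."""
        self.window.append(embedding)
        if len(self.window) < self.size:
            return None  # not enough frames yet
        dim = len(embedding)
        return [sum(e[i] for e in self.window) / self.size for i in range(dim)]


if __name__ == "__main__":
    window = SlidingMean(size=16)
    for frame_id in range(20):
        mean = window.push([float(frame_id), 1.0])
        if mean is not None:
            print(frame_id, mean)
```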

- Example run (Ubuntu, Core™ i5-10210U)

$ python3 action_recognition_demo.py -m_en ~/model/intel/FP32/driver-action-recognition-adas-0002-encoder.xml -at en-de -m_de ~/model/intel/FP32/driver-action-recognition-adas-0002-decoder.xml -i ~/Videos/driver.mp4 -lb driver_actions.txt

Reading IR...

Loading IR to the plugin...

Reading IR...

Loading IR to the plugin...

To close the application, press 'CTRL+C' here or switch to the output window and press Esc or Q

Frame 15: Safe driving - 85.45% -- 3.22ms

Frame 16: Safe driving - 87.28% -- 6.82ms

:

:

Frame 145: Safe driving - 97.24% -- 27.94ms

Frame 146: Safe driving - 97.14% -- 27.96ms

Frame 147: Safe driving - 97.01% -- 27.97ms

finishing <action_recognition_demo.steps.RenderStep object at 0x7f3572d21910>

Data total: 1.24ms (+/-: 0.98) 805.59fps

Data own: 1.23ms (+/-: 0.98) 811.61fps

Encoder total: 4.14ms (+/-: 1.07) 241.51fps

Encoder own: 4.13ms (+/-: 1.07) 242.21fps

Decoder total: 22.66ms (+/-: 6.40) 44.13fps

Decoder own: 22.65ms (+/-: 6.40) 44.15fps

Render total: 43.39ms (+/-: 21.46) 23.04fps

Render own: 43.25ms (+/-: 21.02) 23.12fps

OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/action_recognition/

- Executable: python3 action_recognition.py

- Running the application with the -h option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/action_recognition$ python3 action_recognition.py -h

usage: action_recognition.py [-h] -i INPUT -m_en M_ENCODER [-m_de M_DECODER]

[-l CPU_EXTENSION] [-d DEVICE] [--fps FPS]

[-lb LABELS] [--no_show] [-s LABEL_SMOOTHING]

[--seq DECODER_SEQ_SIZE]

[-u UTILIZATION_MONITORS]

Options:

-h, --help Show this help message and exit.

-i INPUT, --input INPUT

Required. Id of the video capturing device to open (to

open default camera just pass 0), path to a video

-m_en M_ENCODER, --m_encoder M_ENCODER

Required. Path to encoder model

-m_de M_DECODER, --m_decoder M_DECODER

Optional. Path to decoder model. If not specified,

simple averaging of encoder's outputs over a time

window is applied

-l CPU_EXTENSION, --cpu_extension CPU_EXTENSION

Optional. For CPU custom layers, if any. Absolute path

to a shared library with the kernels implementation.

-d DEVICE, --device DEVICE

Optional. Specify a target device to infer on. CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will

look for a suitable plugin for the device specified.

Default value is CPU

--fps FPS Optional. FPS for renderer

-lb LABELS, --labels LABELS

Optional. Path to file with label names

--no_show Optional. Don't show output

--loop Optional. Run a video in cycle mode

-s LABEL_SMOOTHING, --smooth LABEL_SMOOTHING

Optional. Number of frames used for output label

smoothing

--seq DECODER_SEQ_SIZE

Optional. Length of sequence that decoder takes as

input

-u UTILIZATION_MONITORS, --utilization-monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.
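The -s/--smooth option sets how many frames are used to smooth the output label, and -lb supplies the label names (for example driver_actions.txt). The following minimal sketch shows one way to do this. It averages the class probabilities over the last N frames and reports the top class in the same "label - percent" form as the log lines. It is not the demo's implementation. LabelSmoother is a hypothetical name, and "Other action" is a placeholder label.

```python
from collections import deque
from typing import Deque, List, Tuple


class LabelSmoother:
    """Moving average of per-frame class probabilities (hypothetical helper)."""

    def __init__(self, labels: List[str], frames: int) -> None:
        self.labels = labels
        # Only the last `frames` probability vectors are kept
        self.history: Deque[List[float]] = deque(maxlen=frames)

    def update(self, probs: List[float]) -> Tuple[str, float]:
        """Add one frame's probabilities and return the smoothed top-1 label."""
        self.history.append(probs)
        n = len(self.history)
        avg = [sum(p[i] for p in self.history) / n for i in range(len(self.labels))]
        best = max(range(len(avg)), key=avg.__getitem__)
        return self.labels[best], avg[best]


if __name__ == "__main__":
    smoother = LabelSmoother(["Safe driving", "Other action"], frames=3)
    for frame_id, probs in enumerate([[0.9, 0.1], [0.2, 0.8], [0.8, 0.2]]):
        label, score = smoother.update(probs)
        print(f"Frame {frame_id}: {label} - {score:.2%}")
```

Smoothing keeps a single outlier frame (frame 1 above) from flipping the displayed label, at the cost of reacting more slowly to real changes.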

- Example run (Ubuntu, Core™ i5-10210U)

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/action_recognition$ python3 action_recognition.py -m_en ~/model/intel/FP32/driver-action-recognition-adas-0002-encoder.xml -m_de ~/model/intel/FP32/driver-action-recognition-adas-0002-decoder.xml -i ~/Videos/driver.mp4 -lb driver_actions.txt

Reading IR...

Loading IR to the plugin...

Reading IR...

Loading IR to the plugin...

To close the application, press 'CTRL+C' here or switch to the output window and press Esc or Q

Frame 15: Safe driving - 85.45% -- 3.25ms

Frame 16: Safe driving - 87.28% -- 7.06ms

:

:

Frame 131: Safe driving - 83.91% -- 28.68ms

Frame 132: Safe driving - 84.52% -- 28.70ms

finishing <action_recognition_demo.steps.RenderStep object at 0x7f99fe17fd30>

Data total: 0.87ms (+/-: 0.53) 1152.05fps

Data own: 0.86ms (+/-: 0.52) 1164.18fps

Encoder total: 4.25ms (+/-: 1.42) 235.36fps

Encoder own: 4.24ms (+/-: 1.42) 236.03fps

Decoder total: 22.85ms (+/-: 7.23) 43.76fps

Decoder own: 22.84ms (+/-: 7.23) 43.78fps

Render total: 37.44ms (+/-: 17.86) 26.71fps

Render own: 37.50ms (+/-: 17.46) 26.67fps

- Example run (Ubuntu, Core™ i7-6700)

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/action_recognition$ python3 action_recognition.py -m_en ~/model/intel/FP32/driver-action-recognition-adas-0002-encoder.xml -m_de ~/model/intel/FP32/driver-action-recognition-adas-0002-decoder.xml -i ~/Videos/driver.mp4 -lb driver_actions.txt

Reading IR...

Loading IR to the plugin...

Reading IR...

Loading IR to the plugin...

To close the application, press 'CTRL+C' here or switch to the output window and press Esc or Q

Frame 15: Safe driving - 84.34% -- 6.93ms

Frame 16: Safe driving - 89.18% -- 13.52ms

Frame 17: Safe driving - 90.36% -- 18.76ms

:

:

Frame 644: Safe driving - 99.50% -- 61.51ms

Frame 645: Safe driving - 99.42% -- 61.27ms

Frame 646: Safe driving - 99.41% -- 62.06ms

finishing <action_recognition_demo.steps.RenderStep object at 0x7f190620cd00>

Data total: 6.85ms (+/-: 3.98) 146.06fps

Data own: 6.83ms (+/-: 3.98) 146.51fps

Encoder total: 10.49ms (+/-: 3.95) 95.32fps

Encoder own: 10.48ms (+/-: 3.95) 95.44fps

Decoder total: 49.77ms (+/-: 9.86) 20.09fps

Decoder own: 49.76ms (+/-: 9.86) 20.10fps

Render total: 67.14ms (+/-: 48.16) 14.89fps

Render own: 41.76ms (+/-: 40.41) 23.94fps

- Example run (Ubuntu, Celeron® J4005)

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/action_recognition$ python3 action_recognition.py -m_en ~/model/intel/FP32/driver-action-recognition-adas-0002-encoder.xml -m_de ~/model/intel/FP32/driver-action-recognition-adas-0002-decoder.xml -i ~/Videos/driver.mp4 -lb driver_actions.txt

Reading IR...

Loading IR to the plugin...

Reading IR...

Loading IR to the plugin...

To close the application, press 'CTRL+C' here or switch to the output window and press Esc or Q

Frame 15: Safe driving - 84.34% -- 69.39ms

Frame 16: Safe driving - 89.18% -- 122.10ms

Frame 17: Safe driving - 90.36% -- 170.89ms

Frame 18: Safe driving - 89.54% -- 220.03ms

Frame 19: Safe driving - 86.94% -- 269.36ms

Frame 20: Safe driving - 86.38% -- 319.19ms

Frame 21: Safe driving - 84.50% -- 366.72ms

Frame 22: Safe driving - 84.57% -- 416.71ms

Frame 23: Safe driving - 88.56% -- 464.34ms

Frame 24: Safe driving - 92.15% -- 512.19ms

Frame 25: Safe driving - 91.29% -- 559.57ms

Frame 26: Safe driving - 92.38% -- 604.83ms

Frame 27: Safe driving - 92.69% -- 649.21ms

Frame 28: Safe driving - 92.93% -- 693.65ms

Frame 29: Safe driving - 92.55% -- 739.03ms

Frame 30: Safe driving - 93.86% -- 785.94ms

Frame 31: Safe driving - 91.99% -- 833.31ms

finishing <action_recognition_demo.steps.RenderStep object at 0x7ff8893afe50>

Data total: 10.07ms (+/-: 6.88) 99.27fps

Data own: 10.04ms (+/-: 6.88) 99.57fps

Encoder total: 90.65ms (+/-: 22.25) 11.03fps

Encoder own: 90.63ms (+/-: 22.25) 11.03fps

Decoder total: 399.59ms (+/-: 367.48) 2.50fps

Decoder own: 399.57ms (+/-: 367.47) 2.50fps

Render total: 493.77ms (+/-: 481.36) 2.03fps

Render own: 260.85ms (+/-: 387.20) 3.83fps

- Example run (Ubuntu, Celeron® J4005 + NCS2)

Command changes: /FP32 → /FP16, add -d MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/action_recognition$ python3 action_recognition.py -m_en ~/model/intel/FP16/driver-action-recognition-adas-0002-encoder.xml -m_de ~/model/intel/FP16/driver-action-recognition-adas-0002-decoder.xml -i ~/Videos/driver.mp4 -d MYRIAD -lb driver_actions.txt

Reading IR...

Loading IR to the plugin...

[Warning][VPU][Config] Deprecated option was used : VPU_HW_STAGES_OPTIMIZATION

Reading IR...

Loading IR to the plugin...

To close the application, press 'CTRL+C' here or switch to the output window and press Esc or Q

Frame 15: Safe driving - 83.66% -- 2.86ms

Frame 16: Safe driving - 88.97% -- 4.09ms

Frame 17: Safe driving - 90.36% -- 5.07ms

Frame 18: Safe driving - 89.83% -- 6.42ms

:

:

Frame 396: Safe driving - 77.50% -- 27.69ms

Frame 397: Safe driving - 77.05% -- 28.23ms

Frame 398: Safe driving - 76.66% -- 28.74ms

Frame 399: Safe driving - 74.81% -- 28.36ms

Frame 400: Safe driving - 73.49% -- 28.21ms

Frame 401: Safe driving - 73.83% -- 28.39ms

finishing <action_recognition_demo.steps.RenderStep object at 0x7f838fda1070>

Data total: 11.85ms (+/-: 8.35) 84.38fps

Data own: 11.75ms (+/-: 8.34) 85.11fps

Encoder total: 5.69ms (+/-: 4.58) 175.60fps

Encoder own: 5.64ms (+/-: 4.57) 177.24fps

Decoder total: 18.95ms (+/-: 9.61) 52.78fps

Decoder own: 18.92ms (+/-: 9.61) 52.84fps

Render total: 37.54ms (+/-: 25.96) 26.64fps

Render own: 36.83ms (+/-: 24.76) 27.15fps

Speed comparison

| Item | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
| Data total (fps) | 1152 | 146 | 99 | 84 | 642 |
| Data total (ms) | 0.87 | 6.9 | 10 | 12 | 1.56 |
| Encoder total (fps) | 235 | 95 | 11 | 175 | 21.4 |
| Encoder total (ms) | 4.3 | 10.5 | 91 | 5.7 | 46.8 |
| Decoder total (fps) | 44 | 20 | 2.5 | 53 | 7.9 |
| Decoder total (ms) | 23 | 50 | 400 | 19 | 126 |
| Render total (fps) | 27 | 15 | 2.0 | 26.6 | 4.66 |
| Render total (ms) | 37 | 67 | 494 | 38 | 215 |
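Each stage's fps is 1000 divided by its average time in ms, so the table can be cross-checked with a few lines of arithmetic. The sketch below uses the Core™ i5-10210U "total" values from the 2021.2 log. The computed fps agree with the logged values to within rounding. It also picks out the slowest stage, assuming the stages behave like a pipeline whose throughput is bounded by its slowest step. That assumption is a simplification, not a statement about how the demo schedules its steps.

```python
# Average per-stage times in ms (Core i5-10210U "total" values from the 2021.2 log)
stage_ms = {"Data": 0.87, "Encoder": 4.25, "Decoder": 22.85, "Render": 37.44}


def to_fps(ms: float) -> float:
    """Convert an average per-item time in milliseconds to items per second."""
    return 1000.0 / ms


for name, ms in stage_ms.items():
    print(f"{name:8s} {ms:7.2f} ms -> {to_fps(ms):8.2f} fps")

# Simplified pipeline model: the slowest stage bounds the overall frame rate
bottleneck = max(stage_ms, key=stage_ms.get)
print("bottleneck:", bottleneck, f"(~{to_fps(stage_ms[bottleneck]):.1f} fps)")
```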

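This demo showcases object detection with the synchronous and asynchronous APIs.

Using the asynchronous API can improve the overall frame rate of the application. Instead of waiting for inference to complete, the app can keep working on the host while the accelerator is busy. Specifically, this demo keeps a number of Infer Requests, set with a flag. While some of the Infer Requests are being processed by the Inference Engine (IE), the others can be filled with new frame data and started asynchronously, or the next output can be taken from an Infer Request and displayed.

This technique can be generalized to any available parallel slack. For example, the app can run inference while simultaneously encoding the resulting (previous) frames, or run further inference, such as emotion detection on top of face detection results. There are important performance caveats, though: tasks that run in parallel should avoid oversubscribing shared compute resources. For example, if inference runs on an FPGA and the CPU is essentially idle, it makes sense to do work on the CPU in parallel. But if inference runs on the GPU, there is little gain in encoding the resulting video on the same GPU in parallel, because the device is already busy. (machine translation)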
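To make the infer-request idea concrete without depending on a particular OpenVINO API version, the following sketch simulates it with Python's standard concurrent.futures. A fixed number of "requests" (worker slots) run a stand-in inference function while the main loop keeps submitting new frames. Results are consumed in submission order. fake_infer, its sleep time and NUM_REQUESTS are hypothetical placeholders. A real application would use the Inference Engine's own asynchronous infer requests instead.

```python
import time
from collections import deque
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Deque, List

NUM_REQUESTS = 4  # hypothetical stand-in for the demo's number of infer requests


def fake_infer(frame_id: int) -> str:
    """Hypothetical placeholder for inference on an accelerator."""
    time.sleep(0.01)  # simulated device time
    return f"result for frame {frame_id}"


def run(num_frames: int, num_requests: int = NUM_REQUESTS) -> List[str]:
    results: List[str] = []
    in_flight: Deque[Future] = deque()
    with ThreadPoolExecutor(max_workers=num_requests) as pool:
        for frame_id in range(num_frames):
            # All requests busy: wait for the oldest one and "display" its output
            if len(in_flight) == num_requests:
                results.append(in_flight.popleft().result())
            # Fill a free request with new frame data and start it asynchronously
            in_flight.append(pool.submit(fake_infer, frame_id))
        # Drain the requests that are still running
        while in_flight:
            results.append(in_flight.popleft().result())
    return results


if __name__ == "__main__":
    start = time.perf_counter()
    out = run(20)
    print(out[0], "...", out[-1], f"({time.perf_counter() - start:.2f} s)")
```

With num_requests=1, each frame waits for the previous one to finish. With more requests, several simulated inferences overlap, which is where the throughput gain comes from.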

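Pre-trained models used

How the demo works

- At startup, the application reads the command-line parameters and loads the network to the Inference Engine.

- When it gets a frame from OpenCV VideoCapture, it performs inference and displays the results.

- The asynchronous API operates with the notion of an "Infer Request", which encapsulates the inputs/outputs and separates scheduling from waiting for the result.

Running the demo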

OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/object_detection_demo/python/

- Executable: python3 object_detection_demo.py

- Running the application with the -h option displays the following usage message.

mizutu@ubuntu-nuc10:/opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/object_detection_demo/python$ python3 object_detection_demo.py -h

usage: object_detection_demo.py [-h] -m MODEL -at

{ssd,yolo,yolov4,faceboxes,centernet,ctpn,retinaface}

-i INPUT [-d DEVICE] [--labels LABELS]

[-t PROB_THRESHOLD] [--keep_aspect_ratio]

[--input_size INPUT_SIZE INPUT_SIZE]

[-nireq NUM_INFER_REQUESTS]

[-nstreams NUM_STREAMS]

[-nthreads NUM_THREADS] [--loop] [-o OUTPUT]

[-limit OUTPUT_LIMIT] [--no_show]

[-u UTILIZATION_MONITORS] [-r]

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Required. Path to an .xml file with a trained model.

-at {ssd,yolo,yolov4,faceboxes,centernet,ctpn,retinaface}, --architecture_type {ssd,yolo,yolov4,faceboxes,centernet,ctpn,retinaface}

Required. Specify model' architecture type.

-i INPUT, --input INPUT

Required. An input to process. The input must be a

single image, a folder of images, video file or camera

id.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The sample

will look for a suitable plugin for device specified.

Default value is CPU.

Common model options:

--labels LABELS Optional. Labels mapping file.

-t PROB_THRESHOLD, --prob_threshold PROB_THRESHOLD

Optional. Probability threshold for detections

filtering.

--keep_aspect_ratio Optional. Keeps aspect ratio on resize.

--input_size INPUT_SIZE INPUT_SIZE

Optional. The first image size used for CTPN model

reshaping. Default: 600 600. Note that submitted

images should have the same resolution, otherwise

predictions might be incorrect.

Inference options:

-nireq NUM_INFER_REQUESTS, --num_infer_requests NUM_INFER_REQUESTS

Optional. Number of infer requests

-nstreams NUM_STREAMS, --num_streams NUM_STREAMS

Optional. Number of streams to use for inference on

the CPU or/and GPU in throughput mode (for HETERO and

MULTI device cases use format

<device1>:<nstreams1>,<device2>:<nstreams2> or just

<nstreams>).

-nthreads NUM_THREADS, --num_threads NUM_THREADS

Optional. Number of threads to use for inference on

CPU (including HETERO cases).

Input/output options:

--loop Optional. Enable reading the input in a loop.

-o OUTPUT, --output OUTPUT

Optional. Name of output to save.

-limit OUTPUT_LIMIT, --output_limit OUTPUT_LIMIT

Optional. Number of frames to store in output. If 0 is

set, all frames are stored.

--no_show Optional. Don't show output.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.

Debug options:

-r, --raw_output_message

Optional. Output inference results raw values showing.
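The -t/--prob_threshold option filters detections by confidence, and --labels maps class ids to names. The sketch below shows that post-processing step on plain Python data. The Detection type, the function name, the 0.5 threshold and the label names are assumptions for illustration. They are not the demo's own data structures.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Detection:
    """Hypothetical detection record."""
    class_id: int
    score: float
    box: Tuple[int, int, int, int]  # (xmin, ymin, xmax, ymax) in pixels


def filter_detections(dets: Sequence[Detection], threshold: float,
                      labels: Sequence[str]) -> List[str]:
    """Keep detections with score >= threshold and format them with label names."""
    kept = []
    for d in dets:
        if d.score >= threshold:
            # Fall back to the numeric id if the labels file has no entry for it
            name = labels[d.class_id] if d.class_id < len(labels) else f"#{d.class_id}"
            kept.append(f"{name} {d.score:.2f} {d.box}")
    return kept


if __name__ == "__main__":
    dets = [Detection(0, 0.9, (1, 2, 3, 4)), Detection(1, 0.3, (0, 0, 1, 1))]
    print(filter_detections(dets, 0.5, ["person", "vehicle", "bike"]))
```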

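- To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- The number of Infer Requests is specified with the -nireq flag. Increasing it usually increases performance (throughput), because multiple Infer Requests can be processed simultaneously if the device supports parallelization. However, a large number of Infer Requests increases latency, because each frame still has to wait before being sent for inference.

- For higher FPS, set -nireq to a value slightly greater than the -nstreams value summed over all devices used.

- Example run (Ubuntu, Core™ i5-10210U)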

$ python3 object_detection_demo.py -i ~/Videos/car_person.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-yolov3-1020.xml -at yolo

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to CPU plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 213.8 ms

FPS: 4.6
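The -nstreams option takes either a single number or a per-device list in the form &lt;device1&gt;:&lt;nstreams1&gt;,&lt;device2&gt;:&lt;nstreams2&gt;, and the recommendation is to set -nireq slightly above the total number of streams. The following sketch shows that arithmetic. The function names are hypothetical. The margin of 1 is an assumption, because "slightly" is not quantified. Applying a bare number to every listed device is also an assumption of this sketch.

```python
from typing import Dict


def parse_nstreams(value: str, devices: str = "CPU") -> Dict[str, int]:
    """Parse an -nstreams value: either '<n>' or '<dev1>:<n1>,<dev2>:<n2>'.

    Hypothetical helper. A bare number is applied to every device in
    `devices` (comma-separated), which is an assumption of this sketch.
    """
    value = value.strip()
    if ":" not in value:
        n = int(value)
        return {dev.strip(): n for dev in devices.split(",")}
    streams: Dict[str, int] = {}
    for item in value.split(","):
        dev, n = item.split(":")
        streams[dev.strip()] = int(n)
    return streams


def suggest_nireq(streams: Dict[str, int], margin: int = 1) -> int:
    """Suggest a number of infer requests slightly above the total stream count."""
    return sum(streams.values()) + margin


if __name__ == "__main__":
    streams = parse_nstreams("CPU:4,GPU:2")
    print(streams, "-> -nireq", suggest_nireq(streams))  # {'CPU': 4, 'GPU': 2} -> -nireq 7
```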

OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/object_detection_demo/

- Executable: python3 object_detection_demo.py

- Running the application with the -h option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/object_detection_demo$ python3 object_detection_demo.py -h

usage: object_detection_demo.py [-h] -m MODEL -at

{ssd,yolo,faceboxes,centernet,retina} -i INPUT

[-d DEVICE] [--labels LABELS]

[-t PROB_THRESHOLD] [--keep_aspect_ratio]

[-nireq NUM_INFER_REQUESTS]

[-nstreams NUM_STREAMS]

[-nthreads NUM_THREADS] [-loop] [-no_show]

[-u UTILIZATION_MONITORS] [-r]

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Required. Path to an .xml file with a trained model.

-at {ssd,yolo,faceboxes,centernet,retina}, --architecture_type {ssd,yolo,faceboxes,centernet,retina}

Required. Specify model' architecture type.

-i INPUT, --input INPUT

Required. Path to an image, folder with images, video

file or a numeric camera ID.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The sample

will look for a suitable plugin for device specified.

Default value is CPU.

Common model options:

--labels LABELS Optional. Labels mapping file.

-t PROB_THRESHOLD, --prob_threshold PROB_THRESHOLD

Optional. Probability threshold for detections

filtering.

--keep_aspect_ratio Optional. Keeps aspect ratio on resize.

Inference options:

-nireq NUM_INFER_REQUESTS, --num_infer_requests NUM_INFER_REQUESTS

Optional. Number of infer requests

-nstreams NUM_STREAMS, --num_streams NUM_STREAMS

Optional. Number of streams to use for inference on

the CPU or/and GPU in throughput mode (for HETERO and

MULTI device cases use format

<device1>:<nstreams1>,<device2>:<nstreams2> or just

<nstreams>).

-nthreads NUM_THREADS, --num_threads NUM_THREADS

Optional. Number of threads to use for inference on

CPU (including HETERO cases).

Input/output options:

-loop, --loop Optional. Loops input data.

-no_show, --no_show Optional. Don't show output.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.

Debug options:

-r, --raw_output_message

Optional. Output inference results raw values showing.
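--keep_aspect_ratio keeps the aspect ratio when a frame is resized to the network input. The following minimal sketch shows the size arithmetic. The frame is scaled by the smaller of the two ratios so that it fits inside the network input, and the leftover area is padding. The function name, the choice to pad at the right and bottom, and the 416x416 input size in the example are assumptions. The demo's own resize code may differ.

```python
from typing import Tuple


def fit_keep_aspect(src_w: int, src_h: int,
                    dst_w: int, dst_h: int) -> Tuple[int, int, int, int]:
    """Return (new_w, new_h, pad_w, pad_h) for an aspect-preserving resize.

    Hypothetical helper: the image is scaled by the smaller ratio so it fits
    inside dst_w x dst_h; pad_w/pad_h are the leftover pixels to fill
    (assumed here to go on the right and bottom).
    """
    scale = min(dst_w / src_w, dst_h / src_h)
    new_w, new_h = round(src_w * scale), round(src_h * scale)
    return new_w, new_h, dst_w - new_w, dst_h - new_h


if __name__ == "__main__":
    # A 1280x720 frame into a hypothetical 416x416 network input
    print(fit_keep_aspect(1280, 720, 416, 416))  # (416, 234, 0, 182)
```

Without the option, the frame would simply be stretched to the input size, which distorts object proportions for non-square inputs.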

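- To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- The number of Infer Requests is specified with the -nireq flag. Increasing it usually increases performance (throughput), because multiple Infer Requests can be processed simultaneously if the device supports parallelization. However, a large number of Infer Requests increases latency, because each frame still has to wait before being sent for inference.

- For higher FPS, set -nireq to a value slightly greater than the -nstreams value summed over all devices used.

- Example run (Ubuntu, Core™ i5-10210U)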

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/object_detection_demo$ python3 object_detection_demo.py -i ~/Videos/car_person.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-yolov3-1020.xml -at yolo

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to CPU plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 219.2 ms

FPS: 4.5

- Example run (Ubuntu, Core™ i7-6700)

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/object_detection_demo$ python3 object_detection_demo.py -i ~/Videos/car_person.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-yolov3-1020.xml -at yolo

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to CPU plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 417.8 ms

FPS: 2.4

- Example run (Ubuntu, Celeron® J4005)

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/object_detection_demo$ python3 object_detection_demo.py -i ~/Videos/car_person.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-yolov3-1020.xml -at yolo

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to CPU plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 6501.6 ms

FPS: 0.2

- Example run (Ubuntu, Celeron® J4005 + NCS2)

Command changes: /FP32 → /FP16, add -d MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/object_detection_demo$ python3 object_detection_demo.py -i ~/Videos/car_person.mp4 -m ~/model/intel/FP16/person-vehicle-bike-detection-crossroad-yolov3-1020.xml -at yolo -d MYRIAD

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to MYRIAD plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 518.5 ms

FPS: 1.9

Speed comparison

| Item | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |

| Latency(ms) | 219.2 | 431 | 6575 | 1515 | 2623 |

| fps | 4.5 | 2.5 | 0.2 | 1.9 | 0.4 |

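This demo showcases a multi-person 2D pose estimation algorithm. For every person in an input image or video, the task is to predict a pose: a body skeleton made up of a predefined set of keypoints and the connections between them.

The demo application supports inference in both synchronous and asynchronous modes.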

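- Video input support via OpenCV*

- Visualization of the resulting poses

- Demonstration of the Async API in action. For this, the demo has two modes, toggled with the Tab key:

- "User specified" mode, where you can set the number of Infer Requests, throughput streams, and threads. Inference, starting new requests, and displaying the results of completed requests are all performed asynchronously. The purpose of this mode is to get higher FPS by fully utilizing all available devices.

- "Min latency" mode, which uses only one Infer Request. The purpose of this mode is to get the lowest latency.

(machine translation)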
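The two modes toggled by the Tab key differ mainly in how many Infer Requests are kept in flight. The following sketch models only that switch. The names Mode, toggle and requests_for are hypothetical. The user value of 4 in the example is chosen only for illustration.

```python
from enum import Enum


class Mode(Enum):
    """Hypothetical model of the demo's two execution modes."""
    USER_SPECIFIED = "user specified"
    MIN_LATENCY = "min latency"


def toggle(mode: Mode) -> Mode:
    """Switch to the other mode, as the Tab key does in the demo."""
    return Mode.MIN_LATENCY if mode is Mode.USER_SPECIFIED else Mode.USER_SPECIFIED


def requests_for(mode: Mode, user_nireq: int) -> int:
    """Number of infer requests to keep in flight for the given mode."""
    # "Min latency" uses a single request; "user specified" uses the -nireq value
    return 1 if mode is Mode.MIN_LATENCY else user_nireq


if __name__ == "__main__":
    mode = Mode.USER_SPECIFIED
    for _ in range(3):
        print(mode.name, requests_for(mode, user_nireq=4))
        mode = toggle(mode)
```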

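Pre-trained models used

How the demo works

- At startup, the application reads the command-line parameters and loads the network to the Inference Engine.

- When it gets a frame from OpenCV VideoCapture, it performs inference and displays the results.

Running the demo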

OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/human_pose_estimation_demo/python/

- Executable: python3 human_pose_estimation_demo.py

- Running the application with the -h option displays the following usage message.

$ python3 human_pose_estimation_demo.py -h

usage: human_pose_estimation_demo.py [-h] -m MODEL -at {ae,openpose} -i INPUT

[--loop] [-o OUTPUT]

[-limit OUTPUT_LIMIT] [-d DEVICE]

[-t PROB_THRESHOLD] [--tsize TSIZE]

[-nireq NUM_INFER_REQUESTS]

[-nstreams NUM_STREAMS]

[-nthreads NUM_THREADS] [-no_show]

[-u UTILIZATION_MONITORS] [-r]

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Required. Path to an .xml file with a trained model.

-at {ae,openpose}, --architecture_type {ae,openpose}

Required. Specify model' architecture type.

-i INPUT, --input INPUT

Required. An input to process. The input must be a

single image, a folder of images, video file or camera

id.

--loop Optional. Enable reading the input in a loop.

-o OUTPUT, --output OUTPUT

Optional. Name of output to save.

-limit OUTPUT_LIMIT, --output_limit OUTPUT_LIMIT

Optional. Number of frames to store in output. If 0 is

set, all frames are stored.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The sample

will look for a suitable plugin for device specified.

Default value is CPU.

Common model options:

-t PROB_THRESHOLD, --prob_threshold PROB_THRESHOLD

Optional. Probability threshold for poses filtering.

--tsize TSIZE Optional. Target input size. This demo implements

image pre-processing pipeline that is common to human

pose estimation approaches. Image is first resized to

some target size and then the network is reshaped to

fit the input image shape. By default target image

size is determined based on the input shape from IR.

Alternatively it can be manually set via this

parameter. Note that for OpenPose-like nets image is

resized to a predefined height, which is the target

size in this case. For Associative Embedding-like nets

target size is the length of a short first image side.

Inference options:

-nireq NUM_INFER_REQUESTS, --num_infer_requests NUM_INFER_REQUESTS

Optional. Number of infer requests

-nstreams NUM_STREAMS, --num_streams NUM_STREAMS

Optional. Number of streams to use for inference on

the CPU or/and GPU in throughput mode (for HETERO and

MULTI device cases use format

<device1>:<nstreams1>,<device2>:<nstreams2> or just

<nstreams>).

-nthreads NUM_THREADS, --num_threads NUM_THREADS

Optional. Number of threads to use for inference on

CPU (including HETERO cases).

Input/output options:

-no_show, --no_show Optional. Don't show output.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.

Debug options:

-r, --raw_output_message

Optional. Output inference results raw values showing.
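The --tsize description explains how the network input is derived from the frame size. For OpenPose-like nets, the height is fixed to the target size. The example run for this version reshapes the network to [1, 3, 256, 456]. The sketch below reproduces that number under two assumptions: the input video is 1280x720, and the scaled width is rounded up to a multiple of a stride of 8. The function name is hypothetical, and this is not the demo's own code.

```python
import math
from typing import Tuple


def openpose_input_shape(frame_h: int, frame_w: int, target_h: int = 256,
                         stride: int = 8) -> Tuple[int, int, int, int]:
    """Return an NCHW input shape for an OpenPose-like net (hypothetical helper).

    The frame is scaled so that its height equals target_h; the scaled width
    is rounded up to a multiple of `stride` (an assumption of this sketch).
    """
    scale = target_h / frame_h
    width = math.ceil(frame_w * scale / stride) * stride
    return (1, 3, target_h, width)


if __name__ == "__main__":
    print(openpose_input_shape(720, 1280))  # (1, 3, 256, 456)
```

For Associative Embedding-like nets, the help text says the target size is the length of the short image side, so the shorter of frame_h and frame_w would take the role of the fixed dimension.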

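To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- Demo output

The demo uses OpenCV to display the resulting frames with the estimated poses.

The demo reports:

- FPS: average rate of video frame processing (frames per second).

- Latency: average time required to process one frame (from reading the frame to displaying the results). You can use these metrics to measure application-level performance.

- Example run (Ubuntu, Core™ i5-10210U)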

$ python3 human_pose_estimation_demo.py -i ~/Videos/driver.mp4 -m ~/model/intel/FP32/human-pose-estimation-0001.xml -at openpose

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Reading network from IR...

[ INFO ] Reshape net to {'data': [1, 3, 256, 456]}

[ INFO ] Loading network to CPU plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

Latency: 43.5 ms

FPS: 21.7
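Both reported metrics can be computed from per-frame timestamps. The following minimal sketch assumes that, for each frame, you record the time it was read and the time its result was shown. The class name Metrics is hypothetical, and the demo's own performance counter may average differently.

```python
from typing import List, Tuple


class Metrics:
    """Average latency and FPS from (read_time, shown_time) pairs in seconds."""

    def __init__(self) -> None:
        self.samples: List[Tuple[float, float]] = []

    def record(self, read_time: float, shown_time: float) -> None:
        """Store when a frame was read and when its result was displayed."""
        self.samples.append((read_time, shown_time))

    def latency_ms(self) -> float:
        """Mean time from reading a frame to displaying its result, in ms."""
        return 1000.0 * sum(s - r for r, s in self.samples) / len(self.samples)

    def fps(self) -> float:
        """Frames shown per second, from the first read to the last display."""
        elapsed = self.samples[-1][1] - self.samples[0][0]
        return len(self.samples) / elapsed


if __name__ == "__main__":
    m = Metrics()
    for i in range(10):
        m.record(i * 0.05, i * 0.05 + 0.04)  # frames every 50 ms, 40 ms latency
    print(f"Latency: {m.latency_ms():.1f} ms  FPS: {m.fps():.1f}")
```

With a single request in flight, FPS stays close to 1000 / latency. When several requests overlap, FPS can exceed that value.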

OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/human_pose_estimation_demo/

- Executable: python3 human_pose_estimation.py

- Running the application with the -h option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/human_pose_estimation_demo$ python3 human_pose_estimation.py -h

usage: human_pose_estimation.py [-h] -i INPUT -m MODEL -at {ae,openpose}

[--tsize TSIZE] [-t PROB_THRESHOLD] [-r]

[-d DEVICE] [-nireq NUM_INFER_REQUESTS]

[-nstreams NUM_STREAMS]

[-nthreads NUM_THREADS] [-loop LOOP]

[-no_show] [-u UTILIZATION_MONITORS]

Options:

-h, --help Show this help message and exit.

-i INPUT, --input INPUT

Required. Path to an image, video file or a numeric

camera ID.

-m MODEL, --model MODEL

Required. Path to an .xml file with a trained model.

-at {ae,openpose}, --architecture_type {ae,openpose}

Required. Type of the network, either "ae" for

Associative Embedding or "openpose" for OpenPose.

--tsize TSIZE Optional. Target input size. This demo implements

image pre-processing pipeline that is common to human

pose estimation approaches. Image is resize first to

some target size and then the network is reshaped to

fit the input image shape. By default target image

size is determined based on the input shape from IR.

Alternatively it can be manually set via this

parameter. Note that for OpenPose-like nets image is

resized to a predefined height, which is the target

size in this case. For Associative Embedding-like nets

target size is the length of a short image side.

-t PROB_THRESHOLD, --prob_threshold PROB_THRESHOLD

Optional. Probability threshold for poses filtering.

-r, --raw_output_message

Optional. Output inference results raw values showing.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The sample

will look for a suitable plugin for device specified.

Default value is CPU.

-nireq NUM_INFER_REQUESTS, --num_infer_requests NUM_INFER_REQUESTS

Optional. Number of infer requests

-nstreams NUM_STREAMS, --num_streams NUM_STREAMS

Optional. Number of streams to use for inference on

the CPU or/and GPU in throughput mode (for HETERO and

MULTI device cases use format

<device1>:<nstreams1>,<device2>:<nstreams2> or just

<nstreams>)

-nthreads NUM_THREADS, --num_threads NUM_THREADS

Optional. Number of threads to use for inference on

CPU (including HETERO cases)

-loop LOOP, --loop LOOP

Optional. Number of times to repeat the input.

-no_show, --no_show Optional. Don't show output

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.
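The --tsize description above can be made concrete with a small calculation. The sketch below is a hypothetical re-implementation, not the demo's own code: it assumes the resized height and width are rounded up to a multiple of a stride (`size_divisor`, assumed to be 8 for OpenPose-like nets and 32 for Associative Embedding-like nets) and that the input video driver.mp4 is 1280x720. Under those assumptions it reproduces the `Reshape net to {'data': [1, 3, 256, 456]}` line printed by the 2021.3 run.

```python
def round_up(value: int, divisor: int) -> int:
    """Round value up to the nearest multiple of divisor."""
    return (value + divisor - 1) // divisor * divisor


def openpose_input_hw(target_size: int, frame_w: int, frame_h: int, size_divisor: int = 8):
    """OpenPose-like nets: resize to a fixed height (target_size);
    the width follows the frame's aspect ratio."""
    aspect = frame_w / frame_h
    h = round_up(target_size, size_divisor)
    w = round_up(round(target_size * aspect), size_divisor)
    return h, w


def ae_input_hw(target_size: int, frame_w: int, frame_h: int, size_divisor: int = 32):
    """Associative Embedding-like nets: target_size is the length of the short side."""
    aspect = frame_w / frame_h
    if aspect >= 1.0:  # landscape: the height is the short side
        h, w = target_size, round(target_size * aspect)
    else:              # portrait: the width is the short side
        h, w = round(target_size / aspect), target_size
    return round_up(h, size_divisor), round_up(w, size_divisor)


if __name__ == "__main__":
    # Assumed 1280x720 input and a default target height of 256 taken from the IR.
    print(openpose_input_hw(256, 1280, 720))  # (256, 456)
```

The rounding step is why the width ends up as 456 rather than the exact 455.1 implied by the aspect ratio.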

To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- Demo output

The demo uses OpenCV to display the resulting frames with the estimated poses.

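The demo reports:

- FPS: average rate of video frame processing (frames per second)

- Latency: average time required to process one frame (from reading the frame to displaying the results)

You can use these metrics to measure application-level performance.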
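As a rough illustration of how the two metrics differ, the hypothetical helper below (not part of the demo) records a start and an end timestamp per frame: latency is the mean per-frame time, while FPS is the number of frames divided by the total wall-clock time. The two need not be reciprocals of each other; for example, the logs below report FPS 17.3 with a latency of 44.1 ms, whereas 1000 / 44.1 would give about 22.7.

```python
import time
from typing import List, Optional


class PerfMetrics:
    """Hypothetical collector of per-frame timestamps reporting average FPS and latency."""

    def __init__(self) -> None:
        self.latencies: List[float] = []      # seconds spent on each frame
        self.first_start: Optional[float] = None
        self.last_end: Optional[float] = None

    def update(self, start: float, end: float) -> None:
        """Record one frame: start = frame read, end = result displayed."""
        if self.first_start is None:
            self.first_start = start
        self.last_end = end
        self.latencies.append(end - start)

    def fps(self) -> float:
        """Frames processed divided by the total elapsed wall-clock time."""
        if self.first_start is None or self.last_end is None:
            return 0.0
        elapsed = self.last_end - self.first_start
        return len(self.latencies) / elapsed if elapsed > 0 else 0.0

    def latency_ms(self) -> float:
        """Mean per-frame processing time in milliseconds."""
        if not self.latencies:
            return 0.0
        return 1000.0 * sum(self.latencies) / len(self.latencies)


if __name__ == "__main__":
    metrics = PerfMetrics()
    for _ in range(5):
        t0 = time.perf_counter()
        time.sleep(0.01)  # stand-in for read + inference + display
        metrics.update(t0, time.perf_counter())
    print(f"FPS: {metrics.fps():.1f}  Latency: {metrics.latency_ms():.1f} ms")
```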

- Example run "Ubuntu Core™ i5-10210U"

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/human_pose_estimation_demo$ python3 human_pose_estimation.py -i ~/Videos/driver.mp4 -m ~/model/intel/FP32/human-pose-estimation-0001.xml -at openpose -d CPU

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Using USER_SPECIFIED mode

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

To switch between min_latency/user_specified modes, press TAB key in the output window

[ INFO ] Using MIN_LATENCY mode

[ INFO ]

[ INFO ] Mode: USER_SPECIFIED

[ INFO ] FPS: 17.3

[ INFO ] Latency: 44.1 ms

[ INFO ]

[ INFO ] Mode: MIN_LATENCY

[ INFO ] FPS: 19.9

[ INFO ] Latency: 48.1 ms

- Example run "Ubuntu Core™ i7-6700"

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/human_pose_estimation_demo$ python3 human_pose_estimation.py -i ~/Videos/driver.mp4 -m ~/model/intel/FP32/human-pose-estimation-0001.xml -at openpose -d CPU

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Using USER_SPECIFIED mode

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

To switch between min_latency/user_specified modes, press TAB key in the output window

[ INFO ]

[ INFO ] Mode: USER_SPECIFIED

[ INFO ] FPS: 9.4

[ INFO ] Latency: 99.3 ms

- Example run "Ubuntu Celeron® J4005"

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/human_pose_estimation_demo$ python3 human_pose_estimation.py -i ~/Videos/driver.mp4 -m ~/model/intel/FP32/human-pose-estimation-0001.xml -at openpose -d CPU

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Using USER_SPECIFIED mode

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

To switch between min_latency/user_specified modes, press TAB key in the output window

[ INFO ]

[ INFO ] Mode: USER_SPECIFIED

[ INFO ] FPS: 1.3

[ INFO ] Latency: 719.9 ms

- Example run "Ubuntu Celeron® J4005 + NCS2"

Command changes: /FP32 → /FP16, -d MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/human_pose_estimation_demo$ python3 human_pose_estimation.py -i ~/Videos/driver.mp4 -m ~/model/intel/FP16/human-pose-estimation-0001.xml -at openpose -d MYRIAD

[ INFO ] Initializing Inference Engine...

[ INFO ] Loading network...

[ INFO ] Using USER_SPECIFIED mode

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Reading network from IR...

[ INFO ] Loading network to plugin...

[ INFO ] Starting inference...

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

To switch between min_latency/user_specified modes, press TAB key in the output window

[ INFO ]

[ INFO ] Mode: USER_SPECIFIED

[ INFO ] FPS: 2.0

[ INFO ] Latency: 237.1 ms

Speed comparison

| Mode | Metric | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
| USER_SPECIFIED mode | FPS | 17.3 | 9.2 | 1.2 | 0.5 | 1.9 |
| USER_SPECIFIED mode | Latency (ms) | 44.1 | 104 | 714 | 236 | 534 |
| MIN_LATENCY mode | FPS | 19.9 | 9.5 | 1.2 | 3.9 | 1.7 |
| MIN_LATENCY mode | Latency (ms) | 48.1 | 101 | 701 | 236.5 | 563 |

This demo shows how to run a gesture recognition model (e.g. American Sign Language (ASL) gestures) using the OpenVINO™ toolkit. (machine translation)

Pre-trained models used

How the demo works

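- The demo application reads video frames one by one, runs a person detector that extracts ROIs, and tracks the ROI of the very first person. An additional process is used to prepare a batch of frames with a constant frame rate.

- The batch of frames and the extracted ROI are passed to an artificial neural network that predicts the gesture.

- The application visualizes its results as a graphical window showing the following objects:

  - Input frame with the detected ROI

  - Last recognized gesture

  - Performance characteristics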
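The idea of feeding the network a batch of frames at a constant frame rate can be sketched as below. This is a simplified, single-process illustration built on assumptions: the class name, the 16-frame clip length and the 15 fps target rate are all hypothetical, whereas the demo itself uses a separate process for this step. Frames that arrive faster than the target rate are dropped, so the clip always covers a fixed time window.

```python
from collections import deque
from typing import Any, List, Optional


class ConstantRateClip:
    """Hypothetical buffer keeping the last `clip_len` frames, sampled at `target_fps`."""

    def __init__(self, clip_len: int = 16, target_fps: float = 15.0) -> None:
        self.frames: deque = deque(maxlen=clip_len)  # oldest frames fall out automatically
        self.period = 1.0 / target_fps
        self.next_t: Optional[float] = None
        self.eps = 1e-6  # tolerance for floating-point timestamps

    def push(self, t: float, frame: Any) -> bool:
        """Offer a frame captured at time t (seconds). Returns True if it was kept."""
        if self.next_t is not None and t < self.next_t - self.eps:
            return False  # arrived too early for the target rate: drop it
        self.frames.append(frame)
        base = t if self.next_t is None else self.next_t
        self.next_t = base + self.period
        if self.next_t < t:  # source slower than the target rate: resynchronise
            self.next_t = t + self.period
        return True

    def ready(self) -> bool:
        """True once a full clip is available."""
        return len(self.frames) == self.frames.maxlen

    def clip(self) -> List[Any]:
        return list(self.frames)
```

Once `ready()` returns True, `clip()` together with the tracked person's ROI would be what is handed to the recognition network.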

Running the demo

▼ OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/gesture_recognition_demo/python/

- Executable: python3 gesture_recognition_demo.py

- Running the application with the "-h" option displays the following usage message.

$ python3 gesture_recognition_demo.py -h

usage: gesture_recognition_demo.py [-h] -m_a ACTION_MODEL -m_d DETECTION_MODEL

-i INPUT [-o OUTPUT] [-limit OUTPUT_LIMIT]

-c CLASS_MAP [-s SAMPLES_DIR]

[-t ACTION_THRESHOLD] [-d DEVICE]

[-l CPU_EXTENSION] [--no_show]

[-u UTILIZATION_MONITORS]

Options:

-h, --help Show this help message and exit.

-m_a ACTION_MODEL, --action_model ACTION_MODEL

Required. Path to an .xml file with a trained gesture

recognition model.

-m_d DETECTION_MODEL, --detection_model DETECTION_MODEL

Required. Path to an .xml file with a trained person

detector model.

-i INPUT, --input INPUT

Required. Path to a video file or a device node of a

web-camera.

-o OUTPUT, --output OUTPUT

Optional. Name of output to save.

-limit OUTPUT_LIMIT, --output_limit OUTPUT_LIMIT

Optional. Number of frames to store in output. If 0 is

set, all frames are stored.

-c CLASS_MAP, --class_map CLASS_MAP

Required. Path to a file with gesture classes.

-s SAMPLES_DIR, --samples_dir SAMPLES_DIR

Optional. Path to a directory with video samples of

gestures.

-t ACTION_THRESHOLD, --action_threshold ACTION_THRESHOLD

Optional. Threshold for the predicted score of an

action.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on: CPU,

GPU, FPGA, HDDL or MYRIAD. The demo will look for a

suitable plugin for device specified (by default, it

is CPU).

-l CPU_EXTENSION, --cpu_extension CPU_EXTENSION

Optional. Required for CPU custom layers. Absolute

path to a shared library with the kernels

implementations.

--no_show Optional. Do not visualize inference results.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.

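The demo starts in person tracking mode. To switch to action recognition mode, press the number key matching the appropriate detection ID (the number at the top left of each bounding box). If the frame contains only one person, that person is selected automatically. Afterwards, you can return to tracking mode by pressing the space key.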
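The mode switching described above behaves like a small state machine. The function below is a hypothetical sketch, not the demo's code: it represents tracking mode as `active_id = None`, takes the pressed key as a one-character string (for example converted from the code returned by OpenCV's `cv2.waitKey()` when it is not -1), and assumes that number keys are ignored once a person has been selected. In this sketch, with a single person in view, the next frame after pressing space re-selects that person automatically.

```python
from typing import Iterable, Optional


def handle_key(active_id: Optional[int], key: Optional[str],
               visible_ids: Iterable[int]) -> Optional[int]:
    """Hypothetical mode switching for the gesture recognition demo.

    active_id   -- None in tracking mode, otherwise the selected detection ID
                   (action recognition mode).
    key         -- the key pressed this frame as a string, or None.
    visible_ids -- detection IDs currently drawn at the top left of the boxes.
    Returns the new active_id (None means tracking mode).
    """
    ids = set(visible_ids)
    if key == " ":
        return None  # space: back to tracking mode
    if active_id is None:
        if key is not None and key.isdecimal() and int(key) in ids:
            return int(key)  # number key selects that person
        if len(ids) == 1:
            return next(iter(ids))  # a lone person is selected automatically
    return active_id  # otherwise keep the current mode/selection
```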

To run the demo, specify the gesture recognition model and the person detection model in IR format, a file with class names, and the path to the input video.

python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASK_Please.mp4 -c /opt/intel/openvino_2021/deployment_tools/open_model_zoo/data/dataset_classes/msasl100.json

- Demo output

The application uses OpenCV to display the gesture recognition results and the current inference performance.

▼ OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/gesture_recognition_demo/

- Executable: python3 gesture_recognition_demo.py

- Running the application with the "-h" option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -h

usage: gesture_recognition_demo.py [-h] -m_a ACTION_MODEL -m_d DETECTION_MODEL

-i INPUT -c CLASS_MAP [-s SAMPLES_DIR]

[-t ACTION_THRESHOLD] [-d DEVICE]

[-l CPU_EXTENSION] [--no_show]

[-u UTILIZATION_MONITORS]

Options:

-h, --help Show this help message and exit.

-m_a ACTION_MODEL, --action_model ACTION_MODEL

Required. Path to an .xml file with a trained gesture

recognition model.

-m_d DETECTION_MODEL, --detection_model DETECTION_MODEL

Required. Path to an .xml file with a trained person

detector model.

-i INPUT, --input INPUT

Required. Path to a video file or a device node of a

web-camera.

-c CLASS_MAP, --class_map CLASS_MAP

Required. Path to a file with gesture classes.

-s SAMPLES_DIR, --samples_dir SAMPLES_DIR

Optional. Path to a directory with video samples of

gestures.

-t ACTION_THRESHOLD, --action_threshold ACTION_THRESHOLD

Optional. Threshold for the predicted score of an

action.

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on: CPU,

GPU, FPGA, HDDL or MYRIAD. The demo will look for a

suitable plugin for device specified (by default, it

is CPU).

-l CPU_EXTENSION, --cpu_extension CPU_EXTENSION

Optional. Required for CPU custom layers. Absolute

path to a shared library with the kernels

implementations.

--no_show Optional. Do not visualize inference results.

-u UTILIZATION_MONITORS, --utilization_monitors UTILIZATION_MONITORS

Optional. List of monitors to show initially.

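The demo starts in person tracking mode. To switch to action recognition mode, press the number key matching the appropriate detection ID (the number at the top left of each bounding box). If the frame contains only one person, that person is selected automatically. Afterwards, you can return to tracking mode by pressing the space key.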

To run the demo, specify the gesture recognition model and the person detection model in IR format, a file with class names, and the path to the input video.

python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASL_TankYou.mp4 -c ./msasl100-classes.json

- Demo output

The application uses OpenCV to display the gesture recognition results and the current inference performance.

- Example run "Ubuntu Core™ i5-10210U"

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASK_Please.mp4 -c ./msasl100-classes.json

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASL_TankYou.mp4 -c ./msasl100-classes.json

- Example run "Ubuntu Core™ i7-6700"

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASK_Please.mp4 -c ./msasl100-classes.json

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASL_TankYou.mp4 -c ./msasl100-classes.json

- Example run "Ubuntu Celeron® J4005"

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASK_Please.mp4 -c ./msasl100-classes.json

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/gesture_recognition_demo$ python3 gesture_recognition_demo.py -m_a ~/model/intel/FP32/asl-recognition-0004.xml -m_d ~/model/intel/FP32/person-detection-asl-0001.xml -i ~/Videos/ASL_TankYou.mp4 -c ./msasl100-classes.json

- Example run "Ubuntu Celeron® J4005 + NCS2"

The asl-recognition-0004 model does not appear to support NCS2.

Speed comparison

| Item | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
| fps | 14.3 | 8.7 | 0.95 | X | 1.9 |
| fps | 14.5 | 8.8 | 0.94 | X | 1.9 |

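This demo shows how to recognize handwritten Japanese and Simplified Chinese text lines using OpenVINO™. For Japanese, the demo supports all characters in the Kondate and Nakayosi datasets. For Simplified Chinese, it supports the characters in the SCUT-EPT dataset. (machine translation)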

Pre-trained models used

How the demo works

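- The demo first reads the image and performs preprocessing such as resizing and padding.

- After the model is loaded to the plugin, inference starts.

- After decoding the returned indices into characters, the demo prints the predicted text.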
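The last step, turning the returned indices into characters, can be sketched as a CTC greedy decoder. This is an illustration under assumptions not stated above: that the model is CTC-trained, outputs one score vector per time step, and uses index 0 as the blank symbol. The two-character alphabet is a toy stand-in for the real character list file.

```python
from typing import List, Sequence


def ctc_greedy_decode(probs: Sequence[Sequence[float]], alphabet: Sequence[str],
                      blank: int = 0) -> str:
    """Pick the best index per time step, merge consecutive repeats, drop blanks."""
    best = [max(range(len(step)), key=step.__getitem__) for step in probs]
    chars: List[str] = []
    prev = None
    for idx in best:
        if idx != prev and idx != blank:
            chars.append(alphabet[idx])
        prev = idx  # a blank between two equal indices lets a character repeat
    return "".join(chars)


if __name__ == "__main__":
    alphabet = ["<blank>", "菊", "池"]
    # 5 time steps over 3 classes; the best indices are 1, 1, 0, 2, 2
    probs = [
        [0.1, 0.8, 0.1],
        [0.2, 0.7, 0.1],
        [0.9, 0.05, 0.05],
        [0.1, 0.1, 0.8],
        [0.3, 0.1, 0.6],
    ]
    print(ctc_greedy_decode(probs, alphabet))  # 菊池
```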

Running the demo

▼ OpenVINO™ 2021.3

- Working directory: /opt/intel/openvino_2021/deployment_tools/open_model_zoo/demos/handwritten_text_recognition_demo/python/

- Executable: python3 handwritten_text_recognition_demo.py

- Running the application with the "-h" option displays the following usage message.

$ python3 handwritten_text_recognition_demo.py -h

usage: handwritten_text_recognition_demo.py [-h] -m MODEL -i INPUT [-d DEVICE]

[-ni NUMBER_ITER] [-cl CHARLIST]

[-dc DESIGNATED_CHARACTERS]

[-tk TOP_K]

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Path to an .xml file with a trained model.

-i INPUT, --input INPUT

Required. Path to an image to infer

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL, MYRIAD or HETERO: is acceptable. The

sample will look for a suitable plugin for device

specified. Default value is CPU

-ni NUMBER_ITER, --number_iter NUMBER_ITER

Optional. Number of inference iterations

-cl CHARLIST, --charlist CHARLIST

Path to the decoding char list file. Default is for

Japanese

-dc DESIGNATED_CHARACTERS, --designated_characters DESIGNATED_CHARACTERS

Optional. Path to the designated character file

-tk TOP_K, --top_k TOP_K

Optional. Top k steps in looking up the decoded

character, until a designated one is found

To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

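If the -dc argument is specified and an output character is not among the designated characters, the script checks the top k candidates for the decoded character until a designated one is found. This restricts the output characters to the designated set. By default, k is set to 20.

For example, to restrict the output characters to digits and hyphens only, specify the path to a designated character file. The script then post-filters the output characters. Note that if none of the designated characters appear among the first k candidates, other characters may still be output.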
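The -dc / -tk behaviour described above can be sketched as follows. This is a hypothetical illustration, not the demo's code: it assumes per-time-step scores and index 0 as the blank (as in a CTC decoder), and the function names and toy alphabet are made up for the example. The fallback to the overall best index mirrors the caveat that other characters can still appear when no designated character is within the top k.

```python
from typing import List, Sequence, Set


def pick_index(step: Sequence[float], allowed: Set[int], k: int = 20) -> int:
    """Return the best-scoring index among the top k that is allowed;
    fall back to the overall best index if none of the top k is allowed."""
    ranked = sorted(range(len(step)), key=lambda i: step[i], reverse=True)
    for idx in ranked[:k]:
        if idx in allowed:
            return idx
    return ranked[0]


def decode_restricted(probs: Sequence[Sequence[float]], alphabet: Sequence[str],
                      designated: str, k: int = 20, blank: int = 0) -> str:
    """Greedy CTC-style decoding restricted to the designated characters."""
    designated_set = set(designated)
    allowed = {i for i, ch in enumerate(alphabet) if ch in designated_set}
    allowed.add(blank)  # keep the blank so repeated characters can still be separated
    chars: List[str] = []
    prev = None
    for step in probs:
        idx = pick_index(step, allowed, k)
        if idx != prev and idx != blank:
            chars.append(alphabet[idx])
        prev = idx
    return "".join(chars)
```

For instance, with "0-" as the designated characters, a step whose best guess is the letter "O" but whose second guess is the digit "0" decodes to "0" as long as k is at least 2.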

- Demo output

The application uses the terminal to display the recognized text and the inference performance.

▼ OpenVINO™ 2021.2

- Working directory: ~/omz_demos_python/python_demos/handwritten_text_recognition_demo/

- Executable: python3 handwritten_text_recognition_demo.py

- Running the application with the "-h" option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/handwritten_text_recognition_demo$ python3 handwritten_text_recognition_demo.py -h

usage: handwritten_text_recognition_demo.py [-h] -m MODEL -i INPUT [-d DEVICE]

[-ni NUMBER_ITER] [-cl CHARLIST]

[-dc DESIGNATED_CHARACTERS]

[-tk TOP_K]

Options:

-h, --help Show this help message and exit.

-m MODEL, --model MODEL

Path to an .xml file with a trained model.

-i INPUT, --input INPUT

Required. Path to an image to infer

-d DEVICE, --device DEVICE

Optional. Specify the target device to infer on; CPU,

GPU, FPGA, HDDL, MYRIAD or HETERO: is acceptable. The

sample will look for a suitable plugin for device

specified. Default value is CPU

-ni NUMBER_ITER, --number_iter NUMBER_ITER

Optional. Number of inference iterations

-cl CHARLIST, --charlist CHARLIST

Path to the decoding char list file. Default is for

Japanese

-dc DESIGNATED_CHARACTERS, --designated_characters DESIGNATED_CHARACTERS

Optional. Path to the designated character file

-tk TOP_K, --top_k TOP_K

Optional. Top k steps in looking up the decoded

character, until a designated one is found

To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

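If the -dc argument is specified and an output character is not among the designated characters, the script checks the top k candidates for the decoded character until a designated one is found. This restricts the output characters to the designated set. By default, k is set to 20.

For example, to restrict the output characters to digits and hyphens only, specify the path to a designated character file. The script then post-filters the output characters. Note that if none of the designated characters appear among the first k candidates, other characters may still be output.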

- Demo output

The application uses the terminal to display the recognized text and the inference performance.

- Example run "Ubuntu Core™ i5-10210U"

mizutu@ubuntu-nuc10:~/omz_demos_python/python_demos/handwritten_text_recognition_demo$ python3 handwritten_text_recognition_demo.py -i data/handwritten_japanese_test.png -m ~/model/intel/FP32/handwritten-japanese-recognition-0001.xml

[ INFO ] Loading network

[ INFO ] Preparing input/output blobs

[ INFO ] Loading model to the plugin

[ INFO ] Starting inference (1 iterations)

['菊池朋子']

[ INFO ] Average throughput: 277.19688415527344 ms

- Example run "Ubuntu Core™ i7-6700"

mizutu@ubuntu2004dk:~/omz_demos_python/python_demos/handwritten_text_recognition_demo$ python3 handwritten_text_recognition_demo.py -i data/handwritten_japanese_test.png -m ~/model/intel/FP32/handwritten-japanese-recognition-0001.xml

[ INFO ] Loading network

[ INFO ] Preparing input/output blobs

[ INFO ] Loading model to the plugin

[ INFO ] Starting inference (1 iterations)

['菊池朋子']

[ INFO ] Average throughput: 603.6098003387451 ms

- Example run "Ubuntu Celeron® J4005"

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/handwritten_text_recognition_demo$ python3 handwritten_text_recognition_demo.py -i data/handwritten_japanese_test.png -m ~/model/intel/FP32/handwritten-japanese-recognition-0001.xml

[ INFO ] Loading network

[ INFO ] Preparing input/output blobs

[ INFO ] Loading model to the plugin

[ INFO ] Starting inference (1 iterations)

['菊池朋子']

[ INFO ] Average throughput: 4353.050708770752 ms

- Example run "Ubuntu Celeron® J4005 + NCS2"

Command changes: /FP32 → /FP16, -d MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_python/python_demos/handwritten_text_recognition_demo$ python3 handwritten_text_recognition_demo.py -i data/handwritten_japanese_test.png -m ~/model/intel/FP16/handwritten-japanese-recognition-0001.xml -d MYRIAD

[ INFO ] Loading network

[ INFO ] Preparing input/output blobs

[ INFO ] Loading model to the plugin

[ INFO ] Starting inference (1 iterations)

['菊池朋子']

[ INFO ] Average throughput: 866.3461208343506 ms

Speed comparison

| Item | Core™ i5-10210U | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
| Average throughput (ms) | 277 | 603 | 4353 | 866 | 2742 |

An example that uses neural networks to detect and recognize printed text rotated at arbitrary angles in various environments.

Pre-trained models used

How the demo works

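- At startup, the application reads the command-line parameters and loads one network into the Inference Engine for execution.

- After obtaining an image, it runs text detection inference and outputs the result as the four points (x1, y1), (x2, y2), (x3, y3), (x4, y4) of each text bounding box.

- If a text recognition model is provided, the demo also displays the recognized text.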
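To make the four-point output concrete: assuming each detected text region is represented as a rotated rectangle (centre, width, height, angle), similar in spirit to OpenCV's RotatedRect, its four corners can be computed as below. This is an illustrative sketch; the corner order and angle convention chosen here are assumptions and may differ from the demo's.

```python
import math
from typing import List, Tuple


def rotated_rect_points(cx: float, cy: float, w: float, h: float,
                        angle_deg: float) -> List[Tuple[float, float]]:
    """Return the 4 corners (x1,y1)..(x4,y4) of a w x h box centred at (cx, cy).

    At angle 0 the order is bottom-left, top-left, top-right, bottom-right in
    image coordinates (y grows downwards), so positive angles rotate the box
    clockwise on screen.
    """
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    corners = []
    for dx, dy in ((-w / 2, h / 2), (-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2)):
        # rotate the offset from the centre, then translate back to the centre
        corners.append((cx + dx * cos_a - dy * sin_a, cy + dx * sin_a + dy * cos_a))
    return corners


if __name__ == "__main__":
    print(rotated_rect_points(10, 20, 4, 2, 0))
    # [(8.0, 21.0), (8.0, 19.0), (12.0, 19.0), (12.0, 21.0)]
```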

Running the demo

▼ OpenVINO™ 2021.3

- Working directory: ~/omz_demos_build/intel64/Release

- Executable: ./text_detection_demo

- Running the application with the "-h" option displays the following usage message.

$ ./text_detection_demo -h

InferenceEngine: API version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

text_detection_demo [OPTION]

Options:

-h Print a usage message.

-i Required. An input to process. The input must be a single image, a folder of images, video file or camera id.

-loop Optional. Enable reading the input in a loop.

-o "<path>" Optional. Name of output to save.

-limit "<num>" Optional. Number of frames to store in output. If 0 is set, all frames are stored.

-m_td "<path>" Required. Path to the Text Detection model (.xml) file.

-m_tr "<path>" Required. Path to the Text Recognition model (.xml) file.

-m_tr_ss "<value>" Optional. Symbol set for the Text Recognition model.

-tr_pt_first Optional. Specifies if pad token is the first symbol in the alphabet. Default is false

-tr_o_blb_nm Optional. Name of the output blob of the model which would be used as model output. If not stated, first blob of the model would be used.

-cc Optional. If it is set, then in case of absence of the Text Detector, the Text Recognition model takes a central image crop as an input, but not full frame.

-w_td "<value>" Optional. Input image width for Text Detection model.

-h_td "<value>" Optional. Input image height for Text Detection model.

-thr "<value>" Optional. Specify a recognition confidence threshold. Text detection candidates with text recognition confidence below specified threshold are rejected.

-cls_pixel_thr "<value>" Optional. Specify a confidence threshold for pixel classification. Pixels with classification confidence below specified threshold are rejected.

-link_pixel_thr "<value>" Optional. Specify a confidence threshold for pixel linkage. Pixels with linkage confidence below specified threshold are not linked.

-max_rect_num "<value>" Optional. Maximum number of rectangles to recognize. If it is negative, number of rectangles to recognize is not limited.

-d_td "<device>" Optional. Specify the target device for the Text Detection model to infer on (the list of available devices is shown below). The demo will look for a suitable plugin for a specified device. By default, it is CPU.

-d_tr "<device>" Optional. Specify the target device for the Text Recognition model to infer on (the list of available devices is shown below). The demo will look for a suitable plugin for a specified device. By default, it is CPU.

-l "<absolute_path>" Optional. Absolute path to a shared library with the CPU kernels implementation for custom layers.

-c "<absolute_path>" Optional. Absolute path to the GPU kernels implementation for custom layers.

-no_show Optional. If it is true, then detected text will not be shown on image frame. By default, it is false.

-r Optional. Output Inference results as raw values.

-u Optional. List of monitors to show initially.

-b Optional. Bandwidth for CTC beam search decoder. Default value is 0, in this case CTC greedy decoder will be used.

[E:] [BSL] found 0 ioexpander device

Available target devices: CPU GNA MYRIAD

- To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- Example run "Ubuntu Core™ i5-10210U"

$ ./text_detection_demo -loop -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i ~/Images/text-img.jpg

InferenceEngine: API version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 122.258 8.17942

text detection postprocessing (ms) (fps): 59.7419 16.7387

text recognition model inference (ms) (fps): 7.09908 140.863

text recognition postprocessing (ms) (fps): 0.00714286 140000

text crop (ms) (fps): 0.0477028 20963.1

▼ OpenVINO™ 2021.2

- Working directory: ~/omz_demos_build/intel64/Release

- Executable: ./text_detection_demo

- Running the application with the "-h" option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_build/intel64/Release$ ./text_detection_demo -h

text_detection_demo [OPTION]

Options:

-h Print a usage message.

-i Required. An input to process. The input must be a single image, a folder of images or anything that cv::VideoCapture can process.

-loop Optional. Enable reading the input in a loop.

-m_td "<path>" Required. Path to the Text Detection model (.xml) file.

-m_tr "<path>" Required. Path to the Text Recognition model (.xml) file.

-m_tr_ss "<value>" Optional. Symbol set for the Text Recognition model.

-cc Optional. If it is set, then in case of absence of the Text Detector, the Text Recognition model takes a central image crop as an input, but not full frame.

-w_td "<value>" Optional. Input image width for Text Detection model.

-h_td "<value>" Optional. Input image height for Text Detection model.

-thr "<value>" Optional. Specify a recognition confidence threshold. Text detection candidates with text recognition confidence below specified threshold are rejected.

-cls_pixel_thr "<value>" Optional. Specify a confidence threshold for pixel classification. Pixels with classification confidence below specified threshold are rejected.

-link_pixel_thr "<value>" Optional. Specify a confidence threshold for pixel linkage. Pixels with linkage confidence below specified threshold are not linked.

-max_rect_num "<value>" Optional. Maximum number of rectangles to recognize. If it is negative, number of rectangles to recognize is not limited.

-d_td "<device>" Optional. Specify the target device for the Text Detection model to infer on (the list of available devices is shown below). The demo will look for a suitable plugin for a specified device. By default, it is CPU.

-d_tr "<device>" Optional. Specify the target device for the Text Recognition model to infer on (the list of available devices is shown below). The demo will look for a suitable plugin for a specified device. By default, it is CPU.

-l "<absolute_path>" Optional. Absolute path to a shared library with the CPU kernels implementation for custom layers.

-c "<absolute_path>" Optional. Absolute path to the GPU kernels implementation for custom layers.

-no_show Optional. If it is true, then detected text will not be shown on image frame. By default, it is false.

-r Optional. Output Inference results as raw values.

-u Optional. List of monitors to show initially.

-b Optional. Bandwidth for CTC beam search decoder. Default value is 0, in this case CTC greedy decoder will be used.

- To run the demo, use a public or pre-trained model downloaded in advance with the OpenVINO™ Model Downloader.

- Example run "Ubuntu Core™ i5-10210U"

mizutu@ubuntu-nuc10:~/omz_demos_build/intel64/Release$ ./text_detection_demo -loop -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i ~/Images/text-img.jpg

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 105.533 9.47568

text detection postprocessing (ms) (fps): 56.9333 17.5644

text recognition model inference (ms) (fps): 7.18095 139.257

text recognition postprocessing (ms) (fps): 0.00634286 157658

text crop (ms) (fps): 0.0490619 20382.4

- Example run "Ubuntu Core™ i7-6700"

mizutu@ubuntu2004dk:~/omz_demos_build/intel64/Release$ ./text_detection_demo -loop -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i ~/Images/text-img.jpg

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 184.986 5.40582

text detection postprocessing (ms) (fps): 69.338 14.4221

text recognition model inference (ms) (fps): 14.5584 68.6891

text recognition postprocessing (ms) (fps): 0.0109014 91731.3

text crop (ms) (fps): 0.0665674 15022.4

- Example run "Ubuntu Celeron® J4005"

mizutu@ubuntu-nuc:~/omz_demos_build/intel64/Release$ ./text_detection_demo -loop -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i ~/Images/text-img.jpg

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 1153.89 0.866635

text detection postprocessing (ms) (fps): 147.889 6.76183

text recognition model inference (ms) (fps): 103.111 9.69828

text recognition postprocessing (ms) (fps): 0.0157857 63348.4

text crop (ms) (fps): 0.138127 7239.72

- Example run "Ubuntu Celeron® J4005 + NCS2"

Command changes: /FP32 → /FP16, -d_td MYRIAD -d_tr MYRIAD

mizutu@ubuntu-nuc:~/omz_demos_build/intel64/Release$ ./text_detection_demo -loop -m_td ~/model/intel/FP16/text-detection-0004.xml -m_tr ~/model/intel/FP16/text-recognition-0012.xml -d_td MYRIAD -d_tr MYRIAD -i ~/Images/text-img.jpg

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] MYRIAD

myriadPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 684.778 1.46033

text detection postprocessing (ms) (fps): 153.889 6.49819

text recognition model inference (ms) (fps): 75.9704 13.163

text recognition postprocessing (ms) (fps): 0.0431778 23160.1

text crop (ms) (fps): 0.252793 3955.81

- Webcam input example run "Ubuntu Core™ i5-10210U"

mizutu@ubuntu-nuc10:~/omz_demos_build/intel64/Release$ ./text_detection_demo -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i 0

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 102.432 9.76253

text detection postprocessing (ms) (fps): 40.4324 24.7326

text recognition model inference (ms) (fps): 7.14976 139.865

text recognition postprocessing (ms) (fps): 0.00733333 136364

text crop (ms) (fps): 0.0526039 19010

- Webcam input example run "Ubuntu Celeron® J4005"

Command change: input source set to '0'

mizutu@ubuntu-nuc:~/omz_demos_build/intel64/Release$ ./text_detection_demo -m_td ~/model/intel/FP32/text-detection-0004.xml -m_tr ~/model/intel/FP32/text-recognition-0012.xml -i 0

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 1195.22 0.836664

text detection postprocessing (ms) (fps): 143.111 6.98758

text recognition model inference (ms) (fps): 109.056 9.16964

text recognition postprocessing (ms) (fps): 0.0181667 55045.9

text crop (ms) (fps): 0.149852 6673.26

- Webcam input example run "Ubuntu Celeron® J4005 + NCS2"

Command changes: /FP32 → /FP16, -d_td MYRIAD -d_tr MYRIAD, input source set to '0'

mizutu@ubuntu-nuc:~/omz_demos_build/intel64/Release$ ./text_detection_demo -m_td ~/model/intel/FP16/text-detection-0004.xml -m_tr ~/model/intel/FP16/text-recognition-0012.xml -d_td MYRIAD -d_tr MYRIAD -i 0

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Loading Inference Engine

[ INFO ] Device info:

[ INFO ] MYRIAD

myriadPlugin version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Loading network files

[ INFO ] Starting inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC or Q

text detection model inference (ms) (fps): 663.556 1.50703

text detection postprocessing (ms) (fps): 143.222 6.98216

text recognition model inference (ms) (fps): 75.4247 13.2583

text recognition postprocessing (ms) (fps): 0.0435068 22984.9

text crop (ms) (fps): 0.218205 4582.84
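The two webcam runs above differ only in the model precision directory and the `-d_td`/`-d_tr` device flags. A minimal sketch of building either command line follows. It assumes the `~/model/intel/<precision>/` layout used above and the pairing seen in these runs (CPU with FP32 IR files, MYRIAD/NCS2 with FP16). The helper name is hypothetical. Passing `CPU` explicitly is assumed to behave like the default used in the first run.

```python
import os

# Assumption: precision directory per device, as used in the commands above
PRECISION_FOR_DEVICE = {"CPU": "FP32", "MYRIAD": "FP16"}


def text_detection_cmd(device, source="0", model_root="~/model/intel"):
    """Hypothetical helper: build an argv list for ./text_detection_demo."""
    precision = PRECISION_FOR_DEVICE[device]
    root = os.path.join(os.path.expanduser(model_root), precision)
    return [
        "./text_detection_demo",
        "-m_td", os.path.join(root, "text-detection-0004.xml"),
        "-m_tr", os.path.join(root, "text-recognition-0012.xml"),
        "-d_td", device,  # device for the text detection model
        "-d_tr", device,  # device for the text recognition model
        "-i", source,     # '0' selects the first camera in the runs above
    ]


print(" ".join(text_detection_cmd("MYRIAD")))
```

The list can be passed to `subprocess.run(cmd, cwd=os.path.expanduser("~/omz_demos_build/intel64/Release"))`.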

Speed comparison

| Item | Unit | Core™ i5-10210 | Core™ i7-6700 | Celeron® J4005 | Celeron® J4005 + NCS2 | Core™ i7-2620M |
|---|---|---|---|---|---|---|
| detection model inference | ms | 102 | 185 | 1154 | 685 | 1079 |
| | fps | 9.8 | 5.4 | 0.87 | 1.46 | 0.93 |
| detection model postprocessing | ms | 40.4 | 69 | 148 | 154 | 111 |
| | fps | 24.7 | 14.4 | 6.76 | 6.50 | 9.0 |
| recognition model inference | ms | 7.1 | 14.6 | 103 | 76.0 | 77.3 |
| | fps | 140 | 68.7 | 9.70 | 13.2 | 12.9 |
| recognition model postprocessing | ms | 0.007 | 0.01 | 0.015 | 0.04 | 0.012 |
| | fps | 136364 | 91731 | 63348 | 23160 | 81328 |
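The fps rows in the table can be recomputed from the ms rows as 1000 / ms, and the effect of adding an NCS2 to the Celeron® J4005 can be read off as a ratio. The sketch below does this for the detection model inference row only, using numbers copied from the table. It is plain arithmetic on the published figures, not a new measurement.

```python
# Detection model inference times (ms), copied from the speed comparison table
detection_ms = {
    "Core i5-10210": 102,
    "Core i7-6700": 185,
    "Celeron J4005": 1154,
    "Celeron J4005 + NCS2": 685,
    "Core i7-2620M": 1079,
}


def fps(ms):
    """Per-stage throughput implied by a latency in milliseconds."""
    return 1000.0 / ms


for cpu, ms in detection_ms.items():
    print(f"{cpu:22s} {ms:5d} ms  {fps(ms):5.2f} fps")

# How much faster detection inference is with the NCS2 on the same host
speedup = detection_ms["Celeron J4005"] / detection_ms["Celeron J4005 + NCS2"]
print(f"NCS2 speedup on J4005 (detection): {speedup:.2f}x")
```

For detection inference the NCS2 gives roughly a 1.68x gain on the J4005. That is still well behind the desktop and laptop CPUs in the table.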

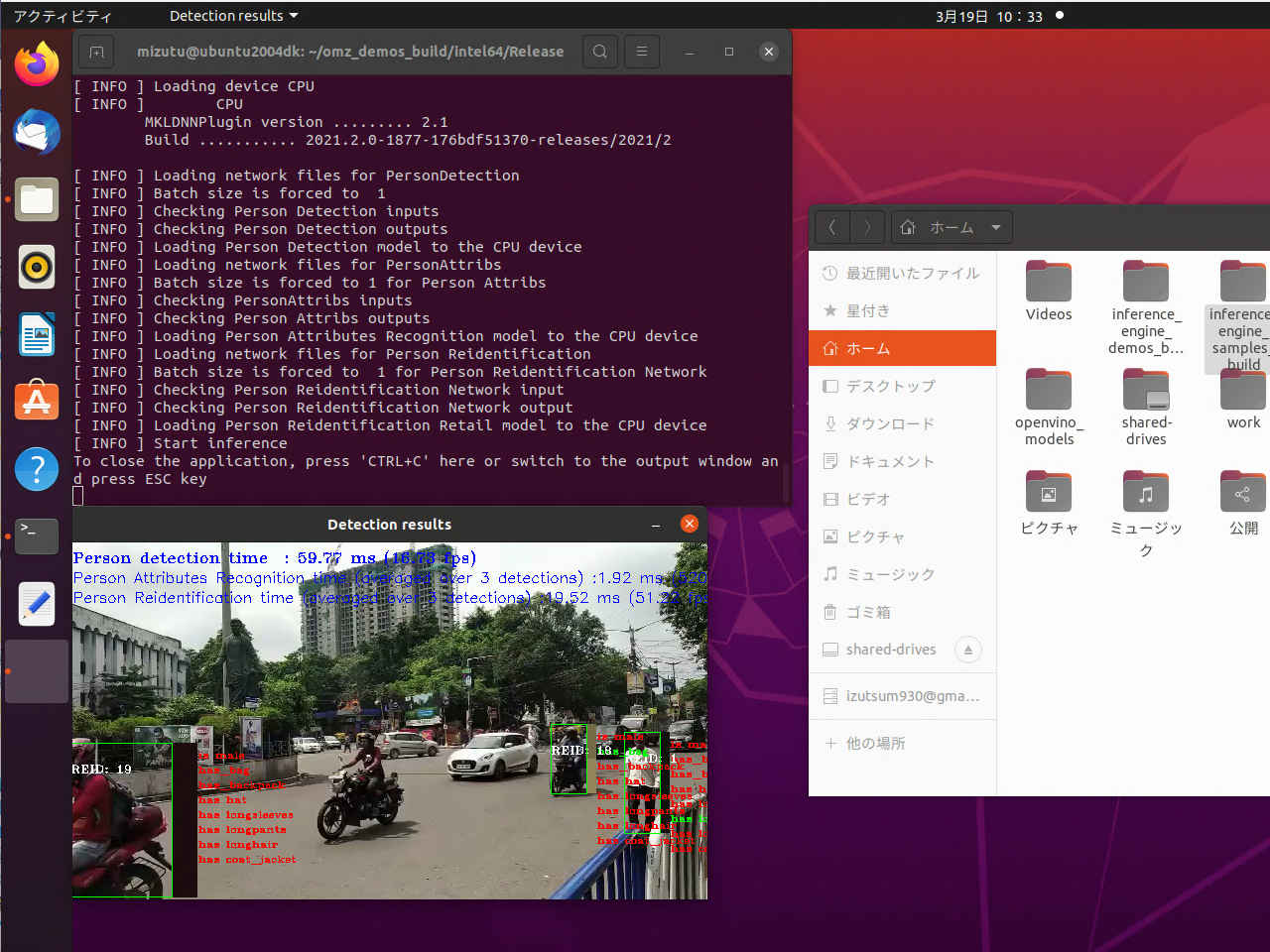

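This demo provides an inference pipeline for person detection, recognition, and re-identification. It uses a person detection network, followed by person attributes recognition and person re-identification networks that are applied on top of the detection results.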

Pre-trained models used

Purpose of the demo

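- Read images, video, or camera input through OpenCV*.

- Example of a simple network pipeline: the person attributes and person re-identification networks run on top of the person detection results.

- Visualize the person attributes and person re-identification (REID) information for each detected person.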
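The pipeline shape described in the list above (one required detector, two optional networks applied per detected person) can be sketched as follows. This is a minimal sketch that assumes each network is just a Python callable. `Pipeline`, its field names, and the `is_male` attribute in the usage are hypothetical stand-ins. They are not OpenVINO or demo APIs.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Pipeline:
    # Required: frame -> list of person boxes (stand-in for the detection network)
    detect: Callable[[object], List[tuple]]
    # Optional: (frame, box) -> attributes dict (stand-in for -m_pa)
    attributes: Optional[Callable[[object, tuple], dict]] = None
    # Optional: (frame, box) -> REID value (stand-in for -m_reid)
    reid: Optional[Callable[[object, tuple], int]] = None

    def run(self, frame):
        results = []
        for box in self.detect(frame):
            entry = {"box": box}
            # Secondary networks only run if they were configured
            if self.attributes is not None:
                entry["attributes"] = self.attributes(frame, box)
            if self.reid is not None:
                entry["reid"] = self.reid(frame, box)
            results.append(entry)
        return results


p = Pipeline(detect=lambda f: [(0, 0, 10, 20)], attributes=lambda f, b: {"is_male": True})
print(p.run(frame=None))
```

Leaving a secondary network unset simply skips that stage. This mirrors the demo reporting the corresponding recognition as DISABLED when its model is not given.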

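How the demo works

- On startup, the application reads the command-line parameters and loads the specified networks. The person detection network is required; the other two are optional.

- After getting a frame from OpenCV VideoCapture, the application runs inference on the person detection network. If they are specified on the command line, it then runs two more inferences with the person attributes recognition and person re-identification networks, and displays the results.

- When a person re-identification network is specified, a result vector is generated for each detected person. This vector is compared one by one with all previously detected person vectors using the cosine similarity algorithm. If the comparison result is greater than the specified (or default) threshold, the application concludes that the person has already been detected and assigns the known REID value. Otherwise, the vector is added to a global list and a new REID value is assigned.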
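The REID assignment rule in the last bullet can be sketched in pure Python. This sketch assumes that the REID value is the index of the vector in the global list. It also picks the *most* similar stored vector before comparing against the threshold; the text does not say whether the demo uses the best match or the first match above the threshold. `ReidGallery` is a hypothetical name, and `0.7` is an illustrative threshold, not a documented default of `-t_reid`.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)


class ReidGallery:
    """Global list of person vectors; a vector's index is its REID value."""

    def __init__(self, threshold=0.7):  # illustrative value, not the demo's default
        self.threshold = threshold
        self.vectors = []

    def assign(self, vector):
        # Compare with every previously seen vector and keep the best match
        best_id, best_sim = -1, -1.0
        for reid, known in enumerate(self.vectors):
            sim = cosine_similarity(vector, known)
            if sim > best_sim:
                best_id, best_sim = reid, sim
        if best_sim > self.threshold:
            return best_id  # already seen: reuse the known REID
        self.vectors.append(vector)  # new person: add to the list
        return len(self.vectors) - 1  # and issue a new REID


g = ReidGallery()
print(g.assign([1.0, 0.0]), g.assign([0.9, 0.1]), g.assign([0.0, 1.0]))
```

Here `[0.9, 0.1]` has a cosine similarity of about 0.99 with `[1.0, 0.0]` and reuses REID 0. The orthogonal `[0.0, 1.0]` gets a new REID. A zero vector would divide by zero, so real code would need a guard.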

Running the demo

Version 2021.3

- Working directory: ~/omz_demos_build/intel64/Release

- Executable: ./crossroad_camera_demo

- Running the application with the "-h" option displays the following usage message.

$ ./crossroad_camera_demo -h

InferenceEngine: API version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

crossroad_camera_demo [OPTION]

Options:

-h Print a usage message.

-i Required. An input to process. The input must be a single image, a folder of images, video file or camera id.

-loop Optional. Enable reading the input in a loop.

-o "<path>" Optional. Name of output to save.

-limit "<num>" Optional. Number of frames to store in output. If 0 is set, all frames are stored.

-m "<path>" Required. Path to the Person/Vehicle/Bike Detection Crossroad model (.xml) file.

-m_pa "<path>" Optional. Path to the Person Attributes Recognition Crossroad model (.xml) file.

-m_reid "<path>" Optional. Path to the Person Reidentification Retail model (.xml) file.

-l "<absolute_path>" Optional. For MKLDNN (CPU)-targeted custom layers, if any. Absolute path to a shared library with the kernels impl.

Or

-c "<absolute_path>" Optional. For clDNN (GPU)-targeted custom kernels, if any. Absolute path to the xml file with the kernels desc.

-d "<device>" Optional. Specify the target device for Person/Vehicle/Bike Detection. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-d_pa "<device>" Optional. Specify the target device for Person Attributes Recognition. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-d_reid "<device>" Optional. Specify the target device for Person Reidentification Retail. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-pc Optional. Enables per-layer performance statistics.

-r Optional. Output Inference results as raw values.

-t Optional. Probability threshold for person/vehicle/bike crossroad detections.

-t_reid Optional. Cosine similarity threshold between two vectors for person reidentification.

-no_show Optional. No show processed video.

-auto_resize Optional. Enables resizable input with support of ROI crop & auto resize.

-u Optional. List of monitors to show initially.

-person_label Optional. The integer index of the objects' category corresponding to persons (as it is returned from the detection network, may vary from one network to another). The default value is 1.

[E:] [BSL] found 0 ioexpander device

Available target devices: CPU GNA MYRIAD
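The `-t` and `-person_label` options suggest a selection step between the crossroad detector and the person-specific networks. The detector reports persons, vehicles, and bikes, but only persons should reach the attributes and REID networks. A minimal sketch, assuming detections are `(label, confidence, box)` records and that "above the threshold" means a strict `>`. `Detection` and `select_persons` are hypothetical names. Only the default label `1` comes from the usage text.

```python
from typing import List, NamedTuple


class Detection(NamedTuple):
    label: int  # class index as returned by the detection network
    confidence: float
    box: tuple  # (xmin, ymin, xmax, ymax); layout assumed for illustration


def select_persons(detections: List[Detection], threshold: float, person_label: int = 1):
    """Keep detections above the -t threshold whose label equals -person_label."""
    return [d for d in detections if d.confidence > threshold and d.label == person_label]


dets = [
    Detection(1, 0.90, (10, 10, 50, 120)),  # confident person
    Detection(2, 0.95, (60, 40, 200, 110)),  # some other class
    Detection(1, 0.30, (5, 5, 20, 40)),  # person below threshold
]
print(select_persons(dets, threshold=0.5))
```

As the usage text notes, the person class index may vary from one network to another, which is why it is a parameter here.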

- To run the demo, use public or pre-trained models downloaded in advance with the OpenVINO™ Model Downloader.

- Example run (Ubuntu, Core™ i5-10210U)

$ ./crossroad_camera_demo -i ~/Videos/car.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-0078.xml

InferenceEngine: API version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Parsing input parameters

[ INFO ] Person Attributes Recognition detection DISABLED

[ INFO ] Person Reidentification Retail detection DISABLED

[ INFO ] Loading device CPU

[ INFO ] CPU

MKLDNNPlugin version ......... 2.1

Build ........... 2021.3.0-2787-60059f2c755-releases/2021/3

[ INFO ] Loading network files for PersonDetection

[ INFO ] Batch size is forced to 1

[ INFO ] Checking Person Detection inputs

[ INFO ] Checking Person Detection outputs

[ INFO ] Loading Person Detection model to the CPU device

[ INFO ] Start inference

To close the application, press 'CTRL+C' here or switch to the output window and press ESC key

[ INFO ] Total Inference time: 8901.55

[ INFO ] Execution successful
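The run above passes only `-m`, so the log reports attributes recognition and re-identification as DISABLED. A minimal sketch of assembling a fuller command is shown below. It uses only flags listed in the usage message (`-i`, `-m`, `-m_pa`, `-m_reid`, `-d`, `-d_pa`, `-d_reid`, `-no_show`) and the documented `HETERO:<comma-separated_devices_list>` device form. The helper names and the `pa.xml`/`reid.xml` paths in the usage are hypothetical placeholders.

```python
def hetero(*devices):
    """Build a device string in the documented HETERO:<comma-separated_devices_list> form."""
    return "HETERO:" + ",".join(devices)


def crossroad_cmd(video, det_model, pa_model=None, reid_model=None,
                  device="CPU", device_pa="CPU", device_reid="CPU", no_show=False):
    """Hypothetical helper: argv for ./crossroad_camera_demo."""
    cmd = ["./crossroad_camera_demo", "-i", video, "-m", det_model, "-d", device]
    if pa_model:  # omitting -m_pa leaves attributes recognition DISABLED
        cmd += ["-m_pa", pa_model, "-d_pa", device_pa]
    if reid_model:  # omitting -m_reid leaves re-identification DISABLED
        cmd += ["-m_reid", reid_model, "-d_reid", device_reid]
    if no_show:
        cmd.append("-no_show")
    return cmd


print(" ".join(crossroad_cmd("car.mp4", "det.xml", reid_model="reid.xml",
                             device_reid=hetero("MYRIAD", "CPU"))))
```

Keeping the optional networks as optional arguments matches the usage text, where only `-i` and `-m` are required.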

Version 2021.2

- Working directory: ~/omz_demos_build/intel64/Release

- Executable: ./crossroad_camera_demo

- Running the application with the "-h" option displays the following usage message.

mizutu@ubuntu2004dk:~/omz_demos_build/intel64/Release$ ./crossroad_camera_demo -h

crossroad_camera_demo [OPTION]

Options:

-h Print a usage message.

-i Required. An input to process. The input must be a single image, a folder of images or anything that cv::VideoCapture can process.

-loop Optional. Enable reading the input in a loop.

-m "<path>" Required. Path to the Person/Vehicle/Bike Detection Crossroad model (.xml) file.

-m_pa "<path>" Optional. Path to the Person Attributes Recognition Crossroad model (.xml) file.

-m_reid "<path>" Optional. Path to the Person Reidentification Retail model (.xml) file.

-l "<absolute_path>" Optional. For MKLDNN (CPU)-targeted custom layers, if any. Absolute path to a shared library with the kernels impl.

Or

-c "<absolute_path>" Optional. For clDNN (GPU)-targeted custom kernels, if any. Absolute path to the xml file with the kernels desc.

-d "<device>" Optional. Specify the target device for Person/Vehicle/Bike Detection. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-d_pa "<device>" Optional. Specify the target device for Person Attributes Recognition. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-d_reid "<device>" Optional. Specify the target device for Person Reidentification Retail. The list of available devices is shown below. Default value is CPU. Use "-d HETERO:<comma-separated_devices_list>" format to specify HETERO plugin. The application looks for a suitable plugin for the specified device.

-pc Optional. Enables per-layer performance statistics.

-r Optional. Output Inference results as raw values.

-t Optional. Probability threshold for person/vehicle/bike crossroad detections.

-t_reid Optional. Cosine similarity threshold between two vectors for person reidentification.

-no_show Optional. No show processed video.

-auto_resize Optional. Enables resizable input with support of ROI crop & auto resize.

-u Optional. List of monitors to show initially.

-person_label Optional. The integer index of the objects' category corresponding to persons (as it is returned from the detection network, may vary from one network to another). The default value is 1.

[E:] [BSL] found 0 ioexpander device

Available target devices: CPU GNA
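The `-i` description differs between versions. Version 2021.2 accepts "anything that cv::VideoCapture can process", while version 2021.3 lists "a single image, a folder of images, video file or camera id". The sketch below shows one way such a value could be classified, assuming a purely numeric value means a camera id (as with `-i 0` earlier). It does not reproduce the demo's actual input handling. The extension list and function name are assumptions for illustration.

```python
import os

IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}  # assumed list, for illustration only


def classify_input(value: str) -> str:
    """Guess which of the 2021.3 input kinds a -i value refers to."""
    if value.isdigit():
        return "camera"  # e.g. -i 0
    if os.path.isdir(value):
        return "folder"
    if os.path.splitext(value)[1].lower() in IMAGE_EXTS:
        return "image"
    return "video"  # anything else is left to the video reader


for v in ["0", "~/Videos/car.mp4", "photo.PNG"]:
    print(v, classify_input(v))
```

URLs and other stream sources would also fall through to "video" here, which is closer to the 2021.2 wording.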

- To run the demo, use public or pre-trained models downloaded in advance with the OpenVINO™ Model Downloader.

- Example run (Ubuntu, Core™ i5-10210U)

mizutu@ubuntu-nuc10:~/omz_demos_build/intel64/Release$ ./crossroad_camera_demo -i ~/Videos/car.mp4 -m ~/model/intel/FP32/person-vehicle-bike-detection-crossroad-0078.xml

InferenceEngine: API version ......... 2.1

Build ........... 2021.2.0-1877-176bdf51370-releases/2021/2

[ INFO ] Parsing input parameters

[ INFO ] Person Attributes Recognition detection DISABLED