
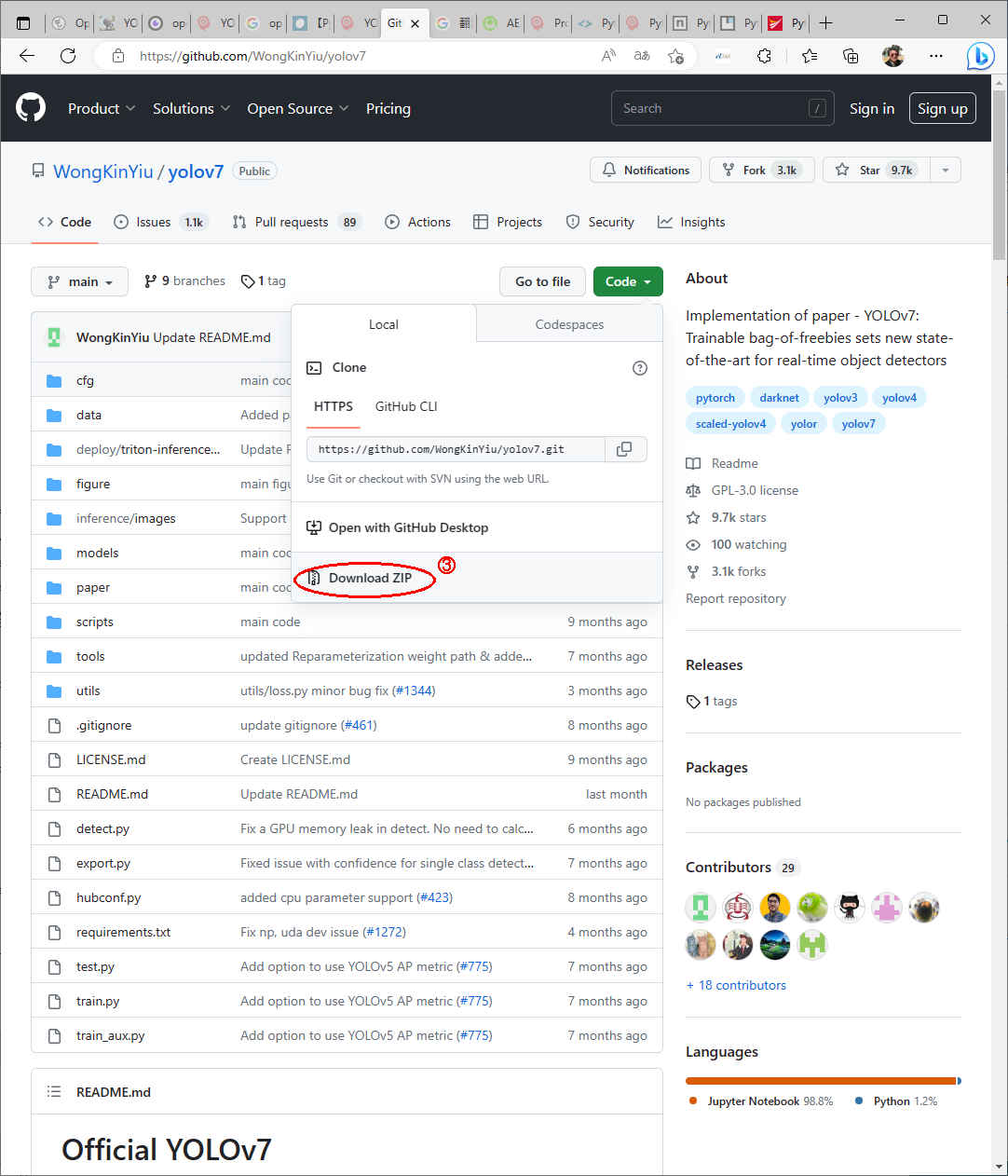

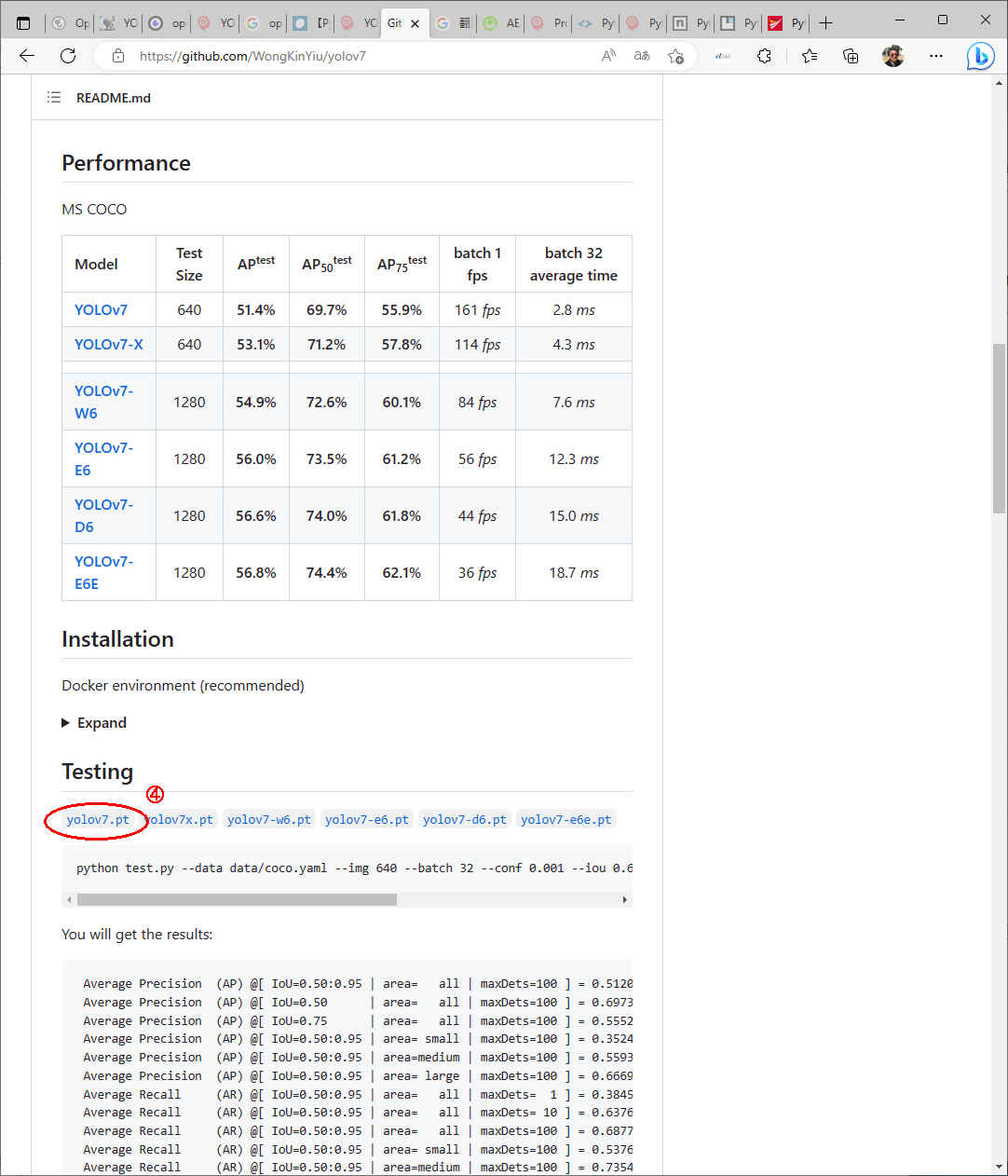

This page tries out YOLO v7, a newer version of the YOLO model for the object-recognition task.

Project home (c:/anaconda_win or ~/)

├─Images ← image data

├─model ← pretrained models

├─Videos ← video data

│ :

├─work ← project directory used this time

│ ├─openvino

│ └─yolov7

├─workspace_py37

│ └─mylib ← shared Python library (must be on the path)

└─workspace_py38 ← application projects under the Anaconda environment

  ├─ :

  └─stable_diffusion

| Command | Description |
|---|---|
| pip list | List installed package names and versions |
| pip freeze | List installed package names and versions (excluding package-management tools) |
| pip install <package-name> | Install a package |
| pip install <package-name>==<version> | Install a specific version (leaving the version empty lists the installable versions) |
| pip install -r requirements.txt | Install according to a package list file |
| pip uninstall <package-name> | Uninstall an installed package |
| pip uninstall <package-name> <package-name> ... | Uninstall several installed packages |

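| Command | Description |
|---|---|
| conda update -n base conda | Update conda |
| conda create -n py37 python=3.7 | Create a virtual environment (specifying the Python version) |
| conda env create -f env1.yaml | Create a virtual environment (from a YAML file) |
| conda create -n <new-env-name> --clone <base-env-name> | Clone a virtual environment |
| conda info -e | List the virtual environments that have been created |
| conda activate py37 | Switch to the given virtual environment |
| conda deactivate | Leave the current virtual environment |
| conda env remove -n py37 | Delete a virtual environment |
| conda remove -n py37 --all | Remove all packages from the environment, i.e. delete the environment itself |
| conda env export > env1.yaml | Export the configuration of the current virtual environment |
| conda list | Show the packages in the current virtual environment |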

(py38) PS C:\anaconda_win\work> conda info -e

(py38) PS C:\anaconda_win\workspace_py38> cd ..\work\

(py38) PS C:\anaconda_win\work> ls ← the project folder used this time

Directory: N:\anaconda_win\work

Mode LastWriteTime Length Name

---- ------------- ------ ----

d----- 2023/04/13 4:39 openvino

d----- 2023/04/13 4:39 yolov7

------ 2023/04/10 13:36 294 requirements.txt

(py38) PS C:\anaconda_win\work> conda info -e

# conda environments:

#

base C:\Users\(xxxxx)\anaconda3

:

py37 C:\Users\(xxxxx)\anaconda3\envs\py37

py38 * C:\Users\(xxxxx)\anaconda3\envs\py38 ← currently active virtual environment
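The listing above marks the active environment with `*`. As a rough sketch (not part of the original setup), the same text can be parsed in Python to pick out the active environment. `parse_conda_envs` and `CondaEnv` are hypothetical names, and the sample text simply mirrors the output shown above.

```python
from typing import List, NamedTuple


class CondaEnv(NamedTuple):
    name: str
    path: str
    active: bool


def parse_conda_envs(text: str) -> List[CondaEnv]:
    """Parse the plain-text output of `conda info -e` (hypothetical helper)."""
    envs = []
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comment lines and the ":" elision used on this page.
        if not line or line.startswith("#") or line == ":":
            continue
        name, _, rest = line.partition(" ")
        rest = rest.strip()
        active = rest.startswith("*")  # "*" marks the active environment
        if active:
            rest = rest[1:].strip()
        envs.append(CondaEnv(name, rest, active))
    return envs


SAMPLE = r"""
# conda environments:
#
base C:\Users\(xxxxx)\anaconda3
py37 C:\Users\(xxxxx)\anaconda3\envs\py37
py38 * C:\Users\(xxxxx)\anaconda3\envs\py38
"""

envs = parse_conda_envs(SAMPLE)
active = [e.name for e in envs if e.active]
print(active)
```

The sketch assumes every row starts with an environment name; rows for unnamed environments (path only) would need extra handling.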

(py38) PS C:\anaconda_win\work> conda create -n py38a python=3.8

Collecting package metadata (current_repodata.json): done

Solving environment: done

==> WARNING: A newer version of conda exists. <==

current version: 4.13.0

latest version: 23.3.1

Please update conda by running

$ conda update -n base -c defaults conda ← if this message appears, update Anaconda (conda) later

## Package Plan ##

environment location: C:\Users\izuts\anaconda3\envs\py38a

added / updated specs:

- python=3.8

The following packages will be downloaded:

package | build

---------------------------|-----------------

ca-certificates-2023.01.10 | haa95532_0 121 KB

certifi-2022.12.7 | py38haa95532_0 148 KB

libffi-3.4.2 | hd77b12b_6 109 KB

openssl-1.1.1t | h2bbff1b_0 5.5 MB

pip-23.0.1 | py38haa95532_0 2.7 MB

python-3.8.16 | h6244533_3 18.9 MB

setuptools-65.6.3 | py38haa95532_0 1.1 MB

sqlite-3.41.1 | h2bbff1b_0 897 KB

wheel-0.38.4 | py38haa95532_0 83 KB

------------------------------------------------------------

Total: 29.6 MB

The following NEW packages will be INSTALLED:

ca-certificates pkgs/main/win-64::ca-certificates-2023.01.10-haa95532_0

certifi pkgs/main/win-64::certifi-2022.12.7-py38haa95532_0

libffi pkgs/main/win-64::libffi-3.4.2-hd77b12b_6

openssl pkgs/main/win-64::openssl-1.1.1t-h2bbff1b_0

pip pkgs/main/win-64::pip-23.0.1-py38haa95532_0

python pkgs/main/win-64::python-3.8.16-h6244533_3

setuptools pkgs/main/win-64::setuptools-65.6.3-py38haa95532_0

sqlite pkgs/main/win-64::sqlite-3.41.1-h2bbff1b_0

vc pkgs/main/win-64::vc-14.2-h21ff451_1

vs2015_runtime pkgs/main/win-64::vs2015_runtime-14.27.29016-h5e58377_2

wheel pkgs/main/win-64::wheel-0.38.4-py38haa95532_0

wincertstore pkgs/main/win-64::wincertstore-0.2-py38haa95532_2

Proceed ([y]/n)?

Downloading and Extracting Packages

python-3.8.16 | 18.9 MB | #################################### | 100%

wheel-0.38.4 | 83 KB | #################################### | 100%

pip-23.0.1 | 2.7 MB | #################################### | 100%

libffi-3.4.2 | 109 KB | #################################### | 100%

sqlite-3.41.1 | 897 KB | #################################### | 100%

ca-certificates-2023 | 121 KB | #################################### | 100%

setuptools-65.6.3 | 1.1 MB | #################################### | 100%

certifi-2022.12.7 | 148 KB | #################################### | 100%

openssl-1.1.1t | 5.5 MB | #################################### | 100%

Preparing transaction: done

Verifying transaction: done

Executing transaction: done

#

# To activate this environment, use

#

# $ conda activate py38a

#

# To deactivate an active environment, use

#

# $ conda deactivate

(py38) PS C:\anaconda_win\work> conda activate py38a

(py38a) PS C:\anaconda_win\work> conda list

# packages in environment at C:\Users\izuts\anaconda3\envs\py38a:

#

# Name Version Build Channel

ca-certificates 2023.01.10 haa95532_0

certifi 2022.12.7 py38haa95532_0

libffi 3.4.2 hd77b12b_6

openssl 1.1.1t h2bbff1b_0

pip 23.0.1 py38haa95532_0

python 3.8.16 h6244533_3

setuptools 65.6.3 py38haa95532_0

sqlite 3.41.1 h2bbff1b_0

vc 14.2 h21ff451_1

vs2015_runtime 14.27.29016 h5e58377_2

wheel 0.38.4 py38haa95532_0

wincertstore 0.2 py38haa95532_2

(py38a) PS N:\anaconda_win\work> conda info -e

# conda environments:

#

base C:\Users\izuts\anaconda3

:

py37 C:\Users\izuts\anaconda3\envs\py37

py38 C:\Users\izuts\anaconda3\envs\py38

py38a * C:\Users\izuts\anaconda3\envs\py38a ← currently active virtual environment

● Set things up so that both "Stable Diffusion" and "YOLOv7" run on the OpenVINO™ runtime.

"requirements.txt"

# Stable Diffusion & YOLOv7_OpenVINO

openvino==2022.1.0

numpy==1.19.5

opencv-python==4.5.5.64

transformers==4.16.2

diffusers==0.2.4

tqdm==4.64.0

huggingface_hub==0.9.0

scipy==1.9.0

streamlit==1.12.0

watchdog==2.1.9

ftfy==6.1.1

PyMuPDF

torchvision

matplotlib

seaborn

onnx

googletrans==4.0.0-rc1
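To check afterwards which pins actually ended up in the environment, a small script along the following lines may help. It is only a sketch: `parse_pins` and `compare_with_installed` are hypothetical helpers, the parser handles just the plain `name==version` form used in this file, and the embedded text is a shortened excerpt of the file above.

```python
from importlib import metadata  # standard library since Python 3.8
from typing import Dict, Optional, Tuple


def parse_pins(text: str) -> Dict[str, Optional[str]]:
    """Map each package name to its pinned version (None when unpinned)."""
    pins = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if not line:
            continue
        name, sep, version = line.partition("==")
        pins[name.strip()] = version.strip() if sep else None
    return pins


def compare_with_installed(
    pins: Dict[str, Optional[str]]
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return {name: (pinned, installed)}; installed is None if missing."""
    report = {}
    for name, pinned in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        report[name] = (pinned, installed)
    return report


# Shortened excerpt of the requirements.txt shown above.
REQUIREMENTS = """\
# Stable Diffusion & YOLOv7_OpenVINO
openvino==2022.1.0
numpy==1.19.5
PyMuPDF
googletrans==4.0.0-rc1
"""

pins = parse_pins(REQUIREMENTS)
print(pins)
```

Note that pip normalizes version strings: the pin `4.0.0-rc1` shows up as `4.0.0rc1` in the install log, so a plain string comparison of the two columns can report a mismatch that is not real.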

(py38a) PS C:\anaconda_win\work> pip install -r requirements.txt

Collecting openvino==2022.1.0

Using cached openvino-2022.1.0-7019-cp38-cp38-win_amd64.whl (22.9 MB)

Collecting numpy==1.19.5

Using cached numpy-1.19.5-cp38-cp38-win_amd64.whl (13.3 MB)

Collecting opencv-python==4.5.5.64

Using cached opencv_python-4.5.5.64-cp36-abi3-win_amd64.whl (35.4 MB)

Collecting transformers==4.16.2

Using cached transformers-4.16.2-py3-none-any.whl (3.5 MB)

Collecting diffusers==0.2.4

Using cached diffusers-0.2.4-py3-none-any.whl (112 kB)

Collecting tqdm==4.64.0

Using cached tqdm-4.64.0-py2.py3-none-any.whl (78 kB)

Collecting huggingface_hub==0.9.0

Using cached huggingface_hub-0.9.0-py3-none-any.whl (120 kB)

Collecting scipy==1.9.0

Using cached scipy-1.9.0-cp38-cp38-win_amd64.whl (38.6 MB)

Collecting streamlit==1.12.0

Using cached streamlit-1.12.0-py2.py3-none-any.whl (9.1 MB)

Collecting watchdog==2.1.9

Using cached watchdog-2.1.9-py3-none-win_amd64.whl (78 kB)

Collecting ftfy==6.1.1

Using cached ftfy-6.1.1-py3-none-any.whl (53 kB)

Collecting PyMuPDF

Downloading PyMuPDF-1.21.1-cp38-cp38-win_amd64.whl (11.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 11.7/11.7 MB 31.2 MB/s eta 0:00:00

Collecting torchvision

Downloading torchvision-0.15.1-cp38-cp38-win_amd64.whl (1.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.2/1.2 MB 12.6 MB/s eta 0:00:00

Collecting matplotlib

Downloading matplotlib-3.7.1-cp38-cp38-win_amd64.whl (7.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 7.6/7.6 MB 27.2 MB/s eta 0:00:00

Collecting seaborn

Downloading seaborn-0.12.2-py3-none-any.whl (293 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 293.3/293.3 kB ? eta 0:00:00

Collecting onnx

Downloading onnx-1.13.1-cp38-cp38-win_amd64.whl (12.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 12.2/12.2 MB 34.4 MB/s eta 0:00:00

Collecting googletrans==4.0.0-rc1

Using cached googletrans-4.0.0rc1-py3-none-any.whl

Collecting pyyaml>=5.1

Using cached PyYAML-6.0-cp38-cp38-win_amd64.whl (155 kB)

Collecting filelock

Downloading filelock-3.11.0-py3-none-any.whl (10.0 kB)

Collecting tokenizers!=0.11.3,>=0.10.1

Downloading tokenizers-0.13.3-cp38-cp38-win_amd64.whl (3.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 3.5/3.5 MB 31.7 MB/s eta 0:00:00

Collecting requests

Downloading requests-2.28.2-py3-none-any.whl (62 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 62.8/62.8 kB ? eta 0:00:00

Collecting sacremoses

Using cached sacremoses-0.0.53-py3-none-any.whl

Collecting packaging>=20.0

Downloading packaging-23.1-py3-none-any.whl (48 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 48.9/48.9 kB 2.4 MB/s eta 0:00:00

Collecting regex!=2019.12.17

Downloading regex-2023.3.23-cp38-cp38-win_amd64.whl (267 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 267.9/267.9 kB ? eta 0:00:00

Collecting importlib-metadata

Downloading importlib_metadata-6.3.0-py3-none-any.whl (22 kB)

Collecting Pillow

Downloading Pillow-9.5.0-cp38-cp38-win_amd64.whl (2.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.5/2.5 MB 20.2 MB/s eta 0:00:00

Collecting torch>=1.4

Downloading torch-2.0.0-cp38-cp38-win_amd64.whl (172.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 172.3/172.3 MB 7.5 MB/s eta 0:00:00

Requirement already satisfied: colorama in c:\users\izuts\appdata\roaming\python\python38\site-packages (from tqdm==4.64.0->-r requirements.txt (line 7)) (0.4.3)

Collecting typing-extensions>=3.7.4.3

Downloading typing_extensions-4.5.0-py3-none-any.whl (27 kB)

Collecting protobuf<4,>=3.12

Using cached protobuf-3.20.3-cp38-cp38-win_amd64.whl (904 kB)

Collecting pympler>=0.9

Using cached Pympler-1.0.1-py3-none-any.whl (164 kB)

Collecting click>=7.0

Using cached click-8.1.3-py3-none-any.whl (96 kB)

Collecting pydeck>=0.1.dev5

Using cached pydeck-0.8.0-py2.py3-none-any.whl (4.7 MB)

Collecting blinker>=1.0.0

Downloading blinker-1.6.1-py3-none-any.whl (13 kB)

Collecting semver

Downloading semver-3.0.0-py3-none-any.whl (17 kB)

Collecting rich>=10.11.0

Downloading rich-13.3.4-py3-none-any.whl (238 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 238.7/238.7 kB 7.4 MB/s eta 0:00:00

Collecting pandas>=0.21.0

Downloading pandas-2.0.0-cp38-cp38-win_amd64.whl (11.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 11.3/11.3 MB 28.5 MB/s eta 0:00:00

Collecting tzlocal>=1.1

Downloading tzlocal-4.3-py3-none-any.whl (20 kB)

Collecting tornado>=5.0

Using cached tornado-6.2-cp37-abi3-win_amd64.whl (425 kB)

Collecting python-dateutil

Using cached python_dateutil-2.8.2-py2.py3-none-any.whl (247 kB)

Collecting cachetools>=4.0

Downloading cachetools-5.3.0-py3-none-any.whl (9.3 kB)

Collecting pyarrow>=4.0

Downloading pyarrow-11.0.0-cp38-cp38-win_amd64.whl (20.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 20.6/20.6 MB 26.1 MB/s eta 0:00:00

Collecting gitpython!=3.1.19

Downloading GitPython-3.1.31-py3-none-any.whl (184 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 184.3/184.3 kB 11.6 MB/s eta 0:00:00

Collecting validators>=0.2

Using cached validators-0.20.0-py3-none-any.whl

Collecting toml

Using cached toml-0.10.2-py2.py3-none-any.whl (16 kB)

Collecting altair>=3.2.0

Downloading altair-4.2.2-py3-none-any.whl (813 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 813.6/813.6 kB 25.1 MB/s eta 0:00:00

Collecting wcwidth>=0.2.5

Downloading wcwidth-0.2.6-py2.py3-none-any.whl (29 kB)

Collecting httpx==0.13.3

Using cached httpx-0.13.3-py3-none-any.whl (55 kB)

Collecting sniffio

Using cached sniffio-1.3.0-py3-none-any.whl (10 kB)

Collecting httpcore==0.9.*

Using cached httpcore-0.9.1-py3-none-any.whl (42 kB)

Collecting chardet==3.*

Using cached chardet-3.0.4-py2.py3-none-any.whl (133 kB)

Collecting rfc3986<2,>=1.3

Using cached rfc3986-1.5.0-py2.py3-none-any.whl (31 kB)

Collecting idna==2.*

Using cached idna-2.10-py2.py3-none-any.whl (58 kB)

Collecting hstspreload

Downloading hstspreload-2023.1.1-py3-none-any.whl (1.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.5/1.5 MB 32.6 MB/s eta 0:00:00

Requirement already satisfied: certifi in c:\users\izuts\anaconda3\envs\py38a\lib\site-packages (from httpx==0.13.3->googletrans==4.0.0-rc1->-r requirements.txt (line 18)) (2022.12.7)

Collecting h11<0.10,>=0.8

Using cached h11-0.9.0-py2.py3-none-any.whl (53 kB)

Collecting h2==3.*

Using cached h2-3.2.0-py2.py3-none-any.whl (65 kB)

Collecting hyperframe<6,>=5.2.0

Using cached hyperframe-5.2.0-py2.py3-none-any.whl (12 kB)

Collecting hpack<4,>=3.0

Using cached hpack-3.0.0-py2.py3-none-any.whl (38 kB)

Collecting sympy

Downloading sympy-1.11.1-py3-none-any.whl (6.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 6.5/6.5 MB 29.5 MB/s eta 0:00:00

Collecting jinja2

Using cached Jinja2-3.1.2-py3-none-any.whl (133 kB)

Collecting networkx

Downloading networkx-3.1-py3-none-any.whl (2.1 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.1/2.1 MB 21.9 MB/s eta 0:00:00

Collecting importlib-resources>=3.2.0

Downloading importlib_resources-5.12.0-py3-none-any.whl (36 kB)

Collecting kiwisolver>=1.0.1

Downloading kiwisolver-1.4.4-cp38-cp38-win_amd64.whl (55 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 55.4/55.4 kB 3.0 MB/s eta 0:00:00

Collecting contourpy>=1.0.1

Downloading contourpy-1.0.7-cp38-cp38-win_amd64.whl (162 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 163.0/163.0 kB ? eta 0:00:00

Collecting cycler>=0.10

Downloading cycler-0.11.0-py3-none-any.whl (6.4 kB)

Collecting fonttools>=4.22.0

Downloading fonttools-4.39.3-py3-none-any.whl (1.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.0/1.0 MB 62.4 MB/s eta 0:00:00

Collecting matplotlib

Downloading matplotlib-3.7.0-cp38-cp38-win_amd64.whl (7.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 7.7/7.7 MB 7.0 MB/s eta 0:00:00

Downloading matplotlib-3.6.3-cp38-cp38-win_amd64.whl (7.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 7.2/7.2 MB 32.9 MB/s eta 0:00:00

Collecting pyparsing>=2.2.1

Using cached pyparsing-3.0.9-py3-none-any.whl (98 kB)

Collecting entrypoints

Using cached entrypoints-0.4-py3-none-any.whl (5.3 kB)

Collecting toolz

Using cached toolz-0.12.0-py3-none-any.whl (55 kB)

Collecting jsonschema>=3.0

Downloading jsonschema-4.17.3-py3-none-any.whl (90 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 90.4/90.4 kB 1.7 MB/s eta 0:00:00

Collecting gitdb<5,>=4.0.1

Downloading gitdb-4.0.10-py3-none-any.whl (62 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 62.7/62.7 kB ? eta 0:00:00

Collecting zipp>=0.5

Downloading zipp-3.15.0-py3-none-any.whl (6.8 kB)

Collecting pandas>=0.21.0

Downloading pandas-1.5.3-cp38-cp38-win_amd64.whl (11.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 11.0/11.0 MB 23.4 MB/s eta 0:00:00

Downloading pandas-1.5.2-cp38-cp38-win_amd64.whl (11.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 11.0/11.0 MB 8.7 MB/s eta 0:00:00

Using cached pandas-1.5.1-cp38-cp38-win_amd64.whl (11.0 MB)

Using cached pandas-1.5.0-cp38-cp38-win_amd64.whl (11.0 MB)

Using cached pandas-1.4.4-cp38-cp38-win_amd64.whl (10.6 MB)

Collecting pytz>=2020.1

Downloading pytz-2023.3-py2.py3-none-any.whl (502 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 502.3/502.3 kB 32.8 MB/s eta 0:00:00

Requirement already satisfied: six>=1.5 in c:\users\izuts\appdata\roaming\python\python38\site-packages (from python-dateutil->streamlit==1.12.0->-r requirements.txt (line 10)) (1.14.0)

Collecting urllib3<1.27,>=1.21.1

Downloading urllib3-1.26.15-py2.py3-none-any.whl (140 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 140.9/140.9 kB 8.2 MB/s eta 0:00:00

Collecting charset-normalizer<4,>=2

Downloading charset_normalizer-3.1.0-cp38-cp38-win_amd64.whl (96 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 96.4/96.4 kB 5.4 MB/s eta 0:00:00

Collecting markdown-it-py<3.0.0,>=2.2.0

Downloading markdown_it_py-2.2.0-py3-none-any.whl (84 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 84.5/84.5 kB ? eta 0:00:00

Collecting pygments<3.0.0,>=2.13.0

Downloading Pygments-2.15.0-py3-none-any.whl (1.1 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.1/1.1 MB 24.2 MB/s eta 0:00:00

Collecting backports.zoneinfo

Using cached backports.zoneinfo-0.2.1-cp38-cp38-win_amd64.whl (38 kB)

Collecting tzdata

Downloading tzdata-2023.3-py2.py3-none-any.whl (341 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 341.8/341.8 kB 22.1 MB/s eta 0:00:00

Collecting pytz-deprecation-shim

Using cached pytz_deprecation_shim-0.1.0.post0-py2.py3-none-any.whl (15 kB)

Collecting decorator>=3.4.0

Using cached decorator-5.1.1-py3-none-any.whl (9.1 kB)

Collecting joblib

Using cached joblib-1.2.0-py3-none-any.whl (297 kB)

Collecting smmap<6,>=3.0.1

Using cached smmap-5.0.0-py3-none-any.whl (24 kB)

Collecting MarkupSafe>=2.0

Downloading MarkupSafe-2.1.2-cp38-cp38-win_amd64.whl (16 kB)

Collecting pkgutil-resolve-name>=1.3.10

Using cached pkgutil_resolve_name-1.3.10-py3-none-any.whl (4.7 kB)

Collecting pyrsistent!=0.17.0,!=0.17.1,!=0.17.2,>=0.14.0

Downloading pyrsistent-0.19.3-cp38-cp38-win_amd64.whl (62 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 62.7/62.7 kB 845.9 kB/s eta 0:00:00

Collecting attrs>=17.4.0

Downloading attrs-22.2.0-py3-none-any.whl (60 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 60.0/60.0 kB ? eta 0:00:00

Collecting mdurl~=0.1

Downloading mdurl-0.1.2-py3-none-any.whl (10.0 kB)

Collecting mpmath>=0.19

Downloading mpmath-1.3.0-py3-none-any.whl (536 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 536.2/536.2 kB 32.9 MB/s eta 0:00:00

Installing collected packages: wcwidth, tokenizers, rfc3986, pytz, mpmath, hyperframe, hpack, h11, chardet, zipp, watchdog, urllib3, tzdata, typing-extensions, tqdm, tornado, toolz, toml, sympy, sniffio, smmap, semver, regex, pyyaml, python-dateutil, pyrsistent, pyparsing, PyMuPDF, pympler, pygments, protobuf, pkgutil-resolve-name, Pillow, packaging, numpy, networkx, mdurl, MarkupSafe, kiwisolver, joblib, idna, hstspreload, h2, ftfy, fonttools, filelock, entrypoints, decorator, cycler, click, charset-normalizer, cachetools, backports.zoneinfo, attrs, validators, scipy, sacremoses, requests, pytz-deprecation-shim, pyarrow, pandas, openvino, opencv-python, onnx, markdown-it-py, jinja2, importlib-resources, importlib-metadata, httpcore, gitdb, contourpy, blinker, tzlocal, torch, rich, pydeck, matplotlib, jsonschema, huggingface_hub, httpx, gitpython, transformers, torchvision, seaborn, googletrans, diffusers, altair, streamlit

Successfully installed MarkupSafe-2.1.2 Pillow-9.5.0 PyMuPDF-1.21.1 altair-4.2.2 attrs-22.2.0 backports.zoneinfo-0.2.1 blinker-1.6.1 cachetools-5.3.0 chardet-3.0.4 charset-normalizer-3.1.0 click-8.1.3 contourpy-1.0.7 cycler-0.11.0 decorator-5.1.1 diffusers-0.2.4 entrypoints-0.4 filelock-3.11.0 fonttools-4.39.3 ftfy-6.1.1 gitdb-4.0.10 gitpython-3.1.31 googletrans-4.0.0rc1 h11-0.9.0 h2-3.2.0 hpack-3.0.0 hstspreload-2023.1.1 httpcore-0.9.1 httpx-0.13.3 huggingface_hub-0.9.0 hyperframe-5.2.0 idna-2.10 importlib-metadata-6.3.0 importlib-resources-5.12.0 jinja2-3.1.2 joblib-1.2.0 jsonschema-4.17.3 kiwisolver-1.4.4 markdown-it-py-2.2.0 matplotlib-3.6.3 mdurl-0.1.2 mpmath-1.3.0 networkx-3.1 numpy-1.19.5 onnx-1.13.1 opencv-python-4.5.5.64 openvino-2022.1.0 packaging-23.1 pandas-1.4.4 pkgutil-resolve-name-1.3.10 protobuf-3.20.3 pyarrow-11.0.0 pydeck-0.8.0 pygments-2.15.0 pympler-1.0.1 pyparsing-3.0.9 pyrsistent-0.19.3 python-dateutil-2.8.2 pytz-2023.3 pytz-deprecation-shim-0.1.0.post0 pyyaml-6.0 regex-2023.3.23 requests-2.28.2 rfc3986-1.5.0 rich-13.3.4 sacremoses-0.0.53 scipy-1.9.0 seaborn-0.12.2 semver-3.0.0 smmap-5.0.0 sniffio-1.3.0 streamlit-1.12.0 sympy-1.11.1 tokenizers-0.13.3 toml-0.10.2 toolz-0.12.0 torch-2.0.0 torchvision-0.15.1 tornado-6.2 tqdm-4.64.0 transformers-4.16.2 typing-extensions-4.5.0 tzdata-2023.3 tzlocal-4.3 urllib3-1.26.15 validators-0.20.0 watchdog-2.1.9 wcwidth-0.2.6 zipp-3.15.0

(py38a) PS C:\anaconda_win\work> pip list

| Package | Version |
|---|---|
| altair | 4.2.2 |
| astroid | 2.3.3 |
| attrs | 22.2.0 |
| backports.zoneinfo | 0.2.1 |
| blinker | 1.6.1 |
| cachetools | 5.3.0 |
| certifi | 2022.12.7 |
| chardet | 3.0.4 |
| charset-normalizer | 3.1.0 |
| click | 8.1.3 |
| colorama | 0.4.3 |
| contourpy | 1.0.7 |
| cycler | 0.11.0 |
| decorator | 5.1.1 |
| diffusers | 0.2.4 |
| entrypoints | 0.4 |
| filelock | 3.11.0 |
| fonttools | 4.39.3 |
| ftfy | 6.1.1 |
| gitdb | 4.0.10 |
| GitPython | 3.1.31 |
| googletrans | 4.0.0rc1 |
| h11 | 0.9.0 |
| h2 | 3.2.0 |
| hpack | 3.0.0 |
| hstspreload | 2023.1.1 |
| httpcore | 0.9.1 |
| httpx | 0.13.3 |
| huggingface-hub | 0.9.0 |
| hyperframe | 5.2.0 |
| idna | 2.10 |
| importlib-metadata | 6.3.0 |
| importlib-resources | 5.12.0 |
| isort | 4.3.21 |
| Jinja2 | 3.1.2 |
| joblib | 1.2.0 |
| jsonschema | 4.17.3 |
| kiwisolver | 1.4.4 |
| lazy-object-proxy | 1.4.3 |
| markdown-it-py | 2.2.0 |
| MarkupSafe | 2.1.2 |
| matplotlib | 3.6.3 |
| mccabe | 0.6.1 |
| mdurl | 0.1.2 |
| mpmath | 1.3.0 |
| networkx | 3.1 |
| numpy | 1.19.5 |
| onnx | 1.13.1 |
| opencv-python | 4.5.5.64 |
| openvino | 2022.1.0 |
| packaging | 23.1 |
| pandas | 1.4.4 |
| Pillow | 9.5.0 |
| pip | 23.0.1 |
| pkgutil_resolve_name | 1.3.10 |
| protobuf | 3.20.3 |
| pyarrow | 11.0.0 |
| pydeck | 0.8.0 |
| Pygments | 2.15.0 |
| pylint | 2.4.4 |
| Pympler | 1.0.1 |
| PyMuPDF | 1.21.1 |
| pyparsing | 3.0.9 |
| pyrsistent | 0.19.3 |
| python-dateutil | 2.8.2 |
| pytz | 2023.3 |
| pytz-deprecation-shim | 0.1.0.post0 |
| PyYAML | 6.0 |
| regex | 2023.3.23 |
| requests | 2.28.2 |
| rfc3986 | 1.5.0 |
| rich | 13.3.4 |
| sacremoses | 0.0.53 |
| scipy | 1.9.0 |
| seaborn | 0.12.2 |
| semver | 3.0.0 |
| setuptools | 65.6.3 |
| six | 1.14.0 |
| smmap | 5.0.0 |
| sniffio | 1.3.0 |
| streamlit | 1.12.0 |
| sympy | 1.11.1 |
| tokenizers | 0.13.3 |
| toml | 0.10.2 |
| toolz | 0.12.0 |
| torch | 2.0.0 |
| torchvision | 0.15.1 |
| tornado | 6.2 |
| tqdm | 4.64.0 |
| transformers | 4.16.2 |
| typing_extensions | 4.5.0 |
| tzdata | 2023.3 |
| tzlocal | 4.3 |
| urllib3 | 1.26.15 |
| validators | 0.20.0 |
| watchdog | 2.1.9 |
| wcwidth | 0.2.6 |
| wheel | 0.38.4 |
| wincertstore | 0.2 |
| wrapt | 1.11.2 |
| zipp | 3.15.0 |

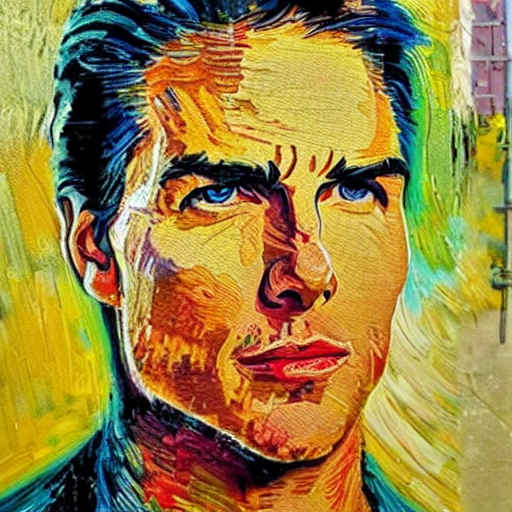

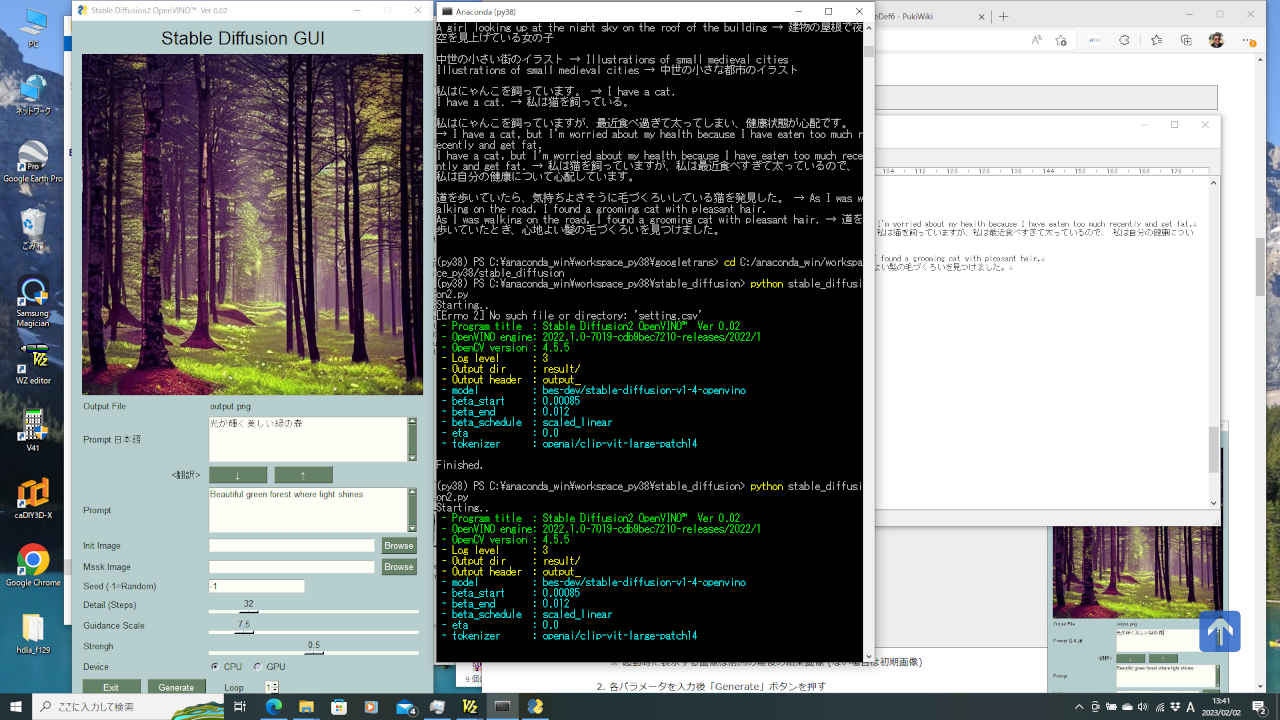

(py38a) PS C:\anaconda_win\work> cd ../workspace_py38/stable_diffusion

(py38a) PS C:\anaconda_win\workspace_py38\stable_diffusion> python demo.py --prompt "Street-art painting of Tom Cruise in style of Gogh, photorealism"

32it [04:03, 7.61s/it]

↑ -- computation time per iteration (seconds)

(py38a) PS C:\anaconda_win\workspace_py38\stable_diffusion> python stable_diffusion2.py

Starting..

- Program title : Stable Diffusion2 OpenVINO™ Ver 0.02

- OpenVINO engine: 2022.1.0-7019-cdb9bec7210-releases/2022/1

- OpenCV version : 4.5.5

- Log level : 3

- Output dir : result/

- Output header : output_

- model : bes-dev/stable-diffusion-v1-4-openvino

- beta_start : 0.00085

- beta_end : 0.012

- beta_schedule : scaled_linear

- eta : 0.0

- tokenizer : openai/clip-vit-large-patch14

Prompt: Beautiful green forest where light shines

(和訳): 光が輝く美しい緑の森

** start 0 ** 4029269746

32it [03:26, 6.46s/it]

-Output-: result/output_4029269746.png

** end ** 00:03:49

Finished.
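The progress lines in the two runs above (`32it [04:03, 7.61s/it]` and `32it [03:26, 6.46s/it]`) can be sanity-checked with simple arithmetic: elapsed time divided by the iteration count should land close to the reported seconds per iteration. The following is a minimal sketch; `parse_tqdm_summary` is a hypothetical helper and it assumes the `MM:SS` elapsed format seen in these logs.

```python
import re
from typing import Tuple

# Matches e.g. "32it [04:03, 7.61s/it]" (MM:SS elapsed format assumed).
_PATTERN = re.compile(r"(\d+)it \[(\d+):(\d+), ([\d.]+)s/it\]")


def parse_tqdm_summary(line: str) -> Tuple[int, int, float]:
    """Return (iterations, elapsed_seconds, reported_seconds_per_iteration)."""
    m = _PATTERN.search(line)
    if m is None:
        raise ValueError(f"not a progress summary: {line!r}")
    iterations = int(m.group(1))
    elapsed = int(m.group(2)) * 60 + int(m.group(3))
    return iterations, elapsed, float(m.group(4))


for line in ("32it [04:03, 7.61s/it]", "32it [03:26, 6.46s/it]"):
    n, elapsed, reported = parse_tqdm_summary(line)
    print(f"{n} iterations in {elapsed} s: "
          f"{elapsed / n:.2f} s/it recomputed, {reported} s/it reported")
```

Small differences between the recomputed and reported values are expected, because the displayed elapsed time is truncated to whole seconds.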

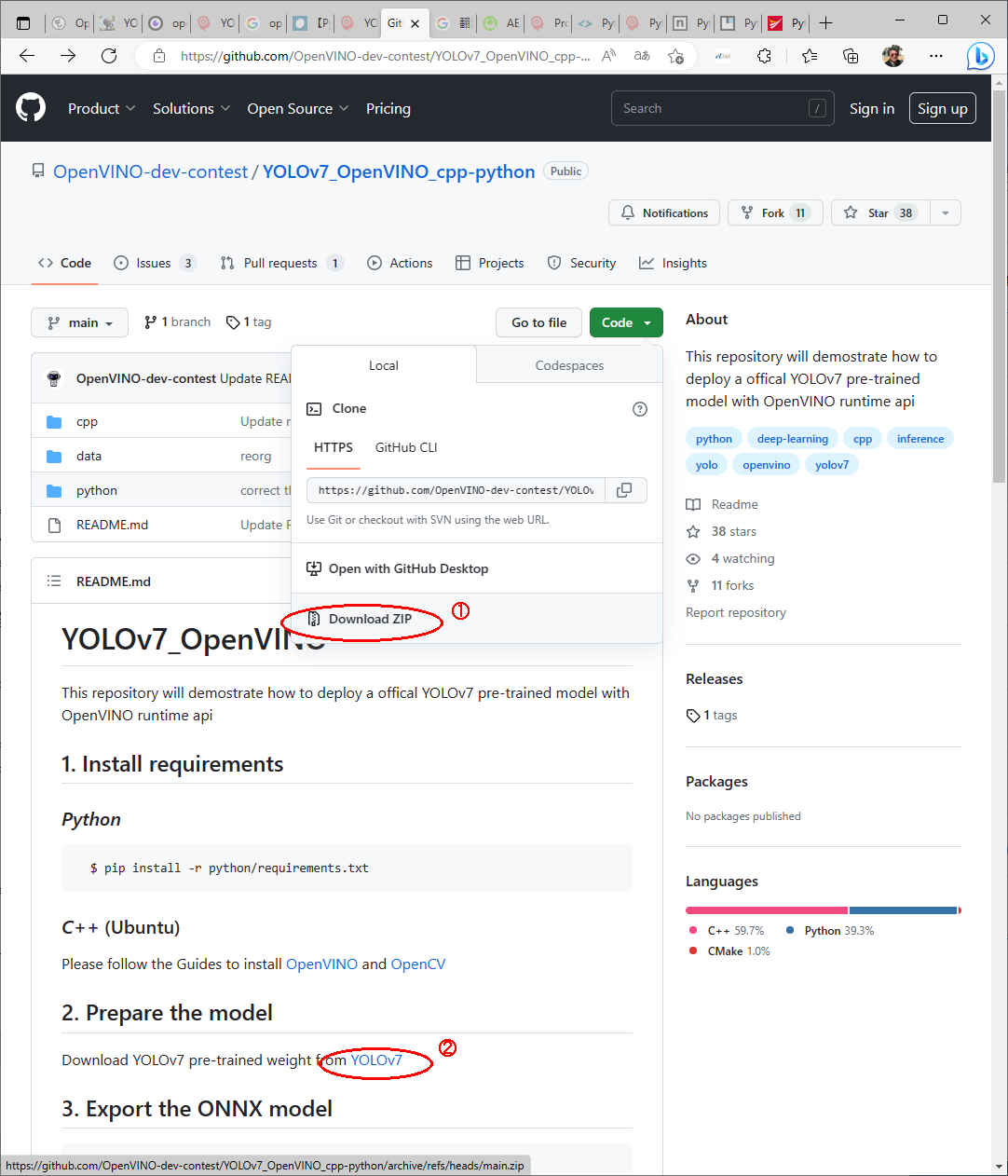

/work

├─openvino

├─yolov7

├─YOLOv7_OpenVINO_cpp-python-main

└─yolov7-main

├─ :

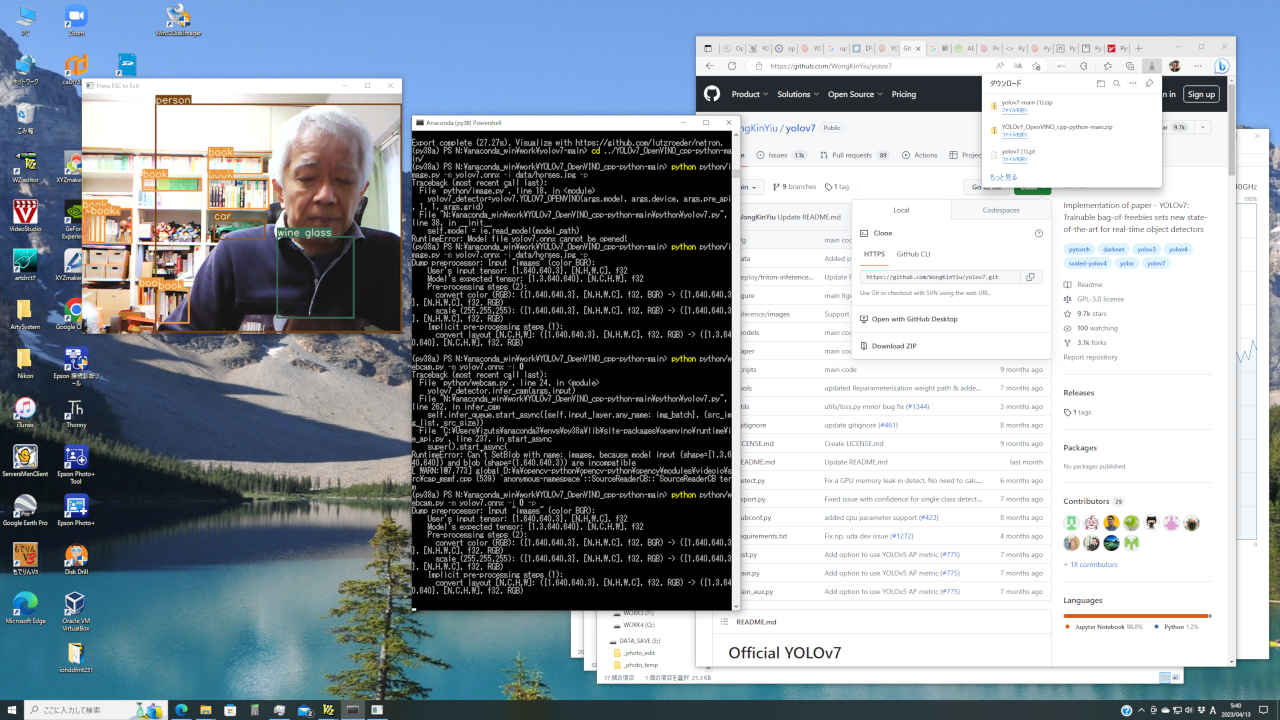

└─yolo7.pt(py38a) PS > cd /anaconda_win/work/yolov7-main (py38a) PS > python export.py --weights yolov7.pt

Import onnx_graphsurgeon failure: No module named 'onnx_graphsurgeon'

Namespace(batch_size=1, conf_thres=0.25, device='cpu', dynamic=False, dynamic_batch=False, end2end=False, fp16=False, grid=False, img_size=[640, 640], include_nms=False, int8=False, iou_thres=0.45, max_wh=None, simplify=False, topk_all=100, weights='yolov7.pt')

YOLOR 2023-4-13 torch 2.0.0+cpu CPU

Fusing layers...

RepConv.fuse_repvgg_block

RepConv.fuse_repvgg_block

RepConv.fuse_repvgg_block

Model Summary: 306 layers, 36905341 parameters, 36905341 gradients

Starting TorchScript export with torch 2.0.0+cpu...

TorchScript export success, saved as yolov7.torchscript.pt

CoreML export failure: No module named 'coremltools'

Starting TorchScript-Lite export with torch 2.0.0+cpu...

TorchScript-Lite export success, saved as yolov7.torchscript.ptl

Starting ONNX export with onnx 1.13.1...

N:\anaconda_win\work\yolov7-main\models\yolo.py:582: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if augment:

N:\anaconda_win\work\yolov7-main\models\yolo.py:614: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if profile:

N:\anaconda_win\work\yolov7-main\models\yolo.py:629: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if profile:

============== Diagnostic Run torch.onnx.export version 2.0.0+cpu ==============

verbose: False, log level: Level.ERROR

======================= 0 NONE 0 NOTE 0 WARNING 0 ERROR ========================

ONNX export success, saved as yolov7.onnx

Export complete (27.27s). Visualize with https://github.com/lutzroeder/netron.
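The "Model Summary" line in the export log reports 36905341 parameters. As a back-of-the-envelope estimate (an assumption, not a figure from the log), the weights alone take 4 bytes each in FP32 and 2 bytes each in FP16; graph structure and metadata in the exported file are ignored here. `weight_bytes` is a hypothetical helper.

```python
# Bytes per weight for the two precisions considered (assumption: dense storage).
BYTES_PER_DTYPE = {"fp32": 4, "fp16": 2}


def weight_bytes(num_params: int, dtype: str = "fp32") -> int:
    """Estimate the raw size of the weights in bytes."""
    return num_params * BYTES_PER_DTYPE[dtype]


NUM_PARAMS = 36_905_341  # taken from the "Model Summary" line of the export log

for dtype in ("fp32", "fp16"):
    size = weight_bytes(NUM_PARAMS, dtype)
    print(f"{dtype}: {size / 1e6:.1f} MB ({size / 2**20:.1f} MiB)")
```

This gives a rough idea of how large a file to expect for `yolov7.onnx` and of how much memory the weights occupy on the CPU.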

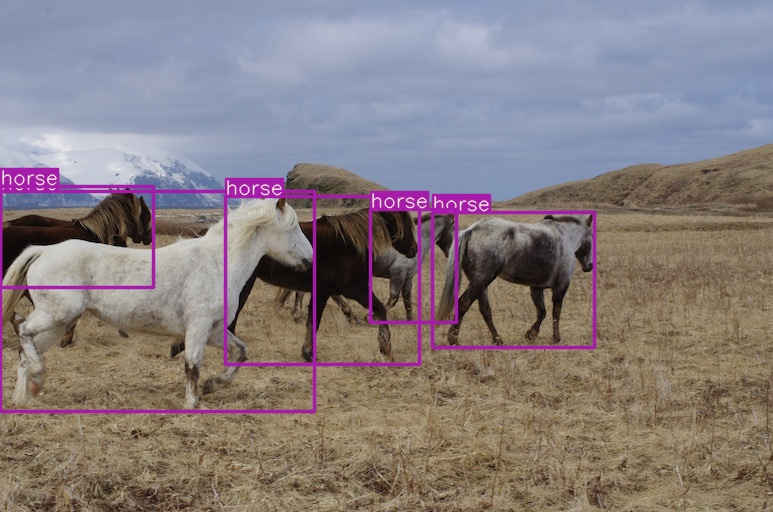

(py38a) PS > cd /anaconda_win/work/YOLOv7_OpenVINO_cpp-python-main/

(py38a) PS > python python/image.py -m yolov7.onnx -i data/horses.jpg -p

(py38a) PS C:\anaconda_win\work\YOLOv7_OpenVINO_cpp-python-main> python python/image.py -m yolov7.onnx -i data/horses.jpg -p

Dump preprocessor: Input "images" (color BGR):

User's input tensor: {1,640,640,3}, [N,H,W,C], f32

Model's expected tensor: {1,3,640,640}, [N,C,H,W], f32

Pre-processing steps (2):

convert color (RGB): ({1,640,640,3}, [N,H,W,C], f32, BGR) -> ({1,640,640,3}, [N,H,W,C], f32, RGB)

scale (255,255,255): ({1,640,640,3}, [N,H,W,C], f32, RGB) -> ({1,640,640,3}, [N,H,W,C], f32, RGB)

Implicit pre-processing steps (1):

convert layout [N,C,H,W]: ({1,640,640,3}, [N,H,W,C], f32, RGB) -> ({1,3,640,640}, [N,C,H,W], f32, RGB)
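The preprocessor dump lists three steps: a BGR to RGB colour conversion, a scale by 255, and a layout change from [N,H,W,C] to [N,C,H,W]. The sketch below reproduces those steps on a tiny nested-list "image" in pure Python, only to make the data movement visible. All function names are hypothetical, and in practice the same thing would normally be done with NumPy array operations or, as here, inside the OpenVINO preprocessing API.

```python
from typing import List

Pixel = List[float]
ImageHWC = List[List[Pixel]]          # indexed as [H][W][C]
ImageCHW = List[List[List[float]]]    # indexed as [C][H][W]


def bgr_to_rgb(img: ImageHWC) -> ImageHWC:
    """Reverse the channel order of every pixel (BGR -> RGB)."""
    return [[px[::-1] for px in row] for row in img]


def scale(img: ImageHWC, divisor: float = 255.0) -> ImageHWC:
    """Divide every value by `divisor`, mapping 0..255 to 0..1."""
    return [[[v / divisor for v in px] for px in row] for row in img]


def hwc_to_chw(img: ImageHWC) -> ImageCHW:
    """Move the channel axis to the front: [H][W][C] -> [C][H][W]."""
    channels = len(img[0][0])
    return [[[px[c] for px in row] for row in img] for c in range(channels)]


def preprocess(img_bgr: ImageHWC) -> List[ImageCHW]:
    """Apply the three steps in order and add a batch axis of size 1 (N=1)."""
    return [hwc_to_chw(scale(bgr_to_rgb(img_bgr)))]


# A tiny 1x2 "image" with BGR pixels: pure blue, then pure red.
tiny = [[[255.0, 0.0, 0.0], [0.0, 0.0, 255.0]]]
batch = preprocess(tiny)
print(batch)
```

The result is indexed as `batch[n][c][h][w]`, which matches the {1,3,640,640} layout the model expects, just at a much smaller size.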

(py38a) PS > cd /anaconda_win/work/YOLOv7_OpenVINO_cpp-python-main/

(py38a) PS > python python/webcam.py -m yolov7.onnx -i 0 -p

Dump preprocessor: Input "images" (color BGR):

User's input tensor: {1,640,640,3}, [N,H,W,C], f32

Model's expected tensor: {1,3,640,640}, [N,C,H,W], f32

Pre-processing steps (2):

convert color (RGB): ({1,640,640,3}, [N,H,W,C], f32, BGR) -> ({1,640,640,3}, [N,H,W,C], f32, RGB)

scale (255,255,255): ({1,640,640,3}, [N,H,W,C], f32, RGB) -> ({1,640,640,3}, [N,H,W,C], f32, RGB)

Implicit pre-processing steps (1):

convert layout [N,C,H,W]: ({1,640,640,3}, [N,H,W,C], f32, RGB) -> ({1,3,640,640}, [N,C,H,W], f32, RGB)

Traceback (most recent call last):

File "python/webcam.py", line 24, in <module>

yolov7_detector.infer_cam(args.input)

File "N:\anaconda_win\work\YOLOv7_OpenVINO_cpp-python-main\python\yolov7.py", line 262, in infer_cam

self.infer_queue.start_async({self.input_layer.any_name: img_batch}, (src_img_list, src_size))

File "C:\Users\izuts\anaconda3\envs\py38a\lib\site-packages\openvino\runtime\ie_api.py", line 241, in start_async

inputs, get_input_types(self[self.get_idle_request_id()])

KeyboardInterrupt

[ WARN:0@166.632] global D:\a\opencv-python\opencv-python\opencv\modules\videoio\src\cap_msmf.cpp (539) `anonymous-namespace'::SourceReaderCB::~SourceReaderCB terminating async callback

(py38a) PS > cd /anaconda_win/work/YOLOv7_OpenVINO_cpp-python-main/

(py38a) PS> python python/webcam.py -m yolov7.onnx -i 0

Traceback (most recent call last):

File "python/webcam.py", line 24, in <module>

yolov7_detector.infer_cam(args.input)

File "N:\anaconda_win\work\YOLOv7_OpenVINO_cpp-python-main\python\yolov7.py", line 262, in infer_cam

self.infer_queue.start_async({self.input_layer.any_name: img_batch}, (src_img_list, src_size))

File "C:\Users\izuts\anaconda3\envs\py38a\lib\site-packages\openvino\runtime\ie_api.py", line 237, in start_async

super().start_async(

RuntimeError: Can't SetBlob with name: images, because model input (shape={1,3,640,640}) and blob (shape=(1.640.640.3)) are incompatible

[ WARN:1@7.773] global D:\a\opencv-python\opencv-python\opencv\modules\videoio\src\cap_msmf.cpp (539) `anonymous-namespace'::SourceReaderCB::~SourceReaderCB term

(py38a) PS > cd /anaconda_win/work/yolov7

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py

| Option | Default | Meaning | Note |
|---|---|---|---|
| -h, --help | - | Show help | |
| -i, --input | ../../Videos/car_m.mp4 | Camera (cam) or a video / still-image file | ※1 |
| -m, --model | ../../model/yolov7.onnx | Pretrained model (IR/onnx) | |
| -d, --device | CPU | Target device (CPU/GPU/MYRIAD) | |
| --conf | 0.25 | Confidence threshold for class decisions (a smaller value yields more detected objects, but also more noise) | ※2 |
| --iou | 0.45 | IoU threshold for NMS (Intersection over Union is the fraction by which detection regions overlap; a larger value means a higher degree of overlap) | |
| -lb, --labels | coco.names_jp | Label file | |
| --log | 3 | Log output level (-1/0/1/2/3/4/5) | |
| -t, --title | y | Show the title (y/n) | |
| -s, --speed | y | Show the speed measurement (y/n) | |
| -o, --out | non | File name for saving the processed result | |
| -p, --pre_api | False | Preprocessing api. | |
| -bs, --batchsize | 1 | Batch size. | |
| -n, --nireq | 1 | number of infer request. | |
| -g, --grid | False | With grid in model. | |
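To make the `--conf` and `--iou` options more concrete, here is a minimal pure-Python sketch of IoU and greedy non-maximum suppression. It is an illustration under simplifying assumptions (class-agnostic, boxes given as `(x1, y1, x2, y2)`), not the implementation used by `object_detect_yolo7.py`; the function names and sample boxes are made up for the example.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: Sequence[Box], scores: Sequence[float],
        conf_thres: float = 0.25, iou_thres: float = 0.45) -> List[int]:
    """Greedy NMS; returns indices of kept boxes, highest score first."""
    # 1) Drop low-confidence boxes, 2) visit the rest in descending score order.
    order = sorted((i for i, s in enumerate(scores) if s >= conf_thres),
                   key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_thres for k in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30), (0, 0, 5, 5)]
scores = [0.9, 0.8, 0.7, 0.1]
print(nms(boxes, scores))                  # default thresholds
print(nms(boxes, scores, iou_thres=0.7))   # more overlap tolerated
```

Lowering `conf_thres` lets more candidate boxes through, and raising `iou_thres` lets more overlapping boxes survive, which matches the behaviour described in the table.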

(py38a) PS N:\anaconda_win\work\yolov7> python object_detect_yolo7.py -h

usage: object_detect_yolo7.py [-h] [-i INPUT_IMAGE] [-m MODEL] [-d DEVICE]

[-lb LABEL_FILE] [--log LOG] [-t TITLE]

[-s SPEED] [-o IMAGE_OUT] [-p] [-bs BATCHSIZE]

[-n NIREQ] [-g]

optional arguments:

-h, --help show this help message and exit

-i INPUT_IMAGE, --input INPUT_IMAGE

Absolute path to image file or cam for camera

stream.Default value is '../../Videos/car_m.mp4'

-m MODEL, --model MODEL

Model Path to an .xml file with a trained

model.Default value is ../../model/yolov7.onnx

-d DEVICE, --device DEVICE

Optional. Specify a target device to infer on. CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will

look for a suitable plugin for the device specified.

Default value is CPU

--conf CONF object confidence threshold

--iou IOU IOU threshold for NMS

-lb LABEL_FILE, --labels LABEL_FILE

Absolute path to labels file.Default value is

'coco.names_jp'

--log LOG Log level(-1/0/1/2/3/4/5) Default value is '3'

-t TITLE, --title TITLE

Program title flag.(y/n) Default value is 'y'

-s SPEED, --speed SPEED

Speed display flag.(y/n) Default calue is 'y'

-o IMAGE_OUT, --out IMAGE_OUT

Processed image file path. Default value is 'non'

-p, --pre_api Preprocessing api.

-bs BATCHSIZE, --batchsize BATCHSIZE

Batch size.

-n NIREQ, --nireq NIREQ

number of infer request.

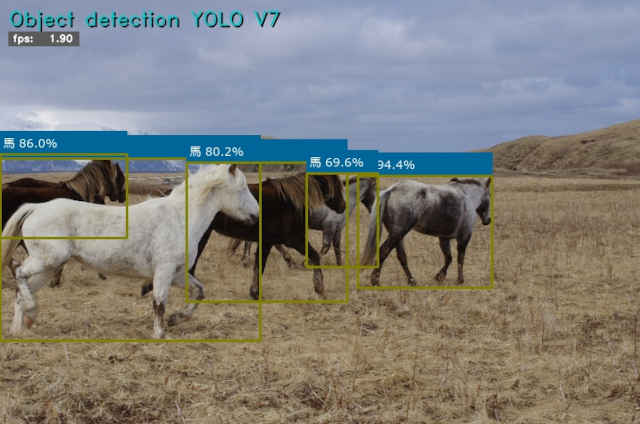

-g, --grid With grid in model.(py38a) PS C:\anaconda_win\work\YOLOv7_OpenVINO_cpp-python-main> cd ../yolov7 (py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py Starting.. - Program title : Object detection YOLO V7 - OpenCV version : 4.5.5 - OpenVINO engine: 2022.1.0-7019-cdb9bec7210-releases/2022/1 - Input image : ../../Videos/car_m.mp4 - Model : ../../model/yolov7.onnx - Device : CPU - Confidence thr : 0.1 - IOU threshold : 0.6 - Label : coco.names_jp - Log level : 3 - Title flag : y - Speed flag : y - Processed out : non - Preprocessing : False - Batch size : 1 - number of inf : 1 - With grid : False FPS average: 1.80 Finished.

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Videos/car1_m.mp4

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Videos/car2_m.mp4

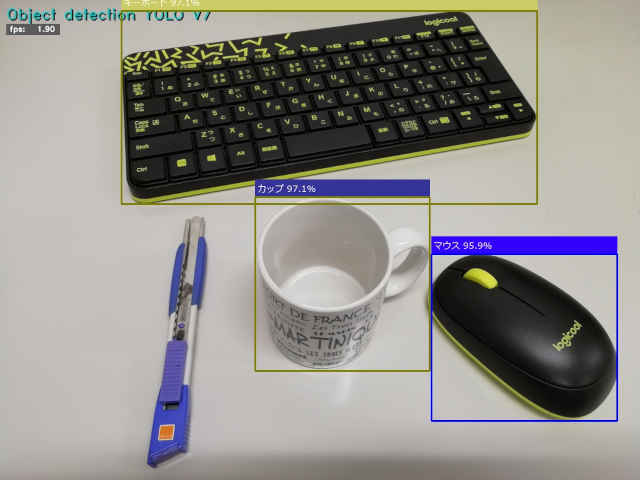

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Images/desk-image.jpg

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Images/car-person.jpg

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Images/bus.jpg

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i ../../Images/zidane.jpg

(py38a) PS C:\anaconda_win\work\yolov7> python object_detect_yolo7.py -i 0

※ Supported file extensions: .mp4 .bmp .png .jpg .jpeg .JPG .tif
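The examples above pass a video file, a still image, or a camera (`-i 0`) through the same `-i` option. One way such an argument could be sorted into those three cases is sketched below. This is a guess at the logic, not the script's actual code: `classify_input` is a hypothetical name, treating both `cam` and a bare number as a camera is an assumption, and the comparison is made case-insensitive, which accepts slightly more than the extension list above.

```python
import os

# Extensions taken from the list above, lower-cased.
VIDEO_EXTS = {".mp4"}
IMAGE_EXTS = {".bmp", ".png", ".jpg", ".jpeg", ".tif"}


def classify_input(arg: str) -> str:
    """Return 'camera', 'video', 'image' or 'unknown' for an -i argument."""
    if arg.lower() == "cam" or arg.isdigit():
        return "camera"  # assumption: "cam" or a device index such as "0"
    ext = os.path.splitext(arg)[1].lower()  # also covers upper-case ".JPG"
    if ext in VIDEO_EXTS:
        return "video"
    if ext in IMAGE_EXTS:
        return "image"
    return "unknown"


for arg in ("0", "../../Videos/car1_m.mp4", "../../Images/bus.jpg", "notes.txt"):
    print(arg, "->", classify_input(arg))
```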

# -*- coding: utf-8 -*-

##------------------------------------------

## Object detection YOLO V7 Ver 0.03

## with OpenVINO™ runtime

##

## 2023.04.12 Masahiro Izutsu

##------------------------------------------

## GitHub https://github.com/OpenVINO-dev-contest/YOLOv7_OpenVINO_cpp-python

##

## yolo7_ov.py

## ver 0.03 2023.07.18 Added conf and iou settings via command-line parameters

# Imports

import sys

import os

import yolov7s

import argparse

import cv2

import numpy as np

from openvino.inference_engine import get_version

import my_logging

import my_file

import my_color80

import my_process

import my_movedlg

import my_dialog

import my_winstat

from my_print import printG, printR, printY, printC, printB, printN

# Constants

from os.path import expanduser

MODEL_DEF = expanduser('../../model/yolov7.onnx')

INPUT_IMAGE_DEF = '../../Videos/car_m.mp4' # Default value of the input image parameter

LABEL_DEF = 'coco.names_jp'

# Parses arguments for the application

def parse_args():

parser = argparse.ArgumentParser()

parser.add_argument('-i', '--input', metavar = 'INPUT_IMAGE', type=str, default = INPUT_IMAGE_DEF,

help = 'Absolute path to image file or cam for camera stream.'

'Default value is \''+INPUT_IMAGE_DEF+'\'')

parser.add_argument('-m', '--model', type=str,

default = MODEL_DEF,

help = 'Model Path to an .xml file with a trained model.'

'Default value is '+MODEL_DEF)

parser.add_argument('-d', '--device', default='CPU', type = str,

help = 'Optional. Specify a target device to infer on. CPU, GPU, FPGA, HDDL or MYRIAD is '

'acceptable. The demo will look for a suitable plugin for the device specified. '

'Default value is CPU')

parser.add_argument('--conf', default = 0.25, type = float, help = 'object confidence threshold')

parser.add_argument('--iou', default = 0.45, type = float, help = 'IOU threshold for NMS')

parser.add_argument('-lb', '--labels', metavar = 'LABEL_FILE', type = str, default = LABEL_DEF,

help = 'Absolute path to labels file.'

'Default value is \''+LABEL_DEF+'\'')

parser.add_argument('--log', metavar = 'LOG', default = '3',

help = 'Log level(-1/0/1/2/3/4/5) Default value is \'3\'')

parser.add_argument('-t', '--title', metavar = 'TITLE',

default = 'y',

help = 'Program title flag.(y/n) Default value is \'y\'')

parser.add_argument('-s', '--speed', metavar = 'SPEED',

default = 'y',

help = 'Speed display flag.(y/n) Default value is \'y\'')

parser.add_argument('-o', '--out', metavar = 'IMAGE_OUT',

default = 'non',

help = 'Processed image file path. Default value is \'non\'')

parser.add_argument('-p', '--pre_api', required = False, action='store_true',

help = 'Preprocessing api.')

parser.add_argument('-bs', '--batchsize', required = False, default = 1, type=int,

help = 'Batch size.')

parser.add_argument('-n', '--nireq', required = False, default = 1, type=int,

help = 'number of infer request.')

parser.add_argument('-g', '--grid', required = False, action = 'store_true',

help = 'With grid in model.')

return parser

# Display basic model information

def display_info(title, input_str, model, device, conf, iou, labels, log, titleflg, speedflg, outpath, pre_api, batchsize, nireq, grid):

printG(f' - Program title : {title}')

printG(f' - OpenCV version : {cv2.__version__}')

printG(f' - OpenVINO engine: {get_version()}')

printY(f' - Input image : {input_str}')

printY(f' - Model : {model}')

printY(f' - Device : {device}')

printY(f' - Confidence thr : {conf}')

printY(f' - IOU threshold : {iou}')

printY(f' - Label : {labels}')

printY(f' - Log level : {log}')

printY(f' - Title flag : {titleflg}')

printY(f' - Speed flag : {speedflg}')

printY(f' - Processed out : {outpath}')

printB(f' - Preprocessing : {pre_api}')

printB(f' - Batch size : {batchsize}')

printB(f' - number of inf : {nireq}')

printB(f' - With grid : {grid}')

# ** main function **

def main():

# Process the input parameters

args = parse_args().parse_args()

input_str = args.input

log = args.log

logsel = int(log)

labels = args.labels

titleflg = args.title

speedflg = args.speed

model = args.model

device = args.device

outpath = args.out

conf = args.conf

iou = args.iou

pre_api = args.pre_api

batchsize = args.batchsize

nireq = args.nireq

grid = args.grid

# Instance of the file-handling class

my_file_treatment = my_file.FileTreatment(logsel)

# Preprocessing that depends on the input source

if input_str == '0':

input_str = my_dialog.select_image_movie_file(initdir = '../../Videos')

if len(input_str) <= 0:

printR('Program canceled.')

return 0

input_stream = input_str

if input_str.lower() == "cam" or input_str.lower() == "camera":

input_stream = 0

isstream = True

else:

filetype = my_file_treatment.is_pict(input_stream)

isstream = filetype == 'None'

if (filetype == 'NotFound'):

printR(f"'{input_stream}': Input file Not found.")

return 0

if isstream and outpath != 'non' and os.path.splitext(outpath)[1] != '.mp4':

printR(f"'{outpath}': Output file type is '.mp4'")

return 0

# Application log settings

module = os.path.basename(__file__)

module_name = os.path.splitext(module)[0]

logger = my_logging.get_module_logger_sel(module_name, logsel)

logger.info('Starting..')

# YOLO7 object

yolov7_detector=yolov7s.YOLOV7_OPENVINO(model, device, conf, iou, pre_api, batchsize, nireq, grid)

title = yolov7_detector.title

# Load the class labels

with open(labels, encoding='utf-8') as labels_file:

label_list = labels_file.read().splitlines()

yolov7_detector.set_classes(label_list)

# Display information

display_info(title, input_str, model, device, conf, iou, labels, log, titleflg, speedflg, outpath, pre_api, batchsize, nireq, grid)

logger.debug(f'Object list\n {label_list}')

# Function-local parameters

window_name = yolov7_detector.title + " (hit 'q' or 'esc' key to exit)"

# Prepare the input

if (isstream):

# Camera

cap = cv2.VideoCapture(input_stream)

success, img_frame = cap.read()

if success == False:

logger.error('camera read error !!')

loopflg = cap.isOpened()

img_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

img_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

else:

# Read the image file

img_frame = cv2.imread(input_stream)

if img_frame is None:

logger.error('unable to read the input.')

return 0

# Resize with the aspect ratio fixed (to a size that fits on the screen)

h, w = my_movedlg.get_display_size()

mx = h - 10 if h > w else w - 10

img_frame = my_process.frame_resize(img_frame, mx)

loopflg = True # Loop once

img_width = img_frame.shape[1]

img_height = img_frame.shape[0]

# Initial window setup

cv2.namedWindow(window_name, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)

cv2.moveWindow(window_name, 20, 0)

# Record the processing result, step 1

if (outpath != 'non'):

if (isstream):

fps = int(cap.get(cv2.CAP_PROP_FPS))

fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')

outvideo = cv2.VideoWriter(outpath, fourcc, fps, (img_width, img_height))

# YOLO7 Set callback function for postprocess

yolov7_detector.infer_queue.set_callback(yolov7_detector.postprocess)

# Start FPS measurement

yolov7_detector.fpsWithTick.get()

# Main loop

while (loopflg):

if img_frame is None:

logger.error('unable to read the input.')

return 0

src_img_list = []

src_img_list.append(img_frame)

img = yolov7_detector.letterbox(img_frame, yolov7_detector.img_size)

src_size = img_frame.shape[:2]

img = img.astype(dtype=np.float32)

if (yolov7_detector.pre_api == False):

img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB) # BGR to RGB

img /= 255.0

img = img.transpose(2, 0, 1) # NHWC to NCHW

input_image = np.expand_dims(img, 0)

# Do inference

yolov7_detector.infer_queue.start_async({yolov7_detector.input_layer.any_name: input_image}, (src_img_list, src_size))

# Update the window / display the image

yolov7_detector.infer_queue.wait_all()

cv2.imshow(window_name, img_frame)

# Record the processing result, step 2

if (outpath != 'non'):

if (isstream):

outvideo.write(img_frame)

else:

cv2.imwrite(outpath, img_frame)

# Exit when a key is pressed

breakflg = False

while(True):

key = cv2.waitKey(1) # (adjusts the camera access speed)

if key == 27 or key == 113: # 'esc' or 'q'

breakflg = True

break

if not my_winstat._is_visible(window_name): # 'Close' button

breakflg = True

break

if (isstream):

break

if ((breakflg == False) and isstream):

# Read the next frame

success, img_frame = cap.read()

if success == False: # End of video

break

loopflg = cap.isOpened()

else:

loopflg = False

# Cleanup

if (isstream):

cap.release()

# Record the processing result, step 3

if (outpath != 'non'):

if (isstream):

outvideo.release()

cv2.destroyAllWindows()

printC('FPS average: {:>10.2f}'.format(yolov7_detector.fpsWithTick.get_average()))

logger.info('Finished.\n')

if __name__ == "__main__":

sys.exit(main() or 0)
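
Each frame is letterboxed to the network input size before inference, and detected boxes are later mapped back onto the original frame. The sketch below reproduces only that arithmetic in plain Python, without resizing any pixels. It assumes a 640×640 input and a 1280×720 frame. `letterbox_params` and `to_original` are hypothetical helper names, not functions from the script.

```python
def letterbox_params(src_h, src_w, dst_h=640, dst_w=640):
    """Scale ratio, resized (w, h) and per-side padding for a letterbox resize."""
    r = min(dst_h / src_h, dst_w / src_w)              # scale ratio (new / old)
    new_w, new_h = int(round(src_w * r)), int(round(src_h * r))
    pad_w, pad_h = (dst_w - new_w) / 2, (dst_h - new_h) / 2   # padding split over two sides
    top, bottom = int(round(pad_h - 0.1)), int(round(pad_h + 0.1))
    left, right = int(round(pad_w - 0.1)), int(round(pad_w + 0.1))
    return r, (new_w, new_h), (top, bottom, left, right)


def _clip(value, upper):
    """Limit value to the range [0, upper]."""
    return max(0.0, min(float(upper), value))


def to_original(box, src_h, src_w, dst_h=640, dst_w=640):
    """Map an (x1, y1, x2, y2) box from letterboxed coordinates back to the source frame."""
    gain = min(dst_h / src_h, dst_w / src_w)
    pad_x = (dst_w - src_w * gain) / 2
    pad_y = (dst_h - src_h * gain) / 2
    x1, y1, x2, y2 = box
    x1, x2 = (x1 - pad_x) / gain, (x2 - pad_x) / gain
    y1, y2 = (y1 - pad_y) / gain, (y2 - pad_y) / gain
    return _clip(x1, src_w), _clip(y1, src_h), _clip(x2, src_w), _clip(y2, src_h)


r, resized, pads = letterbox_params(720, 1280)
print(r, resized, pads)                                # 0.5 (640, 360) (140, 140, 0, 0)
print(to_original((100, 200, 300, 400), 720, 1280))    # (200.0, 120.0, 600.0, 520.0)
```

A 1280×720 frame is scaled by 0.5 to 640×360 and padded with 140 rows above and below. Mapping a box back subtracts that padding and divides by the same ratio.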

# -*- coding: utf-8 -*-

##------------------------------------------

## YOLOV7_OPENVINO Ver 0.03

##

## 2023.04.12 Masahiro Izutsu

##------------------------------------------

## GitHub https://github.com/OpenVINO-dev-contest/YOLOv7_OpenVINO_cpp-python

##

## yolo7s.py

## ver 0.02 2023.05.19 Changed to take the number of classes from the label file

## ver 0.03 2023.07.18 Added conf and iou settings via command-line parameters

from openvino.runtime import Core

import cv2

import numpy as np

import random

import time

from openvino.preprocess import PrePostProcessor, ColorFormat

from openvino.runtime import Layout, AsyncInferQueue, PartialShape

import my_fps

import my_puttext

import my_color80

TEXT_COLOR = my_color80.CR_white

class YOLOV7_OPENVINO(object):

def __init__(self, model_path, device, conf, iou, pre_api, batchsize, nireq, grid):

self.title = 'Object detection YOLO V7'

# Specify the Japanese font

self.fontFace = my_puttext.get_font()

# Measurement values

self.fpsWithTick = my_fps.fpsWithTick()

self.speedflg = 'y'

# set the hyperparameters

self.classes = [

"person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat", "traffic light",

"fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",

"elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",

"skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove", "skateboard", "surfboard",

"tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",

"sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",

"potted plant", "bed", "dining table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard", "cell phone",

"microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear",

"hair drier", "toothbrush"

]

self.batchsize = batchsize

self.grid = grid

self.img_size = (640, 640)

self.conf_thres = conf

self.iou_thres = iou

self.class_num = len(self.classes)

self.colors = [[random.randint(0, 255) for _ in range(3)] for _ in self.classes]

self.stride = [8, 16, 32]

self.anchor_list = [[12, 16, 19, 36, 40, 28], [36, 75, 76, 55, 72, 146], [142, 110, 192, 243, 459, 401]]

self.anchor = np.array(self.anchor_list).astype(float).reshape(3, -1, 2)

area = self.img_size[0] * self.img_size[1]

self.size = [int(area / self.stride[0] ** 2), int(area / self.stride[1] ** 2), int(area / self.stride[2] ** 2)]

self.feature = [[int(j / self.stride[i]) for j in self.img_size] for i in range(3)]

ie = Core()

self.model = ie.read_model(model_path)

self.input_layer = self.model.input(0)

new_shape = PartialShape([self.batchsize, 3, self.img_size[0], self.img_size[1]])

self.model.reshape({self.input_layer.any_name: new_shape})

self.pre_api = pre_api

if (self.pre_api == True):

# Preprocessing API

ppp = PrePostProcessor(self.model)

# Declare section of desired application's input format

ppp.input().tensor() \

.set_layout(Layout("NHWC")) \

.set_color_format(ColorFormat.BGR)

# Here, it is assumed that the model has "NCHW" layout for input.

ppp.input().model().set_layout(Layout("NCHW"))

# Convert current color format (BGR) to RGB

ppp.input().preprocess() \

.convert_color(ColorFormat.RGB) \

.scale([255.0, 255.0, 255.0])

self.model = ppp.build()

print(f'Dump preprocessor: {ppp}')

self.compiled_model = ie.compile_model(model=self.model, device_name=device)

self.infer_queue = AsyncInferQueue(self.compiled_model, nireq)

def set_classes(self, classes):

self.classes = classes

self.class_num = len(self.classes)

def letterbox(self, img, new_shape=(640, 640), color=(114, 114, 114)):

# Resize and pad image while meeting stride-multiple constraints

shape = img.shape[:2] # current shape [height, width]

if isinstance(new_shape, int):

new_shape = (new_shape, new_shape)

# Scale ratio (new / old)

r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])

new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))

dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - \

new_unpad[1] # wh padding

# divide padding into 2 sides

dw /= 2

dh /= 2

# resize

if shape[::-1] != new_unpad:

img = cv2.resize(img, new_unpad, interpolation=cv2.INTER_LINEAR)

top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))

left, right = int(round(dw - 0.1)), int(round(dw + 0.1))

# add border

img = cv2.copyMakeBorder(img, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)

return img

def xywh2xyxy(self, x):

# Convert nx4 boxes from [x, y, w, h] to [x1, y1, x2, y2] where xy1=top-left, xy2=bottom-right

y = np.copy(x)

y[:, 0] = x[:, 0] - x[:, 2] / 2 # top left x

y[:, 1] = x[:, 1] - x[:, 3] / 2 # top left y

y[:, 2] = x[:, 0] + x[:, 2] / 2 # bottom right x

y[:, 3] = x[:, 1] + x[:, 3] / 2 # bottom right y

return y

def nms(self, prediction, conf_thres, iou_thres):

predictions = np.squeeze(prediction[0])

# Filter out object confidence scores below threshold

obj_conf = predictions[:, 4]

predictions = predictions[obj_conf > conf_thres]

obj_conf = obj_conf[obj_conf > conf_thres]

# Multiply class confidence with bounding box confidence

predictions[:, 5:] *= obj_conf[:, np.newaxis]

# Get the scores

scores = np.max(predictions[:, 5:], axis=1)

# Filter out the objects with a low score

valid_scores = scores > conf_thres

predictions = predictions[valid_scores]

scores = scores[valid_scores]

# Get the class with the highest confidence

class_ids = np.argmax(predictions[:, 5:], axis=1)

# Get bounding boxes for each object

boxes = self.xywh2xyxy(predictions[:, :4])

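# Apply non-maxima suppression to suppress weak, overlapping bounding boxes

# cv2.dnn.NMSBoxes expects [x, y, w, h] boxes (top-left corner plus size), not xyxy

boxes_xywh = boxes.copy()

boxes_xywh[:, 2:] -= boxes_xywh[:, :2] # x2, y2 -> w, h

indices = cv2.dnn.NMSBoxes(boxes_xywh.tolist(), scores.tolist(), conf_thres, iou_thres)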

return boxes[indices], scores[indices], class_ids[indices]

def clip_coords(self, boxes, img_shape):

# Clip xyxy bounding boxes to image shape (height, width); ndarray.clip returns a new array, so assign it back

boxes[:, 0] = boxes[:, 0].clip(0, img_shape[1]) # x1

boxes[:, 1] = boxes[:, 1].clip(0, img_shape[0]) # y1

boxes[:, 2] = boxes[:, 2].clip(0, img_shape[1]) # x2

boxes[:, 3] = boxes[:, 3].clip(0, img_shape[0]) # y2

def scale_coords(self, img1_shape, img0_shape, coords, ratio_pad=None):

# Rescale coords (xyxy) from img1_shape to img0_shape

# gain = old / new

if ratio_pad is None:

gain = min(img1_shape[0] / img0_shape[0],

img1_shape[1] / img0_shape[1])

padding = (img1_shape[1] - img0_shape[1] * gain) / \

2, (img1_shape[0] - img0_shape[0] * gain) / 2

else:

gain = ratio_pad[0][0]

padding = ratio_pad[1]

coords[:, [0, 2]] -= padding[0] # x padding

coords[:, [1, 3]] -= padding[1] # y padding

coords[:, :4] /= gain

self.clip_coords(coords, img0_shape)

def sigmoid(self, x):

return 1 / (1 + np.exp(-x))

def draw(self, img, boxinfo):

# Draw the title

if len(self.title) != 0:

cv2.putText(img, self.title, (10 + 2, 30 + 2), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(0, 0, 0), lineType=cv2.LINE_AA)

cv2.putText(img, self.title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

# Display FPS

fps = self.fpsWithTick.get()

st_fps = 'fps: {:>6.2f}'.format(fps)

if (self.speedflg == 'y'):

cv2.rectangle(img, (10, 38), (95, 55), (90, 90, 90), -1)

cv2.putText(img, st_fps, (15, 50), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.4, color=(255, 255, 255), lineType=cv2.LINE_AA)

for xyxy, conf, cls in boxinfo:

xmin = int(xyxy[0])

ymin = int(xyxy[1])

xmax = int(xyxy[2])

ymax = int(xyxy[3])

label = self.classes[int(cls)] + ' ' + str(round(conf * 100, 1)) + '%'

# Plots one bounding box on image img

color_boder = my_color80.get_boder_bgr80(int(cls))

color_back = my_color80.get_back_bgr80(int(cls))

x1, y1, x2, y2 = my_puttext.cv2_putText(img, label, (xmin + 4, ymin - 7), self.fontFace, 14, TEXT_COLOR, 0, True) # Get the drawing extent

cv2.rectangle(img, (xmin, ymin -28), (max(xmax, x2), ymin), color_back, -1)

cv2.rectangle(img, (xmin, ymin), (xmax, ymax), color_boder, 2)

my_puttext.cv2_putText(img, label, (xmin + 4, ymin - 7), self.fontFace, 14, TEXT_COLOR, 0)

def postprocess(self, infer_request, info):

src_img_list, src_size = info

for batch_id in range(self.batchsize):

if self.grid:

results = np.expand_dims(infer_request.get_output_tensor(0).data[batch_id], axis=0)

else:

output = []

# Get each feature map's output data

output.append(self.sigmoid(infer_request.get_output_tensor(0).data[batch_id].reshape(-1, self.size[0]*3, 5+self.class_num)))

output.append(self.sigmoid(infer_request.get_output_tensor(1).data[batch_id].reshape(-1, self.size[1]*3, 5+self.class_num)))

output.append(self.sigmoid(infer_request.get_output_tensor(2).data[batch_id].reshape(-1, self.size[2]*3, 5+self.class_num)))

# Postprocessing

grid = []

for _, f in enumerate(self.feature):

grid.append([[i, j] for j in range(f[0]) for i in range(f[1])])

result = []

for i in range(3):

src = output[i]

xy = src[..., 0:2] * 2. - 0.5

wh = (src[..., 2:4] * 2) ** 2

dst_xy = []

dst_wh = []

for j in range(3):

dst_xy.append((xy[:, j * self.size[i]:(j + 1) * self.size[i], :] + grid[i]) * self.stride[i])

dst_wh.append(wh[:, j * self.size[i]:(j + 1) *self.size[i], :] * self.anchor[i][j])

src[..., 0:2] = np.concatenate((dst_xy[0], dst_xy[1], dst_xy[2]), axis=1)

src[..., 2:4] = np.concatenate((dst_wh[0], dst_wh[1], dst_wh[2]), axis=1)

result.append(src)

results = np.concatenate(result, 1)

boxes, scores, class_ids = self.nms(results, self.conf_thres, self.iou_thres)

img_shape = self.img_size

self.scale_coords(img_shape, src_size, boxes)

# Draw the results

self.draw(src_img_list[batch_id], zip(boxes, scores, class_ids))

※ Modified so that it runs in the "py38a" virtual environment, then placed in this project.
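
When the model is used without the grid option (`-g` not set), `postprocess` decodes the raw head outputs itself. After a sigmoid, the box centre is `(2σ − 0.5 + cell) × stride` and the box size is `(2σ)² × anchor`. The sketch below applies that formula to a single grid cell in plain Python. `decode_cell` is a hypothetical helper for illustration; the stride `8` and anchor `(12, 16)` are the first entries of `self.stride` and `self.anchor_list` in the listing.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def decode_cell(raw, cell_x, cell_y, stride, anchor):
    """Decode one raw prediction (tx, ty, tw, th) into (cx, cy, w, h) in input pixels."""
    tx, ty, tw, th = (sigmoid(v) for v in raw)
    cx = (tx * 2.0 - 0.5 + cell_x) * stride   # centre x, offset from the cell's column
    cy = (ty * 2.0 - 0.5 + cell_y) * stride   # centre y, offset from the cell's row
    w = (tw * 2.0) ** 2 * anchor[0]           # width relative to the anchor width
    h = (th * 2.0) ** 2 * anchor[1]           # height relative to the anchor height
    return cx, cy, w, h


# Raw logits of 0 give sigmoid = 0.5: the box sits at the cell centre with the anchor's size.
print(decode_cell((0.0, 0.0, 0.0, 0.0), cell_x=3, cell_y=2, stride=8, anchor=(12, 16)))
# (28.0, 20.0, 12.0, 16.0)
```

Because `2σ − 0.5` lies between −0.5 and 1.5, a centre can move up to half a cell beyond its own cell, and `(2σ)²` limits each side to at most four times the anchor size.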

(py38a) PS C:\anaconda_win\work\YOLOv7_OpenVINO_cpp-python-main> cd ../openvino

(py38a) PS C:\anaconda_win\work\openvino> python object_detect_yolo5.py

Starting..

- Program title : Object detection YOLO V5

- OpenCV version : 4.5.5

- OpenVINO engine: 2022.1.0-7019-cdb9bec7210-releases/2022/1

- Input image : ../../Videos/car_m.mp4

- Model : ../../model/yolov5s.xml

- Device : CPU

- Label : coco.names_jp

- Threshold : 0.5

- Threshold (IOU): 0.4

- Log level : 3

- Program Title : y

- Speed flag : y

- Processed out : non

Loading model to the plugin

Starting inference...

FPS average: 2.70

Finished.

(py38a) PS C:\anaconda_win\work\openvino> python object_detect_yolo3.py

Starting..

- Program title : Object detection TinyYOLO V3

- OpenCV version : 4.5.5

- OpenVINO engine: 2022.1.0-7019-cdb9bec7210-releases/2022/1

- Input image : ../../Videos/car_m.mp4

- Model : ../../model/public/FP32/yolo-v3-tiny-tf.xml

- Device : CPU

- Labels File : coco.names_jp

- Threshold : 0.6

- Intersection Over Union: 0.25

- Log level : 3

- Program Title : y

- Speed flag : y

- Processed out : non

- Input Shape : [1, 3, 416, 416]

- Output Shapes :

- output #0 name : conv2d_12/Conv2D/YoloRegion

- output shape: [1, 255, 26, 26]

- output #1 name : conv2d_9/Conv2D/YoloRegion

- output shape: [1, 255, 13, 13]

FPS average: 17.30

Finished.

# -*- coding: utf-8 -*-

##------------------------------------------

## Object detection YOLO V5 Ver 0.05

## with OpenVINO™ toolkit

##

## 2022.11.01 Masahiro Izutsu

##------------------------------------------

## ver 0.00 2021.10.02 yolov5_demo_OV.py (original: yolov5_demo_OV2021.3.py)

## YOLO V5 OpenVINO demoprogram

## for https://github.com/ultralytics/yolov5/tree/v5.0

## GitHub https://github.com/violet17/yolov5_demo

##

## object_detect_yolo5.py (yolov5_demo_OV.py 2021.10.02)

## ver 0.05 2023.04.12 Added support for returning the class-label drawing extent

# Constants

title = 'Object detection YOLO V5'

from os.path import expanduser

MODEL_DEF = expanduser('../../model/yolov5s.xml')

INPUT_IMAGE_DEF = '../../Videos/car_m.mp4' # Default value of the input image parameter

LABEL_DEF = 'coco.names_jp'

# Load modules

from openvino.inference_engine import IECore

from openvino.inference_engine import get_version

# Imports

import sys

import cv2

import numpy as np

import os

import argparse

import my_logging

import my_puttext

import my_file

import my_movedlg

import my_dialog

import my_process

import my_winstat

import my_fps

import my_color80

from my_print import printG, printR, printY, printC, printB, printN

from math import exp as exp

# Adjust these thresholds

PROB_THRESHOLD = 0.5

IOU_THRESHOLD = 0.4

# Used for display

TEXT_COLOR = my_color80.CR_white

# Parses arguments for the application

def parse_args():

parser = argparse.ArgumentParser()

parser.add_argument('-i', '--input', metavar = 'INPUT_IMAGE', type=str, default = INPUT_IMAGE_DEF,

help = 'Absolute path to image file or cam for camera stream.'

'Default value is \''+INPUT_IMAGE_DEF+'\'')

parser.add_argument('-m', '--model', type=str,

default = MODEL_DEF,

help = 'Model Path to an .xml file with a trained model.'

'Default value is '+MODEL_DEF)

parser.add_argument('-d', '--device', default='CPU', type = str,

help = 'Optional. Specify a target device to infer on. CPU, GPU, FPGA, HDDL or MYRIAD is '

'acceptable. The demo will look for a suitable plugin for the device specified. '

'Default value is CPU')

parser.add_argument('-lb', '--labels', metavar = 'LABEL_FILE', type = str, default = LABEL_DEF,

help = 'Absolute path to labels file.'

'Default value is \''+LABEL_DEF+'\'')

parser.add_argument('--threshold', metavar = 'FLOAT', type = float, default = PROB_THRESHOLD,

help = 'Optional. Probability threshold for detections filtering'

'Default value is \''+ str(PROB_THRESHOLD) +'\'')

parser.add_argument('--iou', metavar = 'FLOAT', type = float, default = IOU_THRESHOLD,

help = 'Optional. Intersection over union threshold for overlapping detections filtering'

'Default value is \''+ str(IOU_THRESHOLD) +'\'')

parser.add_argument('--log', metavar = 'LOG', default = '3',

help = 'Log level(-1/0/1/2/3/4/5) Default value is \'3\'')

parser.add_argument('-t', '--title', metavar = 'TITLE',

default = 'y',

help = 'Program title flag.(y/n) Default value is \'y\'')

parser.add_argument('-s', '--speed', metavar = 'SPEED',

default = 'y',

help = 'Speed display flag.(y/n) Default value is \'y\'')

parser.add_argument('-o', '--out', metavar = 'IMAGE_OUT',

default = 'non',

help = 'Processed image file path. Default value is \'non\'')

return parser

# Display basic model information

def display_info(input_str, model, device, labels, threshold, iou_threshold, log, titleflg, speedflg, outpath):

printG(f' - Program title : {title}')

printG(f' - OpenCV version : {cv2.__version__}')

printG(f' - OpenVINO engine: {get_version()}')

printY(f' - Input image : {input_str}')

printY(f' - Model : {model}')

printY(f' - Device : {device}')

printY(f' - Label : {labels}')

printY(f' - Threshold : {threshold}')

printY(f' - Threshold (IOU): {iou_threshold}')

printY(f' - Log level : {log}')

printY(f' - Program Title : {titleflg}')

printY(f' - Speed flag : {speedflg}')

printY(f' - Processed out : {outpath}')

class YoloParams:

# ------------------------------------------- Extracting layer parameters ------------------------------------------

# Magic numbers are copied from yolo samples

def __init__(self, side):

self.num = 3 #if 'num' not in param else int(param['num'])

self.coords = 4 #if 'coords' not in param else int(param['coords'])

self.classes = 80 #if 'classes' not in param else int(param['classes'])

self.side = side

self.anchors = [10.0, 13.0, 16.0, 30.0, 33.0, 23.0, 30.0, 61.0, 62.0, 45.0, 59.0, 119.0, 116.0, 90.0, 156.0,

198.0,

373.0, 326.0] #if 'anchors' not in param else [float(a) for a in param['anchors'].split(',')]

#self.isYoloV3 = False

#if param.get('mask'):

# mask = [int(idx) for idx in param['mask'].split(',')]

# self.num = len(mask)

# maskedAnchors = []

# for idx in mask:

# maskedAnchors += [self.anchors[idx * 2], self.anchors[idx * 2 + 1]]

# self.anchors = maskedAnchors

# self.isYoloV3 = True # Weak way to determine but the only one.

def log_params(self, logger):

params_to_print = {'classes': self.classes, 'num': self.num, 'coords': self.coords, 'anchors': self.anchors}

[logger.debug(" {:8}: {}".format(param_name, param)) for param_name, param in params_to_print.items()]

def letterbox(img, size=(640, 640), color=(114, 114, 114), auto=True, scaleFill=False, scaleup=True):

# Resize image to a 32-pixel-multiple rectangle https://github.com/ultralytics/yolov3/issues/232

shape = img.shape[:2] # current shape [height, width]

w, h = size

# Scale ratio (new / old)

r = min(h / shape[0], w / shape[1])

if not scaleup: # only scale down, do not scale up (for better test mAP)

r = min(r, 1.0)

# Compute padding

ratio = r, r # width, height ratios

new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))

dw, dh = w - new_unpad[0], h - new_unpad[1] # wh padding

if auto: # minimum rectangle

dw, dh = np.mod(dw, 64), np.mod(dh, 64) # wh padding

elif scaleFill: # stretch

dw, dh = 0.0, 0.0

new_unpad = (w, h)

ratio = w / shape[1], h / shape[0] # width, height ratios

dw /= 2 # divide padding into 2 sides

dh /= 2

if shape[::-1] != new_unpad: # resize

img = cv2.resize(img, new_unpad, interpolation=cv2.INTER_LINEAR)

top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))

left, right = int(round(dw - 0.1)), int(round(dw + 0.1))

img = cv2.copyMakeBorder(img, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color) # add border

top2, bottom2, left2, right2 = 0, 0, 0, 0

if img.shape[0] != h:

top2 = (h - img.shape[0])//2

bottom2 = top2

img = cv2.copyMakeBorder(img, top2, bottom2, left2, right2, cv2.BORDER_CONSTANT, value=color) # add border

elif img.shape[1] != w:

left2 = (w - img.shape[1])//2

right2 = left2

img = cv2.copyMakeBorder(img, top2, bottom2, left2, right2, cv2.BORDER_CONSTANT, value=color) # add border

return img

def scale_bbox(x, y, height, width, class_id, confidence, im_h, im_w, resized_im_h=640, resized_im_w=640):

gain = min(resized_im_w / im_w, resized_im_h / im_h) # gain = old / new

pad = (resized_im_w - im_w * gain) / 2, (resized_im_h - im_h * gain) / 2 # wh padding

x = int((x - pad[0])/gain)

y = int((y - pad[1])/gain)

w = int(width/gain)

h = int(height/gain)

xmin = max(0, int(x - w / 2))

ymin = max(0, int(y - h / 2))

xmax = min(im_w, int(xmin + w))

ymax = min(im_h, int(ymin + h))

# Method item() used here to convert NumPy types to native types for compatibility with functions, which don't

# support Numpy types (e.g., cv2.rectangle doesn't support int64 in color parameter)

return dict(xmin=xmin, xmax=xmax, ymin=ymin, ymax=ymax, class_id=class_id.item(), confidence=confidence.item())

def entry_index(side, coord, classes, location, entry):

side_power_2 = side ** 2

n = location // side_power_2

loc = location % side_power_2

return int(side_power_2 * (n * (coord + classes + 1) + entry) + loc)

def parse_yolo_region(blob, resized_image_shape, original_im_shape, params, threshold):

# ------------------------------------------ Validating output parameters ------------------------------------------

out_blob_n, out_blob_c, out_blob_h, out_blob_w = blob.shape

predictions = 1.0/(1.0+np.exp(-blob))

assert out_blob_w == out_blob_h, "Invalid size of output blob. It should be in NCHW layout and height should " \

"be equal to width. Current height = {}, current width = {}" \

"".format(out_blob_h, out_blob_w)

# ------------------------------------------ Extracting layer parameters -------------------------------------------

orig_im_h, orig_im_w = original_im_shape

resized_image_h, resized_image_w = resized_image_shape

objects = list()

side_square = params.side * params.side

# ------------------------------------------- Parsing YOLO Region output -------------------------------------------

bbox_size = int(out_blob_c/params.num) #4+1+num_classes

for row, col, n in np.ndindex(params.side, params.side, params.num):

bbox = predictions[0, n*bbox_size:(n+1)*bbox_size, row, col]

x, y, width, height, object_probability = bbox[:5]

class_probabilities = bbox[5:]

if object_probability < threshold:

continue

x = (2*x - 0.5 + col)*(resized_image_w/out_blob_w)

y = (2*y - 0.5 + row)*(resized_image_h/out_blob_h)

if int(resized_image_w/out_blob_w) == 8 and int(resized_image_h/out_blob_h) == 8: # 80x80

idx = 0

elif int(resized_image_w/out_blob_w) == 16 and int(resized_image_h/out_blob_h) == 16: # 40x40

idx = 1

elif int(resized_image_w/out_blob_w) == 32 and int(resized_image_h/out_blob_h) == 32: # 20x20

idx = 2

width = (2*width)**2* params.anchors[idx * 6 + 2 * n]

height = (2*height)**2 * params.anchors[idx * 6 + 2 * n + 1]

class_id = np.argmax(class_probabilities)

confidence = object_probability

objects.append(scale_bbox(x=x, y=y, height=height, width=width, class_id=class_id, confidence=confidence,

im_h=orig_im_h, im_w=orig_im_w, resized_im_h=resized_image_h, resized_im_w=resized_image_w))

return objects

def intersection_over_union(box_1, box_2):

width_of_overlap_area = min(box_1['xmax'], box_2['xmax']) - max(box_1['xmin'], box_2['xmin'])

height_of_overlap_area = min(box_1['ymax'], box_2['ymax']) - max(box_1['ymin'], box_2['ymin'])

if width_of_overlap_area < 0 or height_of_overlap_area < 0:

area_of_overlap = 0

else:

area_of_overlap = width_of_overlap_area * height_of_overlap_area

box_1_area = (box_1['ymax'] - box_1['ymin']) * (box_1['xmax'] - box_1['xmin'])

box_2_area = (box_2['ymax'] - box_2['ymin']) * (box_2['xmax'] - box_2['xmin'])

area_of_union = box_1_area + box_2_area - area_of_overlap

if area_of_union == 0:

return 0

return area_of_overlap / area_of_union

# ** main function **

def main():

# Specify the Japanese font

fontFace = my_puttext.get_font()

# Process the input parameters

ARGS = parse_args().parse_args()

input_str = ARGS.input

log = ARGS.log

logsel = int(log)

labels = ARGS.labels

titleflg = ARGS.title

speedflg = ARGS.speed

model = ARGS.model

threshold = ARGS.threshold

iou_threshold = ARGS.iou

device = ARGS.device

outpath = ARGS.out

# Instance of the file-handling class

my_file_treatment = my_file.FileTreatment(logsel)

# Preprocessing that depends on the input source

if input_str == '0':

input_str = my_dialog.select_image_movie_file(initdir = '../../Videos')

if len(input_str) <= 0:

printR('Program canceled.')

return 0

input_stream = input_str

if input_str.lower() == "cam" or input_str.lower() == "camera":

input_stream = 0

isstream = True

else:

filetype = my_file_treatment.is_pict(input_stream)

isstream = filetype == 'None'

if (filetype == 'NotFound'):

printR(f"'{input_stream}': Input file Not found.")

return 0

if isstream and outpath != 'non' and os.path.splitext(outpath)[1] != '.mp4':

printR(f"'{outpath}': Output file type is '.mp4'")

return 0

# Application log settings

module = os.path.basename(__file__)

module_name = os.path.splitext(module)[0]

logger = my_logging.get_module_logger_sel(module_name, logsel)

logger.info('Starting..')

# Load the class labels

with open(labels, encoding='utf-8') as labels_file:

label_list = labels_file.read().splitlines()

# Display information

display_info(input_str, model, device, labels, threshold, iou_threshold, log, titleflg, speedflg, outpath)

logger.debug(f'Object list\n {label_list}')

## 1. Plugin initialization for specified device and load extensions library if specified

logger.debug("Creating Inference Engine...")

ie = IECore()

## 2. Reading the IR generated by the Model Optimizer (.xml and .bin files)

logger.debug(f"Loading network:\n\t{model}")

net = ie.read_network(model=model)

## 3. Load CPU extension for support specific layer

## 4. Preparing inputs

logger.debug("Preparing inputs")

input_blob = next(iter(net.input_info))

# Default batch_size is 1

net.batch_size = 1

# Read and pre-process input images

in_n, in_c, in_h, in_w = net.input_info[input_blob].input_data.shape

# Function-local parameters

window_name = title + " (hit 'q' or 'esc' key to exit)"

# Prepare the input

if (isstream):

# Camera

cap = cv2.VideoCapture(input_stream)

success, img_frame = cap.read()

if success == False:

logger.error('camera read error !!')

loopflg = cap.isOpened()

img_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

img_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

else:

# Read the image file

img_frame = cv2.imread(input_stream)

if img_frame is None:

logger.error('unable to read the input.')

return 0

# Resize with a fixed aspect ratio so the image fits on the screen

h, w = my_movedlg.get_display_size()

mx = h - 10 if h > w else w - 10

img_frame = my_process.frame_resize(img_frame, mx)

loopflg = True # run the loop once

img_width = img_frame.shape[1]

img_height = img_frame.shape[0]

# Initialize the window

cv2.namedWindow(window_name, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)

cv2.moveWindow(window_name, 20, 0)

# Record the results, step 1

if (outpath != 'non'):

if (isstream):

fps = int(cap.get(cv2.CAP_PROP_FPS))

fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')

outvideo = cv2.VideoWriter(outpath, fourcc, fps, (img_width, img_height))

## 5. Loading model to the plugin

logger.info("Loading model to the plugin")

exec_net = ie.load_network(network=net, num_requests=2, device_name=device)

cur_request_id = 0

render_time = 0

parsing_time = 0

## 6. Doing inference

logger.info("Starting inference...")

# Initialize the measurements

fpsWithTick = my_fps.fpsWithTick()

frame_count = 0

fps_total = 0

fpsWithTick.get() # start FPS measurement

# Main loop

while (loopflg):

if img_frame is None:

logger.error('unable to read the input.')

return 0

frame = img_frame

in_frame = letterbox(frame, (in_w, in_h))

in_frame0 = in_frame

# resize input_frame to network size

in_frame = in_frame.transpose((2, 0, 1)) # Change data layout from HWC to CHW

in_frame = in_frame.reshape((in_n, in_c, in_h, in_w))

# Start inference

req_handle = exec_net.start_async(request_id=0, inputs={input_blob: in_frame})

status = req_handle.wait()

# Collecting object detection results

objects = list()

if exec_net.requests[cur_request_id].wait(-1) == 0:

output = exec_net.requests[cur_request_id].output_blobs

for layer_name, out_blob in output.items():

layer_params = YoloParams(side=out_blob.buffer.shape[2])

logger.debug("Layer {} parameters: ".format(layer_name)) # log the layer name

layer_params.log_params(logger)

objects += parse_yolo_region(out_blob.buffer, in_frame.shape[2:], frame.shape[:-1], layer_params, threshold)

# Filtering overlapping boxes with respect to the --iou_threshold CLI parameter

objects = sorted(objects, key=lambda obj : obj['confidence'], reverse=True)

for i in range(len(objects)):

if objects[i]['confidence'] == 0:

continue

for j in range(i + 1, len(objects)):

if intersection_over_union(objects[i], objects[j]) > iou_threshold:

objects[j]['confidence'] = 0

# Drawing objects with respect to the --prob_threshold CLI parameter

objects = [obj for obj in objects if obj['confidence'] >= threshold]

if (titleflg == 'y'):

logger.debug("\nDetected boxes for batch {}:".format(1))

logger.debug(" Class ID | Confidence | XMIN | YMIN | XMAX | YMAX | COLOR ")

origin_im_size = frame.shape[:-1]

for obj in objects:

# Validation bbox of detected object

if obj['xmax'] > origin_im_size[1] or obj['ymax'] > origin_im_size[0] or obj['xmin'] < 0 or obj['ymin'] < 0:

continue

# Choose colors per object class

BOX_COLOR = my_color80.get_boder_bgr80(obj['class_id'])

LABEL_BG_COLOR = my_color80.get_back_bgr80(obj['class_id'])

# Use the label only when class_id is a valid index (len must be strictly greater than class_id)
det_label = label_list[obj['class_id']] if label_list and len(label_list) > obj['class_id'] else str(obj['class_id'])

if (titleflg == 'y'):

logger.debug(

"{:^9} | {:10f} | {:4} | {:4} | {:4} | {:4} | {} ".format(det_label, obj['confidence'], obj['xmin'],

obj['ymin'], obj['xmax'], obj['ymax'],

BOX_COLOR))

lablstr = det_label + ' ' + str(round(obj['confidence'] * 100, 1)) + ' %'

x1, y1, x2, y2 = my_puttext.cv2_putText(frame, lablstr, (obj['xmin'] + 4, obj['ymin'] - 7), fontFace, 14, TEXT_COLOR, 0, True) # get the label drawing extent

cv2.rectangle(frame, (obj['xmin'], obj['ymin']-26), (max(obj['xmax'], x2), obj['ymin']), LABEL_BG_COLOR, -1)

cv2.rectangle(frame, (obj['xmin'], obj['ymin']), (obj['xmax'], obj['ymax']), BOX_COLOR, 2)

my_puttext.cv2_putText(frame, lablstr, (obj['xmin'] + 4, obj['ymin'] - 7), fontFace, 14, TEXT_COLOR, 0)

# Compute FPS

fps = fpsWithTick.get()

st_fps = 'fps: {:>6.2f}'.format(fps)

if (speedflg == 'y'):

cv2.rectangle(frame, (10, 38), (95, 55), (90, 90, 90), -1)

cv2.putText(frame, st_fps, (15, 50), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.4, color=(255, 255, 255), lineType=cv2.LINE_AA)

# Draw the title

if (titleflg == 'y'):

cv2.putText(frame, title, (10 + 2, 30 + 2), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(0, 0, 0), lineType=cv2.LINE_AA)

cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

# Update the window / show the image

cv2.imshow(window_name, frame)

# Record the results, step 2

if (outpath != 'non'):

if (isstream):

outvideo.write(frame)

else:

cv2.imwrite(outpath, frame)

# Exit on 'esc' or 'q', or when the window is closed

breakflg = False

while(True):

key = cv2.waitKey(1) # (throttles camera access)

if key == 27 or key == 113: # 'esc' or 'q'

breakflg = True

break

if not my_winstat._is_visible(window_name): # 'Close' button

breakflg = True

break

if (isstream):

break

if ((breakflg == False) and isstream):

# Read the next frame

success, img_frame = cap.read()

if success == False: # end of video

break

loopflg = cap.isOpened()

else:

loopflg = False

# Cleanup

if (isstream):

cap.release()

# Record the results, step 3

if (outpath != 'non'):

if (isstream):

outvideo.release()

cv2.destroyAllWindows()

del net

del exec_net

printC('FPS average: {:>10.2f}'.format(fpsWithTick.get_average()))

logger.info('Finished.\n')

if __name__ == '__main__':

sys.exit(main() or 0)
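The overlap filtering in the main loop above is a greedy non-maximum suppression: detections are sorted by confidence, every lower-ranked box whose IoU with a kept box exceeds `iou_threshold` has its confidence zeroed, and the list is then filtered by `threshold`. The following is a minimal standalone sketch of that step, not the script's own code. The body of `intersection_over_union` is an assumption (the script defines its own version outside this listing), and `suppress_overlaps` and the sample `detections` are hypothetical names used only for illustration.

```python
def intersection_over_union(box_1, box_2):
    """IoU of two boxes given as dicts with xmin, ymin, xmax, ymax (assumed implementation)."""
    width = min(box_1['xmax'], box_2['xmax']) - max(box_1['xmin'], box_2['xmin'])
    height = min(box_1['ymax'], box_2['ymax']) - max(box_1['ymin'], box_2['ymin'])
    if width <= 0 or height <= 0:
        return 0.0  # the boxes do not overlap
    overlap = width * height
    area_1 = (box_1['xmax'] - box_1['xmin']) * (box_1['ymax'] - box_1['ymin'])
    area_2 = (box_2['xmax'] - box_2['xmin']) * (box_2['ymax'] - box_2['ymin'])
    union = area_1 + area_2 - overlap
    return overlap / union if union > 0 else 0.0


def suppress_overlaps(objects, iou_threshold, threshold):
    """Hypothetical helper: greedy suppression in the same order as the main loop."""
    # Copy each dict so the caller's detections are not modified.
    ranked = [dict(obj) for obj in
              sorted(objects, key=lambda obj: obj['confidence'], reverse=True)]
    for i in range(len(ranked)):
        if ranked[i]['confidence'] == 0:
            continue  # already suppressed by a higher-ranked box
        for j in range(i + 1, len(ranked)):
            if intersection_over_union(ranked[i], ranked[j]) > iou_threshold:
                ranked[j]['confidence'] = 0
    return [obj for obj in ranked if obj['confidence'] >= threshold]


# Made-up sample data: two heavily overlapping boxes and one separate box.
detections = [
    {'xmin': 1, 'ymin': 1, 'xmax': 11, 'ymax': 11, 'confidence': 0.8, 'class_id': 0},
    {'xmin': 0, 'ymin': 0, 'xmax': 10, 'ymax': 10, 'confidence': 0.9, 'class_id': 0},
    {'xmin': 50, 'ymin': 50, 'xmax': 60, 'ymax': 60, 'confidence': 0.7, 'class_id': 1},
]
kept = suppress_overlaps(detections, iou_threshold=0.25, threshold=0.60)
print([(obj['class_id'], obj['confidence']) for obj in kept])
```

As in the script, the comparison ignores `class_id`, so a box can suppress an overlapping box of a different class. Comparing only boxes of the same class would be a possible variation.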

# -*- coding: utf-8 -*-

##------------------------------------------

## Object detection Ver 0.06

## with OpenVINO™ toolkit

##

## model: yolo-v3-tiny-tf

##

## 2022.10.30 Masahiro Izutsu

##------------------------------------------

## ver 0.00 2021.02.24

## ver 0.01 2021.03.25 device parameter

## ver 0.02 2021.06.23 fps display

## ver 0.03 2022.01.04 linux/windows

## object_detect_yolo3_3.py (object_detect_yolo3_2.py ver 0.03 2022.01.04)

## ver 0.05 2022.11.26 object has no attribute 'outputs'

## object_detect_yolo3.py (object_detect_yolo3_3.py ver 0.05 2022.11.26)

## ver 0.06 2023.04.12 added output of the class label drawing extent

# Constants

title = 'Object detection TinyYOLO V3'

from os.path import expanduser

MODEL_DEF_DETECT = expanduser('../../model/public/FP32/yolo-v3-tiny-tf.xml')

INPUT_IMAGE_DEF = '../../Videos/car_m.mp4' # default value of the input image parameter

LABEL_DEF = 'coco.names_jp'

# Load the OpenVINO modules

from openvino.inference_engine import IECore

from openvino.inference_engine import get_version

# Imports

import sys

import cv2

import numpy as np

import os

import argparse

import my_logging

import my_puttext

import my_file

import my_movedlg

import my_dialog

import my_process

import my_winstat

import my_fps

import my_color80

from my_print import printG, printR, printY, printC, printB, printN

# Adjust these thresholds

DETECTION_THRESHOLD = 0.60

IOU_THRESHOLD = 0.25

# Tiny yolo anchor box values

anchors = [10,14, 23,27, 37,58, 81,82, 135,169, 344,319]

# Used for display

TEXT_COLOR = my_color80.CR_white # white text

# Parses arguments for the application

def parse_args():

parser = argparse.ArgumentParser()

parser.add_argument('-i', '--input', metavar = 'INPUT_IMAGE', type=str, default = INPUT_IMAGE_DEF,

help = 'Absolute path to image file or cam for camera stream. '

'Default value is \''+INPUT_IMAGE_DEF+'\'')

parser.add_argument('--ir', metavar = 'IR_File', type=str,

default = MODEL_DEF_DETECT,

help = 'Absolute path to the neural network IR xml file. '

'Default value is '+MODEL_DEF_DETECT)

parser.add_argument('-d', '--device', default='CPU', type = str,

help = 'Optional. Specify a target device to infer on. CPU, GPU, FPGA, HDDL or MYRIAD is '

'acceptable. The demo will look for a suitable plugin for the device specified. '

'Default value is CPU')

parser.add_argument('-lb', '--labels', metavar = 'LABEL_FILE', type = str, default = LABEL_DEF,

help = 'Absolute path to labels file. '

'Default value is \''+LABEL_DEF+'\'')

parser.add_argument('--threshold', metavar = 'FLOAT', type = float, default = DETECTION_THRESHOLD,

help = 'Threshold for detection. '

'Default value is \''+ str(DETECTION_THRESHOLD) +'\'')

parser.add_argument('--iou', metavar = 'FLOAT', type = float, default = IOU_THRESHOLD,

help = 'Intersection Over Union. '

'Default value is \''+ str(IOU_THRESHOLD) +'\'')

parser.add_argument('--log', metavar = 'LOG', default = '3',

help = 'Log level(-1/0/1/2/3/4/5) Default value is \'3\'')

parser.add_argument('-t', '--title', metavar = 'TITLE',

default = 'y',