
Implementing "YOLO V7" on Google Colaboratory and training it on custom data

cd /content/drive/MyDrive

- Output:

/content/drive/MyDrive

Note: command to check the current directory:

!pwd
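The `cd` into `/content/drive/MyDrive` only works once Google Drive is mounted in the Colab runtime, a step this page does not show. A minimal sketch of that step follows, assuming the standard `google.colab.drive.mount` helper and the default mount point `/content/drive`. The `mount_drive` wrapper is a hypothetical name introduced here; outside Colab it simply returns `False`.

```python
def mount_drive(mount_point="/content/drive"):
    """Mount Google Drive when running inside Colab; return False elsewhere."""
    try:
        # google.colab is only importable inside a Colab runtime.
        from google.colab import drive
    except ImportError:
        return False
    # Opens the Drive authorization prompt the first time it is called.
    drive.mount(mount_point)
    return True


if __name__ == "__main__":
    print("Drive mounted:", mount_drive())
```

After a successful mount, the `cd` and `!pwd` cells above should report `/content/drive/MyDrive`.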

!git clone https://github.com/WongKinYiu/yolov7

- On success, a directory named "yolov7" is created on Google Drive.

Cloning into 'yolov7'...

remote: Enumerating objects: 1154, done.

remote: Counting objects: 100% (15/15), done.

remote: Compressing objects: 100% (8/8), done.

remote: Total 1154 (delta 8), reused 13 (delta 7), pack-reused 1139

Receiving objects: 100% (1154/1154), 70.42 MiB | 17.19 MiB/s, done.

Resolving deltas: 100% (494/494), done.

Updating files: 100% (104/104), done.

cd yolov7

!pip install -r requirements.txt

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Requirement already satisfied: matplotlib>=3.2.2 in /usr/local/lib/python3.10/dist-packages (from -r requirements.txt (line 4)) (3.7.1)

Requirement already satisfied: numpy<1.24.0,>=1.18.5 in /usr/local/lib/python3.10/dist-packages (from -r requirements.txt (line 5)) (1.22.4)

Requirement already satisfied: opencv-python>=4.1.1 in /usr/local/lib/python3.10/dist-packages (from -r requirements.txt (line 6)) (4.7.0.72)

Requirement already satisfied: Pillow>=7.1.2 in /usr/local/lib/python3.10/dist-packages (from -r requirements.txt (line 7)) (8.4.0)

:

:

Requirement already satisfied: mpmath>=0.19 in /usr/local/lib/python3.10/dist-packages (from sympy->torch!=1.12.0,>=1.7.0->-r requirements.txt (line 11)) (1.3.0)

Requirement already satisfied: pyasn1<0.6.0,>=0.4.6 in /usr/local/lib/python3.10/dist-packages (from pyasn1-modules>=0.2.1->google-auth<3,>=1.6.3->tensorboard>=2.4.1->-r requirements.txt (line 17)) (0.5.0)

Requirement already satisfied: oauthlib>=3.0.0 in /usr/local/lib/python3.10/dist-packages (from requests-oauthlib>=0.7.0->google-auth-oauthlib<1.1,>=0.5->tensorboard>=2.4.1->-r requirements.txt (line 17)) (3.2.2)

Installing collected packages: jedi, thop

Successfully installed jedi-0.18.2 thop-0.1.1.post2209072238
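Most requirements were already satisfied by the Colab image, so it can be useful to record which versions are actually in use. The following is a minimal sketch using only the standard library; `installed_version` and the `PACKAGES` list are hypothetical names chosen here, with the package names taken from the log above.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Package names as they appear in the pip output above.
PACKAGES = ["torch", "numpy", "opencv-python", "thop"]

if __name__ == "__main__":
    for name in PACKAGES:
        print(f"{name}: {installed_version(name) or 'not installed'}")
```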

!wget https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7-e6e.pt

--2023-05-09 06:26:35-- https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7-e6e.pt

Resolving github.com (github.com)... 140.82.112.4

Connecting to github.com (github.com)|140.82.112.4|:443... connected.

HTTP request sent, awaiting response... 302 Found

Location: https://objects.githubusercontent.com/github-production-release-asset-2e65be/511187726/5b2a5641-54d0-4dd0-a210-42bdc38235fa?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=AKIAIWNJYAX4CSVEH53A%2F20230509%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230509T062636Z&X-Amz-Expires=300&X-Amz-Signature=f16c20498fe9b50826031b6664935a988b9685cf5b4ec3f224b6e7ea5fd331f0&X-Amz-SignedHeaders=host&actor_id=0&key_id=0&repo_id=511187726&response-content-disposition=attachment%3B%20filename%3Dyolov7-e6e.pt&response-content-type=application%2Foctet-stream [following]

--2023-05-09 06:26:36-- https://objects.githubusercontent.com/github-production-release-asset-2e65be/511187726/5b2a5641-54d0-4dd0-a210-42bdc38235fa?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=AKIAIWNJYAX4CSVEH53A%2F20230509%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230509T062636Z&X-Amz-Expires=300&X-Amz-Signature=f16c20498fe9b50826031b6664935a988b9685cf5b4ec3f224b6e7ea5fd331f0&X-Amz-SignedHeaders=host&actor_id=0&key_id=0&repo_id=511187726&response-content-disposition=attachment%3B%20filename%3Dyolov7-e6e.pt&response-content-type=application%2Foctet-stream

Resolving objects.githubusercontent.com (objects.githubusercontent.com)... 185.199.108.133, 185.199.109.133, 185.199.110.133, ...

Connecting to objects.githubusercontent.com (objects.githubusercontent.com)|185.199.108.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 304425133 (290M) [application/octet-stream]

Saving to: ‘yolov7-e6e.pt’

yolov7-e6e.pt 100%[===================>] 290.32M 64.4MB/s in 4.2s

2023-05-09 06:26:40 (69.2 MB/s) - ‘yolov7-e6e.pt’ saved [304425133/304425133]
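The wget log reports the expected size of the weights file (`Length: 304425133`). A quick way to confirm that the download was not truncated is to compare the size on disk with that number. This is a minimal sketch; `download_is_complete` is a hypothetical helper, and a size check is weaker than a checksum, which the page does not provide.

```python
from pathlib import Path


def download_is_complete(path, expected_bytes):
    """True when the file exists and its size matches the expected byte count."""
    file_path = Path(path)
    return file_path.is_file() and file_path.stat().st_size == expected_bytes


# Byte count taken from the "Length:" line of the wget log above.
EXPECTED_E6E_BYTES = 304425133

if __name__ == "__main__":
    print(download_is_complete("yolov7-e6e.pt", EXPECTED_E6E_BYTES))
```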

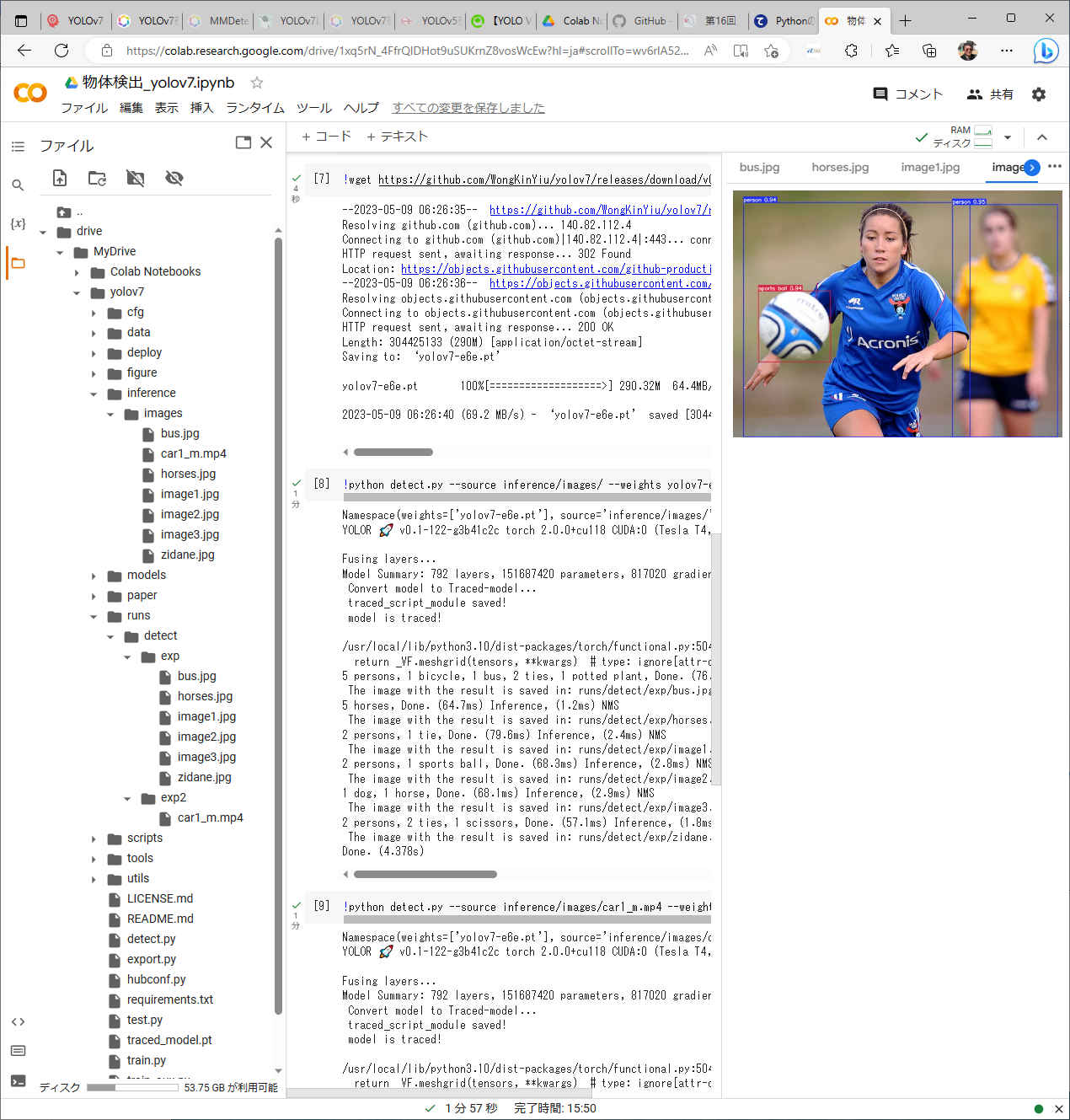

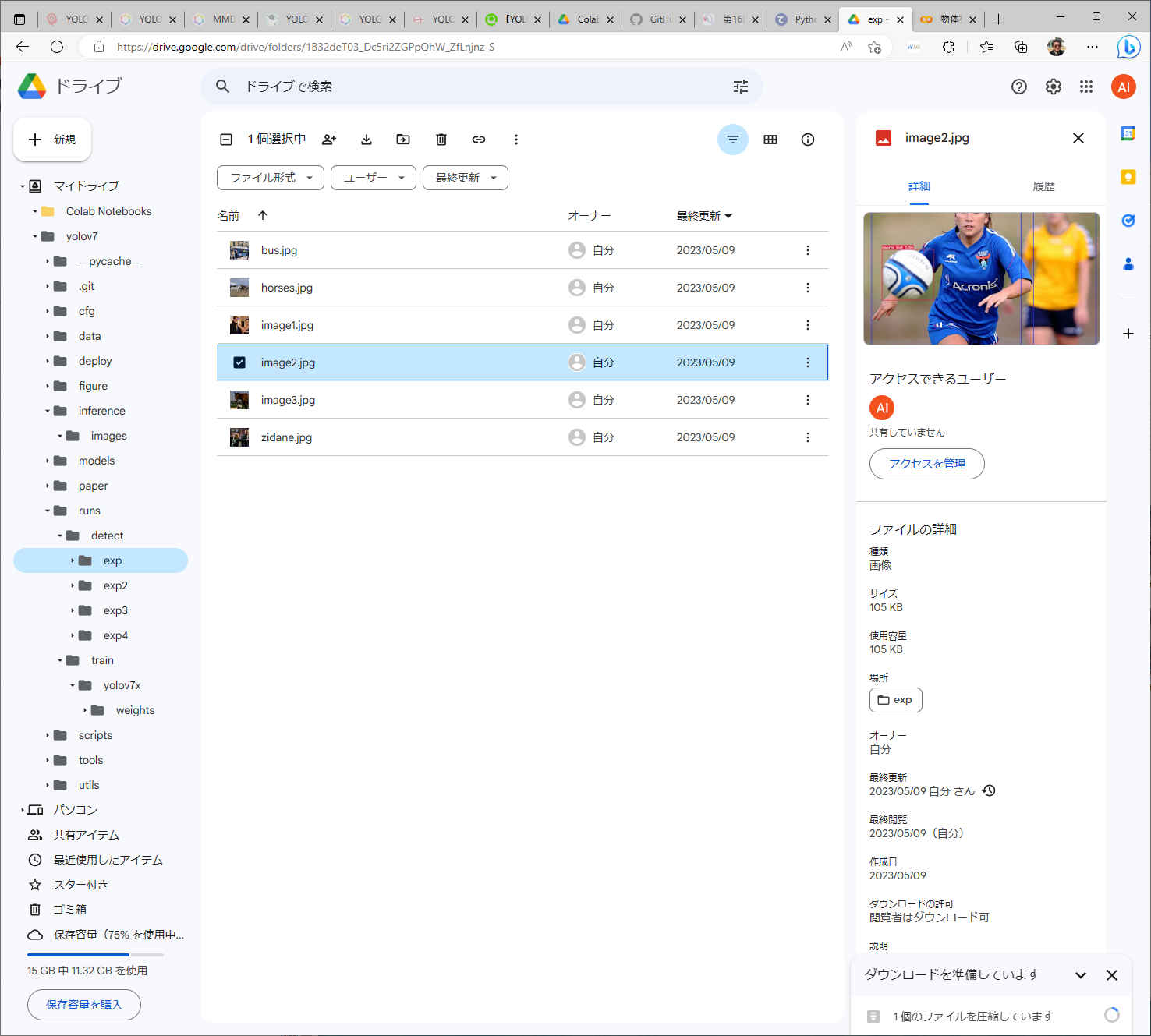

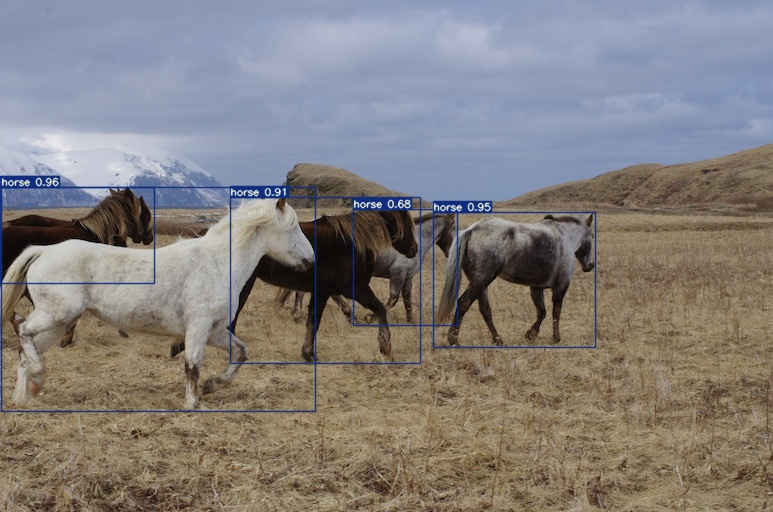

!python detect.py --source inference/images/ --weights yolov7-e6e.pt --conf 0.25 --img-size 1280 --device 0

Namespace(weights=['yolov7-e6e.pt'], source='inference/images/', img_size=1280, conf_thres=0.25, iou_thres=0.45, device='0', view_img=False, save_txt=False, save_conf=False, nosave=False, classes=None, agnostic_nms=False, augment=False, update=False, project='runs/detect', name='exp', exist_ok=False, no_trace=False)

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Fusing layers...

Model Summary: 792 layers, 151687420 parameters, 817020 gradients

Convert model to Traced-model...

traced_script_module saved!

model is traced!

/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

5 persons, 1 bicycle, 1 bus, 2 ties, 1 potted plant, Done. (76.8ms) Inference, (31.8ms) NMS

The image with the result is saved in: runs/detect/exp/bus.jpg

5 horses, Done. (64.7ms) Inference, (1.2ms) NMS

The image with the result is saved in: runs/detect/exp/horses.jpg

2 persons, 1 tie, Done. (79.6ms) Inference, (2.4ms) NMS

The image with the result is saved in: runs/detect/exp/image1.jpg

2 persons, 1 sports ball, Done. (68.3ms) Inference, (2.8ms) NMS

The image with the result is saved in: runs/detect/exp/image2.jpg

1 dog, 1 horse, Done. (68.1ms) Inference, (2.9ms) NMS

The image with the result is saved in: runs/detect/exp/image3.jpg

2 persons, 2 ties, 1 scissors, Done. (57.1ms) Inference, (1.8ms) NMS

The image with the result is saved in: runs/detect/exp/zidane.jpg

Done. (4.378s)

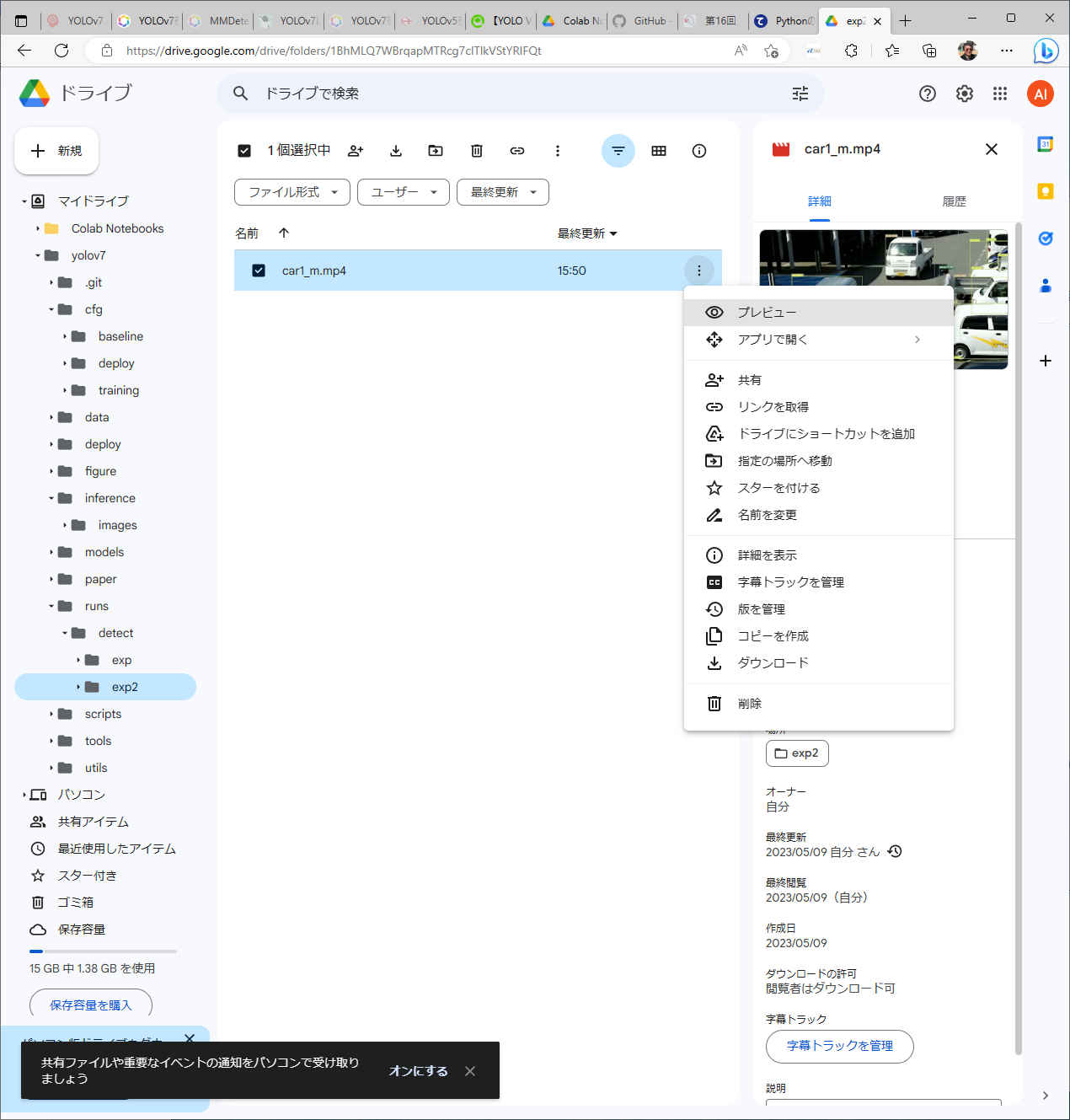

!python detect.py --source inference/images/car1_m.mp4 --weights yolov7-e6e.pt --conf 0.25 --img-size 1280 --device 0

Namespace(weights=['yolov7-e6e.pt'], source='inference/images/car1_m.mp4', img_size=1280, conf_thres=0.25, iou_thres=0.45, device='0', view_img=False, save_txt=False, save_conf=False, nosave=False, classes=None, agnostic_nms=False, augment=False, update=False, project='runs/detect', name='exp', exist_ok=False, no_trace=False)

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Fusing layers...

Model Summary: 792 layers, 151687420 parameters, 817020 gradients

Convert model to Traced-model...

traced_script_module saved!

model is traced!

/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

video 1/1 (1/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 8 cars, 7 trucks, Done. (59.1ms) Inference, (2.3ms) NMS

video 1/1 (2/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 8 cars, 7 trucks, Done. (57.8ms) Inference, (1.2ms) NMS

video 1/1 (3/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 7 trucks, Done. (53.7ms) Inference, (1.1ms) NMS

video 1/1 (4/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 7 trucks, Done. (52.8ms) Inference, (1.4ms) NMS

:

:

video 1/1 (207/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 6 trucks, 1 handbag, Done. (53.3ms) Inference, (1.5ms) NMS

video 1/1 (208/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 6 cars, 5 trucks, 1 handbag, Done. (53.5ms) Inference, (1.3ms) NMS

video 1/1 (209/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 5 trucks, 1 handbag, Done. (53.5ms) Inference, (1.2ms) NMS

Done. (16.581s)
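Each frame line in the log above ends with an inference time and an NMS time. To summarise a long run, those numbers can be pulled out with a regular expression. The sketch below is one way to do that; `mean_timings` and `SAMPLE` are hypothetical names, and the sample contains only the first four frame lines shown above.

```python
import re

# Matches the per-frame suffix, e.g. "(59.1ms) Inference, (2.3ms) NMS".
TIMING = re.compile(r"\(([\d.]+)ms\) Inference, \(([\d.]+)ms\) NMS")


def mean_timings(log_text):
    """Return (mean inference ms, mean NMS ms, number of frames parsed)."""
    pairs = [(float(inf), float(nms)) for inf, nms in TIMING.findall(log_text)]
    if not pairs:
        raise ValueError("no timing lines found")
    mean_inference = sum(inf for inf, _ in pairs) / len(pairs)
    mean_nms = sum(nms for _, nms in pairs) / len(pairs)
    return mean_inference, mean_nms, len(pairs)


# First four frame lines copied from the video log above.
SAMPLE = """\
video 1/1 (1/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 8 cars, 7 trucks, Done. (59.1ms) Inference, (2.3ms) NMS
video 1/1 (2/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 8 cars, 7 trucks, Done. (57.8ms) Inference, (1.2ms) NMS
video 1/1 (3/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 7 trucks, Done. (53.7ms) Inference, (1.1ms) NMS
video 1/1 (4/210) /content/drive/MyDrive/yolov7/inference/images/car1_m.mp4: 1 person, 7 cars, 7 trucks, Done. (52.8ms) Inference, (1.4ms) NMS
"""

if __name__ == "__main__":
    inference_ms, nms_ms, frames = mean_timings(SAMPLE)
    print(f"{frames} frames: {inference_ms:.2f} ms inference, {nms_ms:.2f} ms NMS")
    # End-to-end throughput from the totals in the log: 210 frames in 16.581 s.
    print(f"overall: {210 / 16.581:.2f} frames per second")
```

The per-frame times cover only the model and NMS, so the end-to-end figure computed from the final `Done.` line is lower than the per-frame times alone would suggest; the remainder is presumably spent on video decoding, drawing, and writing.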

cd /content/drive/MyDrive/yolov7

- Output:

/content/drive/MyDrive/yolov7

!wget https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7x.pt

--2023-05-09 19:32:47-- https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7x.pt

Resolving github.com (github.com)... 140.82.121.3

Connecting to github.com (github.com)|140.82.121.3|:443... connected.

HTTP request sent, awaiting response... 302 Found

Location: https://objects.githubusercontent.com/github-production-release-asset-2e65be/511187726/c0e9f375-a42b-45d5-9e96-3156476cf121?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=AKIAIWNJYAX4CSVEH53A%2F20230509%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230509T193247Z&X-Amz-Expires=300&X-Amz-Signature=90a83ec24aa6576418101adabf32d2b766e8308eaa57a7397feb60ee08099e7f&X-Amz-SignedHeaders=host&actor_id=0&key_id=0&repo_id=511187726&response-content-disposition=attachment%3B%20filename%3Dyolov7x.pt&response-content-type=application%2Foctet-stream [following]

--2023-05-09 19:32:47-- https://objects.githubusercontent.com/github-production-release-asset-2e65be/511187726/c0e9f375-a42b-45d5-9e96-3156476cf121?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=AKIAIWNJYAX4CSVEH53A%2F20230509%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230509T193247Z&X-Amz-Expires=300&X-Amz-Signature=90a83ec24aa6576418101adabf32d2b766e8308eaa57a7397feb60ee08099e7f&X-Amz-SignedHeaders=host&actor_id=0&key_id=0&repo_id=511187726&response-content-disposition=attachment%3B%20filename%3Dyolov7x.pt&response-content-type=application%2Foctet-stream

Resolving objects.githubusercontent.com (objects.githubusercontent.com)... 185.199.108.133, 185.199.109.133, 185.199.110.133, ...

Connecting to objects.githubusercontent.com (objects.githubusercontent.com)|185.199.108.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 143099649 (136M) [application/octet-stream]

Saving to: ‘yolov7x.pt’

yolov7x.pt 100%[===================>] 136.47M 50.0MB/s in 2.7s

2023-05-09 19:32:51 (50.0 MB/s) - ‘yolov7x.pt’ saved [143099649/143099649]

# Paths to the training and validation data
train: ./data/mask_wearing/train/images
val: ./data/mask_wearing/valid/images

# Number of classes to detect
nc: 2

# Class names
names: ['mask', 'no-mask']
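In this dataset file, `nc` must equal the number of entries in `names`. Generating the file from a single list of class names keeps the two in sync. The sketch below only builds the text; `dataset_yaml` is a hypothetical helper name, and the paths are the ones used above.

```python
def dataset_yaml(train, val, names):
    """Render a YOLO-style dataset description as YAML text."""
    lines = [
        f"train: {train}",
        f"val: {val}",
        f"nc: {len(names)}",  # derived from names, so the two cannot disagree
        f"names: {list(names)!r}",  # Python list repr is a valid YAML flow sequence here
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    text = dataset_yaml(
        "./data/mask_wearing/train/images",
        "./data/mask_wearing/valid/images",
        ["mask", "no-mask"],
    )
    print(text)
```

Writing the returned text to `mask_wearing.yaml` should give a file equivalent to the one shown above, minus the comments.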

yolov7
┗ data
  ┗ mask_wearing

yolov7
┠ data
┃ ┗ mask_wearing
┗ mask_wearing.yaml
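Before starting a long training run, it helps to confirm that every image has a matching label file. The training log further down shows labels being read from a `labels` directory next to each `images` directory. The sketch below assumes that layout and one `.txt` label file per image; `unlabeled_images` and `IMAGE_SUFFIXES` are hypothetical names.

```python
from pathlib import Path

# Image extensions considered by this check (an assumption, adjust as needed).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def unlabeled_images(split_dir):
    """List image file names in <split_dir>/images with no <split_dir>/labels/<stem>.txt."""
    split = Path(split_dir)
    images = sorted(
        path for path in (split / "images").iterdir()
        if path.suffix.lower() in IMAGE_SUFFIXES
    )
    return [
        path.name for path in images
        if not (split / "labels" / f"{path.stem}.txt").is_file()
    ]


if __name__ == "__main__":
    for split_name in ("train", "valid"):
        split_path = Path("data/mask_wearing") / split_name
        if split_path.is_dir():
            print(split_name, "missing labels:", unlabeled_images(split_path))
```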

!python train.py --workers 8 --batch-size 16 --data mask_wearing.yaml --cfg cfg/training/yolov7x.yaml --weights 'yolov7x.pt' --name yolov7x --hyp data/hyp.scratch.p5.yaml --epochs 300 --device 0

2023-05-09 19:51:09.261741: I tensorflow/core/platform/cpu_feature_guard.cc:182] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 AVX512F FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

2023-05-09 19:51:10.197626: W tensorflow/compiler/tf2tensorrt/utils/py_utils.cc:38] TF-TRT Warning: Could not find TensorRT

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Namespace(weights='yolov7x.pt', cfg='cfg/training/yolov7x.yaml', data='mask_wearing.yaml', hyp='data/hyp.scratch.p5.yaml', epochs=300, batch_size=16, img_size=[640, 640], rect=False, resume=False, nosave=False, notest=False, noautoanchor=False, evolve=False, bucket='', cache_images=False, image_weights=False, device='0', multi_scale=False, single_cls=False, adam=False, sync_bn=False, local_rank=-1, workers=8, project='runs/train', entity=None, name='yolov7x', exist_ok=False, quad=False, linear_lr=False, label_smoothing=0.0, upload_dataset=False, bbox_interval=-1, save_period=-1, artifact_alias='latest', freeze=[0], v5_metric=False, world_size=1, global_rank=-1, save_dir='runs/train/yolov7x', total_batch_size=16)

tensorboard: Start with 'tensorboard --logdir runs/train', view at http://localhost:6006/

hyperparameters: lr0=0.01, lrf=0.1, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.3, cls_pw=1.0, obj=0.7, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.2, scale=0.9, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.15, copy_paste=0.0, paste_in=0.15, loss_ota=1

wandb: Install Weights & Biases for YOLOR logging with 'pip install wandb' (recommended)

Overriding model.yaml nc=80 with nc=2

from n params module arguments

0 -1 1 1160 models.common.Conv [3, 40, 3, 1]

1 -1 1 28960 models.common.Conv [40, 80, 3, 2]

2 -1 1 57760 models.common.Conv [80, 80, 3, 1]

3 -1 1 115520 models.common.Conv [80, 160, 3, 2]

4 -1 1 10368 models.common.Conv [160, 64, 1, 1]

5 -2 1 10368 models.common.Conv [160, 64, 1, 1]

6 -1 1 36992 models.common.Conv [64, 64, 3, 1]

7 -1 1 36992 models.common.Conv [64, 64, 3, 1]

8 -1 1 36992 models.common.Conv [64, 64, 3, 1]

9 -1 1 36992 models.common.Conv [64, 64, 3, 1]

10 -1 1 36992 models.common.Conv [64, 64, 3, 1]

11 -1 1 36992 models.common.Conv [64, 64, 3, 1]

12[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

13 -1 1 103040 models.common.Conv [320, 320, 1, 1]

14 -1 1 0 models.common.MP []

15 -1 1 51520 models.common.Conv [320, 160, 1, 1]

16 -3 1 51520 models.common.Conv [320, 160, 1, 1]

17 -1 1 230720 models.common.Conv [160, 160, 3, 2]

18 [-1, -3] 1 0 models.common.Concat [1]

19 -1 1 41216 models.common.Conv [320, 128, 1, 1]

20 -2 1 41216 models.common.Conv [320, 128, 1, 1]

21 -1 1 147712 models.common.Conv [128, 128, 3, 1]

22 -1 1 147712 models.common.Conv [128, 128, 3, 1]

23 -1 1 147712 models.common.Conv [128, 128, 3, 1]

24 -1 1 147712 models.common.Conv [128, 128, 3, 1]

25 -1 1 147712 models.common.Conv [128, 128, 3, 1]

26 -1 1 147712 models.common.Conv [128, 128, 3, 1]

27[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

28 -1 1 410880 models.common.Conv [640, 640, 1, 1]

29 -1 1 0 models.common.MP []

30 -1 1 205440 models.common.Conv [640, 320, 1, 1]

31 -3 1 205440 models.common.Conv [640, 320, 1, 1]

32 -1 1 922240 models.common.Conv [320, 320, 3, 2]

33 [-1, -3] 1 0 models.common.Concat [1]

34 -1 1 164352 models.common.Conv [640, 256, 1, 1]

35 -2 1 164352 models.common.Conv [640, 256, 1, 1]

36 -1 1 590336 models.common.Conv [256, 256, 3, 1]

37 -1 1 590336 models.common.Conv [256, 256, 3, 1]

38 -1 1 590336 models.common.Conv [256, 256, 3, 1]

39 -1 1 590336 models.common.Conv [256, 256, 3, 1]

40 -1 1 590336 models.common.Conv [256, 256, 3, 1]

41 -1 1 590336 models.common.Conv [256, 256, 3, 1]

42[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

43 -1 1 1640960 models.common.Conv [1280, 1280, 1, 1]

44 -1 1 0 models.common.MP []

45 -1 1 820480 models.common.Conv [1280, 640, 1, 1]

46 -3 1 820480 models.common.Conv [1280, 640, 1, 1]

47 -1 1 3687680 models.common.Conv [640, 640, 3, 2]

48 [-1, -3] 1 0 models.common.Concat [1]

49 -1 1 328192 models.common.Conv [1280, 256, 1, 1]

50 -2 1 328192 models.common.Conv [1280, 256, 1, 1]

51 -1 1 590336 models.common.Conv [256, 256, 3, 1]

52 -1 1 590336 models.common.Conv [256, 256, 3, 1]

53 -1 1 590336 models.common.Conv [256, 256, 3, 1]

54 -1 1 590336 models.common.Conv [256, 256, 3, 1]

55 -1 1 590336 models.common.Conv [256, 256, 3, 1]

56 -1 1 590336 models.common.Conv [256, 256, 3, 1]

57[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

58 -1 1 1640960 models.common.Conv [1280, 1280, 1, 1]

59 -1 1 11887360 models.common.SPPCSPC [1280, 640, 1]

60 -1 1 205440 models.common.Conv [640, 320, 1, 1]

61 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

62 43 1 410240 models.common.Conv [1280, 320, 1, 1]

63 [-1, -2] 1 0 models.common.Concat [1]

64 -1 1 164352 models.common.Conv [640, 256, 1, 1]

65 -2 1 164352 models.common.Conv [640, 256, 1, 1]

66 -1 1 590336 models.common.Conv [256, 256, 3, 1]

67 -1 1 590336 models.common.Conv [256, 256, 3, 1]

68 -1 1 590336 models.common.Conv [256, 256, 3, 1]

69 -1 1 590336 models.common.Conv [256, 256, 3, 1]

70 -1 1 590336 models.common.Conv [256, 256, 3, 1]

71 -1 1 590336 models.common.Conv [256, 256, 3, 1]

72[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

73 -1 1 410240 models.common.Conv [1280, 320, 1, 1]

74 -1 1 51520 models.common.Conv [320, 160, 1, 1]

75 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

76 28 1 102720 models.common.Conv [640, 160, 1, 1]

77 [-1, -2] 1 0 models.common.Concat [1]

78 -1 1 41216 models.common.Conv [320, 128, 1, 1]

79 -2 1 41216 models.common.Conv [320, 128, 1, 1]

80 -1 1 147712 models.common.Conv [128, 128, 3, 1]

81 -1 1 147712 models.common.Conv [128, 128, 3, 1]

82 -1 1 147712 models.common.Conv [128, 128, 3, 1]

83 -1 1 147712 models.common.Conv [128, 128, 3, 1]

84 -1 1 147712 models.common.Conv [128, 128, 3, 1]

85 -1 1 147712 models.common.Conv [128, 128, 3, 1]

86[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

87 -1 1 102720 models.common.Conv [640, 160, 1, 1]

88 -1 1 0 models.common.MP []

89 -1 1 25920 models.common.Conv [160, 160, 1, 1]

90 -3 1 25920 models.common.Conv [160, 160, 1, 1]

91 -1 1 230720 models.common.Conv [160, 160, 3, 2]

92 [-1, -3, 73] 1 0 models.common.Concat [1]

93 -1 1 164352 models.common.Conv [640, 256, 1, 1]

94 -2 1 164352 models.common.Conv [640, 256, 1, 1]

95 -1 1 590336 models.common.Conv [256, 256, 3, 1]

96 -1 1 590336 models.common.Conv [256, 256, 3, 1]

97 -1 1 590336 models.common.Conv [256, 256, 3, 1]

98 -1 1 590336 models.common.Conv [256, 256, 3, 1]

99 -1 1 590336 models.common.Conv [256, 256, 3, 1]

100 -1 1 590336 models.common.Conv [256, 256, 3, 1]

101[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

102 -1 1 410240 models.common.Conv [1280, 320, 1, 1]

103 -1 1 0 models.common.MP []

104 -1 1 103040 models.common.Conv [320, 320, 1, 1]

105 -3 1 103040 models.common.Conv [320, 320, 1, 1]

106 -1 1 922240 models.common.Conv [320, 320, 3, 2]

107 [-1, -3, 59] 1 0 models.common.Concat [1]

108 -1 1 656384 models.common.Conv [1280, 512, 1, 1]

109 -2 1 656384 models.common.Conv [1280, 512, 1, 1]

110 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

111 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

112 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

113 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

114 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

115 -1 1 2360320 models.common.Conv [512, 512, 3, 1]

116[-1, -3, -5, -7, -8] 1 0 models.common.Concat [1]

117 -1 1 1639680 models.common.Conv [2560, 640, 1, 1]

118 87 1 461440 models.common.Conv [160, 320, 3, 1]

119 102 1 1844480 models.common.Conv [320, 640, 3, 1]

120 117 1 7375360 models.common.Conv [640, 1280, 3, 1]

121 [118, 119, 120] 1 49406 models.yolo.IDetect [2, [[12, 16, 19, 36, 40, 28], [36, 75, 76, 55, 72, 146], [142, 110, 192, 243, 459, 401]], [320, 640, 1280]]

Model Summary: 467 layers, 70821830 parameters, 70821830 gradients
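The `params` column for the `models.common.Conv` rows can be reproduced by hand. The sketch assumes that the arguments are `[in_channels, out_channels, kernel, stride]` and that each block is a bias-free convolution followed by batch normalization with a learnable scale and shift. `conv_bn_params` is a hypothetical helper, not a function from the repository.

```python
def conv_bn_params(c_in, c_out, kernel):
    """Parameter count of a bias-free k x k convolution followed by BatchNorm."""
    conv_weights = c_in * c_out * kernel * kernel
    bn_affine = 2 * c_out  # one scale and one shift value per output channel
    return conv_weights + bn_affine


if __name__ == "__main__":
    # (arguments from the table above, value in its params column)
    rows = [
        ((3, 40, 3), 1160),         # layer 0
        ((40, 80, 3), 28960),       # layer 1
        ((160, 64, 1), 10368),      # layer 4
        ((1280, 1280, 1), 1640960)  # layer 43
    ]
    for (c_in, c_out, kernel), listed in rows:
        print(c_in, c_out, kernel, conv_bn_params(c_in, c_out, kernel), listed)
```

The stride does not affect the count, which is why layers 36 to 41 and 51 to 56 list the same number.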

Transferred 630/644 items from yolov7x.pt

Scaled weight_decay = 0.0005

Optimizer groups: 108 .bias, 108 conv.weight, 111 other

train: Scanning 'data/mask_wearing/train/labels' images and labels... 105 found, 0 missing, 0 empty, 0 corrupted: 100% 105/105 [00:47<00:00, 2.22it/s]

train: New cache created: data/mask_wearing/train/labels.cache

val: Scanning 'data/mask_wearing/valid/labels' images and labels... 29 found, 0 missing, 0 empty, 0 corrupted: 100% 29/29 [00:25<00:00, 1.13it/s]

val: New cache created: data/mask_wearing/valid/labels.cache

autoanchor: Analyzing anchors... anchors/target = 5.92, Best Possible Recall (BPR) = 0.9986

Image sizes 640 train, 640 test

Using 2 dataloader workers

Logging results to runs/train/yolov7x

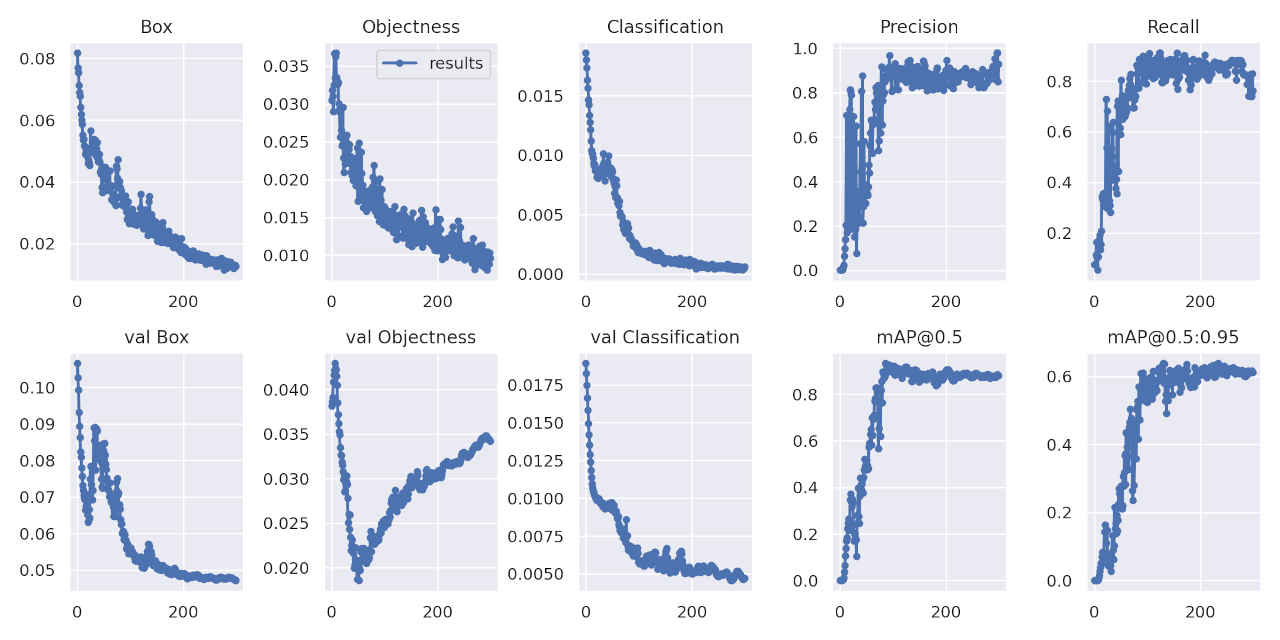

Starting training for 300 epochs...

Epoch gpu_mem box obj cls total labels img_size

0/299 3.06G 0.08177 0.0305 0.01861 0.1309 81 640: 100% 7/7 [00:44<00:00, 6.30s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 0% 0/1 [00:00<?, ?it/s]/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:09<00:00, 9.84s/it]

all 29 162 0.00168 0.0743 0.000209 3.65e-05

Epoch gpu_mem box obj cls total labels img_size

1/299 9.27G 0.0769 0.03184 0.01804 0.1268 148 640: 100% 7/7 [00:12<00:00, 1.85s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:06<00:00, 6.75s/it]

all 29 162 0.00182 0.0739 0.000278 4.05e-05

Epoch gpu_mem box obj cls total labels img_size

2/299 14.1G 0.07545 0.03108 0.01735 0.1239 110 640: 100% 7/7 [00:10<00:00, 1.56s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:03<00:00, 3.69s/it]

all 29 162 0.00212 0.0739 0.000378 4.61e-05

Epoch gpu_mem box obj cls total labels img_size

3/299 14.1G 0.07119 0.02899 0.01632 0.1165 86 640: 100% 7/7 [00:10<00:00, 1.57s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:02<00:00, 2.77s/it]

all 29 162 0.00278 0.113 0.000604 0.000102

Epoch gpu_mem box obj cls total labels img_size

4/299 14.1G 0.06906 0.0325 0.01565 0.1172 168 640: 100% 7/7 [00:10<00:00, 1.52s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:02<00:00, 2.32s/it]

all 29 162 0.00314 0.162 0.000883 0.000148

:

:

Epoch gpu_mem box obj cls total labels img_size

146/299 14.4G 0.02325 0.01482 0.001114 0.03918 179 640: 100% 7/7 [00:15<00:00, 2.21s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:01<00:00, 1.08s/it]

all 29 162 0.919 0.831 0.895 0.6

Epoch gpu_mem box obj cls total labels img_size

147/299 14.4G 0.02545 0.01349 0.0008791 0.03982 121 640: 100% 7/7 [00:10<00:00, 1.48s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 0% 0/1 [00:00<?, ?it/s]

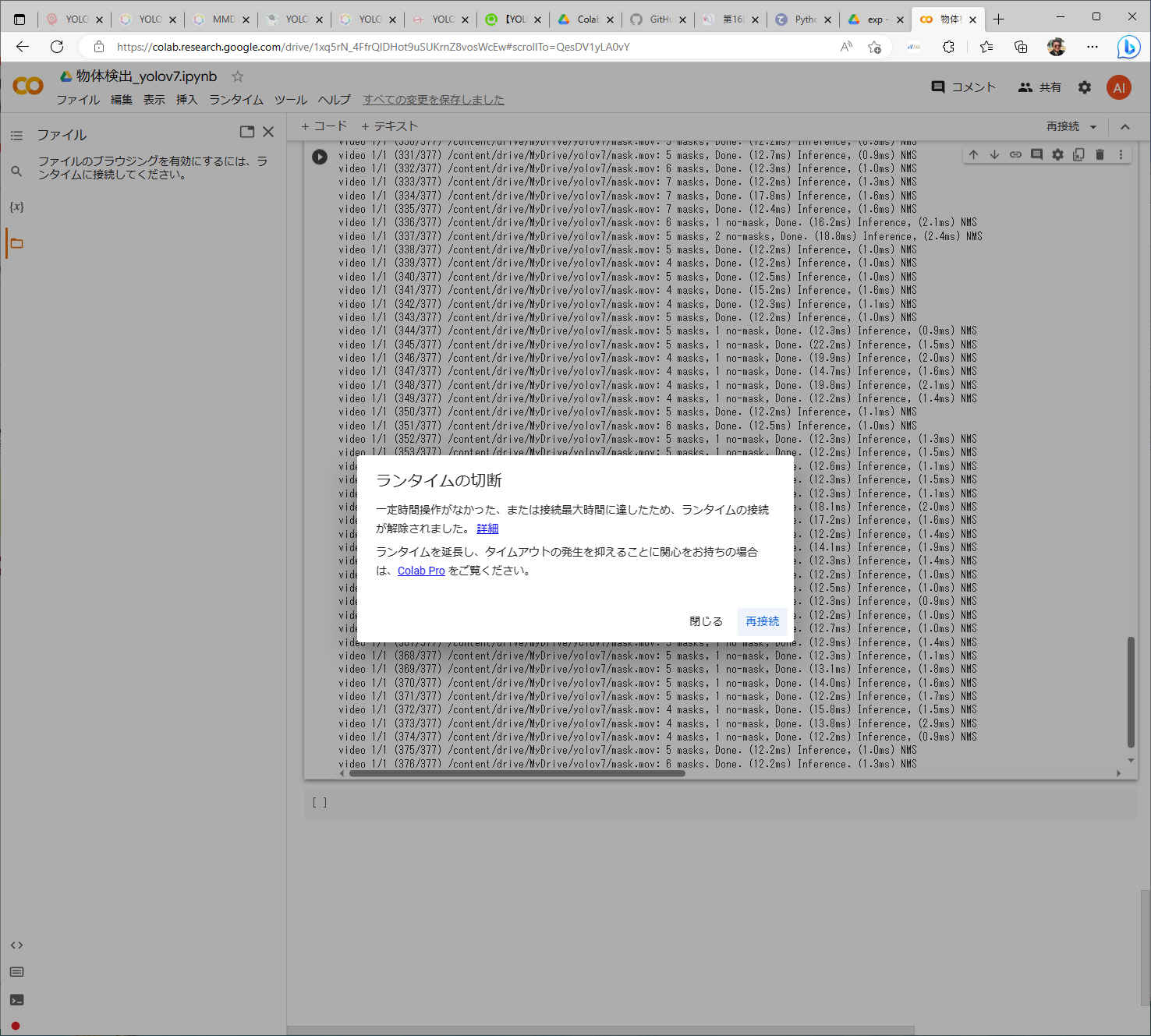
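The `7/7` progress counter on each training line follows from the dataset size and the batch size: 105 training images in batches of 16 need seven steps, the last one only partly filled. A minimal sketch of that arithmetic, with `batches_per_epoch` as a hypothetical helper name:

```python
import math


def batches_per_epoch(num_images, batch_size):
    """Number of optimizer steps per epoch when the last partial batch is kept."""
    return math.ceil(num_images / batch_size)


if __name__ == "__main__":
    # 105 training images (from the "train: Scanning" line) and --batch-size 16.
    print(batches_per_epoch(105, 16))
```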

cd /content/drive/MyDrive/yolov7

- Adding "--resume" to the arguments resumes training from where the previous run was interrupted.

cd /content/drive/MyDrive/yolov7

!python train.py --workers 8 --batch-size 16 --data mask_wearing.yaml --cfg cfg/training/yolov7x.yaml --weights 'yolov7x.pt' --name yolov7x --hyp data/hyp.scratch.p5.yaml --epochs 300 --device 0 --resume
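Resuming depends on a checkpoint from the interrupted run. The log below reports that training continues from `./runs/train/yolov7x/weights/last.pt`. A small check that this file exists before launching the command can save a wasted session. This is a sketch with a hypothetical helper, `last_checkpoint`; the path layout is taken from that log line.

```python
from pathlib import Path


def last_checkpoint(project="runs/train", name="yolov7x"):
    """Return the path to <project>/<name>/weights/last.pt, or None if it is missing."""
    candidate = Path(project) / name / "weights" / "last.pt"
    return candidate if candidate.is_file() else None


if __name__ == "__main__":
    checkpoint = last_checkpoint()
    if checkpoint is None:
        print("No last.pt found; --resume would have nothing to continue from.")
    else:
        print("Resume will continue from", checkpoint)
```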

2023-05-09 22:00:48.988514: I tensorflow/core/platform/cpu_feature_guard.cc:182] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

2023-05-09 22:00:49.845202: W tensorflow/compiler/tf2tensorrt/utils/py_utils.cc:38] TF-TRT Warning: Could not find TensorRT

Resuming training from ./runs/train/yolov7x/weights/last.pt

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Namespace(weights='./runs/train/yolov7x/weights/last.pt', cfg='', data='mask_wearing.yaml', hyp='data/hyp.scratch.p5.yaml', epochs=300, batch_size=16, img_size=[640, 640], rect=False, resume=True, nosave=False, notest=False, noautoanchor=False, evolve=False, bucket='', cache_images=False, image_weights=False, device='0', multi_scale=False, single_cls=False, adam=False, sync_bn=False, local_rank=-1, workers=8, project='runs/train', entity=None, name='yolov7x', exist_ok=False, quad=False, linear_lr=False, label_smoothing=0.0, upload_dataset=False, bbox_interval=-1, save_period=-1, artifact_alias='latest', freeze=[0], v5_metric=False, world_size=1, global_rank=-1, save_dir='runs/train/yolov7x', total_batch_size=16)

tensorboard: Start with 'tensorboard --logdir runs/train', view at http://localhost:6006/

hyperparameters: lr0=0.01, lrf=0.1, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.3, cls_pw=1.0, obj=0.7, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.2, scale=0.9, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.15, copy_paste=0.0, paste_in=0.15, loss_ota=1

wandb: Install Weights & Biases for YOLOR logging with 'pip install wandb' (recommended)

from n params module arguments

0 -1 1 1160 models.common.Conv [3, 40, 3, 1]

1 -1 1 28960 models.common.Conv [40, 80, 3, 2]

2 -1 1 57760 models.common.Conv [80, 80, 3, 1]

3 -1 1 115520 models.common.Conv [80, 160, 3, 2]

4 -1 1 10368 models.common.Conv [160, 64, 1, 1]

5 -2 1 10368 models.common.Conv [160, 64, 1, 1]

6 -1 1 36992 models.common.Conv [64, 64, 3, 1]

7 -1 1 36992 models.common.Conv [64, 64, 3, 1]

:

:

Transferred 644/644 items from ./runs/train/yolov7x/weights/last.pt

Scaled weight_decay = 0.0005

Optimizer groups: 108 .bias, 108 conv.weight, 111 other

train: Scanning 'data/mask_wearing/train/labels.cache' images and labels... 105 found, 0 missing, 0 empty, 0 corrupted: 100% 105/105 [00:00<?, ?it/s]

val: Scanning 'data/mask_wearing/valid/labels.cache' images and labels... 29 found, 0 missing, 0 empty, 0 corrupted: 100% 29/29 [00:00<?, ?it/s]

Image sizes 640 train, 640 test

Using 2 dataloader workers

Logging results to runs/train/yolov7x

Starting training for 300 epochs...

Epoch gpu_mem box obj cls total labels img_size

148/299 3.8G 0.027 0.01341 0.001347 0.04176 81 640: 100% 7/7 [00:46<00:00, 6.68s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 0% 0/1 [00:00<?, ?it/s]/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:08<00:00, 8.33s/it]

all 29 162 0.858 0.869 0.87 0.598

Epoch gpu_mem box obj cls total labels img_size

149/299 9.18G 0.02592 0.01415 0.001092 0.04116 148 640: 100% 7/7 [00:09<00:00, 1.36s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:01<00:00, 1.59s/it]

all 29 162 0.83 0.868 0.854 0.582

:

:

Epoch gpu_mem box obj cls total labels img_size

297/299 14.3G 0.0129 0.008834 0.0004932 0.02223 68 640: 100% 7/7 [00:10<00:00, 1.43s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:01<00:00, 1.19s/it]

all 29 162 0.979 0.74 0.884 0.617

Epoch gpu_mem box obj cls total labels img_size

298/299 14.3G 0.01303 0.0103 0.0004993 0.02383 139 640: 100% 7/7 [00:11<00:00, 1.61s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:01<00:00, 1.22s/it]

all 29 162 0.85 0.83 0.881 0.612

Epoch gpu_mem box obj cls total labels img_size

299/299 14.3G 0.01279 0.009579 0.0005955 0.02297 96 640: 100% 7/7 [00:11<00:00, 1.61s/it]

Class Images Labels P R mAP@.5 mAP@.5:.95: 100% 1/1 [00:01<00:00, 1.77s/it]

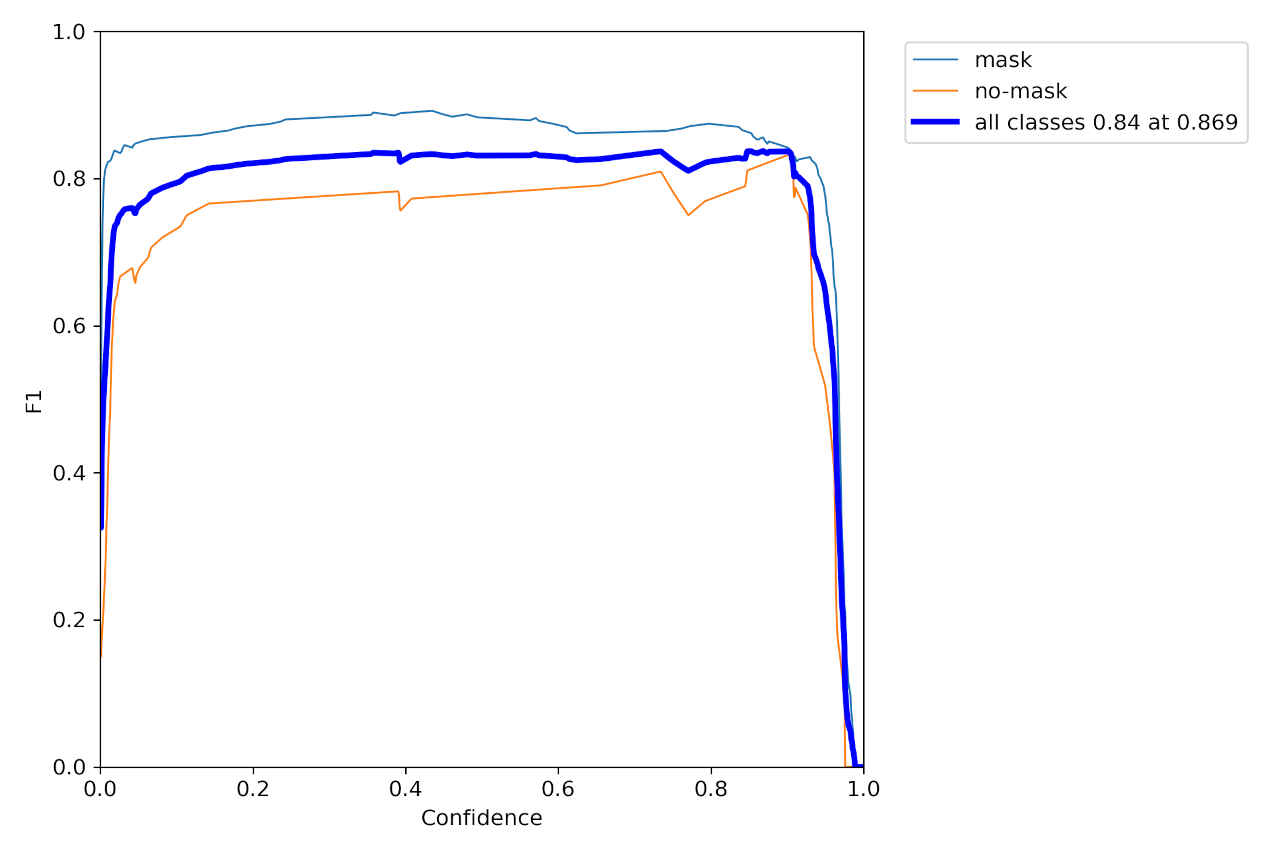

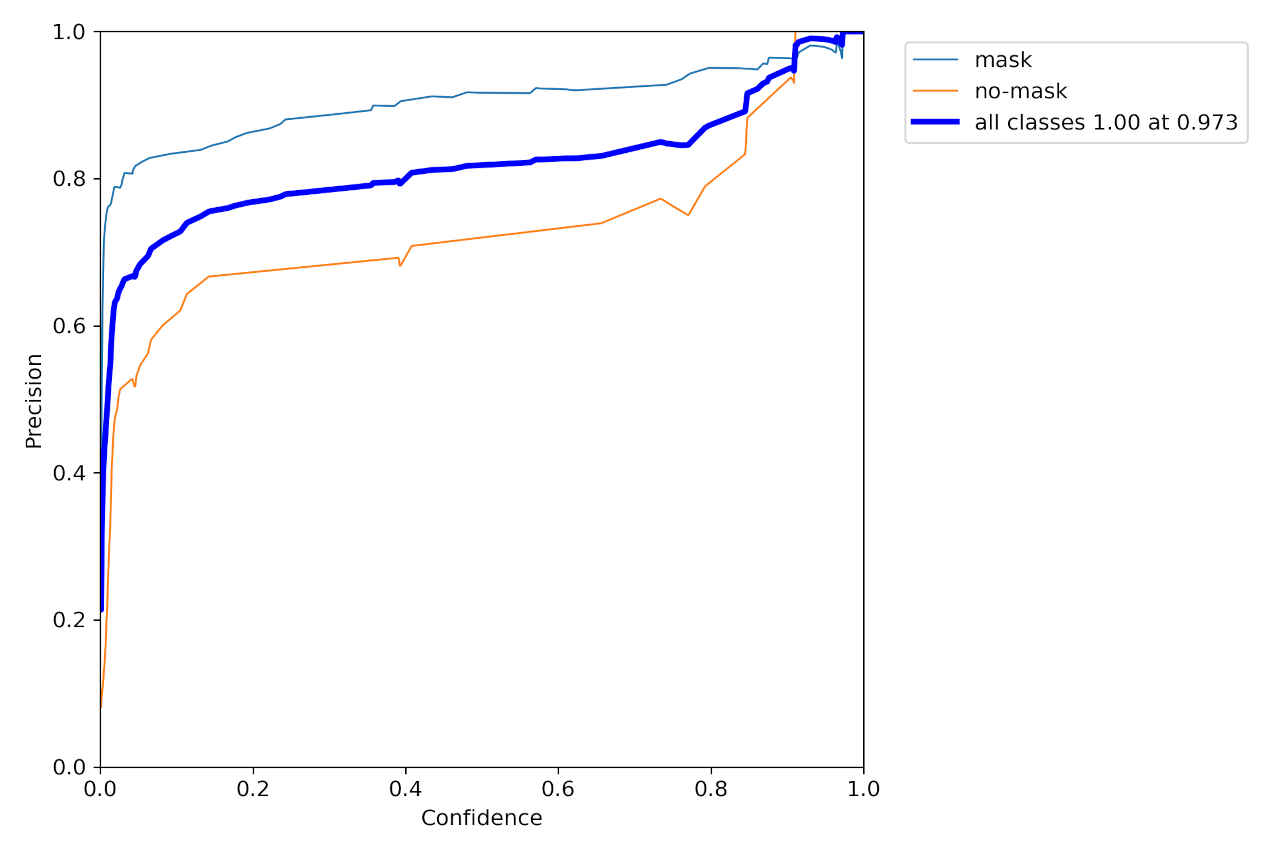

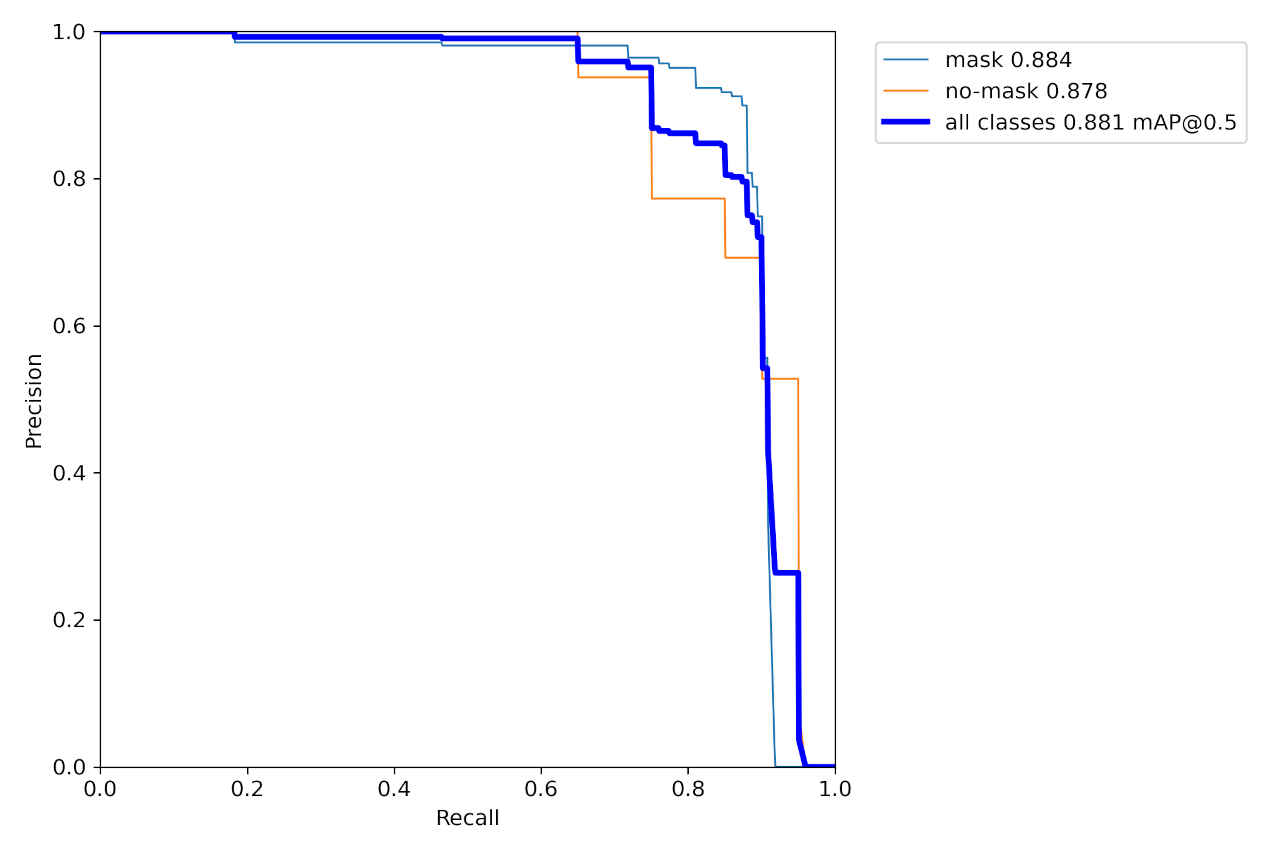

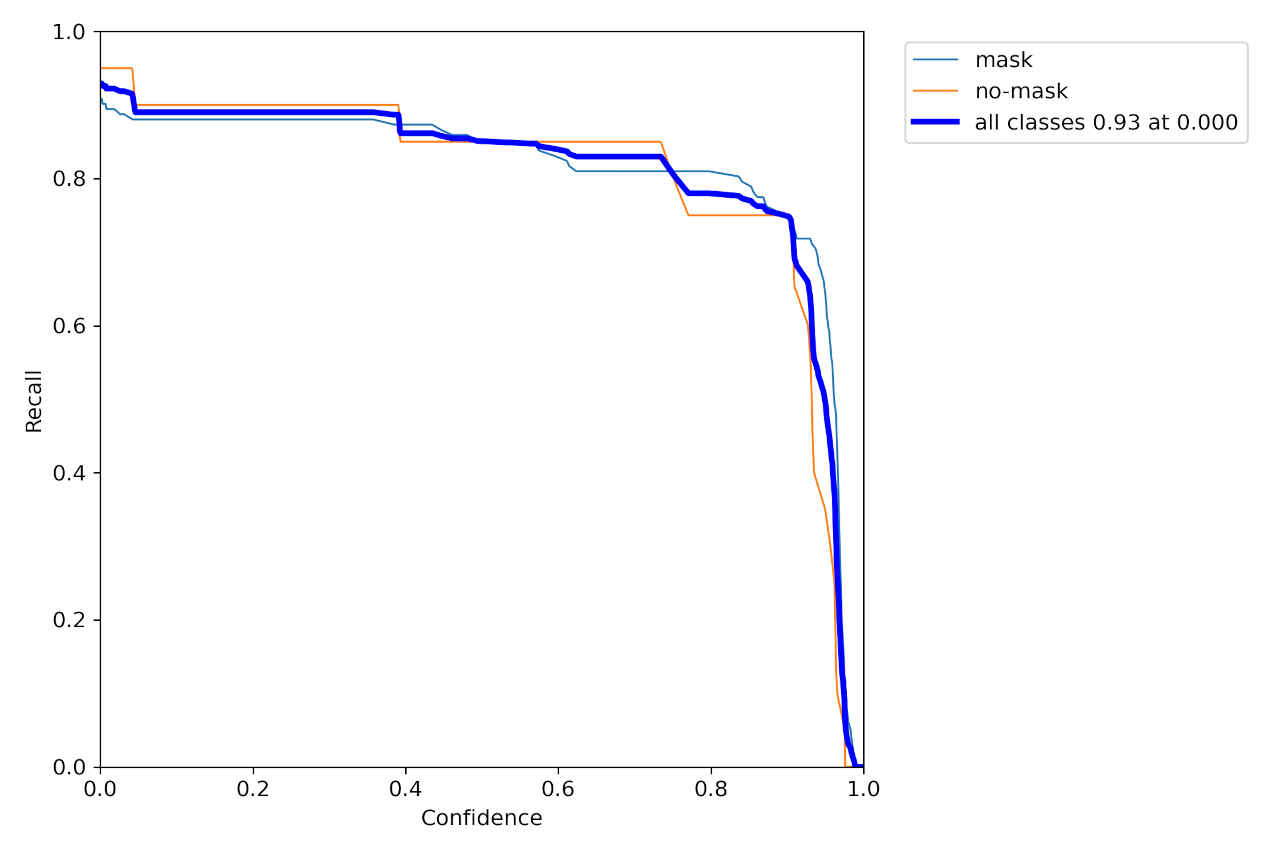

all 29 162 0.93 0.762 0.881 0.612

mask 29 142 0.956 0.774 0.884 0.623

no-mask 29 20 0.903 0.75 0.878 0.602

152 epochs completed in 0.784 hours.

Optimizer stripped from runs/train/yolov7x/weights/last.pt, 142.1MB

Optimizer stripped from runs/train/yolov7x/weights/best.pt, 142.1MB
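The "152 epochs completed" figure covers only the resumed session: it started at epoch index 148 and ended at 299. The same numbers give a rough time per epoch. A minimal sketch of the arithmetic follows; both function names are hypothetical.

```python
def epochs_run(first_epoch, last_epoch):
    """Number of epochs in an inclusive range of zero-based epoch indices."""
    return last_epoch - first_epoch + 1


def seconds_per_epoch(total_hours, epochs):
    """Average wall-clock seconds per epoch."""
    return total_hours * 3600 / epochs


if __name__ == "__main__":
    count = epochs_run(148, 299)  # indices from the resumed log above
    print(count, "epochs,", round(seconds_per_epoch(0.784, count), 1), "s per epoch")
```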

!python detect.py --weights runs/train/yolov7x/weights/best.pt --conf 0.25 --img-size 640 --source mask.jpg

Namespace(weights=['runs/train/yolov7x/weights/best.pt'], source='mask.jpg', img_size=640, conf_thres=0.25, iou_thres=0.45, device='', view_img=False, save_txt=False, save_conf=False, nosave=False, classes=None, agnostic_nms=False, augment=False, update=False, project='runs/detect', name='exp', exist_ok=False, no_trace=False)

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Fusing layers...

IDetect.fuse

Model Summary: 362 layers, 70789182 parameters, 0 gradients

Convert model to Traced-model...

traced_script_module saved!

model is traced!

/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

1 mask, Done. (28.8ms) Inference, (36.6ms) NMS

The image with the result is saved in: runs/detect/exp3/mask.jpg

Done. (0.413s)
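Although the run name is `exp`, the result above was saved under `runs/detect/exp3`, which suggests that a new numbered directory is created whenever an earlier one already exists. To locate the newest results without guessing the number, one option is to pick the most recently modified subdirectory. This is a sketch; `latest_run_dir` is a hypothetical helper, and it relies on modification times rather than on the numbering itself.

```python
from pathlib import Path


def latest_run_dir(project="runs/detect"):
    """Return the most recently modified subdirectory of project, or None."""
    root = Path(project)
    if not root.is_dir():
        return None
    runs = [path for path in root.iterdir() if path.is_dir()]
    return max(runs, key=lambda path: path.stat().st_mtime, default=None)


if __name__ == "__main__":
    print("Newest detection output:", latest_run_dir())
```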

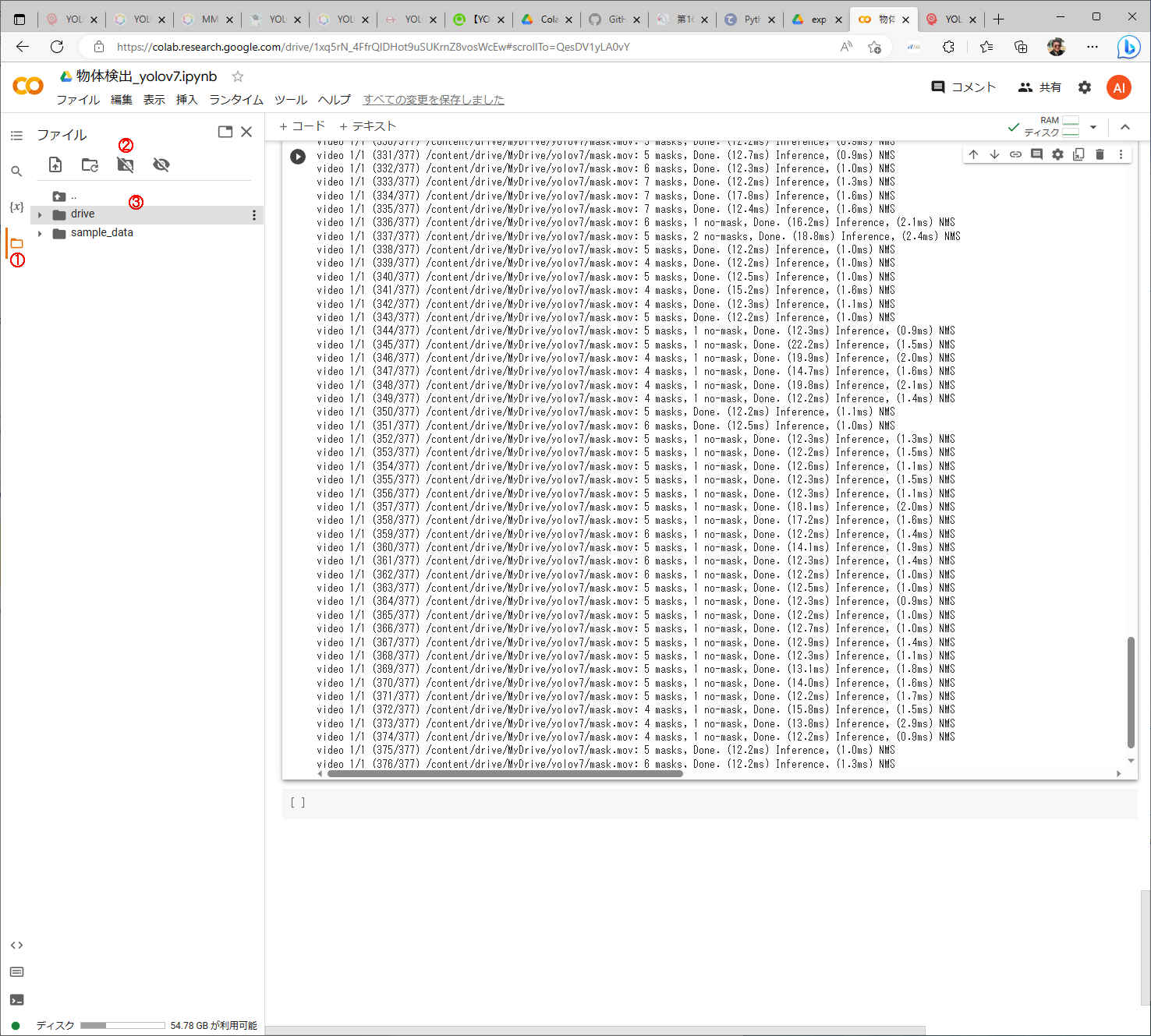

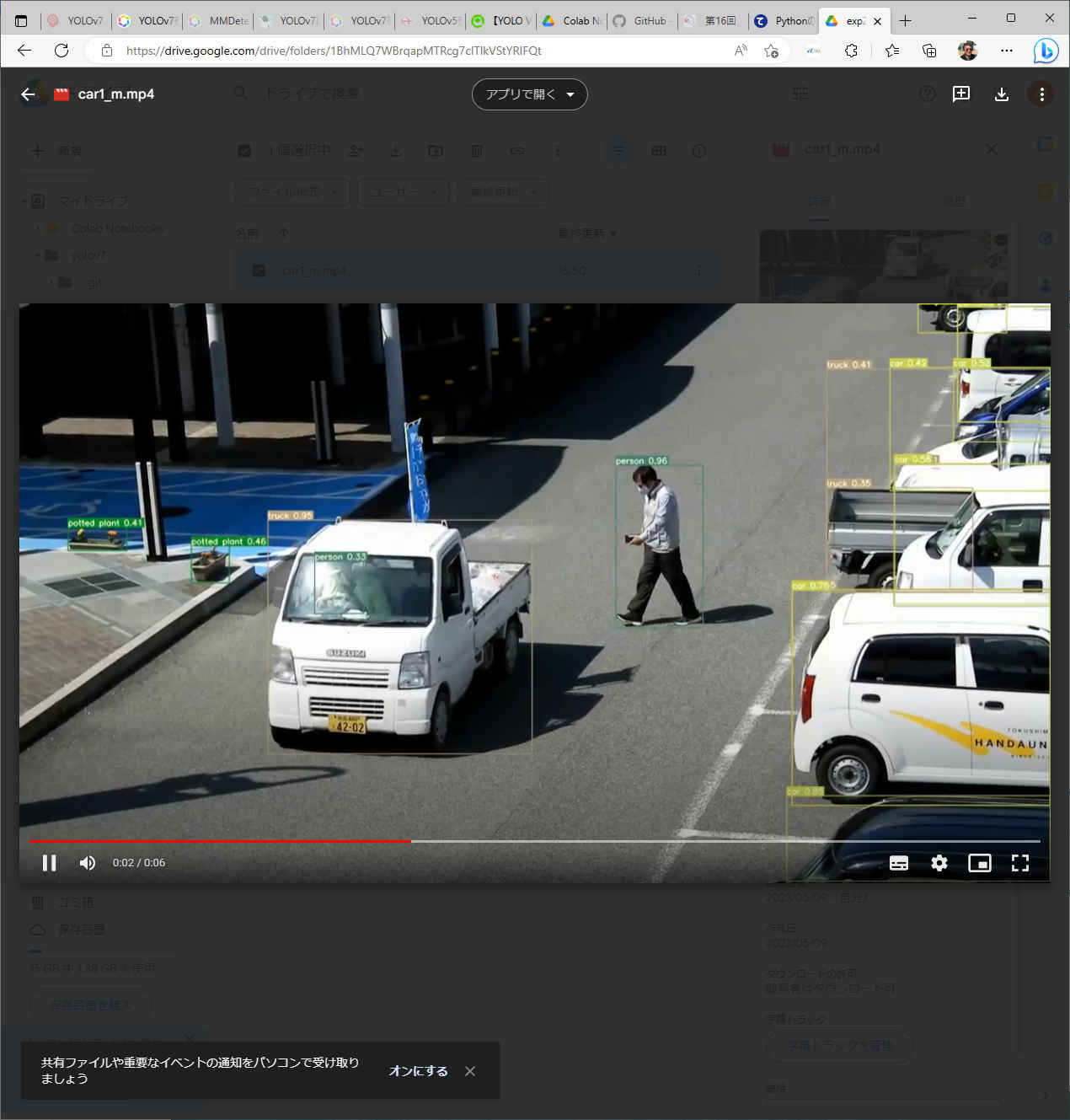

!python detect.py --weights runs/train/yolov7x/weights/best.pt --conf 0.25 --img-size 640 --source mask.mov

Namespace(weights=['runs/train/yolov7x/weights/best.pt'], source='mask.mov', img_size=640, conf_thres=0.25, iou_thres=0.45, device='', view_img=False, save_txt=False, save_conf=False, nosave=False, classes=None, agnostic_nms=False, augment=False, update=False, project='runs/detect', name='exp', exist_ok=False, no_trace=False)

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CUDA:0 (Tesla T4, 15101.8125MB)

Fusing layers...

IDetect.fuse

Model Summary: 362 layers, 70789182 parameters, 0 gradients

Convert model to Traced-model...

traced_script_module saved!

model is traced!

/usr/local/lib/python3.10/dist-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:3483.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

video 1/1 (1/377) /content/drive/MyDrive/yolov7/mask.mov: 6 masks, Done. (25.2ms) Inference, (1.4ms) NMS

video 1/1 (2/377) /content/drive/MyDrive/yolov7/mask.mov: 6 masks, Done. (25.2ms) Inference, (1.2ms) NMS

video 1/1 (3/377) /content/drive/MyDrive/yolov7/mask.mov: 6 masks, Done. (25.2ms) Inference, (1.1ms) NMS

:

:

video 1/1 (373/377) /content/drive/MyDrive/yolov7/mask.mov: 4 masks, 1 no-mask, Done. (13.8ms) Inference, (2.9ms) NMS

video 1/1 (374/377) /content/drive/MyDrive/yolov7/mask.mov: 4 masks, 1 no-mask, Done. (12.2ms) Inference, (0.9ms) NMS

video 1/1 (375/377) /content/drive/MyDrive/yolov7/mask.mov: 5 masks, Done. (12.2ms) Inference, (1.0ms) NMS

video 1/1 (376/377) /content/drive/MyDrive/yolov7/mask.mov: 6 masks, Done. (12.2ms) Inference, (1.3ms) NMS

video 1/1 (377/377) /content/drive/MyDrive/yolov7/mask.mov: 4 masks, 1 no-mask, Done. (12.8ms) Inference, (0.9ms) NMS

Done. (13.416s)

!pip install onnx

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Collecting onnx

Downloading onnx-1.14.0-cp310-cp310-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (14.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 14.6/14.6 MB 103.1 MB/s eta 0:00:00

Requirement already satisfied: numpy in /usr/local/lib/python3.10/dist-packages (from onnx) (1.22.4)

Requirement already satisfied: protobuf>=3.20.2 in /usr/local/lib/python3.10/dist-packages (from onnx) (3.20.3)

Requirement already satisfied: typing-extensions>=3.6.2.1 in /usr/local/lib/python3.10/dist-packages (from onnx) (4.5.0)

Installing collected packages: onnx

Successfully installed onnx-1.14.0

!python export.py --weights runs/train/yolov7x/weights/best.pt

Import onnx_graphsurgeon failure: No module named 'onnx_graphsurgeon'

Namespace(weights='runs/train/yolov7x/weights/best.pt', img_size=[640, 640], batch_size=1, dynamic=False, dynamic_batch=False, grid=False, end2end=False, max_wh=None, topk_all=100, iou_thres=0.45, conf_thres=0.25, device='cpu', simplify=False, include_nms=False, fp16=False, int8=False)

YOLOR 🚀 v0.1-122-g3b41c2c torch 2.0.0+cu118 CPU

Fusing layers...

IDetect.fuse

Model Summary: 362 layers, 70789182 parameters, 0 gradients

Starting TorchScript export with torch 2.0.0+cu118...

TorchScript export success, saved as runs/train/yolov7x/weights/best.torchscript.pt

CoreML export failure: No module named 'coremltools'

Starting TorchScript-Lite export with torch 2.0.0+cu118...

TorchScript-Lite export success, saved as runs/train/yolov7x/weights/best.torchscript.ptl

Starting ONNX export with onnx 1.14.0...

/content/drive/MyDrive/yolov7/models/yolo.py:582: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if augment:

/content/drive/MyDrive/yolov7/models/yolo.py:614: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if profile:

/content/drive/MyDrive/yolov7/models/yolo.py:629: TracerWarning: Converting a tensor to a Python boolean might cause the trace to be incorrect. We can't record the data flow of Python values, so this value will be treated as a constant in the future. This means that the trace might not generalize to other inputs!

if profile:

============= Diagnostic Run torch.onnx.export version 2.0.0+cu118 =============

verbose: False, log level: Level.ERROR

======================= 0 NONE 0 NOTE 0 WARNING 0 ERROR ========================

ONNX export success, saved as runs/train/yolov7x/weights/best.onnx

Export complete (71.51s). Visualize with https://github.com/lutzroeder/netron.
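Before copying the exported file to another machine, it can be sanity-checked with the `onnx` package installed above. The sketch below loads the model, runs the structural checker, and reports the declared input shapes. `check_onnx` is a hypothetical helper; it uses `onnx.load` and `onnx.checker.check_model`, and it verifies only that the file is well-formed, not that its detections are correct.

```python
from pathlib import Path


def check_onnx(path):
    """Validate an ONNX file and return {input name: list of dimension sizes}."""
    model_path = Path(path)
    if not model_path.is_file():
        raise FileNotFoundError(model_path)
    import onnx  # imported lazily; installed earlier with "pip install onnx"

    model = onnx.load(str(model_path))
    onnx.checker.check_model(model)  # raises if the graph is malformed
    shapes = {}
    for tensor in model.graph.input:
        dims = tensor.type.tensor_type.shape.dim
        # dim_value is 0 for symbolic (dynamic) dimensions.
        shapes[tensor.name] = [dim.dim_value for dim in dims]
    return shapes


if __name__ == "__main__":
    target = "runs/train/yolov7x/weights/best.onnx"
    if Path(target).is_file():
        print(check_onnx(target))
    else:
        print("Nothing to check:", target, "not found")
```

With the export settings shown in the log (`batch_size=1`, `img_size=[640, 640]`, `dynamic=False`), the reported input shape would be expected to be static.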

mask

no-mask

- mask.names_jp (Japanese labels meaning "wearing a mask" and "not wearing a mask")

マスク着用

マスク未装着
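A label file like this maps class indices to display names by line order: class 0 is the first line, class 1 the second. The sketch below reads such a file, assuming one label per line and UTF-8 encoding, which matters for the Japanese names. `load_labels` is a hypothetical helper and is not taken from the detection script used on this page.

```python
from pathlib import Path


def load_labels(path):
    """Read one label per line; the list index is the class id."""
    text = Path(path).read_text(encoding="utf-8")
    return [line.strip() for line in text.splitlines() if line.strip()]


if __name__ == "__main__":
    label_file = Path("mask.names_jp")
    if label_file.is_file():
        for class_id, label in enumerate(load_labels(label_file)):
            print(class_id, label)
```

The order must match the `names` list used for training, otherwise detections are displayed with swapped labels.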

(py38a) PS cd /anaconda_win/work/yolov7

(py38a) PS > python object_detect_yolo7.py -m mask_best.onnx -l mask.names_jp -i ../../Images/mask.jpg -o out_mask.jpg

Starting..

- Program title : Object detection YOLO V7

- OpenCV version : 4.5.5

- OpenVINO engine: 2022.1.0-7019-cdb9bec7210-releases/2022/1

- Input image : ../../Images/mask.jpg

- Model : mask_best.onnx

- Device : CPU

- Label : mask.names_jp

- Log level : 3

- Title flag : y

- Speed flag : y

- Processed out : out_mask.jpg

- Preprocessing : False

- Batch size : 1

- number of inf : 1

- With grid : False

FPS average: 1.20

Finished.
