
Now that I can adapt existing source code reasonably well, I build a set of Neural Compute Applications here, drawing on the results obtained so far.

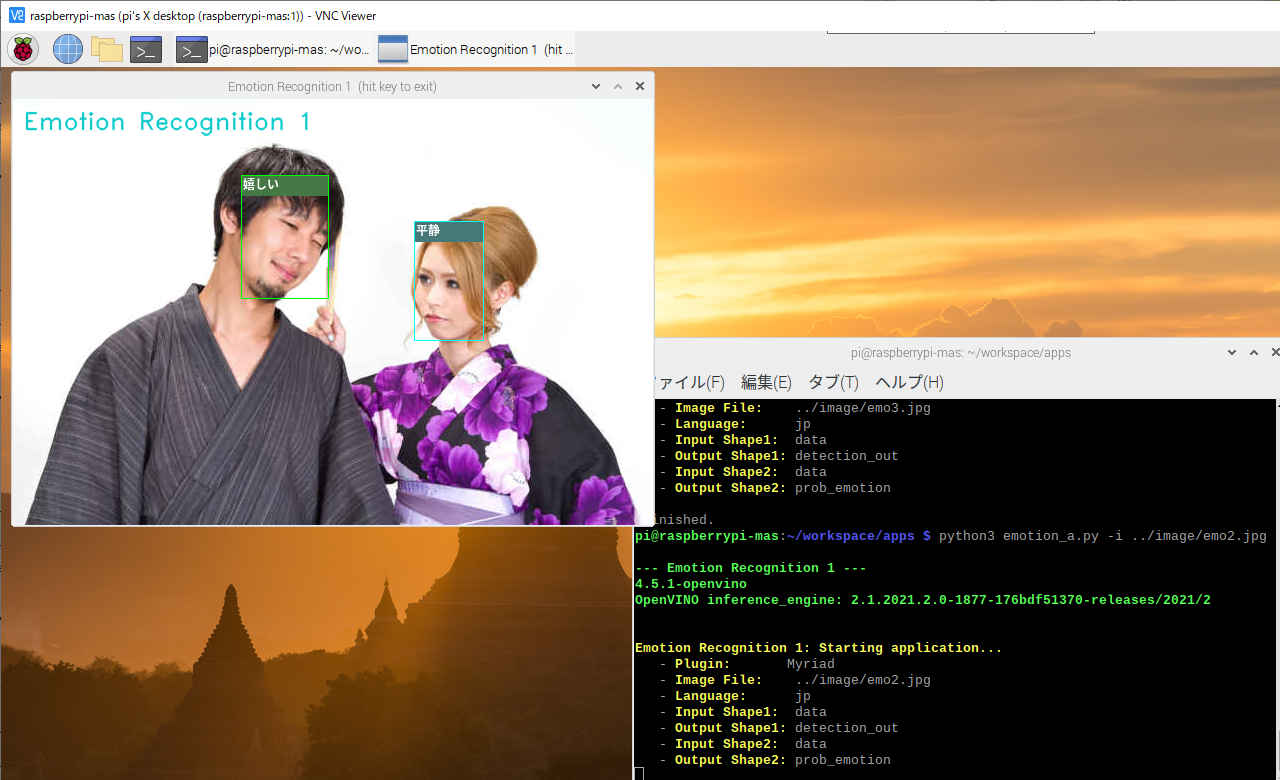

Locates the face regions in an image and infers each face's emotion with deep learning.

| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| -i, --image | cam | Camera (cam) or input image file |
| -l, --language | jp | Language (en/jp) |
| -t, --title | y | Show title (y/n) |
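As a rough illustration of how the options in this table map onto `argparse`, the sketch below declares the same three options. The function name `build_parser` and the `choices` restriction are additions made for illustration only; the original script accepts any string for `-l` and `-t`.

```python
import argparse


def build_parser():
    """Declare the -i/-l/-t options from the table (illustrative sketch)."""
    parser = argparse.ArgumentParser(
        description='Image classifier using Intel Neural Compute Stick 2.')
    parser.add_argument('-i', '--image', metavar='IMAGE_FILE', type=str,
                        default='cam',
                        help="Path to an image file, or 'cam' for the camera.")
    # Assumption: restricting the values with `choices` is not in the original.
    parser.add_argument('-l', '--language', choices=['jp', 'en'], default='jp',
                        help='Label language (jp/en).')
    parser.add_argument('-t', '--title', choices=['y', 'n'], default='y',
                        help='Draw the program title (y/n).')
    return parser


args = build_parser().parse_args(['-i', '../image/emo2.jpg', '-l', 'en'])
print(args.image, args.language, args.title)
```

With `choices`, a mistyped value such as `-t yes` is rejected by the parser instead of silently disabling the title.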

pi@raspberrypi:~/workspace/apps $ python3 emotion.py -h

--- Emotion Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

usage: emotion.py [-h] [-i IMAGE_FILE] [-l LANGUAGE] [-t TITLE]

Image classifier using Intel® Neural Compute Stick 2.

optional arguments:

-h, --help show this help message and exit

-i IMAGE_FILE, --image IMAGE_FILE

Absolute path to image file or cam for camera stream.

-l LANGUAGE, --language LANGUAGE

Language.

-t TITLE, --title TITLE

Language.

$ cd ~/workspace/apps

pi@raspberrypi:~/workspace/apps $ python3 emotion.py

--- Emotion Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Emotion Recognition: Starting application...

- Plugin: Myriad

- Image File: 0

- Language: jp

- Input Shape1: data

- Output Shape1: detection_out

- Input Shape2: data

- Output Shape2: prob_emotion

- Program Title: y

Finished.

pi@raspberrypi:~/workspace/apps $ python3 emotion.py -i ../image/emo2.jpg

--- Emotion Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Emotion Recognition: Starting application...

- Plugin: Myriad

- Image File: ../image/emo2.jpg

- Language: jp

- Input Shape1: data

- Output Shape1: detection_out

- Input Shape2: data

- Output Shape2: prob_emotion

- Program Title: y

Finished.

pi@raspberrypi:~/workspace/apps $ python3 emotion.py -i ../image/emo1.jpg

pi@raspberrypi:~/workspace/apps $ python3 emotion.py -i ../image/emo3.jpg

pi@raspberrypi:~/workspace/apps $ python3 emotion.py -i ../../Videos/video-test.mp4
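The commands above feed the same script a still image, a video file, or the camera. The script tells these apart with `imghdr.what()`, a module that was removed from the standard library in Python 3.13. The following is a minimal sketch of the same decision based on file signatures. The name `classify_input` and the signature table are hypothetical, and only JPEG and PNG are recognized here.

```python
import os

# Leading bytes ("magic numbers") of the two image formats checked here.
SIGNATURES = {
    b'\xff\xd8\xff': 'jpeg',
    b'\x89PNG\r\n\x1a\n': 'png',
}


def classify_input(path):
    """Return 'camera', 'image', 'stream' or 'notfound' for an -i value."""
    if path.lower() in ('cam', 'camera'):
        return 'camera'
    if not os.path.isfile(path):
        return 'notfound'
    with open(path, 'rb') as f:
        head = f.read(8)
    for magic in SIGNATURES:
        if head.startswith(magic):
            return 'image'
    # Anything else is treated as a video stream, as the original script does.
    return 'stream'
```

Treating every non-image file as a stream mirrors the original logic, where `is_pict()` returns `'None'` for files that are not recognized as images.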

# -*- coding: utf-8 -*-

##------------------------------------------

## OpenVINO™ toolkit

## Emotion Recognition

##

## model: face-detection-adas-0001

## emotions-recognition-retail-0003

##

## 2021.02.24 Masahiro Izutsu

##------------------------------------------

## emotion.py

# Color Escape Code

GREEN = '\033[1;32m'

RED = '\033[1;31m'

NOCOLOR = '\033[0m'

YELLOW = '\033[1;33m'

# 定数定義

DEVICE = "MYRIAD"

MODULE_FACE = '../FP16/face-detection-adas-0001'

MODULE_AGE = '../FP16/emotions-recognition-retail-0003'

WINDOW_WIDTH = 640

TEXT_COLOR = (255, 255, 255) # white text

# モジュール読み込み

from openvino.inference_engine import IECore

from openvino.inference_engine import get_version

# import処理

import sys

import cv2

import numpy as np

import argparse

import myfunction

# タイトル・バージョン情報

title = 'Emotion Recognition'

print(GREEN)

print('--- {} ---'.format(title))

print(cv2.__version__)

print("OpenVINO inference_engine:", get_version())

print(NOCOLOR)

# Parses arguments for the application

def parse_args():

    parser = argparse.ArgumentParser(description = 'Image classifier using '

        'Intel® Neural Compute Stick 2.')

    parser.add_argument('-i', '--image', metavar = 'IMAGE_FILE',

        type = str, default = 'cam',

        help = 'Absolute path to image file or cam for camera stream.')

    parser.add_argument('-l', '--language', metavar = 'LANGUAGE', default = 'jp',

        help = 'Language.')

    # NOTE: this help text repeats the --language text; emotion2.py uses 'Program title flag.(y/n)' here

    parser.add_argument('-t', '--title', metavar = 'TITLE', default = 'y',

        help = 'Language.')

    return parser

# モデル基本情報の表示

def display_info(image, lang, input_blob, out_blob, input_blob_emo, out_blob_emo, titleflg):

print(YELLOW + title + ': Starting application...' + NOCOLOR)

print(' - ' + YELLOW + 'Plugin: ' + NOCOLOR + 'Myriad')

print(' - ' + YELLOW + 'Image File: ' + NOCOLOR, image)

print(' - ' + YELLOW + 'Language: ' + NOCOLOR, lang)

print(' - ' + YELLOW + 'Input Shape1: ' + NOCOLOR, input_blob)

print(' - ' + YELLOW + 'Output Shape1:' + NOCOLOR, out_blob)

print(' - ' + YELLOW + 'Input Shape2: ' + NOCOLOR, input_blob_emo)

print(' - ' + YELLOW + 'Output Shape2:' + NOCOLOR, out_blob_emo)

print(' - ' + YELLOW + 'Program Title:' + NOCOLOR, titleflg)

# Determine the type of an image file

# Returns: 'jpeg', 'png', ... for an image file

# 'None' for anything that is not an image file (e.g. a video file)

# 'NotFound' if the file does not exist

import imghdr

def is_pict(filename):

    try:

        imgtype = imghdr.what(filename)

    except FileNotFoundError:

        imgtype = 'NotFound'

    return str(imgtype)

# ** main関数 **

def main():

# 日本語フォント指定

fontPIL = 'NotoSansCJK-Bold.ttc'

# Argument parsing and parameter setting

ARGS = parse_args().parse_args()

input_stream = ARGS.image

lang = ARGS.language

titleflg = ARGS.title

if ARGS.image.lower() == "cam" or ARGS.image.lower() == "camera":

input_stream = 0

isstream = True

else:

filetype = is_pict(input_stream)

isstream = filetype == 'None'

if (filetype == 'NotFound'):

print(RED + "\ninput file Not found." + NOCOLOR)

quit()

# 感情ラベル

if (lang == 'jp'):

list_emotion = ['平静', '嬉しい', '悲しい', '驚き', '怒り']

else:

list_emotion = ['neutral', 'happy', 'sad', 'surprise', 'anger']

# 感情色ラベル

color_emotion = [(255, 255, 0), ( 0, 255, 0), ( 0, 255, 255), (255, 0, 255), ( 0, 0, 255)]

bkcolor_emotion = [(120, 120, 70), ( 70, 120, 70), ( 70, 120, 120), (120, 70, 120), ( 70, 70, 120)]

textcolor_emotion = [(255, 255, 255), (255, 255, 255), (255, 255, 255), (255, 255, 255), (255, 255, 255)]

# モデルの読み込み (顔検出)face-detection-adas-0001

ie = IECore()

net = ie.read_network(model = MODULE_FACE + '.xml', weights = MODULE_FACE + '.bin')

exec_net = ie.load_network(network = net, device_name = DEVICE)

# 入出力設定(顔検出)

input_blob = net.input_info['data'].name

out_blob = next(iter(net.outputs))

n, c, h, w = net.input_info[input_blob].input_data.shape

# モデルの読み込み(感情検出)emotions-recognition-retail-0003

net_emo = ie.read_network(model = MODULE_AGE + '.xml', weights = MODULE_AGE + '.bin')

exec_net_emo = ie.load_network(network = net_emo, device_name=DEVICE)

# Input/output settings (emotion): read them from net_emo, not from the face-detection net

input_blob_emo = net_emo.input_info['data'].name

out_blob_emo = next(iter(net_emo.outputs))

n_emo, c_emo, h_emo, w_emo = net_emo.input_info[input_blob_emo].input_data.shape

# 情報表示

display_info(input_stream, lang, input_blob, out_blob, input_blob_emo, out_blob_emo, titleflg)

# 入力準備

if (isstream):

# カメラ

cap = cv2.VideoCapture(input_stream)

ret, frame = cap.read()

loopflg = cap.isOpened()

else:

# 画像ファイル読み込み

frame = cv2.imread(input_stream)

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# アスペクト比を固定してリサイズ

img_h, img_w = frame.shape[:2]

if (img_w > WINDOW_WIDTH):

height = round(img_h * (WINDOW_WIDTH / img_w))

frame = cv2.resize(frame, dsize = (WINDOW_WIDTH, height))

loopflg = True # 1回ループ

# メインループ

while (loopflg):

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# 入力データフォーマットへ変換

img = cv2.resize(frame, (w, h)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

out = out['detection_out']

out = np.squeeze(out) #サイズ1の次元を全て削除

# 検出されたすべての顔領域に対して1つずつ処理

for detection in out:

# conf値の取得

confidence = float(detection[2])

# バウンディングボックス座標を入力画像のスケールに変換

xmin = int(detection[3] * frame.shape[1])

ymin = int(detection[4] * frame.shape[0])

xmax = int(detection[5] * frame.shape[1])

ymax = int(detection[6] * frame.shape[0])

# conf値が0.5より大きい場合のみバウンディングボックス表示

if confidence > 0.5:

# 顔検出領域はカメラ範囲内に補正する。特にminは補正しないとエラーになる

if xmin < 0:

xmin = 0

if ymin < 0:

ymin = 0

if xmax > frame.shape[1]:

xmax = frame.shape[1]

if ymax > frame.shape[0]:

ymax = frame.shape[0]

# 顔領域のみ切り出し

frame_face = frame[ ymin:ymax, xmin:xmax ]

# 入力データフォーマットへ変換

img = cv2.resize(frame_face, (64, 64)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net_emo.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

out = out['prob_emotion']

out = np.squeeze(out) # 不要な次元の削減

# 出力値が最大のインデックスを得る

emoid = np.argmax(out)

emotion = list_emotion[emoid]

# バウンディングボックス(顔領域)表示

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymin), bkcolor_emotion[emoid], -1)

# cv2.putText(frame, emotion, (xmin, ymin-4), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=cor, lineType=cv2.LINE_AA)

myfunction.cv2_putText(img = frame,

text = emotion,

org = (xmin+2, ymin-4),

fontFace = fontPIL,

fontScale = 12,

color = textcolor_emotion[emoid],

mode = 0)

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymax), color_emotion[emoid], thickness = 1)

# タイトル描画

if (titleflg == 'y'):

cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

# 画像表示

window_name = title + ' (hit key to exit)'

cv2.imshow(window_name, frame)

cv2.moveWindow(window_name, 10, 40)

# 何らかのキーが押されたら終了

breakflg = False

while(True):

key = cv2.waitKey(1)

prop_val = cv2.getWindowProperty(window_name, cv2.WND_PROP_ASPECT_RATIO)

if ((key != -1) or (prop_val < 0.0)):

breakflg = True

break

if (isstream):

break

if ((breakflg == False) and isstream):

# 次のフレームを読み出す

ret, frame = cap.read()

if ret == False:

break

loopflg = cap.isOpened()

else:

loopflg = False

# 終了処理

if (isstream):

cap.release()

cv2.destroyAllWindows()

print('\n Finished.')

# main関数エントリーポイント(実行開始)

if __name__ == "__main__":

sys.exit(main())
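The main loop above scales each detection's normalized box to pixel coordinates and clamps it to the frame before cropping the face. The sketch below isolates that step as a pure function. The name `to_pixel_box` is hypothetical, and returning `None` for an empty box is an added safeguard (assumption: a zero-area crop would make the subsequent `cv2.resize` call fail), not something the listing does.

```python
def to_pixel_box(detection, frame_w, frame_h, threshold=0.5):
    """Convert one detection row to a clamped pixel box.

    `detection` is indexed as in the listing: [2] is the confidence and
    [3]..[6] are xmin, ymin, xmax, ymax normalized to the range 0..1.
    Returns (xmin, ymin, xmax, ymax), or None if the detection is too
    weak or the clamped box has no area.
    """
    confidence = float(detection[2])
    if confidence <= threshold:
        return None
    xmin = max(0, int(detection[3] * frame_w))
    ymin = max(0, int(detection[4] * frame_h))
    xmax = min(frame_w, int(detection[5] * frame_w))
    ymax = min(frame_h, int(detection[6] * frame_h))
    # Added safeguard (not in the original): skip boxes with no area.
    if xmax <= xmin or ymax <= ymin:
        return None
    return xmin, ymin, xmax, ymax


# A box that sticks out of the frame on the left and at the bottom.
row = [0, 1, 0.9, -0.05, 0.25, 0.5, 1.2]
print(to_pixel_box(row, 640, 480))
```

Keeping the conversion separate from drawing makes it easy to check edge cases such as faces that are partly outside the camera view.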

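| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| -i, --image | cam | Camera (cam) or a video / still image file |
| -m_dt, --m_detector | ~/model/intel/FP32/face-detection-adas-0001.xml | Face detection model in IR format |
| -m_re, --m_recognition | ~/model/intel/FP32/emotions-recognition-retail-0003.xml | Emotion recognition model in IR format |
| -d, --device | CPU | Target device (CPU/MYRIAD) |
| -l, --language | jp | Language (en/jp) |
| -t, --title | y | Show title (y/n) |
| -s, --speed | y | Show speed measurement (y/n) |
| -o, --out | non | File path for saving the processed result |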

$ python3 emotion2.py -h

--- Emotion Recognition 2 ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

usage: emotion2.py [-h] [-i IMAGE_FILE] [-m_dt M_DETECTOR]

[-m_re M_RECOGNITION] [-d DEVICE] [-l LANGUAGE] [-t TITLE]

[-s SPEED] [-o IMAGE_OUT]

optional arguments:

-h, --help show this help message and exit

-i IMAGE_FILE, --image IMAGE_FILE

Absolute path to image file or cam for camera stream.

-m_dt M_DETECTOR, --m_detector M_DETECTOR

Detector Path to an .xml file with a trained

model.Default value is

/home/mizutu/model/intel/FP32/face-detection-

adas-0001.xml

-m_re M_RECOGNITION, --m_recognition M_RECOGNITION

Emotion Path to an .xml file with a trained

model.Default value is

/home/mizutu/model/intel/FP32/emotions-recognition-

retail-0003.xml

-d DEVICE, --device DEVICE

Optional. Specify a target device to infer on. CPU,

GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will

look for a suitable plugin for the device specified.

Default value is CPU

-l LANGUAGE, --language LANGUAGE

Language.(jp/en) Default value is 'jp'

-t TITLE, --title TITLE

Program title flag.(y/n) Default value is 'y'

-s SPEED, --speed SPEED

Speed display flag.(y/n) Default calue is 'y'

-o IMAGE_OUT, --out IMAGE_OUT

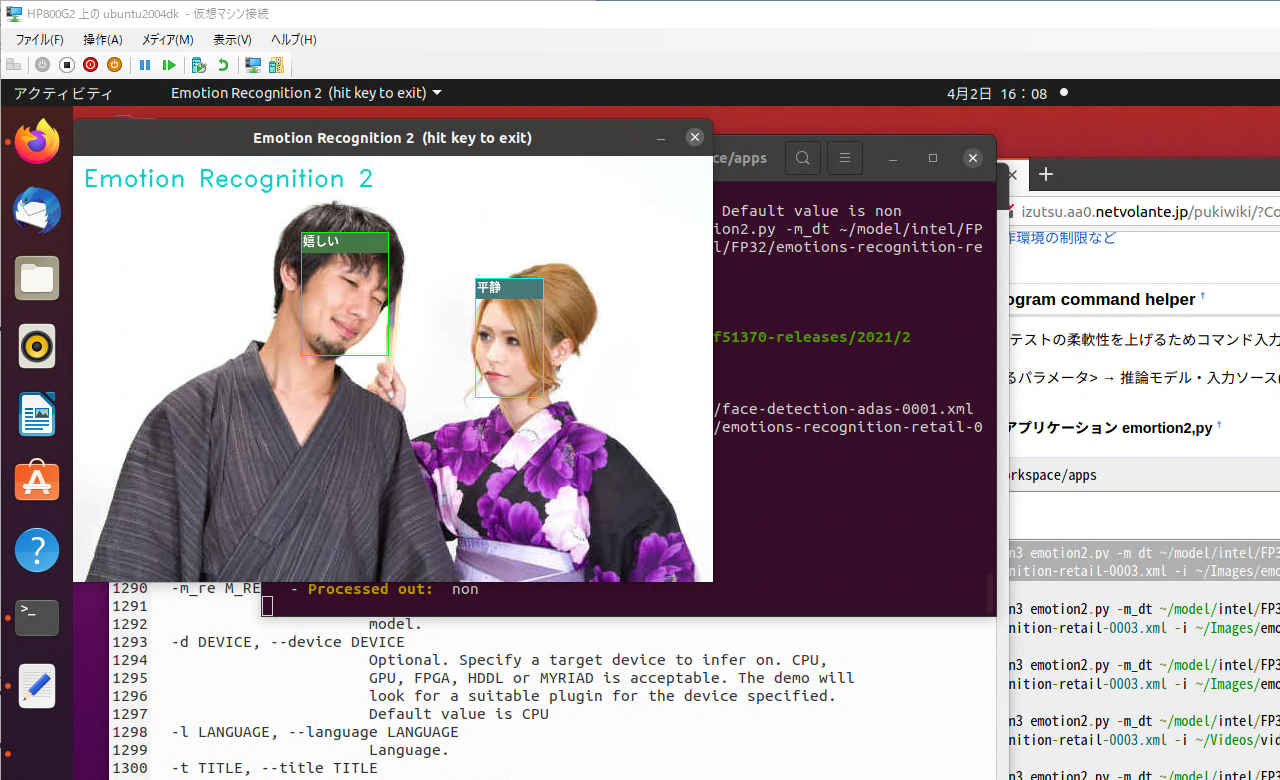

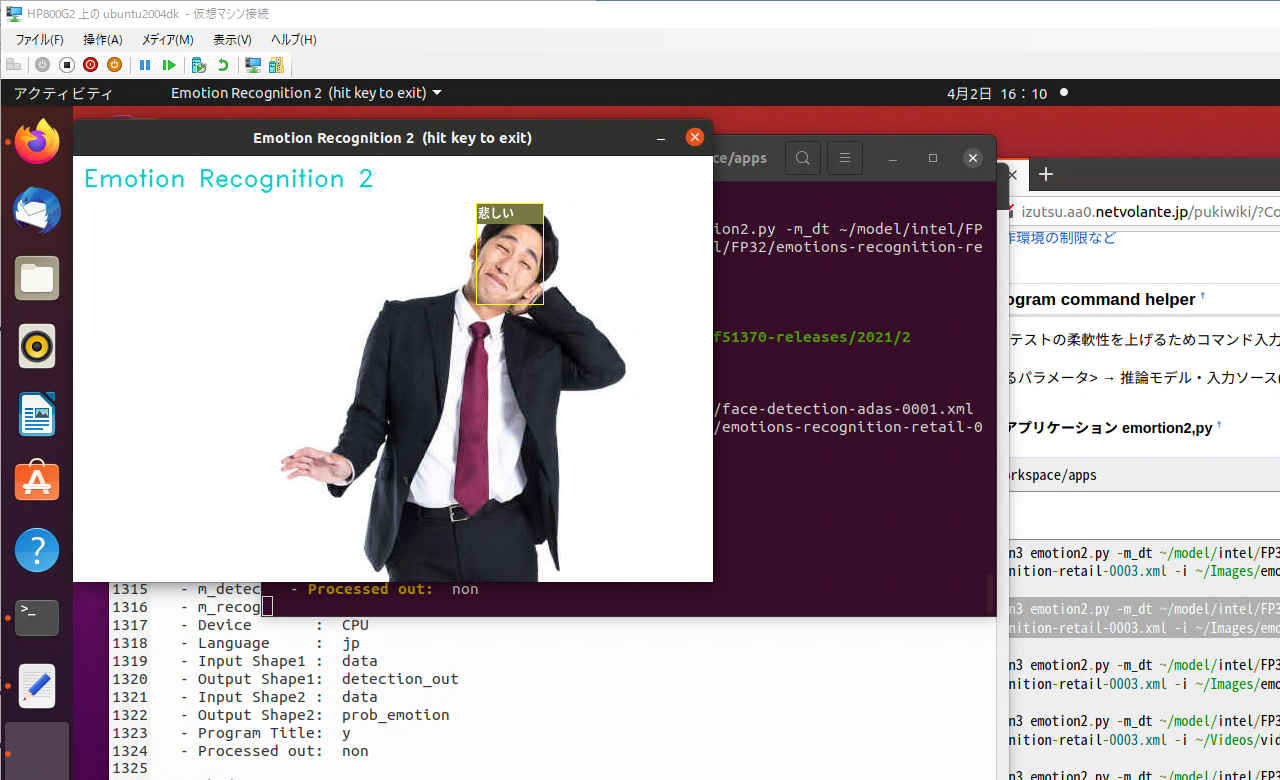

Processed image file path. Default value is 'non'$ python3 emotion2.py -i ~/Images/emo2.jpg --- Emotion Recognition 2 --- 4.5.2-openvino OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3 Emotion Recognition 2: Starting application... - Image File : /home/mizutu/Images/emo2.jpg - m_detect : /home/mizutu/model/intel/FP32/face-detection-adas-0001.xml - m_recognition: /home/mizutu/model/intel/FP32/emotions-recognition-retail-0003.xml - Device : CPU - Language : jp - Input Shape1 : data - Output Shape1: detection_out - Input Shape2 : data - Output Shape2: prob_emotion - Program Title: y - Speed flag : y - Processed out: non FPS average: 19.00 Finished.

$ python3 emotion2.py -i ~/Images/emo1.jpg --- Emotion Recognition 2 --- 4.5.2-openvino OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3 Emotion Recognition 2: Starting application... - Image File : /home/mizutu/Images/emo1.jpg - m_detect : /home/mizutu/model/intel/FP32/face-detection-adas-0001.xml - m_recognition: /home/mizutu/model/intel/FP32/emotions-recognition-retail-0003.xml - Device : CPU - Language : jp - Input Shape1 : data - Output Shape1: detection_out - Input Shape2 : data - Output Shape2: prob_emotion - Program Title: y - Speed flag : y - Processed out: non FPS average: 22.40 Finished.

$ cd ~/workspace/apps

◦ CPU

$ python3 emotion2.py -i ~/Images/emo2.jpg

$ python3 emotion2.py -i ~/Images/emo1.jpg

$ python3 emotion2.py -i ~/Images/emo3.jpg

$ python3 emotion2.py -i ~/Videos/video-test.mp4

$ python3 emotion2.py -i cam

◦ NCS2(MYRIAD)

$ python3 emotion2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/emotions-recognition-retail-0003.xml -i ~/Images/emo2.jpg -d MYRIAD

$ python3 emotion2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/emotions-recognition-retail-0003.xml -i ~/Images/emo1.jpg -d MYRIAD

$ python3 emotion2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/emotions-recognition-retail-0003.xml -i ~/Images/emo3.jpg -d MYRIAD

$ python3 emotion2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/emotions-recognition-retail-0003.xml -i ~/Videos/video-test.mp4 -d MYRIAD

$ python3 emotion2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/emotions-recognition-retail-0003.xml -i cam -d MYRIAD
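These commands pass only the `.xml` path of each model. The script derives the matching `.bin` path by slicing off the last four characters (`model_detector[:-4] + '.bin'`), which silently produces a wrong name if the argument does not end in `.xml`. A small sketch of a stricter derivation using `pathlib` follows; the helper name `weights_path_for` is hypothetical.

```python
from pathlib import Path


def weights_path_for(model_xml):
    """Return the .bin path that belongs to an IR .xml model path."""
    path = Path(model_xml).expanduser()
    if path.suffix.lower() != '.xml':
        # Fail early instead of building a bogus weights file name.
        raise ValueError('expected a path ending in .xml: {}'.format(model_xml))
    return str(path.with_suffix('.bin'))


print(weights_path_for('model/intel/FP16/face-detection-adas-0001.xml'))
```

`expanduser()` also resolves a leading `~` when the path reaches the script unexpanded, for example when it is quoted on the command line.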

# -*- coding: utf-8 -*-

##------------------------------------------

## OpenVINO™ toolkit

## Emotion Recognition

##

## model: face-detection-adas-0001

## emotions-recognition-retail-0003

##

## 2021.02.24 Masahiro Izutsu

##------------------------------------------

## 2021.03.25 model/device parameter

## 2021.06.23 fps display

# Color Escape Code

GREEN = '\033[1;32m'

RED = '\033[1;31m'

NOCOLOR = '\033[0m'

YELLOW = '\033[1;33m'

# 定数定義

WINDOW_WIDTH = 640

TEXT_COLOR = (255, 255, 255) # white text

from os.path import expanduser

MODEL_DEF_FACE = expanduser('~/model/intel/FP32/face-detection-adas-0001.xml')

MODEL_DEF_EMO = expanduser('~/model/intel/FP32/emotions-recognition-retail-0003.xml')

# モジュール読み込み

from openvino.inference_engine import IECore

from openvino.inference_engine import get_version

# import処理

import sys

import cv2

import numpy as np

import argparse

import myfunction

import mylib

# タイトル・バージョン情報

title = 'Emotion Recognition 2'

print(GREEN)

print('--- {} ---'.format(title))

print(cv2.__version__)

print("OpenVINO inference_engine:", get_version())

print(NOCOLOR)

# Parses arguments for the application

def parse_args():

parser = argparse.ArgumentParser()

parser.add_argument('-i', '--image', metavar = 'IMAGE_FILE', type = str, default = 'cam',

help = 'Absolute path to image file or cam for camera stream.')

parser.add_argument('-m_dt', '--m_detector', type=str,

default = MODEL_DEF_FACE,

help = 'Detector Path to an .xml file with a trained model.'

'Default value is '+MODEL_DEF_FACE)

parser.add_argument('-m_re', '--m_recognition', type=str,

default = MODEL_DEF_EMO,

help = 'Emotion Path to an .xml file with a trained model.'

'Default value is '+MODEL_DEF_EMO)

parser.add_argument('-d', '--device', default='CPU', type=str,

help = 'Optional. Specify a target device to infer on. CPU, GPU, FPGA, HDDL or MYRIAD is '

'acceptable. The demo will look for a suitable plugin for the device specified. '

'Default value is CPU')

parser.add_argument('-l', '--language', metavar = 'LANGUAGE',

default = 'jp',

help = 'Language.(jp/en) Default value is \'jp\'')

parser.add_argument('-t', '--title', metavar = 'TITLE',

default = 'y',

help = 'Program title flag.(y/n) Default value is \'y\'')

parser.add_argument('-s', '--speed', metavar = 'SPEED',

default = 'y',

help = 'Speed display flag.(y/n) Default value is \'y\'')

parser.add_argument('-o', '--out', metavar = 'IMAGE_OUT',

default = 'non',

help = 'Processed image file path. Default value is \'non\'')

return parser

# モデル基本情報の表示

def display_info(image, detector, recognition, device, lang, input_blob, out_blob, input_blob_emo, out_blob_emo, titleflg, speedflg, outpath):

print(YELLOW + title + ': Starting application...' + NOCOLOR)

print(' - ' + YELLOW + 'Image File : ' + NOCOLOR, image)

print(' - ' + YELLOW + 'm_detect : ' + NOCOLOR, detector)

print(' - ' + YELLOW + 'm_recognition: ' + NOCOLOR, recognition)

print(' - ' + YELLOW + 'Device : ' + NOCOLOR, device)

print(' - ' + YELLOW + 'Language : ' + NOCOLOR, lang)

print(' - ' + YELLOW + 'Input Shape1 : ' + NOCOLOR, input_blob)

print(' - ' + YELLOW + 'Output Shape1: ' + NOCOLOR, out_blob)

print(' - ' + YELLOW + 'Input Shape2 : ' + NOCOLOR, input_blob_emo)

print(' - ' + YELLOW + 'Output Shape2: ' + NOCOLOR, out_blob_emo)

print(' - ' + YELLOW + 'Program Title: ' + NOCOLOR, titleflg)

print(' - ' + YELLOW + 'Speed flag : ' + NOCOLOR, speedflg)

print(' - ' + YELLOW + 'Processed out: ' + NOCOLOR, outpath)

# Determine the type of an image file

# Returns: 'jpeg', 'png', ... for an image file

# 'None' for anything that is not an image file (e.g. a video file)

# 'NotFound' if the file does not exist

import imghdr

def is_pict(filename):

    try:

        imgtype = imghdr.what(filename)

    except FileNotFoundError:

        imgtype = 'NotFound'

    return str(imgtype)

# ** main関数 **

def main():

# 日本語フォント指定

fontPIL = 'NotoSansCJK-Bold.ttc'

# Argument parsing and parameter setting

ARGS = parse_args().parse_args()

input_stream = ARGS.image

lang = ARGS.language

titleflg = ARGS.title

speedflg = ARGS.speed

if ARGS.image.lower() == "cam" or ARGS.image.lower() == "camera":

input_stream = 0

isstream = True

else:

filetype = is_pict(input_stream)

isstream = filetype == 'None'

if (filetype == 'NotFound'):

print(RED + "\ninput file Not found." + NOCOLOR)

quit()

model_detector=ARGS.m_detector

model_recognition=ARGS.m_recognition

device = ARGS.device

outpath = ARGS.out

# 感情ラベル

if (lang == 'jp'):

list_emotion = ['平静', '嬉しい', '悲しい', '驚き', '怒り']

else:

list_emotion = ['neutral', 'happy', 'sad', 'surprise', 'anger']

# 感情色ラベル

color_emotion = [(255, 255, 0), ( 0, 255, 0), ( 0, 255, 255), (255, 0, 255), ( 0, 0, 255)]

bkcolor_emotion = [(120, 120, 70), ( 70, 120, 70), ( 70, 120, 120), (120, 70, 120), ( 70, 70, 120)]

textcolor_emotion = [(255, 255, 255), (255, 255, 255), (255, 255, 255), (255, 255, 255), (255, 255, 255)]

# モデルの読み込み (顔検出)face-detection-adas-0001

ie = IECore()

net = ie.read_network(model = model_detector, weights = model_detector[:-4] + '.bin')

exec_net = ie.load_network(network = net, device_name = device)

# 入出力設定(顔検出)

input_blob = net.input_info['data'].name

out_blob = next(iter(net.outputs))

n, c, h, w = net.input_info[input_blob].input_data.shape

# モデルの読み込み(感情検出)emotions-recognition-retail-0003

net_emo = ie.read_network(model = model_recognition, weights = model_recognition[:-4] + '.bin')

exec_net_emo = ie.load_network(network = net_emo, device_name=device)

# Input/output settings (emotion): read them from net_emo, not from the face-detection net

input_blob_emo = net_emo.input_info['data'].name

out_blob_emo = next(iter(net_emo.outputs))

n_emo, c_emo, h_emo, w_emo = net_emo.input_info[input_blob_emo].input_data.shape

# 情報表示

display_info(input_stream, model_detector, model_recognition, device, lang, input_blob, out_blob, input_blob_emo, out_blob_emo, titleflg, speedflg, outpath)

# 入力準備

if (isstream):

# カメラ

cap = cv2.VideoCapture(input_stream)

ret, frame = cap.read()

loopflg = cap.isOpened()

else:

# 画像ファイル読み込み

frame = cv2.imread(input_stream)

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# アスペクト比を固定してリサイズ

img_h, img_w = frame.shape[:2]

if (img_w > WINDOW_WIDTH):

height = round(img_h * (WINDOW_WIDTH / img_w))

frame = cv2.resize(frame, dsize = (WINDOW_WIDTH, height))

loopflg = True # 1回ループ

# 処理結果の記録 step1

if (outpath != 'non'):

if (isstream):

fps = int(cap.get(cv2.CAP_PROP_FPS))

out_w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

out_h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')

outvideo = cv2.VideoWriter(outpath, fourcc, fps, (out_w, out_h))

# 計測値初期化

fpsWithTick = mylib.fpsWithTick()

frame_count = 0

fps_total = 0

fpsWithTick.get() # fps計測開始

# メインループ

while (loopflg):

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# 入力データフォーマットへ変換

img = cv2.resize(frame, (w, h)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

out = out['detection_out']

out = np.squeeze(out) #サイズ1の次元を全て削除

# 検出されたすべての顔領域に対して1つずつ処理

for detection in out:

# conf値の取得

confidence = float(detection[2])

# バウンディングボックス座標を入力画像のスケールに変換

xmin = int(detection[3] * frame.shape[1])

ymin = int(detection[4] * frame.shape[0])

xmax = int(detection[5] * frame.shape[1])

ymax = int(detection[6] * frame.shape[0])

# conf値が0.5より大きい場合のみバウンディングボックス表示

if confidence > 0.5:

# 顔検出領域はカメラ範囲内に補正する。特にminは補正しないとエラーになる

if xmin < 0:

xmin = 0

if ymin < 0:

ymin = 0

if xmax > frame.shape[1]:

xmax = frame.shape[1]

if ymax > frame.shape[0]:

ymax = frame.shape[0]

# 顔領域のみ切り出し

frame_face = frame[ ymin:ymax, xmin:xmax ]

# 入力データフォーマットへ変換

img = cv2.resize(frame_face, (64, 64)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net_emo.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

out = out['prob_emotion']

out = np.squeeze(out) # 不要な次元の削減

# 出力値が最大のインデックスを得る

emoid = np.argmax(out)

emotion = list_emotion[emoid]

# バウンディングボックス(顔領域)表示

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymin), bkcolor_emotion[emoid], -1)

# cv2.putText(frame, emotion, (xmin, ymin-4), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=cor, lineType=cv2.LINE_AA)

myfunction.cv2_putText(img = frame,

text = emotion,

org = (xmin+2, ymin-4),

fontFace = fontPIL,

fontScale = 12,

color = textcolor_emotion[emoid],

mode = 0)

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymax), color_emotion[emoid], thickness = 1)

# FPSを計算する

fps = fpsWithTick.get()

st_fps = 'fps: {:>6.2f}'.format(fps)

if (speedflg == 'y'):

cv2.rectangle(frame, (10, 38), (95, 55), (90, 90, 90), -1)

cv2.putText(frame, st_fps, (15, 50), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.4, color=(255, 255, 255), lineType=cv2.LINE_AA)

# タイトル描画

if (titleflg == 'y'):

cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

# 画像表示

window_name = title + " (hit 'q' or 'esc' key to exit)"

cv2.namedWindow(window_name, cv2.WINDOW_AUTOSIZE)

cv2.imshow(window_name, frame)

# 処理結果の記録 step2

if (outpath != 'non'):

if (isstream):

outvideo.write(frame)

else:

cv2.imwrite(outpath, frame)

# 何らかのキーが押されたら終了

breakflg = False

while(True):

key = cv2.waitKey(1)

prop_val = cv2.getWindowProperty(window_name, cv2.WND_PROP_ASPECT_RATIO)

if key == 27 or key == 113 or (prop_val < 0.0): # 'esc' or 'q'

breakflg = True

break

if (isstream):

break

if ((breakflg == False) and isstream):

# 次のフレームを読み出す

ret, frame = cap.read()

if ret == False:

break

loopflg = cap.isOpened()

else:

loopflg = False

# 終了処理

if (isstream):

cap.release()

# 処理結果の記録 step3

if (outpath != 'non'):

if (isstream):

outvideo.release()

cv2.destroyAllWindows()

print('\nFPS average: {:>10.2f}'.format(fpsWithTick.get_average()))

print('\n Finished.')

# main関数エントリーポイント(実行開始)

if __name__ == "__main__":

sys.exit(main())
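The listing relies on `mylib.fpsWithTick`, whose source is not shown on this page. Judging only from how it is called, `get()` returns the frame rate since the previous call and `get_average()` returns the overall mean. The class below is a sketch of a counter with that interface built on `time.perf_counter`. It is an assumption about the behavior, not the author's implementation, and the name `FpsCounter` and the injectable `clock` parameter are hypothetical.

```python
import time


class FpsCounter:
    """Frame-rate counter with get()/get_average(), mirroring the calls above."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock      # injectable so the class can be tested
        self._last = None        # timestamp of the previous get() call
        self._frames = 0         # number of measured intervals
        self._elapsed = 0.0      # total measured time in seconds

    def get(self):
        """Return FPS since the previous call; the first call starts timing."""
        now = self._clock()
        if self._last is None:
            self._last = now
            return 0.0
        dt = now - self._last
        self._last = now
        self._frames += 1
        self._elapsed += dt
        return 1.0 / dt if dt > 0 else 0.0

    def get_average(self):
        """Return the mean FPS over all measured intervals."""
        return self._frames / self._elapsed if self._elapsed > 0 else 0.0
```

As in the listing, the counter would be started with one `get()` call before the loop and queried once per processed frame.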

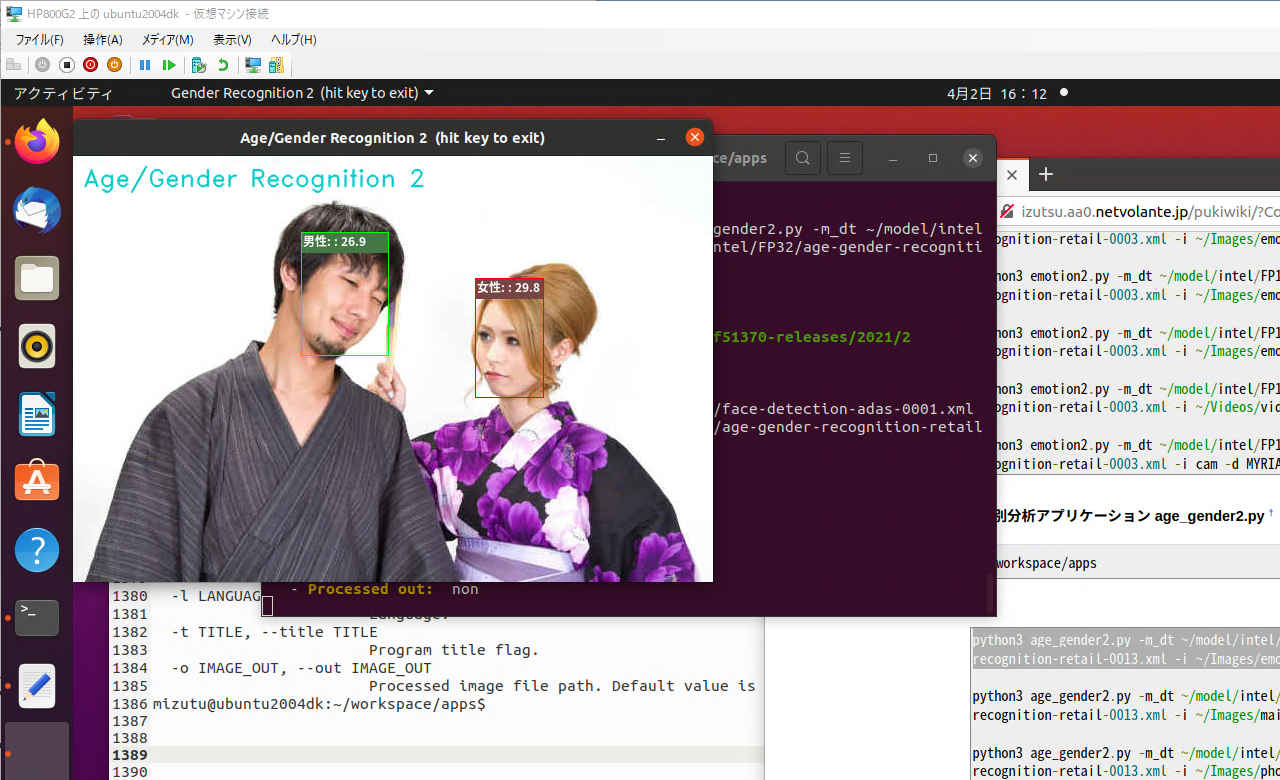

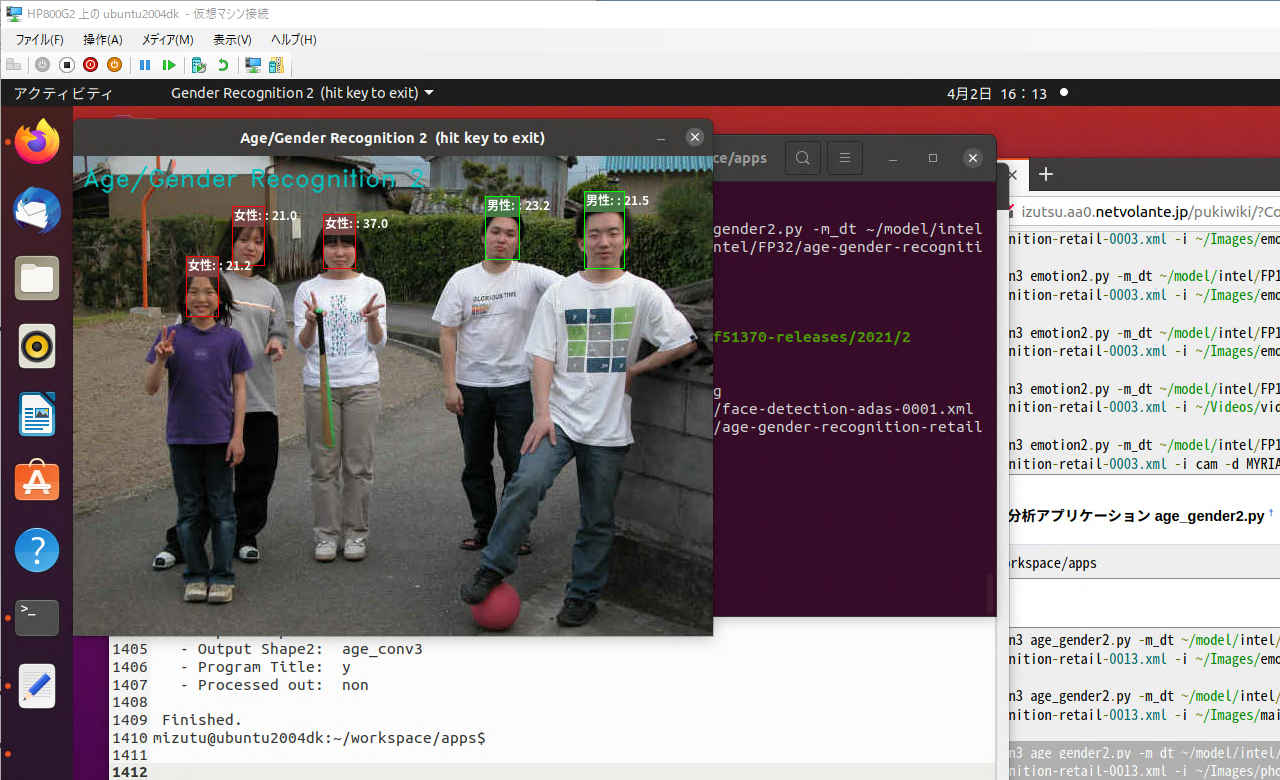

Locates the face regions in an image and infers each face's age and gender with deep learning.

| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| -i, --image | cam | Camera (cam) or input image file |
| -l, --language | jp | Language (en/jp) |
| -t, --title | y | Show title (y/n) |

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py -h

--- Age/Gender Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

usage: age_gender.py [-h] [-i IMAGE_FILE] [-l LANGUAGE] [-t TITLE]

Image classifier using Intel® Neural Compute Stick 2.

optional arguments:

-h, --help show this help message and exit

-i IMAGE_FILE, --image IMAGE_FILE

Absolute path to image file or cam for camera stream.

-l LANGUAGE, --language LANGUAGE

Language.

-t TITLE, --title TITLE

Language.

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py

--- Age/Gender Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Age/Gender Recognition: Starting application...

- Plugin: Myriad

- Image File: 0

- Language: jp

- Input Shape1: data

- Output Shape1: detection_out

- Input Shape2: data

- Output Shape2: age_conv3

Finished.

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py -i ../image/main001.jpg

--- Age/Gender Recognition ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Age/Gender Recognition: Starting application...

- Plugin: Myriad

- Image File: ../image/main001.jpg

- Language: jp

- Input Shape1: data

- Output Shape1: detection_out

- Input Shape2: data

- Output Shape2: age_conv3

Finished.

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py -i ../image/emo2.jpg

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py -i ../image/photo3.jpg

pi@raspberrypi:~/workspace/apps $ python3 age_gender.py -i ../../Videos/video-test.mp4

# -*- coding: utf-8 -*-

##------------------------------------------

## OpenVINO™ toolkit

## Age/Gender Recognition

##

## model: face-detection-adas-0001

## age-gender-recognition-retail-0013

##

## 2021.02.24 Masahiro Izutsu

##------------------------------------------

## age_gender.py

# Color Escape Code

GREEN = '\033[1;32m'

RED = '\033[1;31m'

NOCOLOR = '\033[0m'

YELLOW = '\033[1;33m'

# 定数定義

DEVICE = "MYRIAD"

MODULE_FACE = '../FP16/face-detection-adas-0001'

MODULE_AGE = '../FP16/age-gender-recognition-retail-0013'

WINDOW_WIDTH = 640

BOX_COLOR_M = ( 0,255, 0)

BOX_COLOR_F = ( 0, 0, 255)

LABEL_BG_COLOR_M = ( 70, 120, 70) # greyish green background for text

LABEL_BG_COLOR_F = ( 70, 70, 120) # greyish red background for text

TEXT_COLOR = (255, 255, 255) # white text

# モジュール読み込み

from openvino.inference_engine import IECore

from openvino.inference_engine import get_version

# import処理

import sys

import cv2

import numpy as np

import argparse

import myfunction

# タイトル・バージョン情報

title = 'Age/Gender Recognition'

print(GREEN)

print('--- {} ---'.format(title))

print(cv2.__version__)

print("OpenVINO inference_engine:", get_version())

print(NOCOLOR)

# Parses arguments for the application

def parse_args():

parser = argparse.ArgumentParser(description = 'Image classifier using \

Intel® Neural Compute Stick 2.' )

parser.add_argument( '-i', '--image', metavar = 'IMAGE_FILE',

type=str, default = 'cam',

help = 'Absolute path to image file or cam for camera stream.')

parser.add_argument( '-l', '--language', metavar = 'LANGUAGE', default = 'jp',

help = 'Language.')

parser.add_argument( '-t', '--title', metavar = 'TITLE', default = 'y',

help = 'Language.')

return parser

# モデル基本情報の表示

def display_info(image, lang, input_blob, out_blob, input_blob_age, out_blob_age, titleflg):

print(YELLOW + title + ': Starting application...' + NOCOLOR)

print(' - ' + YELLOW + 'Plugin: ' + NOCOLOR + 'Myriad')

print(' - ' + YELLOW + 'Image File: ' + NOCOLOR, image)

print(' - ' + YELLOW + 'Language: ' + NOCOLOR, lang)

print(' - ' + YELLOW + 'Input Shape1: ' + NOCOLOR, input_blob)

print(' - ' + YELLOW + 'Output Shape1:' + NOCOLOR, out_blob)

print(' - ' + YELLOW + 'Input Shape2: ' + NOCOLOR, input_blob_age)

print(' - ' + YELLOW + 'Output Shape2:' + NOCOLOR, out_blob_age)

print(' - ' + YELLOW + 'Program Title:' + NOCOLOR, titleflg)

# Determine the type of an image file

# Returns: 'jpeg', 'png', ... for an image file

# 'None' for anything that is not an image file (e.g. a video file)

# 'NotFound' if the file does not exist

import imghdr

def is_pict(filename):

    try:

        imgtype = imghdr.what(filename)

    except FileNotFoundError:

        imgtype = 'NotFound'

    return str(imgtype)

# ** main関数 **

def main():

# 日本語フォント指定

fontPIL = 'NotoSansCJK-Bold.ttc'

# Argument parsing and parameter setting

ARGS = parse_args().parse_args()

input_stream = ARGS.image

lang = ARGS.language

titleflg = ARGS.title

if ARGS.image.lower() == "cam" or ARGS.image.lower() == "camera":

input_stream = 0

isstream = True

else:

filetype = is_pict(input_stream)

isstream = filetype == 'None'

if (filetype == 'NotFound'):

print(RED + "\ninput file Not found." + NOCOLOR)

quit()

# 性別ラベル

if (lang == 'jp'):

label = ('女性: ', '男性: ')

else:

label = ('Female: ', 'Male: ')

# モデルの読み込み (顔検出)face-detection-adas-0001

ie = IECore()

net = ie.read_network(model = MODULE_FACE + '.xml', weights = MODULE_FACE + '.bin')

exec_net = ie.load_network(network = net, device_name = DEVICE)

# 入出力設定(顔検出)

input_blob = net.input_info['data'].name

out_blob = next(iter(net.outputs))

n, c, h, w = net.input_info[input_blob].input_data.shape

# モデルの読み込み(年齢/性別)age-gender-recognition-retail-0013

net_age = ie.read_network(model = MODULE_AGE + '.xml', weights = MODULE_AGE + '.bin')

exec_net_age = ie.load_network(network = net_age, device_name=DEVICE)

# Input/output settings (age/gender): read them from net_age, not from the face-detection net

input_blob_age = net_age.input_info['data'].name

out_blob_age = next(iter(net_age.outputs))

n_age, c_age, h_age, w_age = net_age.input_info[input_blob_age].input_data.shape

# 情報表示

display_info(input_stream, lang, input_blob, out_blob, input_blob_age, out_blob_age, titleflg)

# 入力準備

if (isstream):

# カメラ

cap = cv2.VideoCapture(input_stream)

ret, frame = cap.read()

loopflg = cap.isOpened()

else:

# 画像ファイル読み込み

frame = cv2.imread(input_stream)

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# アスペクト比を固定してリサイズ

img_h, img_w = frame.shape[:2]

if (img_w > WINDOW_WIDTH):

height = round(img_h * (WINDOW_WIDTH / img_w))

frame = cv2.resize(frame, dsize = (WINDOW_WIDTH, height))

loopflg = True # 1回ループ

# メインループ

while (loopflg):

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

# 入力データフォーマットへ変換

img = cv2.resize(frame, (w, h)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

out = out['detection_out']

out = np.squeeze(out) # サイズ1の次元を全て削除

# 検出されたすべての顔領域に対して1つずつ処理

for detection in out:

# conf値の取得

confidence = float(detection[2])

# バウンディングボックス座標を入力画像のスケールに変換

xmin = int(detection[3] * frame.shape[1])

ymin = int(detection[4] * frame.shape[0])

xmax = int(detection[5] * frame.shape[1])

ymax = int(detection[6] * frame.shape[0])

# conf値が0.5より大きい場合のみバウンディングボックス表示

if confidence > 0.5:

# 顔検出領域はカメラ範囲内に補正する。特にminは補正しないとエラーになる

if xmin < 0:

xmin = 0

if ymin < 0:

ymin = 0

if xmax > frame.shape[1]:

xmax = frame.shape[1]

if ymax > frame.shape[0]:

ymax = frame.shape[0]

# 顔領域のみ切り出し

frame_face = frame[ ymin:ymax, xmin:xmax ]

# 入力データフォーマットへ変換

img = cv2.resize(frame_face, (62, 62)) # サイズ変更

img = img.transpose((2, 0, 1)) # HWC > CHW

img = np.expand_dims(img, axis=0) # 次元合せ

# 推論実行

out = exec_net_age.infer(inputs={'data': img})

# 出力から必要なデータのみ取り出し

age = out['age_conv3']

prob = out['prob']

age = age[0][0][0][0] * 100

gender = label[np.argmax(prob[0])]

if gender == label[0]:

box_color = BOX_COLOR_F

label_bgcolor = LABEL_BG_COLOR_F

else:

box_color = BOX_COLOR_M

label_bgcolor = LABEL_BG_COLOR_M

out_str = gender + '{:>5.1f}'.format(age) # the label already ends with ': '

label_text_color = TEXT_COLOR

# バウンディングボックス(顔領域)表示

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymin), label_bgcolor, -1)

# cv2.putText(frame, out_str, (xmin, ymin-4), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=cor, lineType=cv2.LINE_AA)

myfunction.cv2_putText(img = frame,

text = out_str,

org = (xmin+2, ymin-4),

fontFace = fontPIL,

fontScale = 12,

color = label_text_color,

mode = 0)

cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymax), box_color, thickness = 1)

# タイトル描画

if (titleflg == 'y'):

cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

# 画像表示

window_name = title + ' (hit key to exit)'

cv2.imshow(window_name, frame)

cv2.moveWindow(window_name, 10, 40)

# 何らかのキーが押されたら終了

breakflg = False

while(True):

key = cv2.waitKey(1)

prop_val = cv2.getWindowProperty(window_name, cv2.WND_PROP_ASPECT_RATIO)

if ((key != -1) or (prop_val < 0.0)):

breakflg = True

break

if (isstream):

break

if ((breakflg == False) and isstream):

# 次のフレームを読み出す

ret, frame = cap.read()

if ret == False:

break

loopflg = cap.isOpened()

else:

loopflg = False

# 終了処理

if (isstream):

cap.release()

cv2.destroyAllWindows()

print('\n Finished.')

# main関数エントリーポイント(実行開始)

if __name__ == "__main__":

sys.exit(main())
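The box handling in the listing above can be isolated into a small helper. The following is a minimal sketch, assuming each `detection_out` row has the layout `[image_id, label, conf, xmin, ymin, xmax, ymax]` with coordinates normalized to 0..1, which is how the listing indexes it. The names `to_pixel_box` and `is_usable` are hypothetical and do not appear in the original script.

```python
def to_pixel_box(detection, frame_w, frame_h):
    """Scale one normalized detection row to pixel coordinates and clamp it.

    Assumed row layout: [image_id, label, conf, xmin, ymin, xmax, ymax].
    """
    xmin = int(detection[3] * frame_w)
    ymin = int(detection[4] * frame_h)
    xmax = int(detection[5] * frame_w)
    ymax = int(detection[6] * frame_h)
    # Clamp every corner to the frame. A negative start index would
    # otherwise be interpreted as "count from the end" when slicing.
    xmin = max(0, min(xmin, frame_w))
    ymin = max(0, min(ymin, frame_h))
    xmax = max(0, min(xmax, frame_w))
    ymax = max(0, min(ymax, frame_h))
    return xmin, ymin, xmax, ymax


def is_usable(box):
    """True when the clamped box still has a non-zero area to crop."""
    xmin, ymin, xmax, ymax = box
    return xmax > xmin and ymax > ymin


# A face that sticks out of the left and bottom edges of a 640x480 frame.
row = [0, 1, 0.93, -0.05, 0.25, 0.5, 1.2]
box = to_pixel_box(row, frame_w=640, frame_h=480)
print(box, is_usable(box))
```

Checking `is_usable` before cropping avoids handing an empty array to `cv2.resize`, which can happen when a detection lies entirely outside the frame.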

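| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| -i, --image | cam | Camera (cam), or a video / still image file |
| -m_dt, --m_detector | ~/model/intel/FP32/face-detection-adas-0001.xml | Face detection model in IR format |
| -m_re, --m_recognition | ~/model/intel/FP32/age-gender-recognition-retail-0013.xml | Age/gender analysis model in IR format |
| -d, --device | CPU | Target device (CPU/MYRIAD) |
| -l, --language | jp | Language (en/jp) |
| -t, --title | y | Show title (y/n) |
| -s, --speed | y | Show speed measurement (y/n) |
| -o, --out | non | File path for saving the processed result |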
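The `-i` value has to be sorted into camera, still image, or video before any frame is read. The script does this with the standard-library `imghdr` module, which was deprecated in Python 3.11 and removed in 3.13. The following is a rough sketch of the same decision using file signatures instead; the function name `classify_input` and the short signature list are assumptions for illustration, not part of the original program.

```python
import os

# Leading magic bytes of a few common still-image formats (not exhaustive).
_IMAGE_SIGNATURES = (
    b'\xff\xd8\xff',         # JPEG
    b'\x89PNG\r\n\x1a\n',    # PNG
    b'GIF87a', b'GIF89a',    # GIF
    b'BM',                   # BMP
)


def classify_input(source):
    """Return 'camera', 'image', 'video' or 'notfound' for a -i value."""
    if source.lower() in ('cam', 'camera'):
        return 'camera'
    if not os.path.isfile(source):
        return 'notfound'
    with open(source, 'rb') as f:
        header = f.read(16)
    if header.startswith(_IMAGE_SIGNATURES):
        return 'image'
    # Anything that is not a known still image is handed to the
    # video reader, mirroring what the script does.
    return 'video'


print(classify_input('cam'))
```

Like the original, this treats every existing file that is not a recognised image as a video, so a corrupt or unsupported file is only rejected later when the first frame cannot be read.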

$ python3 age_gender2.py -h
--- Age/Gender Recognition 2 ---
4.5.2-openvino
OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3
usage: age_gender2.py [-h] [-i IMAGE_FILE] [-m_dt M_DETECTOR]
                      [-m_re M_RECOGNITION] [-d DEVICE] [-l LANGUAGE]
                      [-t TITLE] [-s SPEED] [-o IMAGE_OUT]

optional arguments:
  -h, --help            show this help message and exit
  -i IMAGE_FILE, --image IMAGE_FILE
                        Absolute path to image file or cam for camera stream.
  -m_dt M_DETECTOR, --m_detector M_DETECTOR
                        Detector Path to an .xml file with a trained
                        model.Default value is
                        /home/mizutu/model/intel/FP32/face-detection-
                        adas-0001.xml
  -m_re M_RECOGNITION, --m_recognition M_RECOGNITION
                        Recognition Path to an .xml file with a trained
                        model.Default value is
                        /home/mizutu/model/intel/FP32/age-gender-recognition-
                        retail-0013.xml
  -d DEVICE, --device DEVICE
                        Optional. Specify a target device to infer on. CPU,
                        GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will
                        look for a suitable plugin for the device specified.
                        Default value is CPU
  -l LANGUAGE, --language LANGUAGE
                        Language.(jp/en) Default value is 'jp'
  -t TITLE, --title TITLE
                        Program title flag.(y/n) Default value is 'y'
  -s SPEED, --speed SPEED
                        Speed display flag.(y/n) Default calue is 'y'
  -o IMAGE_OUT, --out IMAGE_OUT
                        Processed image file path. Default value is 'non'

$ python3 age_gender2.py -i ~/Images/emo2.jpg
--- Age/Gender Recognition 2 ---
4.5.2-openvino
OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3
Age/Gender Recognition 2: Starting application...
 - Image File : /home/mizutu/Images/emo2.jpg
 - m_detect : /home/mizutu/model/intel/FP32/face-detection-adas-0001.xml
 - m_recognition: /home/mizutu/model/intel/FP32/age-gender-recognition-retail-0013.xml
 - Device : CPU
 - Language : jp
 - Input Shape1 : data
 - Output Shape1: detection_out
 - Input Shape2 : data
 - Output Shape2: age_conv3
 - Program Title: y
 - Speed flag : y
 - Processed out: non
FPS average: 3.30
 Finished.

$ python3 age_gender2.py -i ~/Images/photo3.jpg
--- Age/Gender Recognition 2 ---
4.5.2-openvino
OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3
Age/Gender Recognition 2: Starting application...
 - Image File : /home/mizutu/Images/photo3.jpg
 - m_detect : /home/mizutu/model/intel/FP32/face-detection-adas-0001.xml
 - m_recognition: /home/mizutu/model/intel/FP32/age-gender-recognition-retail-0013.xml
 - Device : CPU
 - Language : jp
 - Input Shape1 : data
 - Output Shape1: detection_out
 - Input Shape2 : data
 - Output Shape2: age_conv3
 - Program Title: y
 - Speed flag : y
 - Processed out: non
FPS average: 14.80
 Finished.
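The `FPS average` line comes from `mylib.fpsWithTick`, whose source is not shown on this page. The following is only a guess at how such a counter could work: `get()` returns the rate since the previous call and `get_average()` returns frames divided by total elapsed time. The class name `FpsCounter` and its injectable `clock` parameter are hypothetical.

```python
import time


class FpsCounter:
    """Hypothetical stand-in for mylib.fpsWithTick (real source not shown)."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock      # injectable so the counter can be tested
        self._last = None        # timestamp of the previous get() call
        self._total_time = 0.0   # seconds accumulated between calls
        self._frames = 0         # number of measured intervals

    def get(self):
        """Return the FPS since the previous call (0.0 on the first call)."""
        now = self._clock()
        if self._last is None:
            self._last = now     # first call only starts the measurement
            return 0.0
        elapsed = now - self._last
        self._last = now
        if elapsed <= 0:
            return 0.0
        self._total_time += elapsed
        self._frames += 1
        return 1.0 / elapsed

    def get_average(self):
        """Return the average FPS over all measured intervals."""
        if self._total_time == 0:
            return 0.0
        return self._frames / self._total_time


# Demonstration with a fake clock: frames arrive at 0.5 s, 1.0 s and 1.25 s.
ticks = iter([0.0, 0.5, 1.0, 1.25])
counter = FpsCounter(clock=lambda: next(ticks))
counter.get()                                  # start measuring
rates = [counter.get() for _ in range(3)]
print(rates, counter.get_average())
```

Passing the clock in as a parameter keeps the arithmetic testable without sleeping; in the application the default `time.perf_counter` would be used.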

$ cd ~/workspace/apps

- CPU

$ python3 age_gender2.py -i ~/Images/emo2.jpg
$ python3 age_gender2.py -i ~/Images/main001.jpg
$ python3 age_gender2.py -i ~/Images/photo3.jpg
$ python3 age_gender2.py -i ~/Videos/video-test.mp4
$ python3 age_gender2.py -i cam

- NCS2 (MYRIAD)

$ python3 age_gender2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/age-gender-recognition-retail-0013.xml -i ~/Images/emo2.jpg -d MYRIAD
$ python3 age_gender2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/age-gender-recognition-retail-0013.xml -i ~/Images/main001.jpg -d MYRIAD
$ python3 age_gender2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/age-gender-recognition-retail-0013.xml -i ~/Images/photo3.jpg -d MYRIAD
$ python3 age_gender2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/age-gender-recognition-retail-0013.xml -i ~/Videos/video-test.mp4 -d MYRIAD
$ python3 age_gender2.py -m_dt ~/model/intel/FP16/face-detection-adas-0001.xml -m_re ~/model/intel/FP16/age-gender-recognition-retail-0013.xml -i cam -d MYRIAD

# -*- coding: utf-8 -*-
##------------------------------------------
## OpenVINO™ toolkit
## Age/Gender Recognition
##
## model: face-detection-adas-0001
##        age-gender-recognition-retail-0013
##
## 2021.02.24 Masahiro Izutsu
##------------------------------------------
## 2021.03.25 model/device parameter
## 2021.06.23 fps display

# Color Escape Code
GREEN = '\033[1;32m'
RED = '\033[1;31m'
NOCOLOR = '\033[0m'
YELLOW = '\033[1;33m'

# Constants
WINDOW_WIDTH = 640
BOX_COLOR_M = (0, 255, 0)
BOX_COLOR_F = (0, 0, 255)
LABEL_BG_COLOR_M = (70, 120, 70)    # greyish green background for text
LABEL_BG_COLOR_F = (70, 70, 120)    # greyish red background for text
TEXT_COLOR = (255, 255, 255)        # white text

from os.path import expanduser
MODEL_DEF_FACE = expanduser('~/model/intel/FP32/face-detection-adas-0001.xml')
MODEL_DEF_AGE = expanduser('~/model/intel/FP32/age-gender-recognition-retail-0013.xml')

# OpenVINO modules
from openvino.inference_engine import IECore
from openvino.inference_engine import get_version

# Other imports (myfunction and mylib are the author's own helper modules)
import sys
import cv2
import numpy as np
import argparse
import myfunction
import mylib

# Title and version information
title = 'Age/Gender Recognition 2'
print(GREEN)
print('--- {} ---'.format(title))
print(cv2.__version__)
print("OpenVINO inference_engine:", get_version())
print(NOCOLOR)

# Parses arguments for the application
def parse_args():
    parser = argparse.ArgumentParser()
    parser.add_argument('-i', '--image', metavar = 'IMAGE_FILE', type=str, default = 'cam',
                        help = 'Absolute path to image file or cam for camera stream.')
    parser.add_argument('-m_dt', '--m_detector', type=str,
                        default = MODEL_DEF_FACE,
                        help = 'Detector Path to an .xml file with a trained model. '
                               'Default value is '+MODEL_DEF_FACE)
    parser.add_argument('-m_re', '--m_recognition', type=str,
                        default = MODEL_DEF_AGE,
                        help = 'Recognition Path to an .xml file with a trained model. '
                               'Default value is '+MODEL_DEF_AGE)
    parser.add_argument('-d', '--device', default = 'CPU', type=str,
                        help = 'Optional. Specify a target device to infer on. CPU, GPU, FPGA, HDDL or MYRIAD is '
                               'acceptable. The demo will look for a suitable plugin for the device specified. '
                               'Default value is CPU')
    parser.add_argument('-l', '--language', metavar = 'LANGUAGE',
                        default = 'jp',
                        help = 'Language.(jp/en) Default value is \'jp\'')
    parser.add_argument('-t', '--title', metavar = 'TITLE',
                        default = 'y',
                        help = 'Program title flag.(y/n) Default value is \'y\'')
    parser.add_argument('-s', '--speed', metavar = 'SPEED',
                        default = 'y',
                        help = 'Speed display flag.(y/n) Default value is \'y\'')
    parser.add_argument('-o', '--out', metavar = 'IMAGE_OUT',
                        default = 'non',
                        help = 'Processed image file path. Default value is \'non\'')
    return parser

# Display basic information about the models and settings
def display_info(image, detector, recognition, device, lang, input_blob, out_blob, input_blob_age, out_blob_age, titleflg, speedflg, outpath):
    print(YELLOW + title + ': Starting application...' + NOCOLOR)
    print(' - ' + YELLOW + 'Image File : ' + NOCOLOR, image)
    print(' - ' + YELLOW + 'm_detect : ' + NOCOLOR, detector)
    print(' - ' + YELLOW + 'm_recognition: ' + NOCOLOR, recognition)
    print(' - ' + YELLOW + 'Device : ' + NOCOLOR, device)
    print(' - ' + YELLOW + 'Language : ' + NOCOLOR, lang)
    print(' - ' + YELLOW + 'Input Shape1 : ' + NOCOLOR, input_blob)
    print(' - ' + YELLOW + 'Output Shape1: ' + NOCOLOR, out_blob)
    print(' - ' + YELLOW + 'Input Shape2 : ' + NOCOLOR, input_blob_age)
    print(' - ' + YELLOW + 'Output Shape2: ' + NOCOLOR, out_blob_age)
    print(' - ' + YELLOW + 'Program Title: ' + NOCOLOR, titleflg)
    print(' - ' + YELLOW + 'Speed flag : ' + NOCOLOR, speedflg)
    print(' - ' + YELLOW + 'Processed out: ' + NOCOLOR, outpath)

# Determine the type of the input file
# Returns: 'jpeg', 'png', ...  an image file
#          'None'              not an image file (treated as a video file)
#          'NotFound'          the file does not exist
import imghdr

def is_pict(filename):
    try:
        imgtype = imghdr.what(filename)
    except FileNotFoundError as e:
        imgtype = 'NotFound'
    return str(imgtype)

# ** main function **
def main():
    # Japanese font
    fontPIL = 'NotoSansCJK-Bold.ttc'

    # Argument parsing and parameter setting
    ARGS = parse_args().parse_args()
    input_stream = ARGS.image
    lang = ARGS.language
    titleflg = ARGS.title
    speedflg = ARGS.speed
    if ARGS.image.lower() == "cam" or ARGS.image.lower() == "camera":
        input_stream = 0
        isstream = True
    else:
        filetype = is_pict(input_stream)
        isstream = filetype == 'None'
        if (filetype == 'NotFound'):
            print(RED + "\ninput file Not found." + NOCOLOR)
            quit()
    model_detector=ARGS.m_detector
    model_recognition=ARGS.m_recognition
    device = ARGS.device
    outpath = ARGS.out

    # Gender labels
    if (lang == 'jp'):
        label = ('女性: ', '男性: ')
    else:
        label = ('Female: ', 'Male: ')

    # Load the model (face detection): face-detection-adas-0001
    ie = IECore()
    net = ie.read_network(model = model_detector, weights = model_detector[:-4] + '.bin')
    exec_net = ie.load_network(network = net, device_name = device)

    # Input/output settings (face detection)
    input_blob = net.input_info['data'].name
    out_blob = next(iter(net.outputs))
    n, c, h, w = net.input_info[input_blob].input_data.shape

    # Load the model (age/gender): age-gender-recognition-retail-0013
    net_age = ie.read_network(model = model_recognition, weights = model_recognition[:-4] + '.bin')
    exec_net_age = ie.load_network(network = net_age, device_name=device)

    # Input/output settings (age/gender).
    # These must be read from net_age; the original read them from the detector network.
    input_blob_age = net_age.input_info['data'].name
    out_blob_age = next(iter(net_age.outputs))
    n_age, c_age, h_age, w_age = net_age.input_info[input_blob_age].input_data.shape

    # Display the settings
    display_info(input_stream, model_detector, model_recognition, device, lang, input_blob, out_blob, input_blob_age, out_blob_age, titleflg, speedflg, outpath)

    # Prepare the input
    if (isstream):
        # Camera or video file
        cap = cv2.VideoCapture(input_stream)
        ret, frame = cap.read()
        loopflg = cap.isOpened()
    else:
        # Read the image file
        frame = cv2.imread(input_stream)
        if frame is None:
            print(RED + "\nUnable to read the input." + NOCOLOR)
            quit()

        # Resize while keeping the aspect ratio
        img_h, img_w = frame.shape[:2]
        if (img_w > WINDOW_WIDTH):
            height = round(img_h * (WINDOW_WIDTH / img_w))
            frame = cv2.resize(frame, dsize = (WINDOW_WIDTH, height))
        loopflg = True   # run the loop once

    # Recording the processed result, step 1: open the video writer
    if (outpath != 'non'):
        if (isstream):
            fps = int(cap.get(cv2.CAP_PROP_FPS))
            out_w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
            out_h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
            fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')
            outvideo = cv2.VideoWriter(outpath, fourcc, fps, (out_w, out_h))

    # Initialise the measurement values
    fpsWithTick = mylib.fpsWithTick()
    frame_count = 0
    fps_total = 0
    fpsWithTick.get()   # start the FPS measurement

    # Main loop
    while (loopflg):
        if frame is None:
            print(RED + "\nUnable to read the input." + NOCOLOR)
            quit()

        # Convert to the input data format
        img = cv2.resize(frame, (w, h))      # resize
        img = img.transpose((2, 0, 1))       # HWC > CHW
        img = np.expand_dims(img, axis=0)    # add the batch dimension

        # Run inference
        out = exec_net.infer(inputs={'data': img})

        # Keep only the data we need from the output
        out = out['detection_out']
        out = np.squeeze(out)   # drop all size-1 dimensions

        # Process each detected face region in turn
        for detection in out:
            # Confidence value
            confidence = float(detection[2])

            # Scale the bounding box coordinates to the input image
            xmin = int(detection[3] * frame.shape[1])
            ymin = int(detection[4] * frame.shape[0])
            xmax = int(detection[5] * frame.shape[1])
            ymax = int(detection[6] * frame.shape[0])

            # Draw the bounding box only when the confidence exceeds 0.5
            if confidence > 0.5:
                # Clamp the face region to the camera frame.
                # The min values in particular cause an error if left uncorrected.
                if xmin < 0:
                    xmin = 0
                if ymin < 0:
                    ymin = 0
                if xmax > frame.shape[1]:
                    xmax = frame.shape[1]
                if ymax > frame.shape[0]:
                    ymax = frame.shape[0]

                # Crop the face region; skip it if nothing is left after clamping
                frame_face = frame[ ymin:ymax, xmin:xmax ]
                if frame_face.size == 0:
                    continue

                # Convert to the input data format
                img = cv2.resize(frame_face, (62, 62))   # resize
                img = img.transpose((2, 0, 1))           # HWC > CHW
                img = np.expand_dims(img, axis=0)        # add the batch dimension

                # Run inference (a separate name avoids shadowing the detector output)
                out_age = exec_net_age.infer(inputs={'data': img})

                # Keep only the data we need from the output
                age = out_age['age_conv3']
                prob = out_age['prob']
                age = age[0][0][0][0] * 100
                gender = label[np.argmax(prob[0])]
                if gender == label[0]:
                    box_color = BOX_COLOR_F
                    label_bgcolor = LABEL_BG_COLOR_F
                else:
                    box_color = BOX_COLOR_M
                    label_bgcolor = LABEL_BG_COLOR_M
                out_str = gender+':'+'{:>5.1f}'.format(age)
                label_text_color = TEXT_COLOR

                # Draw the bounding box (face region)
                cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymin), label_bgcolor, -1)
                # cv2.putText(frame, out_str, (xmin, ymin-4), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=cor, lineType=cv2.LINE_AA)
                myfunction.cv2_putText(img = frame,
                                       text = out_str,
                                       org = (xmin+2, ymin-4),
                                       fontFace = fontPIL,
                                       fontScale = 12,
                                       color = label_text_color,
                                       mode = 0)
                cv2.rectangle(frame, (xmin, ymin-20), (xmax, ymax), box_color, thickness = 1)

        # Compute the FPS
        fps = fpsWithTick.get()
        st_fps = 'fps: {:>6.2f}'.format(fps)
        if (speedflg == 'y'):
            cv2.rectangle(frame, (10, 38), (95, 55), (90, 90, 90), -1)
            cv2.putText(frame, st_fps, (15, 50), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.4, color=(255, 255, 255), lineType=cv2.LINE_AA)

        # Draw the title
        if (titleflg == 'y'):
            cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)

        # Show the image
        window_name = title + " (hit 'q' or 'esc' key to exit)"
        cv2.namedWindow(window_name, cv2.WINDOW_AUTOSIZE)
        cv2.imshow(window_name, frame)

        # Recording the processed result, step 2: write the frame
        if (outpath != 'non'):
            if (isstream):
                outvideo.write(frame)
            else:
                cv2.imwrite(outpath, frame)

        # Exit when 'q' or Esc is pressed, or when the window is closed.
        # A still image waits here; a stream polls once and moves on.
        breakflg = False
        while(True):
            key = cv2.waitKey(1)
            prop_val = cv2.getWindowProperty(window_name, cv2.WND_PROP_ASPECT_RATIO)
            if key == 27 or key == 113 or (prop_val < 0.0):   # 'esc' or 'q'
                breakflg = True
                break
            if (isstream):
                break

        if ((breakflg == False) and isstream):
            # Read the next frame
            ret, frame = cap.read()
            if ret == False:
                break
            loopflg = cap.isOpened()
        else:
            loopflg = False

    # Cleanup
    if (isstream):
        cap.release()

    # Recording the processed result, step 3: close the video writer
    if (outpath != 'non'):
        if (isstream):
            outvideo.release()

    cv2.destroyAllWindows()
    print('\nFPS average: {:>10.2f}'.format(fpsWithTick.get_average()))
    print('\n Finished.')

# Entry point of the main function (execution starts here)
if __name__ == "__main__":
    sys.exit(main())
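The way the script reads the two outputs of `age-gender-recognition-retail-0013` can be checked in isolation with synthetic arrays. This is a minimal sketch, assuming `age_conv3` has shape `(1, 1, 1, 1)` and holds age divided by 100, and `prob` has shape `(1, 2, 1, 1)` ordered female then male, which matches how the listing indexes them. The function name `decode_age_gender` and the sample values are made up for illustration.

```python
import numpy as np


def decode_age_gender(outputs, labels=('Female', 'Male')):
    """Turn the raw output dict into (gender_label, age_in_years).

    Assumes outputs['age_conv3'] holds age / 100 and outputs['prob']
    holds two class probabilities in the order (female, male).
    """
    age = float(np.asarray(outputs['age_conv3']).reshape(-1)[0]) * 100.0
    prob = np.asarray(outputs['prob']).reshape(-1)
    gender = labels[int(np.argmax(prob))]
    return gender, age


# Synthetic stand-in for the dict returned by exec_net_age.infer(...)
fake_outputs = {
    'age_conv3': np.full((1, 1, 1, 1), 0.27, dtype=np.float32),
    'prob': np.array([0.1, 0.9], dtype=np.float32).reshape(1, 2, 1, 1),
}
gender, age = decode_age_gender(fake_outputs)
print('{}: {:.1f}'.format(gender, age))
```

Flattening with `reshape(-1)` keeps the decoding independent of the trailing size-1 dimensions, so the same helper works whether or not the caller has already squeezed the arrays.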

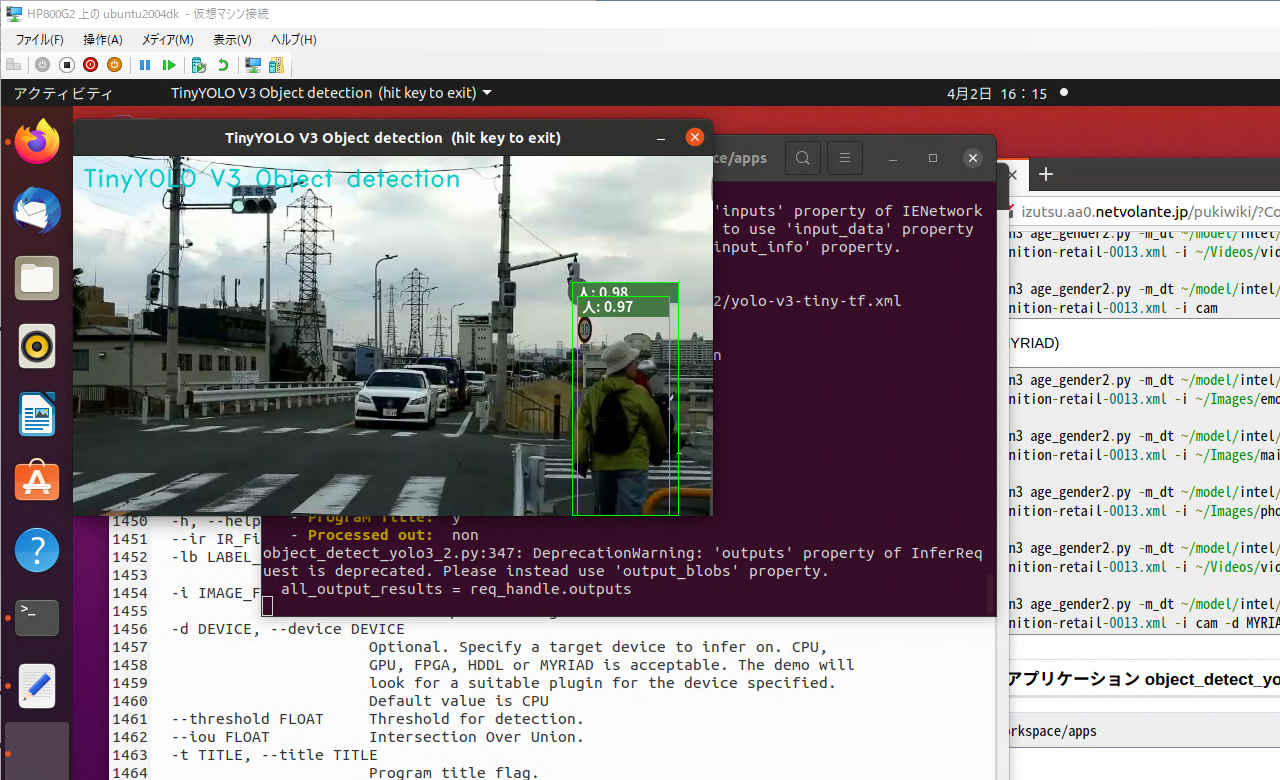

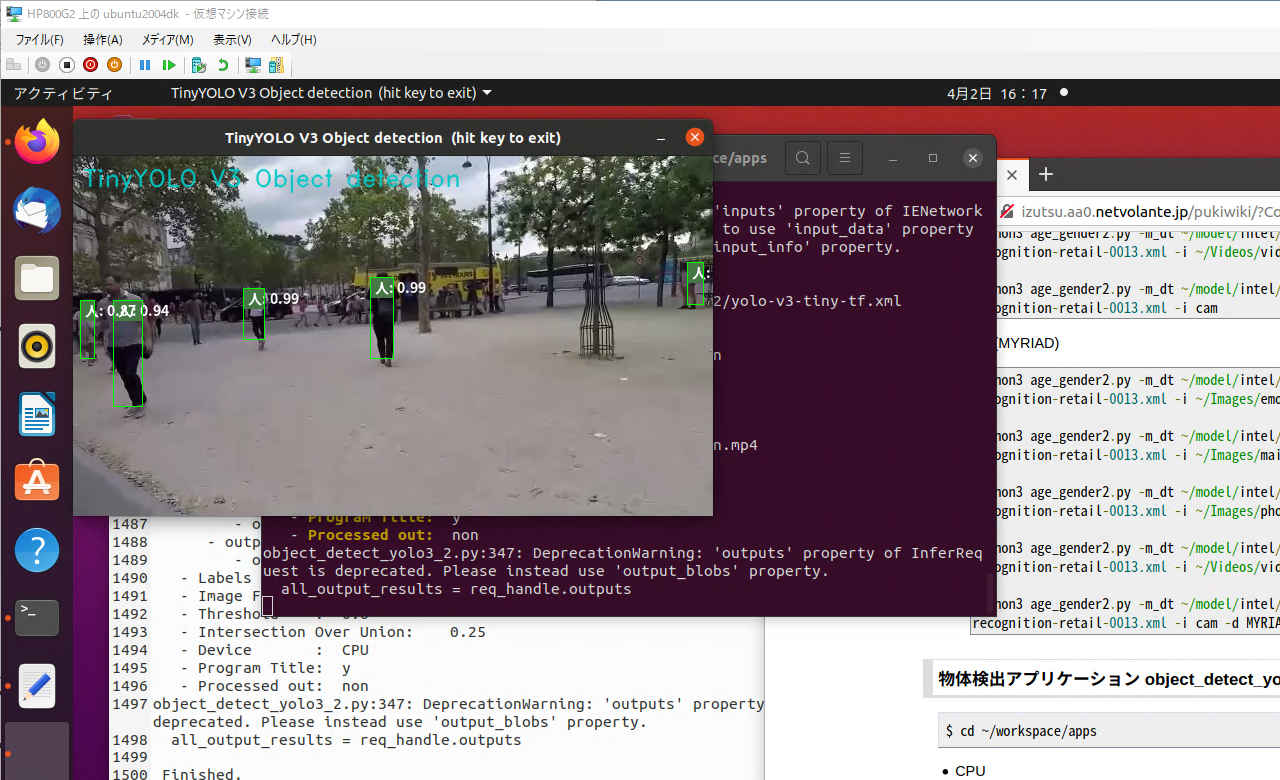

Detects 80 kinds of objects in an image using deep learning.

| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| --ir | ../public/yolo-v3-tiny-tf/FP16/yolo-v3-tiny-tf.xml | Trained model file in IR format |
| -l, --labels | coco.names_jp | Object label file |
| -i, --input | cam | Camera (cam) or input image file |
| --threshold | 0.60 (DETECTION_THRESHOLD) | Detection threshold for objects |
| --iou | 0.25 (IOU_THRESHOLD) | Overlap level (intersection over union) at which boxes are treated as the same object |
| -t, --title | y | Show title (y/n) |
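The `--threshold` and `--iou` options feed a duplicate-removal step: boxes that overlap by at least the IoU value are collapsed into the highest-scoring one. The following is a simplified sketch of that idea, assuming boxes are stored as `(xmin, ymin, xmax, ymax, score, class_id)` tuples. It uses a plain greedy non-maximum suppression rather than the mask-based routine in the script, and the names `iou` and `suppress_duplicates` are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two corner-format boxes (0.0 to 1.0)."""
    ax1, ay1, ax2, ay2 = box_a[:4]
    bx1, by1, bx2, by2 = box_b[:4]
    inter_w = min(ax2, bx2) - max(ax1, bx1)
    inter_h = min(ay2, by2) - max(ay1, by1)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0                      # the boxes do not overlap
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def suppress_duplicates(boxes, iou_threshold=0.25):
    """Greedy NMS: keep a box only if it does not overlap a better one.

    Like the script, this ignores class_id when comparing boxes.
    """
    kept = []
    for box in sorted(boxes, key=lambda b: b[4], reverse=True):
        if all(iou(box, other) < iou_threshold for other in kept):
            kept.append(box)
    return kept


detections = [
    (0, 0, 100, 100, 0.90, 0),
    (10, 10, 110, 110, 0.80, 0),     # overlaps the first box heavily
    (200, 200, 300, 300, 0.70, 1),   # far away from both
]
for box in suppress_duplicates(detections):
    print(box)
```

A lower `--iou` value removes more boxes, because even a small overlap is then enough to count two detections as the same object.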

pi@raspberrypi:~/workspace/apps $ python3 object_detect_yolo3.py -h
--- TinyYOLO V3 Object detection ---
4.5.1-openvino
OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2
usage: object_detect_yolo3.py [-h] [--ir IR_File] [-l LABEL_FILE]
                              [-i IMAGE_FILE or cam] [--threshold FLOAT]
                              [--iou FLOAT] [-t TITLE]

Image classifier using Intel® Neural Compute Stick 2.

optional arguments:
  -h, --help            show this help message and exit
  --ir IR_File          Absolute path to the neural network IR xml file.
  -l LABEL_FILE, --labels LABEL_FILE
                        Absolute path to labels file.
  -i IMAGE_FILE or cam, --input IMAGE_FILE or cam
                        Absolute path to image file or cam for camera stream.
  --threshold FLOAT     Threshold for detection.
  --iou FLOAT           Intersection Over Union.
  -t TITLE, --title TITLE
                        Language.

$ cd ~/workspace/apps

pi@raspberrypi:~/workspace/apps $ python3 object_detect_yolo3.py

--- TinyYOLO V3 Object detection ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Running OpenVINO NCS Tensorflow TinyYolo v3 example...

Displaying image with objects detected in GUI...

Click in the GUI window and hit any key to exit.

object_detect_yolo3.py:272: DeprecationWarning: 'inputs' property of IENetwork class is deprecated. To access DataPtrs user need to use 'input_data' property of InputInfoPtr objects which can be accessed by 'input_info' property.

input_blob = next(iter(net.inputs))

Tiny Yolo v3: Starting application...

- Plugin: Myriad

- IR File: ../public/yolo-v3-tiny-tf/FP16/yolo-v3-tiny-tf.xml

- Input Shape: [1, 3, 416, 416]

- Output Shapes:

- output #0 name: conv2d_12/Conv2D/YoloRegion

- output shape: [1, 255, 26, 26]

- output #1 name: conv2d_9/Conv2D/YoloRegion

- output shape: [1, 255, 13, 13]

- Labels File: coco.names_jp

- Image File: 0

- Threshold: 0.6

- Intersection Over Union: 0.25

- Program Title: y

object_detect_yolo3.py:335: DeprecationWarning: 'outputs' property of InferRequest is deprecated. Please instead use 'output_blobs' property.

all_output_results = req_handle.outputs

Finished.

$ cd ~/workspace/apps

pi@raspberrypi:~/workspace/apps $ python3 object_detect_yolo3.py --threshold 0.001

--- TinyYOLO V3 Object detection ---

4.5.1-openvino

OpenVINO inference_engine: 2.1.2021.2.0-1877-176bdf51370-releases/2021/2

Running OpenVINO NCS Tensorflow TinyYolo v3 example...

Displaying image with objects detected in GUI...

Click in the GUI window and hit any key to exit.

object_detect_yolo3.py:272: DeprecationWarning: 'inputs' property of IENetwork class is deprecated. To access DataPtrs user need to use 'input_data' property of InputInfoPtr objects which can be accessed by 'input_info' property.

input_blob = next(iter(net.inputs))

Tiny Yolo v3: Starting application...

- Plugin: Myriad

- IR File: ../public/yolo-v3-tiny-tf/FP16/yolo-v3-tiny-tf.xml

- Input Shape: [1, 3, 416, 416]

- Output Shapes:

- output #0 name: conv2d_12/Conv2D/YoloRegion

- output shape: [1, 255, 26, 26]

- output #1 name: conv2d_9/Conv2D/YoloRegion

- output shape: [1, 255, 13, 13]

- Labels File: coco.names_jp

- Image File: 0

- Threshold: 0.001

- Intersection Over Union: 0.25

- Program Title: y

object_detect_yolo3.py:335: DeprecationWarning: 'outputs' property of InferRequest is deprecated. Please instead use 'output_blobs' property.

all_output_results = req_handle.outputs

Finished.

pi@raspberrypi:~/workspace/apps $ python3 object_detect_yolo3.py -i ../image/desk-image.jpg

pi@raspberrypi:~/workspace/apps $ python3 object_detect_yolo3.py -i ../../Videos/car.mp4
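The console output above shows two output tensors of shape `[1, 255, 26, 26]` and `[1, 255, 13, 13]`. The 255 channels are 3 anchor boxes times 85 values (4 coordinates, 1 objectness score, 80 class scores). The following is a small sketch of the index arithmetic, assuming the usual YOLOv3 region layout in which each anchor's 85 values are contiguous along the channel axis. The helper names `channel_index` and `cell_of` are hypothetical.

```python
NUM_CLASSES = 80                       # COCO classes, as in coco.names_jp
VALUES_PER_ANCHOR = NUM_CLASSES + 5    # x, y, w, h, objectness + class scores
ANCHORS_PER_CELL = 3


def channel_index(anchor, field, num_classes=NUM_CLASSES):
    """Channel holding `field` for `anchor` in one grid cell.

    field 0..3 are x, y, w, h; field 4 is objectness;
    field 5 + c is the score of class c.
    """
    return anchor * (num_classes + 5) + field


def cell_of(flat_index, grid_w):
    """Map a flat cell counter to (row, col) on a grid that is grid_w wide."""
    return divmod(flat_index, grid_w)


print(ANCHORS_PER_CELL * VALUES_PER_ANCHOR)   # channels per grid cell
print(channel_index(0, 4))                    # objectness of anchor 0
print(channel_index(1, 4))                    # objectness of anchor 1
print(channel_index(2, 5 + 79))               # last class score of anchor 2
print(cell_of(27, 26))                        # (row, col) of cell 27 on the 26x26 grid
```

Multiplying the anchor number by the full 85-value stride, not by the class count alone, is what keeps the three anchors from reading each other's values.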

# -*- coding: utf-8 -*-
##------------------------------------------
## OpenVINO™ toolkit
## Object detection
##
## model: yolo-v3-tiny-tf
##
## 2021.02.24 Masahiro Izutsu
##------------------------------------------
## object_detect_yolo3.py

# Color Escape Code
GREEN = '\033[1;32m'
RED = '\033[1;31m'
NOCOLOR = '\033[0m'
YELLOW = '\033[1;33m'

# Constants
DEVICE = "MYRIAD"
WINDOW_WIDTH = 640

# OpenVINO modules
from openvino.inference_engine import IECore
from openvino.inference_engine import get_version

# Other imports (myfunction is the author's own helper module)
import sys
import numpy as np
import cv2
import argparse
import myfunction

# Title and version information
title = 'TinyYOLO V3 Object detection'
print(GREEN)
print('--- {} ---'.format(title))
print(cv2.__version__)
print("OpenVINO inference_engine:", get_version())
print(NOCOLOR)

# Adjust these thresholds
DETECTION_THRESHOLD = 0.60
IOU_THRESHOLD = 0.25

# Tiny yolo anchor box values
anchors = [10,14, 23,27, 37,58, 81,82, 135,169, 344,319]

# Used for display
BOX_COLOR = (0,255,0)
LABEL_BG_COLOR = (70, 120, 70)   # greyish green background for text
TEXT_COLOR = (255, 255, 255)     # white text
TEXT_FONT = cv2.FONT_HERSHEY_SIMPLEX
WINDOW_SIZE_W = 640
WINDOW_SIZE_H = 480

# Parses arguments for the application
def parse_args():
    parser = argparse.ArgumentParser(description = 'Image classifier using '
                                     'Intel® Neural Compute Stick 2.' )
    parser.add_argument( '--ir', metavar = 'IR_File',
                        type=str, default = '../public/yolo-v3-tiny-tf/FP16/yolo-v3-tiny-tf.xml',
                        help = 'Absolute path to the neural network IR xml file.')
    parser.add_argument( '-l', '--labels', metavar = 'LABEL_FILE',
                        type=str, default = 'coco.names_jp',
                        help='Absolute path to labels file.')
    parser.add_argument( '-i', '--input', metavar = 'IMAGE_FILE or cam',
                        type=str, default = 'cam',
                        help = 'Absolute path to image file or cam for camera stream.')
    parser.add_argument( '--threshold', metavar = 'FLOAT',
                        type=float, default = DETECTION_THRESHOLD,
                        help = 'Threshold for detection.')
    parser.add_argument( '--iou', metavar = 'FLOAT',
                        type=float, default = IOU_THRESHOLD,
                        help = 'Intersection Over Union.')
    # The original help text here was 'Language.', copied from another option.
    parser.add_argument( '-t', '--title', metavar = 'TITLE', default = 'y',
                        help = 'Program title flag.(y/n) Default value is \'y\'')
    return parser

# Creates a mask to remove duplicate objects (boxes) and their related probabilities and classifications
# that should be considered the same object. This is determined by how similar the boxes are
# based on the intersection-over-union metric.
# box_list is a list of tuples (xmin, ymin, xmax, ymax, score, class_id),
# as appended by parseTinyYoloV3Output.
def get_duplicate_box_mask(box_list, iou_threshold):
    # The intersection-over-union threshold to use when determining duplicates.
    # Objects/boxes found that are over this threshold will be
    # considered the same object.
    max_iou = iou_threshold
    box_mask = np.ones(len(box_list))
    for i in range(len(box_list)):
        if box_mask[i] == 0: continue
        for j in range(i + 1, len(box_list)):
            if get_intersection_over_union(box_list[i], box_list[j]) >= max_iou:
                # Keep the higher-scoring box at position i
                if box_list[i][4] < box_list[j][4]:
                    box_list[i], box_list[j] = box_list[j], box_list[i]
                box_mask[j] = 0.0
    filter_iou_mask = np.array(box_mask > 0.0, dtype='bool')
    return filter_iou_mask

# Evaluate the intersection-over-union for two boxes.
# The intersection-over-union metric determines how close
# two boxes are to being the same box. The closer the boxes
# are to being the same, the closer the metric will be to 1.0.
# box_1 and box_2 start with 4 numbers (xmin, ymin, xmax, ymax), the top-left
# and bottom-right corners. The original code read them as center x, center y,
# width and height, which does not match what parseTinyYoloV3Output stores.
# Returns the intersection-over-union (between 0.0 and 1.0)
# for the two boxes specified.
def get_intersection_over_union(box_1, box_2):
    # width of the intersecting box
    intersection_dim_1 = min(box_1[2], box_2[2]) - max(box_1[0], box_2[0])
    # height of the intersecting box
    intersection_dim_2 = min(box_1[3], box_2[3]) - max(box_1[1], box_2[1])
    if intersection_dim_1 < 0 or intersection_dim_2 < 0:
        # no intersection area
        intersection_area = 0
    else:
        # intersection area is product of intersection dimensions
        intersection_area = intersection_dim_1 * intersection_dim_2
    # calculate the union area which is the area of each box added
    # and then we need to subtract out the intersection area since
    # it is counted twice (by definition it is in each box)
    area_1 = (box_1[2] - box_1[0]) * (box_1[3] - box_1[1])
    area_2 = (box_2[2] - box_2[0]) * (box_2[3] - box_2[1])
    union_area = area_1 + area_2 - intersection_area
    if union_area <= 0:
        # degenerate boxes with no area cannot overlap
        return 0.0
    # now we can return the intersection over union
    iou = intersection_area / union_area
    #print("iou: ", iou)
    return iou

# Display basic information about the model and settings
def display_info(input_shape, net_outputs, image, ir, labels, threshold, iou_threshold, titleflg):
    output_nodes = []
    output_iter = iter(net_outputs)
    for i in range(len(net_outputs)):
        output_nodes.append(next(output_iter))
    print(YELLOW + 'Tiny Yolo v3: Starting application...' + NOCOLOR)
    print(' - ' + YELLOW + 'Plugin: ' + NOCOLOR + 'Myriad')
    print(' - ' + YELLOW + 'IR File: ' + NOCOLOR, ir)
    print(' - ' + YELLOW + 'Input Shape: ' + NOCOLOR, input_shape)
    print(' - ' + YELLOW + 'Output Shapes:' + NOCOLOR)
    for j in range(len(output_nodes)):
        print(' - '+YELLOW+'output #' + str(j) + ' name: ' + NOCOLOR + output_nodes[j])
        print(' - output shape: ' + NOCOLOR + str(net_outputs[output_nodes[j]].shape))
    print(' - ' + YELLOW + 'Labels File: ' + NOCOLOR, labels)
    print(' - ' + YELLOW + 'Image File: ' + NOCOLOR, image)
    print(' - ' + YELLOW + 'Threshold: ' + NOCOLOR, threshold)
    print(' - ' + YELLOW + 'Intersection Over Union: ' + NOCOLOR, iou_threshold)
    print(' - ' + YELLOW + 'Program Title:' + NOCOLOR, titleflg)

# This function parses the output results from tiny yolo v3.
# The results are transposed so the output shape is (1, 13, 13, 255) or (1, 26, 26, 255). Original will be (1, 255, h, w).
# Tiny yolo does detection on two different scales using 13x13 grid and 26x26 grid.
# This is how the output is parsed:
# Imagine the image being split up into 13x13 or 26x26 grid. Each grid cell contains 3 anchor boxes.
# For each of those 3 anchor boxes, there are 85 values.
# 80 class probabilities + 4 coordinate values + 1 box confidence score = 85 values
# So that results in each grid cell having 255 values (85 values x 3 anchor boxes = 255 values)
def parseTinyYoloV3Output(output_node_results, filtered_objects, source_image_width, source_image_height, scaled_w, scaled_h, detection_threshold, num_labels):
    # transpose the output node results
    output_node_results = output_node_results.transpose(0,2,3,1)
    output_h = output_node_results.shape[1]
    output_w = output_node_results.shape[2]

    # 80 class scores + 4 coordinate values + 1 objectness score = 85 values
    # 85 values * 3 prior box scores per grid cell = 255 values
    # 255 values * either 26 or 13 grid cells
    num_of_classes = num_labels
    num_anchor_boxes_per_cell = 3
    values_per_anchor = num_of_classes + 5

    # Set the anchor offset depending on the output result shape
    anchor_offset = 0
    if output_w == 13:
        anchor_offset = 2 * 3
    elif output_w == 26:
        anchor_offset = 2 * 0

    # used to calculate approximate coordinates of bounding box
    x_ratio = float(source_image_width) / scaled_w
    y_ratio = float(source_image_height) / scaled_h

    # Filter out low scoring results
    output_size = output_w * output_h
    for result_counter in range(output_size):
        row = int(result_counter / output_w)
        col = int(result_counter % output_w)   # was "% output_h"; identical only on square grids
        cell = output_node_results[0][row][col]
        for anchor_boxes in range(num_anchor_boxes_per_cell):
            # Each anchor box owns a contiguous run of (num_of_classes + 5) values.
            # The original index "anchor_boxes * num_of_classes + 5 + k" is only
            # correct for anchor 1, so the offset is computed explicitly here.
            base = anchor_boxes * values_per_anchor

            # check the box confidence score of the anchor box. This is how likely the box contains an object
            box_confidence_score = cell[base + 4]
            if box_confidence_score < detection_threshold:
                continue

            # Calculate the x, y, width, and height of the box
            x_center = (col + cell[base + 0]) / output_w * scaled_w
            y_center = (row + cell[base + 1]) / output_h * scaled_h
            width = np.exp(cell[base + 2]) * anchors[anchor_offset + 2 * anchor_boxes]
            height = np.exp(cell[base + 3]) * anchors[anchor_offset + 2 * anchor_boxes + 1]

            # Now we check for anchor box for the highest class probabilities.
            # If the probability exceeds the threshold, we save the box coordinates, class score and class id
            for class_id in range(num_of_classes):
                class_probability = cell[base + 5 + class_id]
                # Calculate the class's confidence score by multiplying the box_confidence score by the class probability
                class_confidence_score = class_probability * box_confidence_score
                if (class_confidence_score) < detection_threshold:
                    continue
                # Calculate the bounding box top left and bottom right vertexes
                xmin = max(int((x_center - width / 2) * x_ratio), 0)
                ymin = max(int((y_center - height / 2) * y_ratio), 0)
                xmax = min(int(xmin + width * x_ratio), source_image_width-1)
                ymax = min(int(ymin + height * y_ratio), source_image_height-1)
                filtered_objects.append((xmin, ymin, xmax, ymax, class_confidence_score, class_id))

# Determine the type of the input file
# Returns: 'jpeg', 'png', ...  an image file
#          'None'              not an image file (treated as a video file)
#          'NotFound'          the file does not exist
import imghdr

def is_pict(filename):
    try:
        imgtype = imghdr.what(filename)
    except FileNotFoundError as e:
        imgtype = 'NotFound'
    return str(imgtype)

# ** main function **
def main():
    # Japanese font
    fontPIL = 'NotoSansCJK-Bold.ttc'

    # Argument parsing and parameter setting
    ARGS = parse_args().parse_args()
    input_stream = ARGS.input
    labels = ARGS.labels
    titleflg = ARGS.title
    if ARGS.input.lower() == "cam" or ARGS.input.lower() == "camera":
        input_stream = 0
        isstream = True
    else:
        filetype = is_pict(input_stream)
        isstream = filetype == 'None'
        if (filetype == 'NotFound'):
            print(RED + "\ninput file Not found." + NOCOLOR)
            quit()
    ir = ARGS.ir
    detection_threshold = ARGS.threshold
    iou_threshold = ARGS.iou

    # Prepare Categories
    with open(labels) as labels_file:
        label_list = labels_file.read().splitlines()

    print(YELLOW + 'Running OpenVINO NCS Tensorflow TinyYolo v3 example...' + NOCOLOR)
    print('\n Displaying image with objects detected in GUI...')
    print(' Click in the GUI window and hit any key to exit.')

    ####################### 1. Create ie core and network #######################
    # Select the myriad plugin and IRs to be used
    ie = IECore()
    net = ie.read_network(model = ir, weights = ir[:-3] + 'bin')

    # Set up the input blobs.
    # input_info replaces the deprecated 'inputs' property that triggers
    # the DeprecationWarning shown in the console output.
    input_blob = next(iter(net.input_info))
    input_shape = net.input_info[input_blob].input_data.shape

    # Display model information
    display_info(input_shape, net.outputs, input_stream, ir, labels, detection_threshold, iou_threshold, titleflg)

    # Load the network and get the network input shape information
    exec_net = ie.load_network(network = net, device_name = DEVICE)
    n, c, network_input_h, network_input_w = input_shape

    # Prepare the input
    if (isstream):
        # Camera or video file
        cap = cv2.VideoCapture(input_stream)
        cap.set(cv2.CAP_PROP_FPS, 30)
        cap.set(cv2.CAP_PROP_FRAME_WIDTH, WINDOW_SIZE_W)
        cap.set(cv2.CAP_PROP_FRAME_HEIGHT, WINDOW_SIZE_H)
        ret, frame = cap.read()
        loopflg = cap.isOpened()
    else:
        # Read the image file
        frame = cv2.imread(input_stream)
        if frame is None:
            print(RED + "\nUnable to read the input." + NOCOLOR)
            quit()

        # Resize while keeping the aspect ratio
        img_h, img_w = frame.shape[:2]
        if (img_w > WINDOW_WIDTH):
            height = round(img_h * (WINDOW_WIDTH / img_w))
            frame = cv2.resize(frame, dsize = (WINDOW_WIDTH, height))
        loopflg = True   # run the loop once

    # Width and height calculations. These will be used to scale the bounding boxes
    source_image_width = frame.shape[1]
    source_image_height = frame.shape[0]
    scaled_w = int(source_image_width * min(network_input_w/source_image_width, network_input_w/source_image_height))
    scaled_h = int(source_image_height * min(network_input_h/source_image_width, network_input_h/source_image_height))

# メインループ

while (loopflg):

# Make a copy of the original frame. Get the frame's width and height.

if frame is None:

print(RED + "\nUnable to read the input." + NOCOLOR)

quit()

####################### 2. Preprocessing #######################

# Image preprocessing

# frame = cv2.flip(frame, 1)

display_image = frame

# Image preprocessing (resize, transpose, reshape)

input_image = cv2.resize(frame, (network_input_w, network_input_h), cv2.INTER_LINEAR)

input_image = input_image.astype(np.float32)

input_image = np.transpose(input_image, (2,0,1))

reshaped_image = input_image.reshape((n, c, network_input_h, network_input_w))

####################### 3. Perform Inference #######################

# Perform the inference asynchronously

req_handle = exec_net.start_async(request_id=0, inputs={input_blob: reshaped_image})

status = req_handle.wait()

####################### 4. Get results #######################

all_output_results = req_handle.outputs

        ####################### 5. Post processing for results #######################
        # Post-processing for tiny yolo v3
        # The post process consists of the following steps:
        # 1. Parse the output and filter out low scores
        # 2. Filter out duplicates using intersection over union
        # 3. Draw boxes and text

        ## 1. Tiny yolo v3 has two outputs and we check/parse both outputs
        filtered_objects = []
        for output_node_results in all_output_results.values():
            parseTinyYoloV3Output(output_node_results, filtered_objects, source_image_width, source_image_height, scaled_w, scaled_h, detection_threshold, len(label_list))

        ## 2. Filter out duplicate objects from all detected objects
        filtered_mask = get_duplicate_box_mask(filtered_objects, iou_threshold)

        ## 3. Draw rectangles and set up display texts
        for object_index in range(len(filtered_objects)):
            if filtered_mask[object_index] == True:
                # get all values from the filtered object list
                xmin = filtered_objects[object_index][0]
                ymin = filtered_objects[object_index][1]
                xmax = filtered_objects[object_index][2]
                ymax = filtered_objects[object_index][3]
                confidence = filtered_objects[object_index][4]
                class_id = filtered_objects[object_index][5]
                # Set up the text for display
                cv2.rectangle(display_image,(xmin, ymin), (xmax, ymin+20), LABEL_BG_COLOR, -1)
                # cv2.putText(display_image, label_list[class_id] + ': %.2f' % confidence, (xmin+5, ymin+15), TEXT_FONT, 0.5, TEXT_COLOR, 1)
                myfunction.cv2_putText(img = display_image,
                                       text = label_list[class_id] + ': %.2f' % confidence,
                                       org = (xmin+5, ymin+18), fontFace = fontPIL,
                                       fontScale = 14,
                                       color = TEXT_COLOR,
                                       mode = 0)
                # Set up the bounding box
                cv2.rectangle(display_image, (xmin, ymin), (xmax, ymax), BOX_COLOR, 1)

        # Draw the title
        if titleflg == 'y':
            cv2.putText(display_image, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.8, color=(200, 200, 0), lineType=cv2.LINE_AA)
        # Show the image
        window_name = title + ' (hit key to exit)'
        cv2.imshow(window_name, display_image)
        cv2.moveWindow(window_name, 10, 40)

        # Exit when any key is pressed or the window has been closed
        breakflg = False
        while True:
            key = cv2.waitKey(1)
            prop_val = cv2.getWindowProperty(window_name, cv2.WND_PROP_ASPECT_RATIO)
            if ((key != -1) or (prop_val < 0.0)):
                breakflg = True
                break
            # For a stream, do not wait for a key; go on to the next frame
            if (isstream):
                break
        if ((breakflg == False) and isstream):
            # Read the next frame
            ret, frame = cap.read()
            if ret == False:
                break
            loopflg = cap.isOpened()
        else:
            loopflg = False

    # Cleanup
    if (isstream):
        cap.release()
    cv2.destroyAllWindows()
    del net
    del exec_net
    print('\n Finished.')

# Entry point for the main function (start of execution)
if __name__ == "__main__":
    sys.exit(main())
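The listing calls `get_duplicate_box_mask(filtered_objects, iou_threshold)`, whose definition is not part of this excerpt. As a rough illustration of what step 2 (filtering duplicates by intersection over union) can look like, here is a minimal pure-Python sketch. The names `iou` and `sketch_duplicate_mask` are hypothetical, the box layout `[xmin, ymin, xmax, ymax, confidence, class_id]` is taken from the drawing loop above, and suppressing across all classes is an assumption; the actual helper may behave differently.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as [xmin, ymin, xmax, ymax, ...]."""
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    # Clamp to zero so that non-overlapping boxes give an empty intersection.
    inter = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def sketch_duplicate_mask(objects, iou_threshold):
    """Return one boolean per object; True means the box is kept.

    Hypothetical stand-in for get_duplicate_box_mask: boxes are visited from
    highest to lowest confidence, and any later box that overlaps a kept box
    by more than iou_threshold is marked as a duplicate.
    """
    keep = [True] * len(objects)
    order = sorted(range(len(objects)), key=lambda i: objects[i][4], reverse=True)
    for pos, i in enumerate(order):
        if not keep[i]:
            continue
        for j in order[pos + 1:]:
            if keep[j] and iou(objects[i], objects[j]) > iou_threshold:
                keep[j] = False
    return keep


# Two heavily overlapping boxes and one separate box.
detections = [
    [10, 10, 110, 110, 0.90, 2],
    [20, 20, 120, 120, 0.70, 2],
    [300, 300, 360, 360, 0.80, 0],
]
mask = sketch_duplicate_mask(detections, iou_threshold=0.25)
print(mask)  # [True, False, True]
```

With the 0.25 threshold used in the runs below, the second box (IoU of about 0.68 with the first) is dropped and the other two are kept.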

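| Command option | Default | Meaning |
|---|---|---|
| -h, --help | - | Show help |
| --ir | /home/mizutu/model/public/FP32/yolo-v3-tiny-tf.xml | Trained model file in IR format |
| -lb, --labels | coco.names_jp | Labels file (coco.names_jp/coco.names) |
| -i, --input | cam | Camera (cam), or a video or still image file |
| -d, --device | CPU | Target device (CPU/MYRIAD) |
| --threshold | 0.60 | Detection threshold for objects |
| --iou | 0.25 | Allowed overlap between objects (Intersection over Union) |
| -t, --title | y | Show the title (y/n) |
| -s, --speed | y | Show the speed measurement (y/n) |
| -o, --out | non | File path for writing the processed result |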
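The argument-parsing part of `object_detect_yolo3_2.py` is not shown in this excerpt. The following is only a sketch of how options like these could be declared with `argparse`. Flag names and defaults follow the script's own `-h` output and run logs, except for the `cam` default of `-i`, which comes from the table; the function name `build_parser` and the help strings are assumptions.

```python
import argparse


def build_parser():
    """Hypothetical parser mirroring the options listed for the script."""
    parser = argparse.ArgumentParser(description="TinyYOLO V3 object detection (sketch)")
    parser.add_argument("--ir", default="/home/mizutu/model/public/FP32/yolo-v3-tiny-tf.xml",
                        help="Path to the network IR xml file")
    parser.add_argument("-lb", "--labels", default="coco.names_jp",
                        help="Path to the labels file")
    parser.add_argument("-i", "--input", default="cam",
                        help="Image or video file, or 'cam' for a camera stream")
    parser.add_argument("-d", "--device", default="CPU",
                        help="Target device, e.g. CPU or MYRIAD")
    parser.add_argument("--threshold", type=float, default=0.6,
                        help="Detection threshold")
    parser.add_argument("--iou", type=float, default=0.25,
                        help="Intersection over union threshold")
    parser.add_argument("-t", "--title", default="y", help="Title flag (y/n)")
    parser.add_argument("-s", "--speed", default="y", help="Speed display flag (y/n)")
    parser.add_argument("-o", "--out", default="non",
                        help="Output path for the processed image")
    return parser


# Only -i is given, as in the example runs; everything else falls back to defaults.
args = build_parser().parse_args(["-i", "~/Videos/car.mp4"])
print(args.input, args.device, args.threshold, args.iou)
```

Giving `--threshold` and `--iou` a `type=float` means the values arrive as numbers rather than strings, so they can be compared directly against confidences and IoU values.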

$ python3 object_detect_yolo3_2.py -h

--- TinyYOLO V3 Object detection ---

4.5.2-openvino

OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3

usage: object_detect_yolo3_2.py [-h] [--ir IR_File] [-lb LABEL_FILE]
                                [-i IMAGE_FILE or cam] [-d DEVICE]
                                [--threshold FLOAT] [--iou FLOAT] [-t TITLE]
                                [-s SPEED] [-o IMAGE_OUT]

optional arguments:
  -h, --help            show this help message and exit
  --ir IR_File          Absolute path to the neural network IR xml
                        file.Default value is
                        /home/mizutu/model/public/FP32/yolo-v3-tiny-tf.xml
  -lb LABEL_FILE, --labels LABEL_FILE
                        Absolute path to labels file.Default value is
                        coco.names_jp
  -i IMAGE_FILE or cam, --input IMAGE_FILE or cam
                        Absolute path to image file or cam for camera stream.
  -d DEVICE, --device DEVICE
                        Optional. Specify a target device to infer on. CPU,
                        GPU, FPGA, HDDL or MYRIAD is acceptable. The demo will
                        look for a suitable plugin for the device specified.
                        Default value is CPU
  --threshold FLOAT     Threshold for detection.
  --iou FLOAT           Intersection Over Union.
  -t TITLE, --title TITLE
                        Program title flag.(y/n) Default value is 'y'
  -s SPEED, --speed SPEED
                        Speed display flag.(y/n) Default calue is 'y'
  -o IMAGE_OUT, --out IMAGE_OUT
                        Processed image file path. Default value is 'non'

$ python3 object_detect_yolo3_2.py -i ~/Videos/car.mp4

--- TinyYOLO V3 Object detection ---

4.5.2-openvino

OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3

Running OpenVINO NCS Tensorflow TinyYolo v3 example...

Displaying image with objects detected in GUI...

Click in the GUI window and hit any key to exit.

object_detect_yolo3_2.py:289: DeprecationWarning: 'inputs' property of IENetwork class is deprecated. To access DataPtrs user need to use 'input_data' property of InputInfoPtr objects which can be accessed by 'input_info' property.

input_blob = next(iter(net.inputs))

Tiny Yolo v3: Starting application...

- IR File : /home/mizutu/model/public/FP32/yolo-v3-tiny-tf.xml

- Input Shape : [1, 3, 416, 416]

- Output Shapes:

- output #0 name: conv2d_12/Conv2D/YoloRegion

- output shape: [1, 255, 26, 26]

- output #1 name: conv2d_9/Conv2D/YoloRegion

- output shape: [1, 255, 13, 13]

- Labels File : coco.names_jp

- Image File : /home/mizutu/Videos/car.mp4

- Threshold : 0.6

- Intersection Over Union: 0.25

- Device : CPU

- Program Title: y

- Speed flag : y

- Processed out: non

object_detect_yolo3_2.py:367: DeprecationWarning: 'outputs' property of InferRequest is deprecated. Please instead use 'output_blobs' property.

all_output_results = req_handle.outputs

FPS average: 12.10

Finished.

$ python3 object_detect_yolo3_2.py -i ~/Videos/car_person.mp4

--- TinyYOLO V3 Object detection ---

4.5.2-openvino

OpenVINO inference_engine: 2.1.2021.3.0-2787-60059f2c755-releases/2021/3

Running OpenVINO NCS Tensorflow TinyYolo v3 example...

Displaying image with objects detected in GUI...

Click in the GUI window and hit any key to exit.

object_detect_yolo3_2.py:289: DeprecationWarning: 'inputs' property of IENetwork class is deprecated. To access DataPtrs user need to use 'input_data' property of InputInfoPtr objects which can be accessed by 'input_info' property.

input_blob = next(iter(net.inputs))

Tiny Yolo v3: Starting application...

- IR File : /home/mizutu/model/public/FP32/yolo-v3-tiny-tf.xml

- Input Shape : [1, 3, 416, 416]

- Output Shapes:

- output #0 name: conv2d_12/Conv2D/YoloRegion

- output shape: [1, 255, 26, 26]

- output #1 name: conv2d_9/Conv2D/YoloRegion

- output shape: [1, 255, 13, 13]

- Labels File : coco.names_jp

- Image File : /home/mizutu/Videos/car_person.mp4

- Threshold : 0.6

- Intersection Over Union: 0.25

- Device : CPU

- Program Title: y

- Speed flag : y

- Processed out: non

object_detect_yolo3_2.py:367: DeprecationWarning: 'outputs' property of InferRequest is deprecated. Please instead use 'output_blobs' property.

all_output_results = req_handle.outputs

FPS average: 12.50

Finished.

$ cd ~/workspace/apps

◦ CPU

$ python3 object_detect_yolo3_2.py -i ~/Videos/car.mp4

$ python3 object_detect_yolo3_2.py -i ~/Videos/car_person.mp4

$ python3 object_detect_yolo3_2.py -i ~/Images/desk-image.jpg