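Private AI Study Group > Tesseract4

Working toward practical AI development, we build an "OCR application" with the character recognition engine Tesseract. (Part 2)

The YAML files are handled with the PyYAML package.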

OCR YAML file test:
  BasicCoordinates:
  - 640
  - 480
  FileName: C:/filename
  KyeName:
    Location:
    - 10
    - 20
    - 30
    - 40
    PreProcess: 0
    Text: 請求書
  Date:
    Location:
    - 10
    - 20
    - 30
    - 40
    PreProcess: 0
    Text: 2022年01月11日
    :
KeyTable:
- KyeName
- Date
- CompName
- Title
- B4Tax
- TotalMoney
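The nested layout above maps directly onto Python dictionaries and lists. The following is a minimal sketch of a round trip through PyYAML, assuming the package is installed; the `layout` dictionary is a hypothetical in-memory copy of part of the sample, not data read from a real file.

```python
import yaml

# Hypothetical in-memory copy of part of the sample layout shown above.
layout = {
    "OCR YAML file test": {
        "BasicCoordinates": [640, 480],
        "FileName": "C:/filename",
        "KyeName": {
            "Location": [10, 20, 30, 40],
            "PreProcess": 0,
            "Text": "請求書",
        },
    },
    "KeyTable": ["KyeName", "Date"],
}

# allow_unicode=True writes Japanese text as-is instead of \u escapes.
text = yaml.safe_dump(layout, allow_unicode=True)
print(text)

# safe_load turns the YAML text back into dictionaries and lists.
restored = yaml.safe_load(text)
print(restored["OCR YAML file test"]["KyeName"]["Location"])
```

`safe_dump` and `safe_load` are used here because the data consists only of plain dictionaries, lists, strings and integers.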

(py37) $ cd ~/workspace_py37/tryocr

(py37) $ python3 mylib_yaml.py

:

['KyeName', 'Date', 'CompName', 'Title', 'B4Tax', 'TotalMoney'] 6

[640, 480] C:/filename

[10, 20, 30, 40] 0 請求書

[10, 20, 30, 40] 0 2022年01月11日

[10, 20, 30, 40] 0 会社名

[10, 20, 30, 40] 0 案件名

[10, 20, 30, 40] 0 税抜金額

[10, 20, 30, 40] 0 税込み金額

# -*- coding: utf-8 -*-

##------------------------------------------
## My Library YAML file process
##
## 2022.01.11 Masahiro Izutsu
##------------------------------------------
## mylib_yaml.py
## 2022.01.14 added support for appending

import yaml
import codecs
import os

class YamlProcess:
    logf = False
    out_path = None

    # Initialization
    #   out_path: output file path
    #   flg:      log output flag
    def __init__(self, out_path, flg = False):
        self.logf = flg
        self.out_path = out_path

    # Append (or update) a key inside a section
    #   section: section name
    #   key:     data name
    #   data:    object
    def write_append_key(self, section, key, data):
        if os.path.isfile(self.out_path):
            with codecs.open(self.out_path, 'r+', 'utf-8') as f:
                obj = None
                try:
                    obj = yaml.safe_load(f)
                except yaml.YAMLError as exc:
                    print(exc)
                if obj is not None and section in obj:
                    obj[section][key] = data
                    f.seek(0)
                    yaml.dump(obj, f, encoding='utf-8', allow_unicode=True)
                    # Drop leftovers when the new content is shorter than the old one
                    f.truncate()
                    if self.logf:
                        print(yaml.dump(obj))
                else:
                    new_data = {key: data}
                    self.write_append(section, new_data)
        else:
            new_data = {key: data}
            self.write_section(section, new_data)

    # Append (or replace) a section
    #   section: section name
    #   data:    object
    def write_append(self, section, data):
        if os.path.isfile(self.out_path):
            with codecs.open(self.out_path, 'r+', 'utf-8') as f:
                obj = None
                try:
                    obj = yaml.safe_load(f)
                except yaml.YAMLError as exc:
                    print(exc)
                if obj is None:
                    # Empty or unreadable file: start from an empty mapping
                    obj = {}
                obj[section] = data
                f.seek(0)
                yaml.dump(obj, f, encoding='utf-8', allow_unicode=True)
                # Drop leftovers when the new content is shorter than the old one
                f.truncate()
                if self.logf:
                    print(yaml.dump(obj))
        else:
            self.write_section(section, data)

    # Write a section (creates or overwrites the file)
    #   section: section name
    #   data:    object
    def write_section(self, section, data):
        obj = {section: data}
        self.write_object(obj)

    # Write an object (creates or overwrites the file)
    #   obj: object
    def write_object(self, obj):
        with codecs.open(self.out_path, 'w', 'utf-8') as f:
            yaml.dump(obj, f, encoding='utf-8', allow_unicode=True)
            if self.logf:
                print(yaml.dump(obj))

    # Read section / sub-section / key
    #   section: section name
    #   sub_sec: sub-section name
    #   key:     data name
    def load_section_sub_key(self, section, sub_sec, key):
        obj = self.load_section_key(section, sub_sec)
        data = None
        if obj is not None and key in obj:
            data = obj[key]
        return data

    # Read section / key
    #   section: section name
    #   key:     data name
    def load_section_key(self, section, key):
        obj = self.load_section(section)
        data = None
        if obj is not None and key in obj:
            data = obj[key]
        return data

    # Read a section
    #   returns: section object (None if not found)
    def load_section(self, section):
        obj = self.load_object()
        data = None
        if obj is not None and section in obj:
            data = obj[section]
        return data

    # Read the whole object
    #   returns: object (None if the file cannot be parsed)
    def load_object(self):
        obj = None
        with codecs.open(self.out_path, 'r', 'utf-8') as f:
            try:
                obj = yaml.safe_load(f)
            except yaml.YAMLError as exc:
                print(exc)
        return obj

##---------------
def main():
    from os.path import expanduser

    # Create the layout file
    out_path1 = expanduser('./output_test.yaml')
    name1 = 'file1'
    myyaml1 = YamlProcess(out_path1, True)

    path = 'C:/filename'
    base_cord = [640, 480]
    locat = [10, 20, 30, 40]
    prepros = 0

    # Write the basic information
    data = {'.FileName': path, '.BasicCoordinates': base_cord}
    myyaml1.write_append(name1, data)

    # Write the layout information
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '請求書'}
    myyaml1.write_append_key(name1, 'KyeName', data)
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '2022年01月11日'}
    myyaml1.write_append_key(name1, 'Date', data)
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '会社名'}
    myyaml1.write_append_key(name1, 'CompName', data)
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '案件名'}
    myyaml1.write_append_key(name1, 'Title', data)
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '税抜金額'}
    myyaml1.write_append_key(name1, 'B4Tax', data)
    data = {'Location': locat, 'PreProcess': prepros, 'Text': '税込み金額'}
    myyaml1.write_append_key(name1, 'TotalMoney', data)

    # Create the key table
    out_path2 = expanduser('./output_table.yaml')
    myyaml2 = YamlProcess(out_path2, True)
    key_table = ['KyeName', 'Date', 'CompName', 'Title', 'B4Tax', 'TotalMoney']
    myyaml2.write_append('KeyTable', key_table)

    #----------------------------------
    # Get the key table
    table = myyaml2.load_section('KeyTable')
    print(table, len(table))

    data = myyaml1.load_section(name1)

    # Get the basic information
    base_size = data['.BasicCoordinates']
    file_name = data['.FileName']
    print(base_size, file_name)

    # Get the layout information
    location = []
    prepros = []
    text = []
    i = 0
    for tn in table:
        location.append(data[tn]['Location'])
        prepros.append(data[tn]['PreProcess'])
        text.append(data[tn]['Text'])
        print(location[i], prepros[i], text[i])
        i = i + 1

if __name__ == "__main__":
    main()
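The append methods in the listing above reopen the YAML file in `'r+'` mode and rewrite it from offset 0. When a file is rewritten in place like this, it has to be truncated after the write, otherwise bytes from a longer previous version stay at the end. The following is a minimal sketch of that effect using only the standard library; `rewrite_in_place` and `demo` are hypothetical helpers written for this illustration.

```python
import os
import tempfile

def rewrite_in_place(path, text, truncate):
    """Overwrite a file from offset 0 through an 'r+' handle."""
    with open(path, "r+", encoding="utf-8") as f:
        f.read()              # read the old content first, then rewind
        f.seek(0)
        f.write(text)
        if truncate:
            f.truncate()      # cut the file at the current position

def demo():
    """Rewrite a longer file with shorter text, without and with truncate()."""
    results = {}
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "demo.yaml")
        for truncate in (False, True):
            with open(path, "w", encoding="utf-8") as f:
                f.write("Text: a long previous value\n")
            rewrite_in_place(path, "Text: short\n", truncate)
            with open(path, encoding="utf-8") as f:
                results[truncate] = f.read()
    return results

results = demo()
print(repr(results[False]))   # old tail is still present
print(repr(results[True]))    # only the new content remains
```

Without truncation the result is no longer the intended YAML, which matters when a stored `Text` value is replaced by a shorter one.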

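Note: because of a limitation of the site, the half-width braces "{" and "}" are displayed as full-width braces "{" and "}", so take care when copying the code.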

| Command option | Default | Meaning |
|---|---|---|
| -h, --help | | Show help |
| -i, --image | images/sample0.png | Input image file |
| -l, --language | jpn | Language |
| --layout | 6 | Tesseract layout (0-13) |
| -t, --title | n | Show title (y/n) |
| --log | y | Log output flag (y/n) |
| --mlt | y | Continuous editing flag (y/n) |
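The options in the table can be sketched with `argparse` as below. This is an illustrative variant, not the parser used by the programs on this page: the `yn_flag` helper and the range check on `--layout` are assumptions added here so that invalid values are rejected on the command line.

```python
import argparse

def yn_flag(value):
    """Convert a 'y'/'n' command-line value to a bool (hypothetical helper)."""
    if value not in ("y", "n"):
        raise argparse.ArgumentTypeError("expected 'y' or 'n', got %r" % value)
    return value == "y"

def build_parser():
    parser = argparse.ArgumentParser()
    parser.add_argument("-i", "--image", default="images/sample0.png",
                        help="input image file")
    parser.add_argument("-l", "--language", default="jpn", help="language")
    parser.add_argument("--layout", type=int, choices=range(0, 14), default=6,
                        help="Tesseract layout (0-13)")
    parser.add_argument("-t", "--title", type=yn_flag, default=False,
                        help="show title (y/n)")
    parser.add_argument("--log", type=yn_flag, default=True,
                        help="log output flag (y/n)")
    parser.add_argument("--mlt", type=yn_flag, default=True,
                        help="continuous editing flag (y/n)")
    return parser

# Parse an explicit argument list instead of sys.argv for the demonstration.
args = build_parser().parse_args(["--layout", "3", "--log", "n"])
print(args.image, args.layout, args.title, args.log, args.mlt)
```

Converting the flags to booleans at parse time avoids repeated comparisons with `'y'` later in the program.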

KeyDisp:
- 請求書
- 日 付
- 会社名
- 案件名
- 税抜金額
- 税込金額
KeyTable:
- KyeName
- Date
- CompName
- Title
- B4Tax
- TotalMoney
Template:     ← keys (file names) of the registered templates, written by the application
- sample0.png
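`KeyDisp` and `KeyTable` are parallel lists: the n-th display label belongs to the n-th internal key. The following is a minimal sketch of pairing them, assuming the configuration has already been loaded into a dictionary; `config` is a hypothetical in-memory copy and `label_map` is a helper introduced only for this illustration.

```python
# The configuration as it would look after loading (hypothetical in-memory copy).
config = {
    "KeyDisp": ["請求書", "日 付", "会社名", "案件名", "税抜金額", "税込金額"],
    "KeyTable": ["KyeName", "Date", "CompName", "Title", "B4Tax", "TotalMoney"],
    "Template": ["sample0.png"],
}

def label_map(cfg):
    """Map each internal key to its display label, checking the lists line up."""
    keys, labels = cfg["KeyTable"], cfg["KeyDisp"]
    if len(keys) != len(labels):
        raise ValueError("KeyTable and KeyDisp must have the same length")
    return dict(zip(keys, labels))

labels = label_map(config)
print(labels["B4Tax"])
```

The length check catches a configuration file in which one list was edited without the other.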

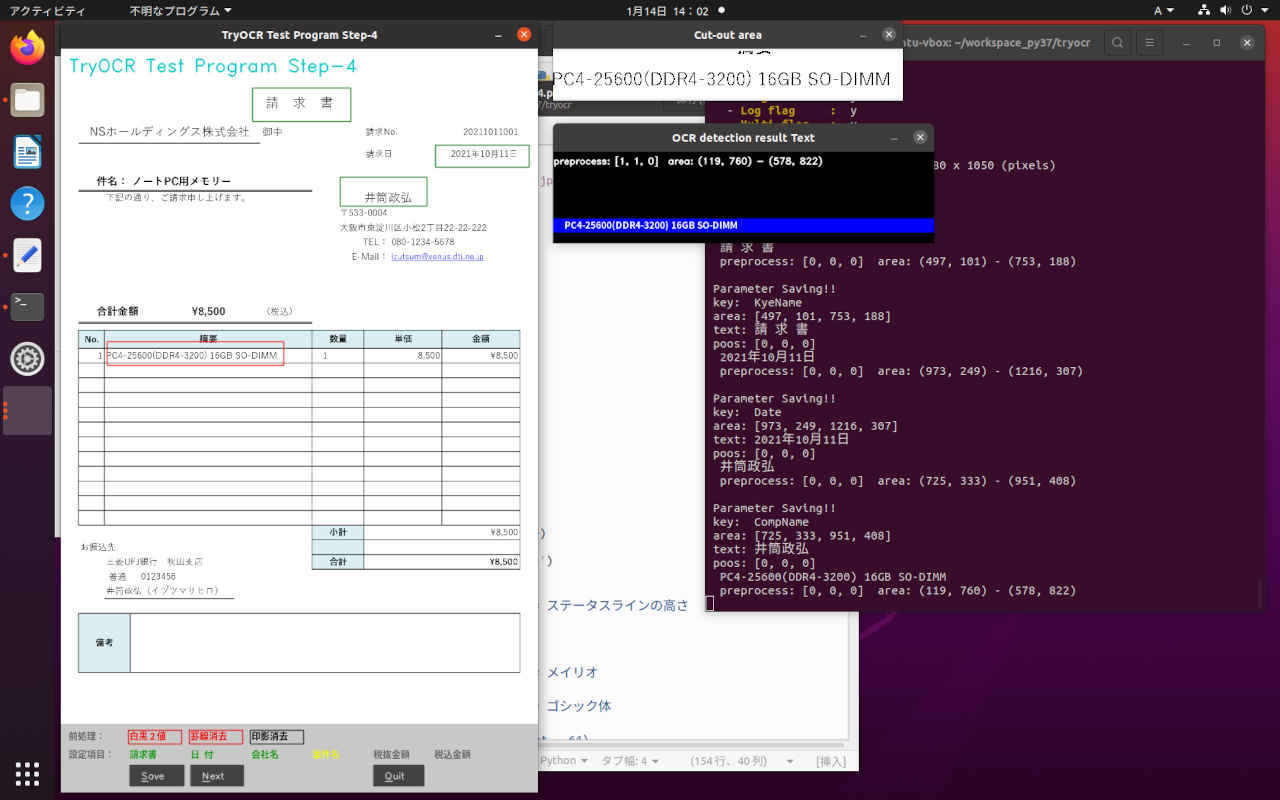

(py37) $ cd ~/workspace_py37/tryocr

(py37) $ python3 tryocr_step4.py

--- TryOCR Test Program Step-4 ---

OpenCV version 4.5.2

TryOCR Test Program Step-4: Starting application...

- Image File : images/sample0.png

- Language : jpn

- Layout : 6

- Program Title: n

- Log flag : y

- Multi flag : y

file: <images/sample0.png>

Screen size: width x height = 1680 x 1050 (pixels)

:

Finished.

sample0.png:                      ← file name of the source image used as the template
  .BasicCoordinates:              ← size of the source image [width, height]
  - 1240
  - 1754
  .FileName: images/sample0.png   ← path of the source image file
  B4Tax:                          ← data section for 'price before tax'
    Location:                     ← region [Xmin, Ymin, Xmax, Ymax]
    - 1103
    - 1227
    - 1190
    - 1281
    PreProcess: 2                 ← preprocessing [0=none, 1=black-and-white conversion, 2=ruled-line removal, 4=seal removal]
    Text: \8,500
  CompName:                       ← data section for 'company name'
    Location:                     ← region [Xmin, Ymin, Xmax, Ymax]
    - 725
    - 333
    - 951
      :  (continued)

# -*- coding: utf-8 -*-

##------------------------------------------
## TryOCR Test Program Step-4 Ver 0.01
## with tesseract & PyOCR & cvui
## platform: linux / windows
##
## 2022.01.12 Masahiro Izutsu
##------------------------------------------
## tryocr_step4.py
## 2022.01.17 ver 0.01 added error handling
## Preprocessing: 'binarization', 'ruled-line removal', 'seal removal'
#  (selected with the mouse)

# Color Escape Code
GREEN = '\033[1;32m'
RED = '\033[1;31m'
NOCOLOR = '\033[0m'
YELLOW = '\033[1;33m'

# Constants
LINE_WORD_BOX_COLOR = (0, 0, 240)
WORD_BOX_COLOR = (255, 0, 0)
CONTENTS_COLOR = (0, 128, 0)

from os.path import expanduser
DEF_INPUT_FILE = expanduser('images/sample0.png')
CONFIG_FILE = expanduser('tryocr.yaml')
OUTPUT_FILE = expanduser('tryocr_templ.yaml')

# Imports
from PIL import Image
import sys
import pyocr
import pyocr.builders
import cv2
import cvui
import argparse
import myfunction
import numpy as np
import mylib_gui
import mylib_frame
import mylib_preprocess
import mylib_screen
import platform
from tkinter import filedialog
import mylib_yaml
import os

# Title and version information
title = 'TryOCR Test Program Step-4 Ver 0.01'
print(GREEN)
print('--- {} ---'.format(title))
print(' OpenCV version {} '.format(cv2.__version__))
print(NOCOLOR)

# Parses arguments for the application
def parse_args():
    parser = argparse.ArgumentParser()
    parser.add_argument('-i', '--image', metavar = 'IMAGE_FILE', type = str, default = DEF_INPUT_FILE,
                        help = 'Absolute path to image file. Default value is \'' + DEF_INPUT_FILE + '\'')
    parser.add_argument('-l', '--language', metavar = 'LANGUAGE',
                        default = 'jpn',
                        help = 'Language. Default value is \'jpn\'')
    parser.add_argument('--layout', metavar = 'LAYOUT',
                        default = 6,
                        help = 'Tesseract layout Default value is 6')
    parser.add_argument('-t', '--title', metavar = 'TITLE',
                        default = 'n',
                        help = 'Program title flag.(y/n) Default value is \'n\'')
    parser.add_argument('--log', metavar = 'LOG',
                        default = 'y',
                        help = 'Log flag.(y/n) Default value is \'y\'')
    parser.add_argument('--mlt', metavar = 'MULTI',
                        default = 'y',
                        help = 'Multi flag.(y/n) Default value is \'y\'')
    return parser

# Display the basic information
def display_info(image, lang, layout, titleflg, logflg, mltflg):
    print(YELLOW + title + ': Starting application...' + NOCOLOR)
    print(' - ' + YELLOW + 'Image File : ' + NOCOLOR, image)
    print(' - ' + YELLOW + 'Language : ' + NOCOLOR, lang)
    print(' - ' + YELLOW + 'Layout : ' + NOCOLOR, layout)
    print(' - ' + YELLOW + 'Program Title: ' + NOCOLOR, titleflg)
    print(' - ' + YELLOW + 'Log flag : ' + NOCOLOR, logflg)
    print(' - ' + YELLOW + 'Multi flag : ' + NOCOLOR, mltflg)

# Find the next item to edit (index of the first unsaved item, -1 if none)
def ckeck_mode(mode):
    nxt = -1
    for n in range(len(mode)):
        if mode[n] == 0:
            nxt = n
            break
    return nxt

# Write the basic information
def write_base_info(myyaml, section, path, base_cord):
    data = {'.FileName': path, '.BasicCoordinates': base_cord}
    myyaml.write_append(section, data)

def write_base_info1(myyaml, section, path):
    myyaml.write_append_key(section, '.FileName', path)

def write_base_info2(myyaml, section, base_cord):
    myyaml.write_append_key(section, '.BasicCoordinates', base_cord)

# Write the layout information
def write_area_info(myyaml, section, key, locat, prepros, text):
    data = {'Location': locat, 'PreProcess': prepros, 'Text': text}
    myyaml.write_append_key(section, key, data)

# Read the layout information
def read_area_info(myyaml, section, sub_sec):
    locat = myyaml.load_section_sub_key(section, sub_sec, 'Location')
    prepros = myyaml.load_section_sub_key(section, sub_sec, 'PreProcess')
    text = myyaml.load_section_sub_key(section, sub_sec, 'Text')
    return locat, prepros, text

# Write the registered section name (file name)
def write_section_name(myyaml, name):
    templ_lst = myyaml.load_section('Template')
    if templ_lst == None:
        templ_lst = []
    if name not in templ_lst:
        templ_lst.append(name)
    myyaml.write_append('Template', templ_lst)

# Image annotation editor: main processing
def image_annotation(lena_frame_org, name, lang='jpn', layout=6, titleflg=False, logflag=False, mltflg=True):
    WINDOW_NAME = title
    ROI_WINDOW = 'Cut-out area'
    ROI_POPUP = 'OCR detection result Text'
    preprocess_mode = [0, 0, 0]
    set_mode = 0
    wlock1 = 0
    wlock2 = 0
    wlock3 = 0

    # Settings
    conf_file = CONFIG_FILE
    out_file = OUTPUT_FILE
    section_name = name
    myyaml_cnf = mylib_yaml.YamlProcess(conf_file)
    myyaml_out = mylib_yaml.YamlProcess(out_file)
    key_table = myyaml_cnf.load_section('KeyTable')
    key_disp = myyaml_cnf.load_section('KeyDisp')
    table_n = len(key_table)
    save_mode = [0] * table_n
    status_h = 90                                   # height of the status line

    # Japanese font
    if platform.system()=='Windows':
        fontPIL = 'meiryo.ttc'                      # Meiryo
    else:
        fontPIL = 'NotoSansCJK-Bold.ttc'            # Gothic

    # Get the display resolution (height - 64 on Ubuntu)
    monitor_height, monitor_width = mylib_screen.get_display_size(logflag)
    maxsize = monitor_height - 64 - 100

    # Image preprocessing
    imgpros = mylib_preprocess.ImagePreprocess(False)   # initialize

    # OCR
    tools = pyocr.get_available_tools()
    if len(tools) == 0:
        print(RED + "\nOCR tool Not found." + NOCOLOR)
        quit()
    tool = tools[0]

    # mylib_frame library
    imgfr = mylib_frame.ImageFrame(lena_frame_org)  # initialize
    imgfr.set_screen_size(monitor_width, monitor_height)
    lena_frame = imgfr.frame_resize(maxsize)
    lena_frame_h, lena_frame_w = lena_frame.shape[:2]

    # Reserve the status area (status_h pixels at the bottom of the screen)
    frame = np.zeros((lena_frame_h + status_h, lena_frame_w, 3), np.uint8)
    frame[:,:,:] = 200
    popup_frame = np.zeros((120, 500, 3), np.uint8)
    anchor = cvui.Point()
    roi = cvui.Rect(0, 0, 0, 0)
    working = False
    frame_h, frame_w = frame.shape[:2]
    outf = False
    org_h, org_w = imgfr.get_original_size()
    scale_h, scale_w = imgfr.get_scale()
    write_base_info2(myyaml_out, section_name, [org_w, org_h])

    if logflag:
        print('\n original h x w : {:=5} x {:=5}'.format(org_h, org_w))
        print(' display h x w : {:=5} x {:=5}'.format(lena_frame_h, lena_frame_w))
        print(' scale h x w : {:.3f} x {:.3f}'.format(scale_h, scale_w))
        print(' -----------')

    # Init cvui and tell it to create a OpenCV window, i.e. cv.namedWindow(WINDOW_NAME).
    cv2.namedWindow(WINDOW_NAME, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)
    cvui.init(WINDOW_NAME)

    # OCR item settings
    ocr_text = ''
    ocr_rect = [0, 0, 0, 0]

    # Storage for the settings
    text_save = []
    save_rect = []
    for n in range(table_n):
        text_save.append(ocr_text)
        save_rect.append(cvui.Rect(0, 0, 0, 0))

    # Layout data already registered
    for n in range(table_n):
        # Read the layout information
        ocr_rect, pros, ocr_text = read_area_info(myyaml_out, name, key_table[n])
        save_mode[n] = 1 if ocr_rect != None and pros != None and ocr_text != None else 0
        if ocr_text == None:
            ocr_text = ''
        if ocr_rect == None:
            x0 = 0
            y0 = 0
            x1 = 0
            y1 = 0
        else:
            # Original coordinates -> display coordinates
            x0, y0 = imgfr.get_org2res_xy(ocr_rect[0], ocr_rect[1])
            x1, y1 = imgfr.get_org2res_xy(ocr_rect[2], ocr_rect[3])
        # Save the text and the region
        text_save[n] = ocr_text
        save_rect[n].x = x0
        save_rect[n].y = y0
        save_rect[n].width = x1 - x0
        save_rect[n].height = y1 - y0

    next = ckeck_mode(save_mode)
    if next < 0:
        set_mode = 0
        if logflag:
            print(' -Saved all parameters!!-')
    else:
        set_mode = next

    ## --

    # Processing loop
    while (True):
        # Place the image in the upper part of the screen
        frame[0:lena_frame_h,:] = lena_frame
        frame[lena_frame_h:,:,:] = 200

        # Mouse events
        if cvui.mouse(cvui.LEFT_BUTTON, cvui.DOWN):
            if cvui.mouse().y < lena_frame_h:
                # Put the anchor at the mouse pointer
                anchor.x = cvui.mouse().x
                anchor.y = cvui.mouse().y
                # Signal that work is in progress (the window is not updated meanwhile)
                working = True

        if cvui.mouse(cvui.LEFT_BUTTON, cvui.IS_DOWN):
            if cvui.mouse().y < lena_frame_h:
                # Set the region
                width = cvui.mouse().x - anchor.x
                height = cvui.mouse().y - anchor.y
                roi.x = anchor.x + width if width < 0 else anchor.x
                roi.y = anchor.y + height if height < 0 else anchor.y
                roi.width = abs(width)
                roi.height = abs(height)
                # Show the coordinates and the size
                cvui.printf(frame, roi.x + 5, roi.y + 5, 0.3, 0xff0000, '(%d,%d)', roi.x, roi.y)
                cvui.printf(frame, cvui.mouse().x + 5, cvui.mouse().y + 5, 0.3, 0xff0000, 'w:%d, h:%d', roi.width, roi.height)

        if cvui.mouse(cvui.UP):
            if cvui.mouse().y < lena_frame_h:
                # Region selection finished
                working = False
                outf = True
                wlock1 = 0
                wlock2 = 0
                wlock3 = 0

        # Keep the region inside the image
        lenaRows, lenaCols, lenaChannels = lena_frame.shape
        if roi.x < 0:
            roi.x = 0
        if roi.y < 0:
            roi.y = 0
        if roi.x + roi.width > lena_frame_w:
            roi.width = lena_frame_w - roi.x
        if roi.y + roi.height > lena_frame_h:
            roi.height = lena_frame_h - roi.y

        # Render the selected region
        cvui.rect(frame, roi.x, roi.y, roi.width, roi.height, 0xff0000)

        # Show the regions already set
        for n in range(table_n):
            rc = save_rect[n]
            if rc.width > 0 and rc.height > 0:
                cvui.rect(frame, rc.x, rc.y, rc.width, rc.height, 0x008000)
            # Draw the text
            if len(text_save[n]) > 0:
                myfunction.cv2_putText(img = frame,
                                       text = text_save[n],
                                       org = (rc.x, rc.y),
                                       fontFace = fontPIL,
                                       fontScale = 12,
                                       color = (0,160,0),
                                       mode = 0)

        # -------------------------
        # Status line - preprocessing:
        fs = 12
        xs = 80
        rc = cvui.Rect(10, lena_frame_h+8, xs-10, fs + 6)
        myfunction.cv2_putText(frame, '前処理:', (rc.x, rc.y), fontPIL, fs, (100,100,100), 1)
        cr = (0,0,0) if preprocess_mode[0] == 0 else (0,0,240)
        myfunction.cv2_putText(frame, '白黒2値', (rc.x+xs, rc.y), fontPIL, fs, cr, 1)
        cr = (0,0,0) if preprocess_mode[1] == 0 else (0,0,240)
        myfunction.cv2_putText(frame, '罫線消去', (rc.x+xs*2, rc.y), fontPIL, fs, cr, 1)
        cr = (0,0,0) if preprocess_mode[2] == 0 else (0,0,240)
        myfunction.cv2_putText(frame, '印影消去', (rc.x+xs*3, rc.y), fontPIL, fs, cr, 1)

        crx = 0x202020 if preprocess_mode[0] == 0 else 0xe00000
        cvui.rect(frame, rc.x+xs-2, rc.y, rc.width, rc.height, crx)
        crx = 0x202020 if preprocess_mode[1] == 0 else 0xe00000
        cvui.rect(frame, rc.x+xs*2-2, rc.y, rc.width, rc.height, crx)
        crx = 0x202020 if preprocess_mode[2] == 0 else 0xe00000
        cvui.rect(frame, rc.x+xs*3-2, rc.y, rc.width, rc.height, crx)

        status0 = cvui.iarea(rc.x+xs, rc.y, rc.width, rc.height)
        status1 = cvui.iarea(rc.x+xs*2, rc.y, rc.width, rc.height)
        status2 = cvui.iarea(rc.x+xs*3, rc.y, rc.width, rc.height)
        if status0 == cvui.CLICK:
            preprocess_mode[0] = 1 if preprocess_mode[0] == 0 else 0
            outf = True
        if status1 == cvui.CLICK:
            preprocess_mode[1] = 1 if preprocess_mode[1] == 0 else 0
            outf = True
        if status2 == cvui.CLICK:
            preprocess_mode[2] = 1 if preprocess_mode[2] == 0 else 0
            outf = True

        # Status line - items to set:
        rc = cvui.Rect(10, lena_frame_h+32, xs-10, fs+8)
        myfunction.cv2_putText(frame, '設定項目:', (rc.x, rc.y), fontPIL, fs, (100,100,100), 1)
        n = 1
        for disp in key_disp:
            cr = (0,240,240) if set_mode == n - 1 else (100,100,100) if save_mode[n - 1] == 0 else (0,160,0)
            myfunction.cv2_putText(frame, disp, (rc.x + n*xs, rc.y), fontPIL, fs, cr, 1)
            n = n + 1

        status10 = cvui.iarea(rc.x+xs, rc.y, rc.width, rc.height)
        status11 = cvui.iarea(rc.x+2*xs, rc.y, rc.width, rc.height)
        status12 = cvui.iarea(rc.x+3*xs, rc.y, rc.width, rc.height)
        status13 = cvui.iarea(rc.x+4*xs, rc.y, rc.width, rc.height)
        status14 = cvui.iarea(rc.x+5*xs, rc.y, rc.width, rc.height)
        status15 = cvui.iarea(rc.x+6*xs, rc.y, rc.width, rc.height)
        if status10 == cvui.CLICK:
            set_mode = 0
        if status11 == cvui.CLICK:
            set_mode = 1
        if status12 == cvui.CLICK:
            set_mode = 2
        if status13 == cvui.CLICK:
            set_mode = 3
        if status14 == cvui.CLICK:
            set_mode = 4
        if status15 == cvui.CLICK:
            set_mode = 5

        # Status line - action buttons
        btn_x = 90
        btn_y = lena_frame_h + 54
        btn_w = 70
        btn_h = 32

        # 'Save'
        if cvui.button(frame, btn_x, btn_y, "&Save"):
            if len(ocr_text) > 0:
                save_mode[set_mode] = 1
                save_rect[set_mode] = roi
                text_save[set_mode] = ocr_text
                # Record the layout information
                prepros = preprocess_mode[0] + preprocess_mode[1]*2 + preprocess_mode[2]*4
                write_area_info(myyaml_out, section_name, key_table[set_mode], ocr_rect, prepros, ocr_text)
                write_section_name(myyaml_cnf, section_name)
                if logflag:
                    print('\n Parameter Saving!!')
                    print(' key: ', key_table[set_mode])
                    print(' area:', ocr_rect)
                    print(' text:', ocr_text)
                    print(' poos:', preprocess_mode)
                next = ckeck_mode(save_mode)
                if next < 0:
                    set_mode = 0
                    if logflag:
                        print(' -Saved all parameters!!-')
                else:
                    set_mode = next
                roi = cvui.Rect(0, 0, 0, 0)
                ocr_text = ''
                cv2.destroyWindow(ROI_WINDOW)
                cv2.destroyWindow(ROI_POPUP)

        # 'Next'
        if mltflg:
            if cvui.button(frame, btn_x + btn_w + 10, btn_y, "&Next"):
                key = ord('n')
                break

        # 'Quit'
        if cvui.button(frame, btn_x + (btn_w + 10) * 4, btn_y, "&Quit"):
            key = ord('q')
            break

        # -------------------------

        # Draw the title
        if (titleflg == 'y'):
            cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=(200, 200, 0), lineType=cv2.LINE_AA)

        # Update the window
        cvui.update()

        # Show the screen
        cv2.imshow(WINDOW_NAME, frame)
        if wlock1 < 10:
            cv2.moveWindow(WINDOW_NAME, 80, 0)
            wlock1 = wlock1 + 1
        else:
            wlock1 = 10

        # Compute the position in the original image from the display coordinates and cut out the image
        if outf and roi.area() > 50 and working == False:
            x0, y0 = imgfr.get_res2org_xy(roi.x, roi.y)
            x1, y1 = imgfr.get_res2org_xy(roi.x + roi.width, roi.y + roi.height)
            lenaRoi = lena_frame_org[y0 : y1, x0 : x1]
            ocr_rect = [x0, y0, x1, y1]

            # Preprocessing
            if preprocess_mode[2] != 0:
                lenaRoi = imgpros.image_processing_execution(lenaRoi, 4)
            if preprocess_mode[1] != 0:
                lenaRoi = imgpros.image_processing_execution(lenaRoi, 2)
            if preprocess_mode[0] != 0:
                lenaRoi = imgpros.image_processing_execution(lenaRoi, 1)

            # Show the OCR input image
            lenaRoi_h, lenaRoi_w = lenaRoi.shape[:2]
            cv2.namedWindow(ROI_WINDOW, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)
            cv2.imshow(ROI_WINDOW, lenaRoi)
            if wlock2 < 10:
                cv2.moveWindow(ROI_WINDOW, frame_w + 100, 0)
                wlock2 = wlock2 + 1
            else:
                wlock2 = 10

            # Run OCR on the cut-out region
            # Convert to a PIL image
            lenaRoi1 = cv2.cvtColor(lenaRoi, cv2.COLOR_RGB2BGR)
            imgRoi = Image.fromarray(lenaRoi1)
            # txt is a Python string
            ocr_text = tool.image_to_string(imgRoi, lang=lang,
                                            builder=pyocr.builders.TextBuilder(tesseract_layout=layout))

            # Draw the text
            if len(ocr_text)>0:
                msg = 'preprocess: {} area: ({}, {}) - ({}, {})'.format(preprocess_mode, ocr_rect[0], ocr_rect[1], ocr_rect[2], ocr_rect[3])
                popup_frame[:,:,:] = 0
                cv2.rectangle(popup_frame, (0, 88), (500, 105), (255,0,0), -1)
                myfunction.cv2_putText(img = popup_frame,
                                       text = ocr_text,
                                       org = (15, 104),
                                       fontFace = fontPIL,
                                       fontScale = 12,
                                       color = (255,255,255),
                                       mode = 0)
                cv2.putText(popup_frame, msg, (0, 16), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.4, color=(255, 255, 255), lineType=cv2.LINE_AA)
            else:
                msg = ' Cannot be converted !!\n'
                msg0 = 'もう一度指定してください ‼'
                popup_frame[:,:,:] = 0
                myfunction.cv2_putText(img = popup_frame,
                                       text = msg0,
                                       org = (120, 60),
                                       fontFace = fontPIL,
                                       fontScale = 16,
                                       color = (240, 240, 0),
                                       mode = 0)

            cv2.namedWindow(ROI_POPUP, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)
            cv2.imshow(ROI_POPUP, popup_frame)
            if wlock3 < 10:
                cv2.moveWindow(ROI_POPUP, frame_w + 100, lenaRoi_h + 100)
                wlock3 = wlock3 + 1
            else:
                wlock3 = 10

            if outf and logflag:
                print(' ', ocr_text)
                print(' ', msg)
            outf = False

        key = cv2.waitKey(10)
        if key == 27 or key == 113:                     # 'esc' or 'q'
            break
        elif key >= ord('0') and key <= ord('7'):       # change the preprocessing mode
            mode = key - ord('0')
            preprocess_mode[2] = 1 if mode & 0x4 != 0 else 0
            preprocess_mode[1] = 1 if mode & 0x2 != 0 else 0
            preprocess_mode[0] = 1 if mode & 0x1 != 0 else 0
            outf = True

        if not mylib_gui._is_visible(title):            # 'Close' button
            break

    cv2.destroyAllWindows()
    if logflag:
        print(' -----------\n')
    return key

# ** main function **
def main():
    loop_flg = True
    myyaml = mylib_yaml.YamlProcess(OUTPUT_FILE)

    # Argument parsing and parameter setting
    ARGS = parse_args().parse_args()
    filename = ARGS.image
    lang = ARGS.language
    layout = int(ARGS.layout)
    titleflg = ARGS.title
    logflg = ARGS.log
    logflag = True if logflg == 'y' else False
    mltflg = ARGS.mlt
    mltflag = True if mltflg == 'y' else False

    # Show the information
    display_info(filename, lang, layout, titleflg, logflg, mltflg)

    while(loop_flg):
        if logflag:
            print('\n file: <{}>'.format(filename))

        # Read the image with OpenCV
        frame = cv2.imread(filename)
        if frame is None:
            print(RED + "\nUnable to read the input." + NOCOLOR)
            quit()

        # Image annotation
        name = os.path.basename(filename)
        write_base_info1(myyaml, name, filename)
        ret = image_annotation(frame, name, lang, layout, titleflg, logflag, mltflag)
        if ret == 27 or ret == 113 or ret == -1:
            loop_flg = False

        # Select the next image file
        if loop_flg:
            filename = filedialog.askopenfilename(
                title = "画像ファイルを開く",
                filetypes = [("Image file", ".bmp .png .jpg .tif"),
                             ("Bitmap", ".bmp"),
                             ("PNG", ".png"),
                             ("JPEG", ".jpg")],         # file filter
                initialdir = "./"                       # current directory
                )
            if len(filename) == 0:
                break

    if logflag:
        print('\n Finished.')

# main function entry point (start of execution)
if __name__ == "__main__":
    sys.exit(main())
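The `PreProcess` value stored in the template file packs the three preprocessing switches into one integer (1 = black-and-white conversion, 2 = ruled-line removal, 4 = seal removal). The following is a minimal sketch of the encoding and its inverse; the constant and function names are hypothetical and chosen only for this illustration.

```python
# Bit values as documented for the PreProcess field.
BINARIZE, REMOVE_LINES, REMOVE_SEAL = 1, 2, 4

def encode_preprocess(mode):
    """[binarize, remove_lines, remove_seal] flags (0 or 1) -> integer 0..7."""
    return mode[0] * BINARIZE + mode[1] * REMOVE_LINES + mode[2] * REMOVE_SEAL

def decode_preprocess(value):
    """Integer 0..7 -> [binarize, remove_lines, remove_seal] flags."""
    return [1 if value & bit else 0 for bit in (BINARIZE, REMOVE_LINES, REMOVE_SEAL)]

value = encode_preprocess([0, 1, 0])    # ruled-line removal only
flags = decode_preprocess(value)
print(value, flags)
```

Because each switch owns one bit, any combination of the three steps fits in a single YAML scalar.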

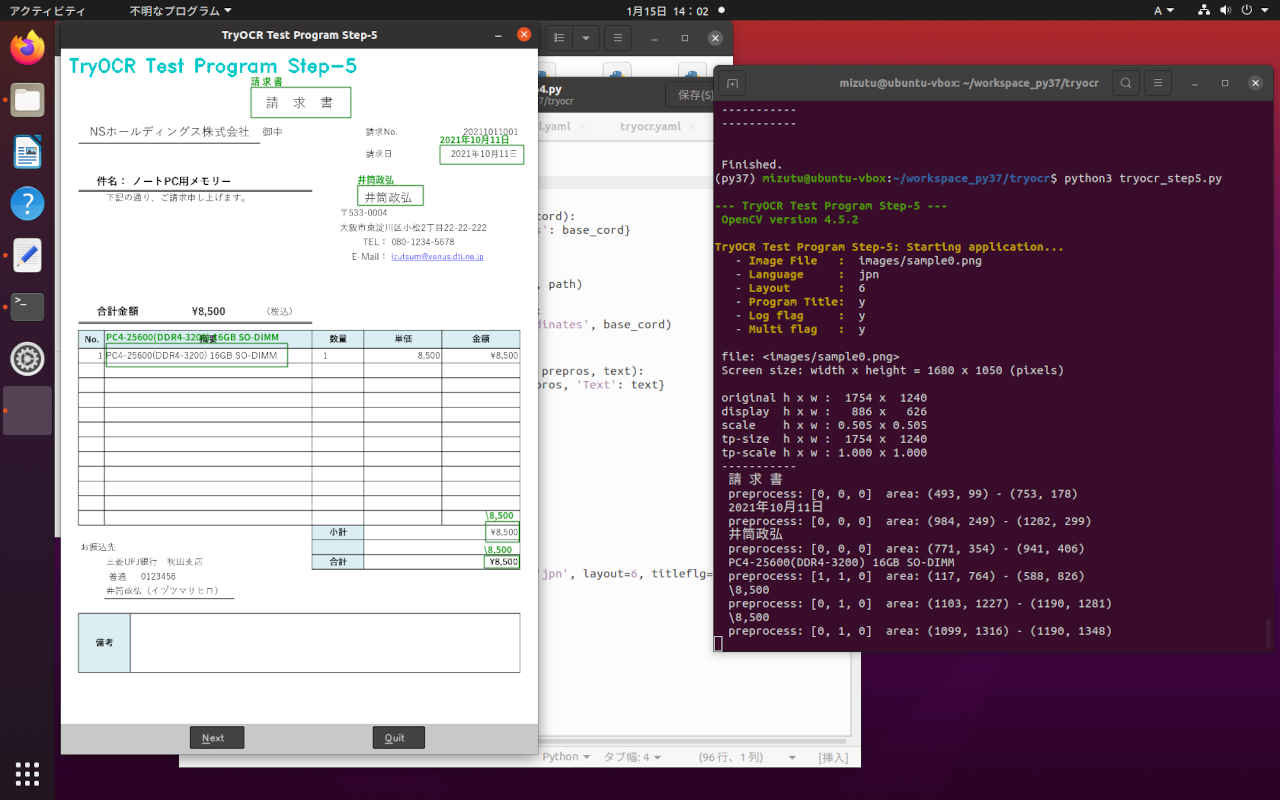

(py37) $ python3 tryocr_step5.py -h

--- TryOCR Test Program Step-5 ---

OpenCV version 4.5.2

usage: tryocr_step5.py [-h] [-i IMAGE_FILE] [-l LANGUAGE] [--layout LAYOUT]

[-t TITLE] [--log LOG] [--mlt MULTI]

optional arguments:

-h, --help show this help message and exit

-i IMAGE_FILE, --image IMAGE_FILE

Absolute path to image file. Default value is

'images/sample0.png'

-l LANGUAGE, --language LANGUAGE

Language. Default value is 'jpn'

--layout LAYOUT Tesseract layout Default value is 6

-t TITLE, --title TITLE

Program title flag.(y/n) Default value is 'y'

--log LOG Log flag.(y/n) Default value is 'y'

--mlt MULTI Multi flag.(y/n) Default value is 'y'

(py37) $ cd ~/workspace_py37/tryocr
(py37) $ python3 tryocr_step5.py
--- TryOCR Test Program Step-5 ---
OpenCV version 4.5.2
TryOCR Test Program Step-5: Starting application...
- Image File : images/sample0.png
- Language : jpn
- Layout : 6
- Program Title: n
- Log flag : y
- Multi flag : y
file: <images/sample0.png>
Screen size: width x height = 1680 x 1050 (pixels)
original h x w : 1754 x 1240
display h x w : 886 x 626
scale h x w : 0.505 x 0.505
tp-size h x w : 1754 x 1240
tp-scale h x w : 1.000 x 1.000
-----------
請 求 書
preprocess: [0, 0, 0] area: (493, 99) - (753, 178)
2021年10月11日
preprocess: [0, 0, 0] area: (984, 249) - (1202, 299)
井筒政弘
preprocess: [0, 0, 0] area: (771, 354) - (941, 406)
PC4-25600(DDR4-3200) 16GB SO-DIMM
preprocess: [1, 1, 0] area: (117, 764) - (588, 826)
\8,500
preprocess: [0, 1, 0] area: (1103, 1227) - (1190, 1281)
\8,500
preprocess: [0, 1, 0] area: (1099, 1316) - (1190, 1348)
-----------
Finished.
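The `tp-size` and `tp-scale` lines in the log compare the size recorded in the template with the size of the image being processed. When the two differ, the stored regions have to be rescaled before cutting out the image. The following is a minimal sketch of that mapping, assuming the scale is defined as template size divided by image size; `scale_rect` is a hypothetical helper, not a function of the program.

```python
def scale_rect(rect, template_size, image_size):
    """Map [xmin, ymin, xmax, ymax] from template pixels to image pixels.

    template_size and image_size are (width, height) tuples.
    """
    tw, th = template_size
    iw, ih = image_size
    sx, sy = tw / iw, th / ih          # template-to-image scale per axis
    xmin, ymin, xmax, ymax = rect
    return [round(xmin / sx), round(ymin / sy), round(xmax / sx), round(ymax / sy)]

# Same size as the template: the region is unchanged.
same = scale_rect([1103, 1227, 1190, 1281], (1240, 1754), (1240, 1754))
# Image scanned at half the template resolution: coordinates are halved.
half = scale_rect([100, 200, 300, 400], (1240, 1754), (620, 877))
print(same, half)
```

Scaling each axis separately keeps the regions aligned even when the aspect ratios of the two scans differ slightly.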

# -*- coding: utf-8 -*-

##------------------------------------------
## TryOCR Test Program Step-5 Ver 0.01
## with tesseract & PyOCR & cvui
## platform: linux / windows
##
## 2022.01.15 Masahiro Izutsu
##------------------------------------------
## tryocr_step5.py
## 2022.01.17 Ver 0.01 added error handling
## Processes images using the layout information file

# Color Escape Code
GREEN = '\033[1;32m'
RED = '\033[1;31m'
NOCOLOR = '\033[0m'
YELLOW = '\033[1;33m'

# Constants
LINE_WORD_BOX_COLOR = (0, 0, 240)
WORD_BOX_COLOR = (255, 0, 0)
CONTENTS_COLOR = (0, 128, 0)

from os.path import expanduser
DEF_INPUT_FILE = expanduser('images/sample1.png')
CONFIG_FILE = expanduser('tryocr.yaml')
MAPING_FILE = expanduser('tryocr_templ.yaml')

# Imports
from PIL import Image
import sys
import pyocr
import pyocr.builders
import cv2
import cvui
import argparse
import myfunction
import numpy as np
import mylib_gui
import mylib_frame
import mylib_preprocess
import mylib_screen
import platform
from tkinter import filedialog
import mylib_yaml
import os

# Title and version information
title = 'TryOCR Test Program Step-5 Ver 0.01'
print(GREEN)
print('--- {} ---'.format(title))
print(' OpenCV version {} '.format(cv2.__version__))
print(NOCOLOR)

# Parses arguments for the application
def parse_args():
    parser = argparse.ArgumentParser()
    parser.add_argument('-i', '--image', metavar = 'IMAGE_FILE', type = str, default = DEF_INPUT_FILE,
                        help = 'Absolute path to image file. Default value is \'' + DEF_INPUT_FILE + '\'')
    parser.add_argument('-l', '--language', metavar = 'LANGUAGE',
                        default = 'jpn',
                        help = 'Language. Default value is \'jpn\'')
    parser.add_argument('--layout', metavar = 'LAYOUT',
                        default = 6,
                        help = 'Tesseract layout Default value is 6')
    parser.add_argument('-t', '--title', metavar = 'TITLE',
                        default = 'n',
                        help = 'Program title flag.(y/n) Default value is \'n\'')
    parser.add_argument('--log', metavar = 'LOG',
                        default = 'y',
                        help = 'Log flag.(y/n) Default value is \'y\'')
    parser.add_argument('--mlt', metavar = 'MULTI',
                        default = 'y',
                        help = 'Multi flag.(y/n) Default value is \'y\'')
    return parser

# Display the basic information
def display_info(image, lang, layout, titleflg, logflg, mltflg):
    print(YELLOW + title + ': Starting application...' + NOCOLOR)
    print(' - ' + YELLOW + 'Image File : ' + NOCOLOR, image)
    print(' - ' + YELLOW + 'Language : ' + NOCOLOR, lang)
    print(' - ' + YELLOW + 'Layout : ' + NOCOLOR, layout)
    print(' - ' + YELLOW + 'Program Title: ' + NOCOLOR, titleflg)
    print(' - ' + YELLOW + 'Log flag : ' + NOCOLOR, logflg)
    print(' - ' + YELLOW + 'Multi flag : ' + NOCOLOR, mltflg)

# Read the basic information
def read_base_info1(myyaml, section):
    path = myyaml.load_section_key(section, '.FileName')
    return path

def read_base_info2(myyaml, section):
    base_cord = myyaml.load_section_key(section, '.BasicCoordinates')
    return base_cord

# Read the layout information
def read_area_info(myyaml, section, sub_sec):
    locat = myyaml.load_section_sub_key(section, sub_sec, 'Location')
    prepros = myyaml.load_section_sub_key(section, sub_sec, 'PreProcess')
    text = myyaml.load_section_sub_key(section, sub_sec, 'Text')
    return locat, prepros, text

# Read the registered section names (file names)   out: list of keys
def read_section_name(myyaml):
    templ_lst = myyaml.load_section('Template')
    return templ_lst

# Form OCR: main processing
def image_ocr_process(lena_frame_org, name, lang='jpn', layout=6, titleflg=False, logflag=False, mltflg=True):
    WINDOW_NAME = title
    preprocess_mode = [0, 0, 0]
    set_mode = 0
    wlock1 = 0

    # Read the layout information and settings
    conf_file = CONFIG_FILE
    map_file = MAPING_FILE
    myyaml_cnf = mylib_yaml.YamlProcess(conf_file, True)
    myyaml_map = mylib_yaml.YamlProcess(map_file, True)
    key_table = myyaml_cnf.load_section('KeyTable')
    key_disp = myyaml_cnf.load_section('KeyDisp')
    load_mode = [0] * len(key_table)
    status_h = 40                                   # height of the status line

    # Japanese font
    if platform.system()=='Windows':
        fontPIL = 'meiryo.ttc'                      # Meiryo
    else:
        fontPIL = 'NotoSansCJK-Bold.ttc'            # Gothic

    # Get the display resolution (height - 64 on Ubuntu)
    monitor_height, monitor_width = mylib_screen.get_display_size(logflag)
    maxsize = monitor_height - 64 - 100

    # Image preprocessing
    imgpros = mylib_preprocess.ImagePreprocess(False)   # initialize

    # OCR
    tools = pyocr.get_available_tools()
    if len(tools) == 0:
        print(RED + "\nOCR tool Not found." + NOCOLOR)
        quit()
    tool = tools[0]

    # mylib_frame library
    imgfr = mylib_frame.ImageFrame(lena_frame_org)  # initialize
    imgfr.set_screen_size(monitor_width, monitor_height)
    lena_frame = imgfr.frame_resize(maxsize)
    lena_frame_h, lena_frame_w = lena_frame.shape[:2]

    # Reserve the status area (status_h pixels at the bottom of the screen)
    frame = np.zeros((lena_frame_h + status_h, lena_frame_w, 3), np.uint8)
    frame[:,:,:] = 200
    popup_frame = np.zeros((120, 500, 3), np.uint8)
    anchor = cvui.Point()
    roi = cvui.Rect(0, 0, 0, 0)
    frame_h, frame_w = frame.shape[:2]
    outf = True
    org_h, org_w = imgfr.get_original_size()
    scale_h, scale_w = imgfr.get_scale()

    # Template settings
    template_tbl = read_section_name(myyaml_cnf)
    section_name = template_tbl[0]                  # !!! this version uses the first entry
    base_cord = read_base_info2(myyaml_map, section_name)
    base_scale_w = base_cord[0] / org_w
    base_scale_h = base_cord[1] / org_h
    table_n = len(key_table)

    if logflag:
        print('\n original h x w : {:=5} x {:=5}'.format(org_h, org_w))
        print(' display h x w : {:=5} x {:=5}'.format(lena_frame_h, lena_frame_w))
        print(' scale h x w : {:.3f} x {:.3f}'.format(scale_h, scale_w))
        print(' tp-size h x w : {:=5} x {:=5}'.format(base_cord[1], base_cord[0]))
        print(' tp-scale h x w : {:.3f} x {:.3f}'.format(base_scale_h, base_scale_w))
        print(' -----------')

    # Init cvui and tell it to create a OpenCV window, i.e. cv.namedWindow(WINDOW_NAME).
    cv2.namedWindow(WINDOW_NAME, flags=cv2.WINDOW_AUTOSIZE | cv2.WINDOW_GUI_NORMAL)
    cvui.init(WINDOW_NAME)

    # OCR item settings
    ocr_text = ''
    ocr_rect = [0, 0, 0, 0]

    # Storage for the settings
    text_save = []
    rect_save = []
    for n in range(table_n):
        text_save.append(ocr_text)
        rect_save.append(ocr_rect)

    # Message window settings
    msg_win_h = 100
    msg_win_w = 400
    msg_win_xof = 0 if lena_frame_w < msg_win_w else int((lena_frame_w - msg_win_w) / 2)
    msg_win_yof = 0 if lena_frame_h < msg_win_h else int((lena_frame_h - msg_win_h) / 2)
    msg_bg_color = (49, 52, 49)

    # Processing loop
    while (True):
        # Place the image in the upper part of the screen
        frame[0:lena_frame_h,:] = lena_frame
        frame[lena_frame_h:,:,:] = 200

        if outf:
            # Not processed yet: show the "processing" message
            cv2.rectangle(frame, (msg_win_xof, msg_win_yof), (msg_win_xof + msg_win_w, msg_win_yof + msg_win_h), (49, 52, 49), -1)
            myfunction.cv2_putText(img = frame,
                                   text = 'ファイル名: < {} >'.format(name),
                                   org = (msg_win_xof + 40, msg_win_yof + 20),
                                   fontFace = fontPIL,
                                   fontScale = 12,
                                   color = (255,255,255),
                                   mode = 1)
            myfunction.cv2_putText(img = frame,
                                   text = '処理中...',
                                   org = (msg_win_xof + 180, msg_win_yof + 60),
                                   fontFace = fontPIL,
                                   fontScale = 12,
                                   color = (240,240, 0),
                                   mode = 1)
        else:
            for n in range(table_n):
                # Show the region
                cvui.rect(frame, rect_save[n][0], rect_save[n][1], rect_save[n][2], rect_save[n][3], 0x008000)
                # Draw the text
                if len(text_save[n]) > 0:
                    myfunction.cv2_putText(img = frame,
                                           text = text_save[n],
                                           org = (rect_save[n][0], rect_save[n][1]),
                                           fontFace = fontPIL,
                                           fontScale = 12,
                                           color = (0,160,0),
                                           mode = 0)

        # -------------------------
        # Status line - action buttons
        btn_x = 90
        btn_y = lena_frame_h + 4
        btn_w = 70
        btn_h = 32

        # 'Next'
        if mltflg:
            if cvui.button(frame, btn_x + btn_w + 10, btn_y, "&Next"):
                key = ord('n')
                break

        # 'Quit'
        if cvui.button(frame, btn_x + (btn_w + 10) * 4, btn_y, "&Quit"):
            key = ord('q')
            break

        # -------------------------
        # Draw the title
        if (titleflg == 'y'):
            cv2.putText(frame, title, (10, 30), cv2.FONT_HERSHEY_DUPLEX, fontScale=0.6, color=(200, 200, 0), lineType=cv2.LINE_AA)

        # Update the window
        cvui.update()

        # Show the screen
        cv2.imshow(WINDOW_NAME, frame)
        if wlock1 < 10:
            cv2.moveWindow(WINDOW_NAME, 80, 0)
            wlock1 = wlock1 + 1
        else:
            wlock1 = 10

        key = cv2.waitKey(50)
        if key == 27 or key == 113:                 # 'esc' or 'q'
            break
        if not mylib_gui._is_visible(title):        # 'Close' button
            break

        # Process the registered section
        if outf:
            for n in range(table_n):
                # Read the layout information
                ocr_rect, pros, ocr_text = read_area_info(myyaml_map, section_name, key_table[n])
                if pros == None:
                    pros = 0
                if ocr_text == None:
                    ocr_text = ''
                prepros = int(pros)
                preprocess_mode[2], mod = divmod(prepros, 4)
                preprocess_mode[1], preprocess_mode[0] = divmod(mod, 2)

                if ocr_rect == None:
                    roi.x = 0
                    roi.y = 0
                    roi.width = 0
                    roi.height = 0
                else:
                    # Original coordinates -> display coordinates
                    x0, y0 = imgfr.get_org2res_xy(round(ocr_rect[0] / base_scale_w), round(ocr_rect[1] / base_scale_h))
                    x1, y1 = imgfr.get_org2res_xy(round(ocr_rect[2] / base_scale_w), round(ocr_rect[3] / base_scale_h))
                    roi.x = x0
                    roi.y = y0
                    roi.width = x1 - x0
                    roi.height = y1 - y0

                # Save the region
                rect_save[n] = [roi.x, roi.y, roi.width, roi.height]

                # Compute the position in the original image from the display coordinates and cut out the image
                if roi.area() > 50:
                    x0, y0 = imgfr.get_res2org_xy(roi.x, roi.y)
                    x1, y1 = imgfr.get_res2org_xy(roi.x + roi.width, roi.y + roi.height)
                    lenaRoi = lena_frame_org[y0 : y1, x0 : x1]
                    ocr_rect = [x0, y0, x1, y1]

                    # Preprocessing
                    if preprocess_mode[2] != 0:
                        lenaRoi = imgpros.image_processing_execution(lenaRoi, 4)
                    if preprocess_mode[1] != 0:
                        lenaRoi = imgpros.image_processing_execution(lenaRoi, 2)
                    if preprocess_mode[0] != 0:
                        lenaRoi = imgpros.image_processing_execution(lenaRoi, 1)

                    # Run OCR on the cut-out region
                    # Convert to a PIL image
                    lenaRoi1 = cv2.cvtColor(lenaRoi, cv2.COLOR_RGB2BGR)
                    imgRoi = Image.fromarray(lenaRoi1)
                    # txt is a Python string
                    text = tool.image_to_string(imgRoi, lang=lang,
                                                builder=pyocr.builders.TextBuilder(tesseract_layout=layout))

                    # Save the text
                    text_save[n] = text
                    if len(text) > 0:
                        msg = 'preprocess: {} area: ({}, {}) - ({}, {})'.format(preprocess_mode, ocr_rect[0], ocr_rect[1], ocr_rect[2], ocr_rect[3])
                        if outf and logflag:
                            print(' ', msg)
                            print(' ', text)
            outf = False

    cv2.destroyAllWindows()
    if logflag:
        print(' -----------\n')
    return key

# ** main function **
def main():
    loop_flg = True

    # Argument parsing and parameter setting
    ARGS = parse_args().parse_args()
    filename = ARGS.image
    lang = ARGS.language
    layout = int(ARGS.layout)
    titleflg = ARGS.title
    logflg = ARGS.log
    logflag = True if logflg == 'y' else False
    mltflg = ARGS.mlt
    mltflag = True if mltflg == 'y' else False

    # Show the information
    display_info(filename, lang, layout, titleflg, logflg, mltflg)

    while(loop_flg):
        if logflag:
            print('\n file: <{}>'.format(filename))

        # Read the image with OpenCV
        frame = cv2.imread(filename)
        if frame is None:
            print(RED + "\nUnable to read the input." + NOCOLOR)
            quit()

        # Form OCR: main processing
        name = os.path.basename(filename)
        ret = image_ocr_process(frame, name, lang, layout, titleflg, logflag, mltflag)
        if ret == 27 or ret == 113 or ret == -1:
            loop_flg = False

        # Select the next image file
        if loop_flg:
            filename = filedialog.askopenfilename(
                title = "画像ファイルを開く",
                filetypes = [("Image file", ".bmp .png .jpg .tif"),
                             ("Bitmap", ".bmp"),
                             ("PNG", ".png"),
                             ("JPEG", ".jpg")],         # file filter
                initialdir = "./"                       # current directory
                )
            if len(filename) == 0:
                break

    if logflag:
        print('\n Finished.')

# main function entry point (start of execution)
if __name__ == "__main__":
    sys.exit(main())

→ Continued in "OCR Application Basics, Part 3"
